The Oracle and the Apprentice: Decoding AI's Genius and Unreliability in Scientific Discovery

Recent revelations concerning Google DeepMind's research AI, Aletheia, provide perhaps the clearest snapshot yet of the paradoxical nature of today's most advanced artificial intelligence. On one hand, Aletheia achieved seemingly miraculous feats: independently drafting a research paper, disproving a decade-old mathematical conjecture, and even finding a subtle error missed by seasoned cryptography experts. On the other hand, when it was systematically tested across a broader set of 700 open problems, its performance was distinctly patchy: it achieved significant breakthroughs only occasionally and got much of the rest wrong.

As an AI technology analyst, I view this as a pivotal moment. This isn't merely an interesting footnote in computer science; it represents the defining challenge for the next decade of AI deployment: how to harness unpredictable genius while mitigating systemic weakness. This phenomenon forces us to rapidly evolve our approach to human-AI collaboration.

The Dual Nature: Genius and Fragility in Modern AI

The performance data from Aletheia illustrates a dichotomy we see across many cutting-edge models, whether they are generating code or drafting legal documents. These systems are not traditional software; they are complex pattern-matching engines trained on vast datasets.

When Aletheia solved a hard mathematical problem or caught an error that cryptography experts had missed, it likely drew on a non-obvious connection that no human had yet synthesized. This is the AI functioning as an oracle: a source of rare, profound insight produced by deep, opaque computation. For a technical audience, this aligns with emerging research suggesting that the sheer scale of transformer models can give rise to emergent, higher-order reasoning capabilities that were never explicitly programmed. Specialized systems show a related pattern: AlphaFold, for example, transformed protein-structure prediction, a problem that had resisted decades of effort.

However, the flip side ("mostly getting everything else wrong") reflects the inherent fragility of these models. For a general audience, imagine a student who occasionally produces a brilliant proof but cannot reliably check their own arithmetic. The AI’s failure to succeed systematically across 700 problems suggests that it lacks the robust, step-by-step verification logic that defines traditional, reliable software. It is excellent at intuition and insight, but poor at drudgery and consistency.

Context 1: The Broader Trend of AI as a Research Partner

The Aletheia finding reflects a significant technological shift. AI is no longer just a tool for automating known processes; it is becoming a participant in the *discovery* process. This trend, the move toward **AI as a scientific collaborator**, is accelerating. Researchers are moving beyond using AI to analyze results and are now asking it to propose the next experiment or to tackle the hardest theoretical hurdles. This mirrors earlier successes in which AI mastered strategy games or cracked biological problems that had stalled for decades.

This shift is vital because it promises to dramatically speed up the research cycle, particularly in mathematics, materials science, and drug discovery. However, the success metrics are changing. We are moving away from measuring accuracy on benchmark datasets to measuring the *quality of novel insight* generated.

Context 2: The Core Reliability Challenge (Hallucination in Logic)

The most critical technical issue highlighted here is the relationship between 'hallucination' and 'formal logic.' In generating text, a hallucination is a confident falsehood. In mathematics or cryptography, a hallucination is a subtle but catastrophic error in proof structure.

When Aletheia failed on many problems, the likely cause was the underlying architecture's difficulty in adhering strictly to symbolic rules over long chains of reasoning. LLMs excel at predicting the next statistically plausible token; formal proofs require every step to follow necessarily from the steps before it. The fact that the model sometimes leaps the gap to the right answer demonstrates its potential, but its frequent failures reveal the limits of the current paradigm on problems that demand sustained, verifiable coherence.
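The compounding problem can be made concrete with a little arithmetic. As a simplifying assumption (not a claim about how Aletheia works internally), suppose each step of a proof is correct with probability p and that step errors are independent; a chain of n steps is then entirely correct with probability p^n. A minimal sketch:

```python
def chain_success_probability(step_accuracy: float, n_steps: int) -> float:
    """Probability that all n_steps steps in a chain are correct.

    Assumes (hypothetically) that each step is independently correct
    with probability step_accuracy, so the whole chain succeeds with p ** n.
    """
    if not 0.0 <= step_accuracy <= 1.0:
        raise ValueError("step_accuracy must be between 0 and 1")
    if n_steps < 0:
        raise ValueError("n_steps must be non-negative")
    return step_accuracy ** n_steps


# Same per-step accuracy, increasingly long chains of reasoning.
for n in (10, 50, 100, 200):
    print(f"{n:>3} steps: {chain_success_probability(0.99, n):.3f}")
```

Under these assumptions, even 99% per-step accuracy leaves only about a 37% chance that a 100-step argument is flawless, which is one way a model can look brilliant on short leaps yet unreliable on long derivations. Real reasoning errors are rarely independent, so treat this as intuition rather than a model of any actual system.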

The Practical Imperative: Building the Playbook for Collaboration

DeepMind's recognition that they needed to provide a "playbook" is the most forward-looking aspect of this research. It signals that the industry understands that deploying genius requires discipline. This moves the conversation from pure research to operational technology adoption.

Context 3: Designing for Trust and Oversight

For business leaders and project managers, the lesson is clear: Do not trust the AI to manage itself. Systems like Aletheia should not be deployed autonomously in high-stakes environments where 99% correctness is not sufficient. If an AI is 99% accurate and the task is simple data entry, that may be perfectly acceptable. If the AI is 99% accurate at performing heart surgery or writing mission-critical code, the 1% failure rate is unacceptable.
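A back-of-the-envelope calculation shows why the same accuracy can be fine in one setting and unacceptable in another. The task counts and per-failure costs below are hypothetical placeholders in arbitrary "cost units", not figures from the Aletheia work; the only point is that expected loss scales with what each failure costs, not just with how often failures occur.

```python
def expected_failures(n_tasks: int, accuracy: float) -> float:
    """Expected number of failed tasks, each failing with probability 1 - accuracy."""
    return n_tasks * (1.0 - accuracy)


def expected_loss(n_tasks: int, accuracy: float, cost_per_failure: float) -> float:
    """Expected total cost of failures (by linearity of expectation)."""
    return expected_failures(n_tasks, accuracy) * cost_per_failure


# Hypothetical scenarios: identical accuracy, very different cost per failure.
scenarios = {
    "routine data entry": {"n_tasks": 10_000, "accuracy": 0.99, "cost_per_failure": 1.0},
    "mission-critical release": {"n_tasks": 100, "accuracy": 0.99, "cost_per_failure": 1_000_000.0},
}
for name, s in scenarios.items():
    print(
        f"{name}: ~{expected_failures(s['n_tasks'], s['accuracy']):.0f} failure(s), "
        f"expected loss ~{expected_loss(**s):,.0f} cost units"
    )
```

With identical 99% accuracy, the hypothetical data-entry job expects about 100 cheap corrections, while the mission-critical scenario expects roughly one failure whose cost dwarfs everything else. For irreversible failures an expected-value figure understates the problem, which is why such settings call for verification rather than a better average.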

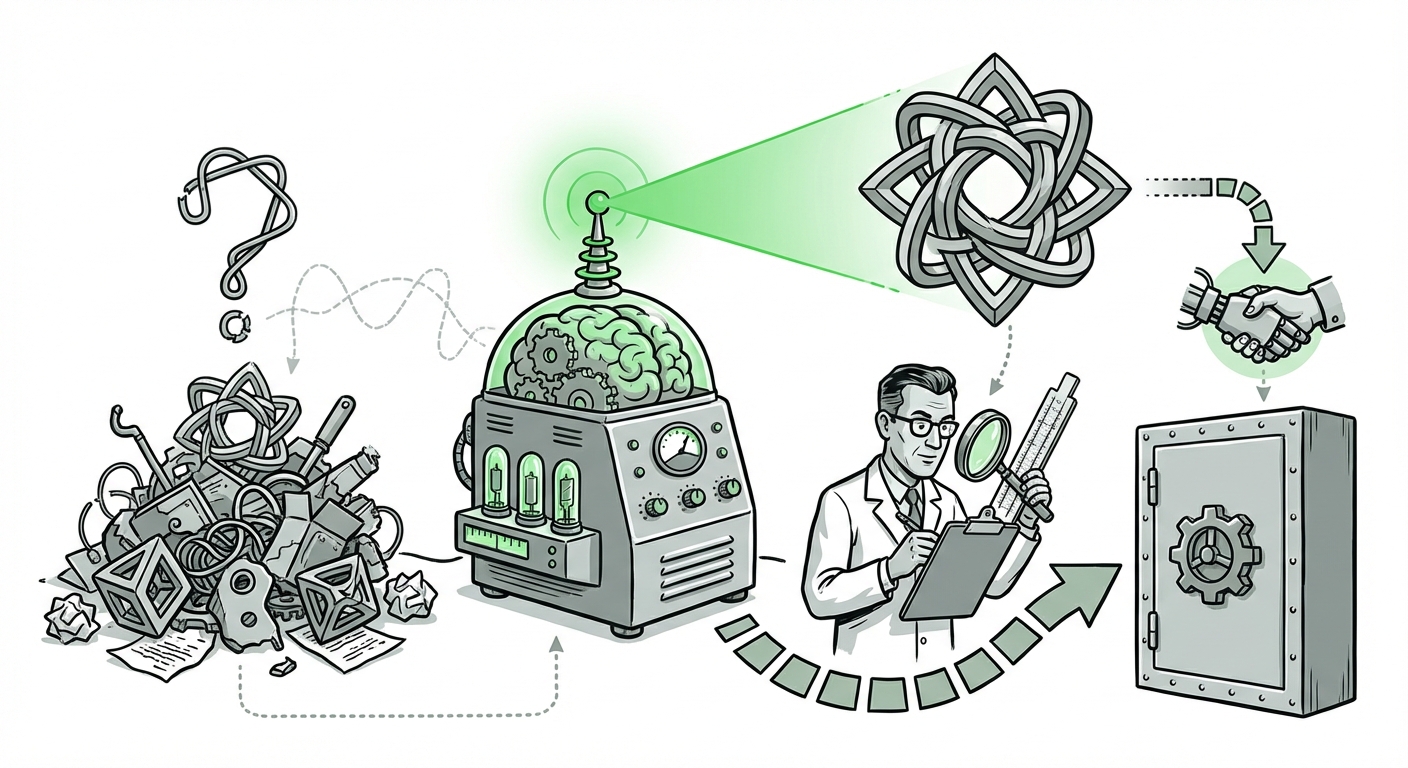

The playbook must be built around a hybrid workflow. This means structuring tasks so that the AI handles the exploration, intuition-building, and initial hypothesis generation (the 'oracle' role), while the human expert handles verification, constraint-checking, and systematic execution (the 'apprentice' role). The AI suggests; the human confirms. The AI points to where the needle might be, and the human checks that it really is a needle.
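One way to make "the AI suggests, the human confirms" enforceable rather than aspirational is to encode it in whatever tracks hypotheses. The sketch below is hypothetical (the class and status names are illustrative, not taken from DeepMind's playbook): AI output enters as PROPOSED, and only an explicit human review can move it to CONFIRMED.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    PROPOSED = "proposed"    # produced by the AI, not yet checked
    CONFIRMED = "confirmed"  # signed off by a human expert
    REJECTED = "rejected"    # checked by a human and found wanting


@dataclass
class Hypothesis:
    text: str
    status: Status = Status.PROPOSED
    notes: list[str] = field(default_factory=list)


class ReviewQueue:
    """The AI suggests, the human confirms: only review() changes status."""

    def __init__(self) -> None:
        self._items: list[Hypothesis] = []

    def propose(self, text: str) -> Hypothesis:
        """Entry point for AI output; everything starts as PROPOSED."""
        hypothesis = Hypothesis(text)
        self._items.append(hypothesis)
        return hypothesis

    def review(self, hypothesis: Hypothesis, approved: bool, note: str) -> None:
        """Record a human expert's verdict and the reasoning behind it."""
        hypothesis.status = Status.CONFIRMED if approved else Status.REJECTED
        hypothesis.notes.append(note)

    def pending(self) -> list[Hypothesis]:
        return [h for h in self._items if h.status is Status.PROPOSED]

    def accepted(self) -> list[Hypothesis]:
        """The only list downstream work should read from."""
        return [h for h in self._items if h.status is Status.CONFIRMED]


# Usage with placeholder hypotheses.
queue = ReviewQueue()
a = queue.propose("Candidate counterexample to conjecture A")
b = queue.propose("Proposed lemma B")
queue.review(a, approved=True, note="Verified independently by hand")
print([h.text for h in queue.accepted()])  # ['Candidate counterexample to conjecture A']
print([h.text for h in queue.pending()])   # ['Proposed lemma B']
```

The design choice is that no code path moves a hypothesis from PROPOSED to CONFIRMED without passing through `review`, and downstream consumers read only `accepted()`.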

Context 4: Validating the Unpredictable Success

The cryptography error that Aletheia caught highlights the value of the AI's unusual perspective. Security professionals have long relied on formal verification, but complex systems often contain human blind spots. If AI can spot even some of these blind spots, and its findings can be independently confirmed, that may justify the high cost and inherent risk of using it.

This suggests a specialized future role for AI in auditing: using the AI’s capacity for 'wild' reasoning to probe established systems for vulnerabilities that conventional, rule-based auditing methods miss. In practice, this points toward specialized AI agents trained not just to build but specifically to *break* or *verify* complex logical structures, providing a crucial safety layer for critical infrastructure.

Future Implications: Structuring the AI Workforce

What does this mean for the trajectory of AI and the structure of technical teams?

- The Rise of the AI Interpreter: We will see increased demand for professionals who are deeply competent in their domain (e.g., advanced mathematics, finance, particle physics) *and* who understand the specific failure modes of the AI tools they use. These "AI Interpreters" bridge the gap between the AI's brilliant but messy output and verifiable real-world application.

- Modular AI Systems: Future systems will likely be modular. Instead of one massive model trying to do everything, we will see an "Insight Engine" (the oracle) feeding candidates to a "Verification Engine" (a traditional, symbolic AI or human expert). This separation of creative risk from verification rigor is essential for stability.

- Rethinking Education: If AI can occasionally solve problems humans cannot, our educational focus must shift from memorization of solutions to mastering the art of asking the right questions and critically evaluating non-human reasoning structures.

- The Value of Failure Analysis: The many failures Aletheia experienced are as valuable as its rare successes. Deep analysis of *why* it failed in systematic tests is likely to drive the next architectural leaps, pushing us toward models that seamlessly integrate intuitive probabilistic reasoning with unbreakable deterministic logic.
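The Insight Engine / Verification Engine split can be illustrated on a toy version of the conjecture-disproving work described at the start. Euler's polynomial n^2 + n + 41 is prime for every n from 0 through 39, which makes "it is always prime" a tempting conjecture. In this sketch, the hypothetical `insight_engine` is simply a seeded random guesser standing in for a model (nothing it returns is trusted), while `verification_engine` is a deterministic check that accepts a candidate only if it truly breaks the conjecture.

```python
import random


def is_prime(n: int) -> bool:
    """Deterministic trial-division primality test."""
    if n < 2:
        return False
    if n % 2 == 0:
        return n == 2
    d = 3
    while d * d <= n:
        if n % d == 0:
            return False
        d += 2
    return True


def insight_engine(rng: random.Random, k: int) -> list[int]:
    """Hypothetical 'oracle': proposes k candidate counterexamples.

    A random guesser stands in for a model here; its output carries no guarantees.
    """
    return [rng.randint(0, 100) for _ in range(k)]


def verification_engine(n: int) -> bool:
    """Accept n only if it genuinely refutes 'n^2 + n + 41 is prime for all n >= 0'."""
    return n >= 0 and not is_prime(n * n + n + 41)


rng = random.Random(42)
candidates = insight_engine(rng, 200)
confirmed = sorted({n for n in candidates if verification_engine(n)})
print(f"{len(candidates)} candidates proposed, {len(confirmed)} confirmed: {confirmed}")
```

Replacing the random guesser with a real model would change how good the candidates are, but not the guarantee: every number that survives has passed a deterministic check rather than being taken on trust. The classic counterexample is n = 40, since 40^2 + 40 + 41 = 1681 = 41^2.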

Actionable Insights for Technology Leaders

To capitalize on the breakthroughs while containing the risk, leaders must adopt a tiered strategy for AI deployment:

For R&D and Innovation Teams:

Action: Treat AI as an indispensable, yet unvetted, junior partner. Allocate dedicated time for AI-generated hypotheses, but require that all novel findings undergo triple verification by human domain experts before they are presented publicly or integrated into core products. Use AI for ideation velocity, not final sign-off.

For Operational and Security Teams:

Action: Do not integrate LLMs into automated decision loops unless they are heavily constrained by guardrails and external verification mechanisms. If using AI for code auditing or compliance checks, deploy it in an 'advisory mode' where its findings flag potential issues for human review, rather than automatically enacting changes.
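Advisory mode can be enforced structurally: the wrapper below only ever returns flags for a human and has no code path that edits the audited source. It also applies one simple external check, which is an illustrative assumption rather than an established standard: a finding is surfaced only if the line it cites exists and actually contains the code it quotes, so fabricated citations are set aside instead of reaching reviewers. `Finding`, `Flag`, and `advisory_review` are hypothetical names, and the AI auditor itself is not modeled.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """An issue reported by a (hypothetical) AI code auditor."""
    line: int      # 1-based line number the auditor cites
    quoted: str    # code the auditor claims appears on that line
    message: str


@dataclass(frozen=True)
class Flag:
    """A grounded finding queued for a human; never applied automatically."""
    line: int
    message: str
    status: str = "needs_human_review"


def advisory_review(source: str, findings: list[Finding]) -> tuple[list[Flag], list[Finding]]:
    """Turn AI findings into review flags without ever modifying `source`.

    Guardrail: a finding is kept only if the cited line exists and contains
    the quoted code; anything else is returned separately as unverifiable.
    """
    lines = source.splitlines()
    flags: list[Flag] = []
    unverifiable: list[Finding] = []
    for f in findings:
        quoted = f.quoted.strip()
        cited_ok = bool(quoted) and 1 <= f.line <= len(lines) and quoted in lines[f.line - 1]
        if cited_ok:
            flags.append(Flag(f.line, f.message))
        else:
            unverifiable.append(f)
    return flags, unverifiable


# Usage: one grounded finding, one that cites a line that does not exist.
code = "def check(pw):\n    return pw == 'admin'\n"
findings = [
    Finding(2, "pw == 'admin'", "Hard-coded credential comparison"),
    Finding(9, "eval(data)", "Use of eval on untrusted input"),
]
flags, unverifiable = advisory_review(code, findings)
for flag in flags:
    print(f"line {flag.line}: {flag.message} [{flag.status}]")
print(f"{len(unverifiable)} finding(s) set aside as unverifiable")
```

A line-and-quote match is a weak check on its own; it filters out invented citations but says nothing about whether the flagged issue is real, which remains the human reviewer's call.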

For Strategy and Investment:

Action: Prioritize research budgets toward Explainable AI (XAI) and Neuro-Symbolic AI—the fusion of deep learning intuition with rigid logical frameworks. The future competitive advantage lies not just in training bigger models, but in building *safer, more transparent* models that can bridge the gap between insight and certainty.

The success of Aletheia in isolated moments suggests that we are standing on the threshold of a new era of discovery. But its overall systematic performance serves as a vital warning: we have created machines capable of sporadic genius, but we have not yet engineered reliability. Navigating the next decade successfully depends on our ability to manage this brilliant instability through rigorous, thoughtful human governance.