GLM-5 Unleashed: How China's Open-Source LLM Redefines Global AI Power and Accessibility

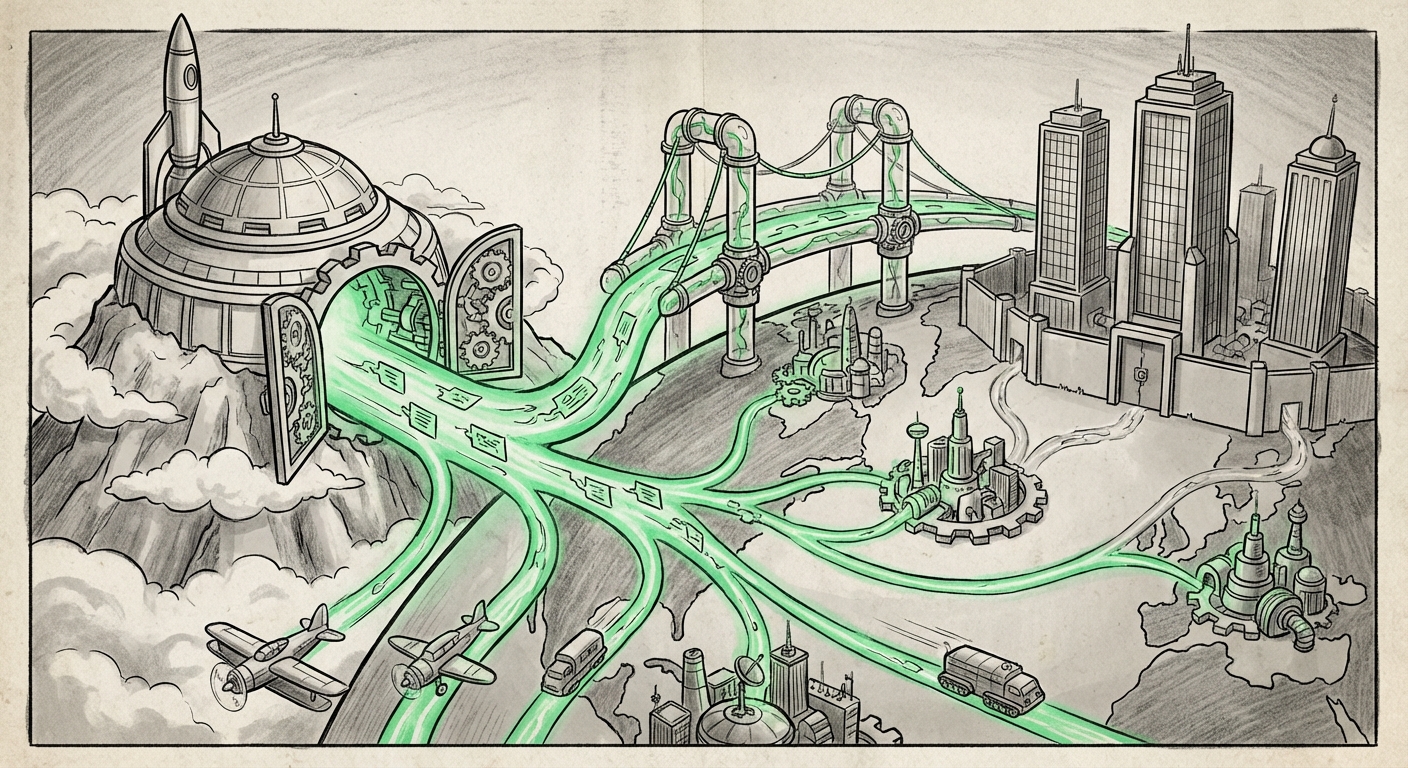

The landscape of Artificial Intelligence has long been characterized by intense, often secretive development behind closed doors at a handful of well-funded Western labs. Recent developments, however, signal a tectonic shift. The release of Zhipu AI’s GLM-5, a massive 744-billion-parameter model, is more than just another high-performance language model; it is a declaration of competitive intent, delivered under the permissive, open-source MIT license.

For too long, the cutting edge of frontier AI capabilities—models rivaling the best from OpenAI or Anthropic—was inaccessible to independent researchers and smaller enterprises. GLM-5 challenges this status quo by claiming parity with proprietary giants like Claude Opus 4.5 and GPT-5.2, particularly in crucial areas like coding and autonomous agent performance. This development forces us to re-examine the vectors of technological leadership, the democratization of powerful tools, and the geopolitical implications of who controls the foundational models shaping our digital future.

The Magnitude of the Claim: Parity at Scale

When Zhipu AI asserts that GLM-5 rivals models like GPT-5.2 and Claude Opus 4.5, they are making a bold statement about the state of Chinese AI capability. We are not just talking about a general-purpose chatbot; we are discussing models that excel at complex reasoning, multi-step problem-solving, and advanced coding—the bedrock of next-generation AI agents.

Technical Validation: Beyond the Hype Cycle

For the technical analyst and the skeptical researcher, the immediate next step is validation. Claiming parity is one thing; proving it under diverse, rigorous, and reproducible conditions is another. Independent benchmarks that compare GLM-5 against the proprietary leaders are essential here. If GLM-5 truly performs comparably on agentic benchmarks (tasks requiring planning, tool use, and execution correction), that would suggest its underlying architecture and training techniques are world-class. It also invites the community to scrutinize the training data, the architectural choices, and the evaluation methodologies employed by Zhipu.

For the business leader, this means the barrier to entry for using high-end AI logic is dropping dramatically. You no longer have to pay premium API access fees to the dominant players if a functionally equivalent, self-hostable model exists.

The Strategic Shockwave: The MIT License Mandate

The technical specifications are impressive, but the licensing choice is the true strategic disruptor. Releasing a model of this caliber under the MIT License is an act of calculated generosity designed to maximize adoption and impact. The MIT license is famously permissive; it allows virtually unrestricted use, modification, and distribution, even commercially.

Democratizing Frontier Models

Imagine an industrial engineer needing a hyper-specialized AI assistant for complex supply chain optimization. Previously, they might have been locked into the API ecosystem of a US giant, dealing with latency, data sovereignty concerns, and recurring costs. Now, with GLM-5, that engineer’s team can download the weights, fine-tune the model on their proprietary internal data within their own secure cloud environment, and deploy it without perpetual licensing fees.

This directly addresses the concerns of policy analysts and business strategists tracking data sovereignty and vendor lock-in. When a model is open source, the *control* over the model’s deployment shifts from the provider to the user. This is a powerful counter-narrative to the trend of increasingly closed, gated AI development.

We must analyze the strategic intent: Is Zhipu using open-source distribution as a rapid path to global developer mindshare, gathering feedback and contributions that proprietary labs must pay for? Or is this a move designed to embed Chinese-developed architecture deeply into global infrastructure, creating a de facto standard?

Contextualizing the Global Race: The Narrowing Gap

GLM-5 is not emerging in a vacuum. It is part of a sustained, well-funded effort within China to achieve technological independence and leadership in key AI domains. For investors and industry observers, this reinforces the trend: the world is rapidly moving toward a multi-polar AI development structure.

The traditional narrative positioned Western labs months, if not years, ahead of international competitors. While the US still leads in pure GPU compute aggregation, the *software efficiency*—the ability to squeeze world-class performance out of comparable training runs—appears to be rapidly equalizing. We are seeing Chinese firms not just catching up, but setting new benchmarks for accessibility while maintaining performance.

Implications for the "Closed" Ecosystem

The existence of a leading open-source model forces the proprietary incumbents to respond. If GLM-5 offers 95% of GPT-5.2’s capability for 0% licensing cost (assuming the user handles compute), the value proposition for paying top-tier API prices diminishes significantly, unless the proprietary models deliver truly differentiated, verifiable breakthroughs in safety or emergent reasoning capabilities.

This dynamic creates a fascinating bifurcation in the market: one path relies on highly controlled, premium, and often safer, closed models; the other champions rapid innovation, customization, and cost efficiency via accessible open weights.

Practical Implications: What Businesses Must Do Now

For enterprises looking to leverage Large Language Models effectively, the arrival of GLM-5 mandates a strategic pivot. Ignoring the implications of high-quality, open-source competition is no longer tenable.

1. Re-evaluating Build vs. Buy Decisions

The primary decision shifts from "Which API provider do we use?" to "Can we host a leading open model ourselves?" For highly regulated industries (finance, healthcare) or those with extreme latency requirements, self-hosting a model like GLM-5 becomes a compelling strategy. Hardware feasibility is the caveat: if the 744B model is too large for practical deployment outside of a massive data center, its open-source nature is less valuable than a smaller, highly optimized model.
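To make the hardware question concrete, here is a rough back-of-the-envelope sketch of how much memory the weights alone would occupy at common precisions. The 744B parameter count comes from the article; the 80 GB accelerator size is an assumption chosen for illustration, and the estimate deliberately ignores KV cache, activations, and runtime overhead, so real deployments would need more.

```python
import math

PARAMS = 744e9  # parameter count stated in the article

# Bytes per parameter for common weight formats (standard storage sizes).
BYTES_PER_PARAM = {"fp16/bf16": 2.0, "int8": 1.0, "int4": 0.5}

GPU_MEMORY_GB = 80  # assumption: a hypothetical 80 GB accelerator


def weight_memory_gb(params: float, bytes_per_param: float) -> float:
    """Memory for weights only, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return params * bytes_per_param / 1e9


def min_gpus(memory_gb: float, gpu_gb: float = GPU_MEMORY_GB) -> int:
    """Lower bound on device count; ignores KV cache, activations, overhead."""
    return math.ceil(memory_gb / gpu_gb)


for fmt, nbytes in BYTES_PER_PARAM.items():
    gb = weight_memory_gb(PARAMS, nbytes)
    print(f"{fmt:>10}: {gb:7.0f} GB of weights, at least {min_gpus(gb)} GPUs")
```

Even under these optimistic assumptions, the 16-bit weights alone come to roughly 1.5 TB, which is why quantization and multi-device inference figure so heavily in any self-hosting decision.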

2. The Rise of Agentic Workflows

The stated strength in "coding and agent benchmarks" is critical. AI agents are the next phase of AI integration—systems that don't just answer questions but execute complex digital tasks (e.g., managing cloud resources, debugging complex codebases, or conducting detailed market research). If GLM-5 provides a robust, customizable foundation for these agents, businesses must immediately begin prototyping agentic workflows using this accessible foundation.

3. Talent and Skill Diversification

Companies that have focused solely on prompt engineering for proprietary APIs may find themselves needing new skills: model quantization, fine-tuning techniques (like LoRA), and distributed inference management. The openness of GLM-5 encourages a deeper dive into the mechanics of AI, requiring teams to evolve from mere consumers of AI services to informed integrators and operators.
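As a small illustration of why techniques like LoRA matter for teams operating open weights, the sketch below compares the trainable parameter count of fully fine-tuning one weight matrix against adding a low-rank adapter to it. LoRA freezes the original matrix W (shape d_out x d_in) and trains two small matrices B (d_out x r) and A (r x d_in). The layer dimensions and rank here are hypothetical values chosen for illustration, not GLM-5's actual architecture.

```python
def full_finetune_params(d_in: int, d_out: int) -> int:
    """Trainable parameters when the whole d_out x d_in matrix is updated."""
    return d_in * d_out


def lora_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters for a LoRA adapter: B (d_out x r) plus A (r x d_in)."""
    return rank * (d_in + d_out)


# Hypothetical layer size and rank, for illustration only.
D_IN, D_OUT, RANK = 8192, 8192, 16

full = full_finetune_params(D_IN, D_OUT)
lora = lora_params(D_IN, D_OUT, RANK)

print(f"full fine-tune: {full:,} trainable parameters")
print(f"LoRA (r={RANK}): {lora:,} trainable parameters")
print(f"reduction:      {full / lora:.0f}x fewer trainable parameters")
```

The reduction grows as the rank shrinks relative to the layer width, which is what makes adapting very large models feasible on far less hardware than full fine-tuning would require.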

The Future Outlook: A Truly Competitive AI Frontier

The release of GLM-5 is a powerful indicator that the AI frontier is becoming decentralized. While the largest labs will continue to push the absolute physical boundaries of model size and capability (often behind closed doors), the "sweet spot" of high-performance, highly customizable, and immediately usable models is migrating rapidly toward the open ecosystem, fueled by international competition.

This democratization carries both opportunity and risk. The opportunity lies in vastly accelerated innovation across sectors previously priced out of advanced AI access. The risk lies in the speed at which powerful, potentially dual-use technology spreads without the standardized safety guardrails that proprietary labs attempt (with varying success) to enforce. Independent verification of safety and alignment standards for GLM-5 will become a critical area of focus for regulators and researchers alike.

Ultimately, Zhipu AI's move injects necessary competitive tension into the market. It validates the quality emerging from non-Western labs and hands powerful tools directly to the global developer community. The next 12 months will be defined by how quickly the open-source community can optimize, verify, and build upon this powerful new foundation, forever changing the accessibility equation in the race for Artificial General Intelligence.