The Open Frontier: How GLM-5’s MIT Release is Reshaping Global AI Economics and Power

The release of a genuinely competitive, very large foundation model under a maximally permissive license is a major event in technology. Zhipu AI’s GLM-5, a 744-billion-parameter system for which the company claims parity with proprietary leaders such as Claude Opus 4.5, is forcing a reassessment of where cutting-edge AI resides and who controls its deployment. This is not merely an incremental update; it is a strategic disruption that touches on technical capability, global licensing standards, and the intense geopolitical AI race.

To understand the impact of this release, we must look beyond the press release and examine the four themes behind it: technical validation, the power of the MIT license, the economic consequences for the industry, and the geopolitical context.

Beyond the Hype: Validating World-Class Open Performance

The core challenge any powerful open model faces is trust. When Zhipu claims GLM-5 rivals models accessible only through closed APIs, the technical community of developers, researchers, and engineers immediately demands proof. It needs assurance that the reported performance is neither inflated nor limited to a narrow set of tasks, which means independent comparisons of a 744B-parameter open model against proprietary systems on the same benchmarks.

As a simple analogy, imagine two highly advanced toy robots. One is controlled remotely by a big company (the proprietary model), and the other is given to everyone to keep and modify (GLM-5). If the company’s robot performs slightly better on a complex maze, that is interesting. But if the open robot performs just as well on the maze and can also be customized to cook pancakes, the utility of the open one skyrockets.

The focus here is on parameter efficiency and architectural choices. Is 744 billion parameters the magic number? Or has Zhipu achieved its results through superior data curation, improved training techniques, or architectures such as Mixture-of-Experts (MoE), in which only a subset of the parameters is active for each token? If external validation confirms strong performance on coding and reasoning tasks, it would show that the highest levels of AI competency are no longer exclusive to the handful of corporations capable of building closed-off, billion-dollar compute clusters.

Future Implication: The Rise of Specialized, Self-Hosted AI

If parity is validated, businesses can stop treating state-of-the-art (SOTA) models as something accessible only through a subscription. The focus shifts from *access* to *implementation*. For technical teams, this means effort can pivot toward efficiency and specialization rather than chasing the largest public benchmarks. It also allows smaller nations and research institutions to participate fully in the next wave of generative AI innovation.

The MIT License: Democratization Through Radical Openness

The decision to release GLM-5 under the MIT License is arguably the most significant strategic element. The MIT license is famously simple and permissive: it allows use, modification, redistribution, and commercial use, and asks only that the copyright and license notice be retained. This contrasts with the more restrictive licenses sometimes attached to other large open models, which may limit commercial use or impose additional usage conditions.

This fits a broader trend in Chinese open-source AI licensing. While the nation navigates complex technological sovereignty issues, making a foundational model freely available removes the single biggest barrier to global adoption: the vendor’s control over how the model may be used. Some policy analysts view this as a long-term play to establish architectural dominance by making the technology the default starting point for global development.

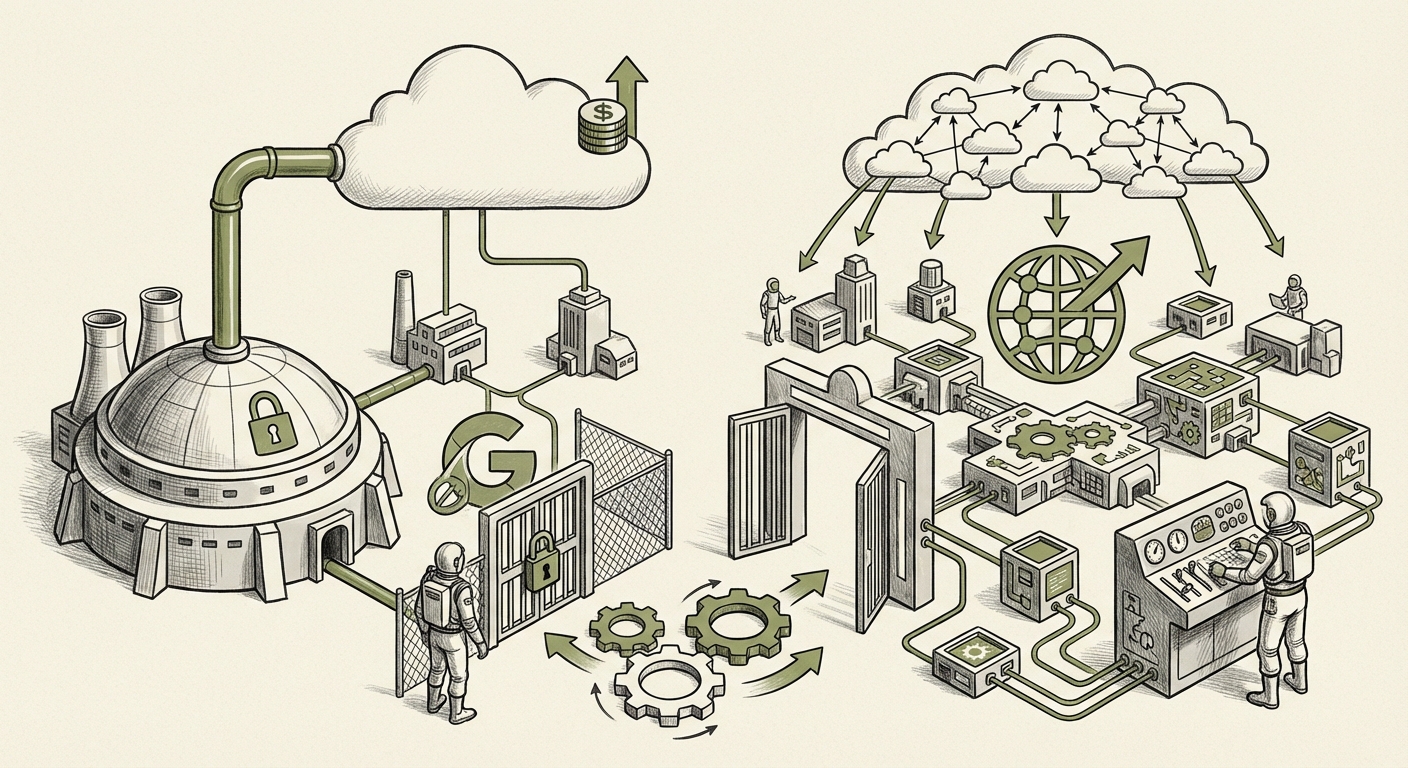

Economic Disruption: Breaking the Cloud Monopoly

This licensing strategy directly affects the operating costs of AI worldwide, and the most immediate effect is on cloud inference spending. For years, using models like GPT-4 meant paying per token, a cost that scales directly with usage and ties enterprises to a specific API provider.

With GLM-5, companies can download the full model weights. If they have the necessary high-end hardware (or can procure it), they convert much of the variable cost of API calls into a largely fixed infrastructure cost, plus ongoing power and operations. This is a strong incentive for risk-averse corporations worried about unpredictable monthly inference bills. For CTOs, it is a clear prompt to stress-test self-hosting options: the total cost of ownership for specialized, high-volume AI tasks may favor the open-source route now that the capability ceiling has been raised so high.
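The trade-off between per-token pricing and fixed infrastructure can be made concrete with a rough break-even estimate. The sketch below is illustrative only: the function names are hypothetical, and every figure in the example (API price, hardware cost, amortization period, operating cost) is a placeholder assumption, not a quoted price for GLM-5 or any provider.

```python
def monthly_api_cost(tokens_per_month: float, price_per_million_tokens: float) -> float:
    """Variable cost: scales linearly with usage."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens


def monthly_self_hosted_cost(
    hardware_cost: float,
    amortization_months: int,
    monthly_operating_cost: float,
) -> float:
    """Fixed cost: amortized hardware plus power, hosting, and staff.

    Treated as independent of token volume, which only holds while
    the cluster has spare capacity.
    """
    return hardware_cost / amortization_months + monthly_operating_cost


def break_even_tokens_per_month(
    price_per_million_tokens: float,
    hardware_cost: float,
    amortization_months: int,
    monthly_operating_cost: float,
) -> float:
    """Monthly token volume at which API spend equals self-hosting spend."""
    fixed = monthly_self_hosted_cost(
        hardware_cost, amortization_months, monthly_operating_cost
    )
    return fixed / price_per_million_tokens * 1_000_000


if __name__ == "__main__":
    # All numbers below are hypothetical placeholders.
    tokens = break_even_tokens_per_month(
        price_per_million_tokens=10.0,   # assumed blended API price (USD)
        hardware_cost=1_200_000.0,       # assumed cluster purchase price (USD)
        amortization_months=36,          # assumed depreciation period
        monthly_operating_cost=20_000.0, # assumed power, hosting, staff (USD)
    )
    print(f"Break-even volume: {tokens / 1e9:.2f} billion tokens per month")
```

Below the break-even volume the API is cheaper; above it, self-hosting wins, provided the hardware can actually serve that volume. A real evaluation would also account for utilization, latency targets, and engineering time.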

Geopolitical Context: Strategy, Funding, and Influence

No major AI release occurs in a vacuum, especially when it originates from a nation currently subject to restrictions on advanced technology imports. Zhipu AI’s funding and international partnerships provide the background needed to understand the strategic depth behind GLM-5.

Zhipu AI is not just a startup; it operates within a broader national technological ecosystem often supported by significant strategic investment. Releasing GLM-5 openly serves as a powerful form of technological diplomacy. It says to the global developer community: "Our foundational science is world-class, and we invite you to build upon it."

This strategy potentially mitigates the impact of restrictions on advanced hardware imports. If the open-source community rapidly develops highly optimized ways to run, compress, and deploy GLM-5—ways that perhaps circumvent the restrictions placed on specific GPU models—then the restriction on hardware becomes less damaging to the overall pace of innovation.

Actionable Insights for Enterprise Adoption

Businesses must adjust their AI sourcing strategy based on this new reality:

- The 'Open' Security Review: For organizations dealing with critical infrastructure or sensitive IP, having the weights allows deeper security auditing than a closed API permits. You can run the model in an isolated environment, inspect its architecture, and test its behavior directly. Note that weights alone do not reveal the training data; verifying that depends on what the developer discloses separately.

- Decentralization of Inference: Begin planning for hybrid deployment models. Use proprietary APIs for rapid prototyping and highly novel tasks, but plan to migrate high-volume, routine tasks to self-hosted, fine-tuned versions of models like GLM-5 to lock in long-term cost savings.

- Talent Acquisition Focus: Prioritize hiring talent skilled in model serving and deployment tooling (such as vLLM or ONNX Runtime) and in quantization techniques, as these skills are what unlock the financial benefits of open models.
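To see why quantization skills matter for a model of this size, it helps to estimate how much memory the weights alone occupy at different precisions. The sketch below uses the 744-billion-parameter figure from the article; the helper names and the 80 GB per-device capacity are assumptions for illustration, and the estimate ignores KV cache, activations, and the small overhead of quantization scales.

```python
import math

# Parameter count as stated in the article.
GLM5_PARAMS = 744_000_000_000

# Storage per parameter at common precisions (weights only).
BYTES_PER_PARAM = {
    "fp16": 2.0,
    "int8": 1.0,
    "int4": 0.5,
}


def weight_memory_gb(num_params: int, precision: str) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


def min_devices(num_params: int, precision: str, memory_per_device_gb: float) -> int:
    """Lower bound on accelerators needed just to hold the weights."""
    return math.ceil(weight_memory_gb(num_params, precision) / memory_per_device_gb)


if __name__ == "__main__":
    device_gb = 80.0  # assumed accelerator memory, for illustration only
    for precision in BYTES_PER_PARAM:
        size = weight_memory_gb(GLM5_PARAMS, precision)
        devices = min_devices(GLM5_PARAMS, precision, device_gb)
        print(f"{precision}: about {size:.0f} GB of weights, at least {devices} devices")
```

Under these assumptions, moving from 16-bit to 4-bit weights cuts the storage requirement to a quarter, which is the difference between a multi-node deployment and a single server. Actual requirements will be higher once runtime memory is included.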

Conclusion: A True Multi-Polar AI World Emerges

The release of Zhipu’s GLM-5 is a watershed moment that reinforces the trend toward a more multi-polar world for frontier AI development. It validates the global talent pool outside the immediate control of the Silicon Valley incumbents and aggressively pushes the envelope on accessibility through the MIT license.

For the future of AI, this could mean faster progress, lower deployment costs, and a richer diversity of tools available to solve human problems. The power balance is shifting: developers now have a powerful, unrestricted option that competes directly with the most expensive closed services. The next few months will be defined by how thoroughly the technical community validates these parity claims and how quickly global enterprises integrate this open-source foundation into their core operations. If both happen, the economics of artificial intelligence will change substantially.