GLM-5 Unleashed: How China's Open-Source Powerhouse Redefines the Global AI Race

The landscape of generative artificial intelligence is undergoing a seismic shift. For years, the narrative has been dominated by closed, proprietary ecosystems controlled by a handful of well-funded Western giants. The recent announcement from Zhipu AI, a prominent Chinese laboratory, challenges that picture. Its release of **GLM-5**, a 744-billion-parameter model distributed under the permissive **MIT license**, signals a claim of technical parity and an open challenge to the existing order.

This development is not just about a new benchmark score; it is a strategic maneuver that touches upon accessibility, geopolitical competition, and the very speed at which global AI innovation will proceed. To truly grasp the implications, we must examine three core areas: technical credibility, licensing strategy, and competitive positioning.

The Technical Gauntlet: Claims of Parity and the Need for Validation

The headline claim accompanying GLM-5’s debut is staggering: it rivals industry leaders like Anthropic’s Claude Opus 4.5 and the rumored capabilities of OpenAI’s next-generation model on complex reasoning and coding tasks. For the AI community, this immediately raises the question: Is it true?

When a major lab makes a claim of this magnitude, the first step for developers and researchers is rigorous validation. That means looking to established leaderboards and community stress tests for independent confirmation of performance on standardized benchmarks such as HumanEval (coding) and MMLU (general knowledge).

If GLM-5 genuinely achieves state-of-the-art (SOTA) performance in crucial domains like advanced coding and complex agentic workflows, the gap between the leading proprietary API services and the best available open weights has effectively closed. For developers, this translates directly into capability: they can download, inspect, modify, and run cutting-edge reasoning power on infrastructure they control, without per-token API fees or reliance on a vendor's servers, provided they have hardware large enough to host a model of this size. This democratization of raw capability is transformative.
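To put "run locally" in perspective, here is a rough, weights-only estimate of how much memory a 744-billion-parameter model needs at common storage precisions. It is a back-of-the-envelope sketch: the bytes-per-parameter figures are general properties of those number formats, not published GLM-5 deployment requirements, and the estimate ignores activations, KV cache, and runtime overhead.

```python
# Weights-only memory estimate for a 744-billion-parameter model.
PARAMS = 744e9  # parameter count quoted in the article

# Bytes per parameter for common storage precisions (general facts about
# the formats, not GLM-5 specifics).
BYTES_PER_PARAM = {"fp16/bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params: float, bytes_per_param: float) -> float:
    """Return the storage needed for the weights in gigabytes (1 GB = 1e9 bytes)."""
    return params * bytes_per_param / 1e9


for precision, nbytes in BYTES_PER_PARAM.items():
    print(f"{precision:>9}: {weight_memory_gb(PARAMS, nbytes):,.0f} GB")
# fp16/bf16: 1,488 GB
#      int8: 744 GB
#      int4: 372 GB
```

Even with aggressive 4-bit quantization, the weights alone come to several hundred gigabytes, so "local" here realistically means a multi-accelerator server rather than a workstation.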

What This Means for the Future of AI Development

If validated, GLM-5 would vindicate a more decentralized approach to LLM scaling. It would show that world-class performance is achievable outside the highly centralized, multi-billion-dollar compute clusters held primarily by US tech giants, which in turn encourages faster iteration cycles globally. Developers in smaller labs, universities, or even startups with modest resources can stand on the shoulders of a 744B-parameter giant, fine-tuning it for niche languages, specific regulatory environments, or proprietary enterprise tasks.

The Licensing Gambit: The Power of the MIT License

Perhaps even more significant than the model’s size is its deployment license: the MIT License. This is the ultimate open-source signal, and it has profound implications for AI governance and adoption.

Unlike some earlier "open" models that shipped with significant usage restrictions (for example, prohibiting use in competing commercial products or imposing acceptable-use conditions), the MIT license is about as permissive as licenses get. Its only real condition is that the copyright and permission notice travel with copies of the work; beyond that, it essentially says: "Do whatever you want with this code and these weights, and don't hold us liable."

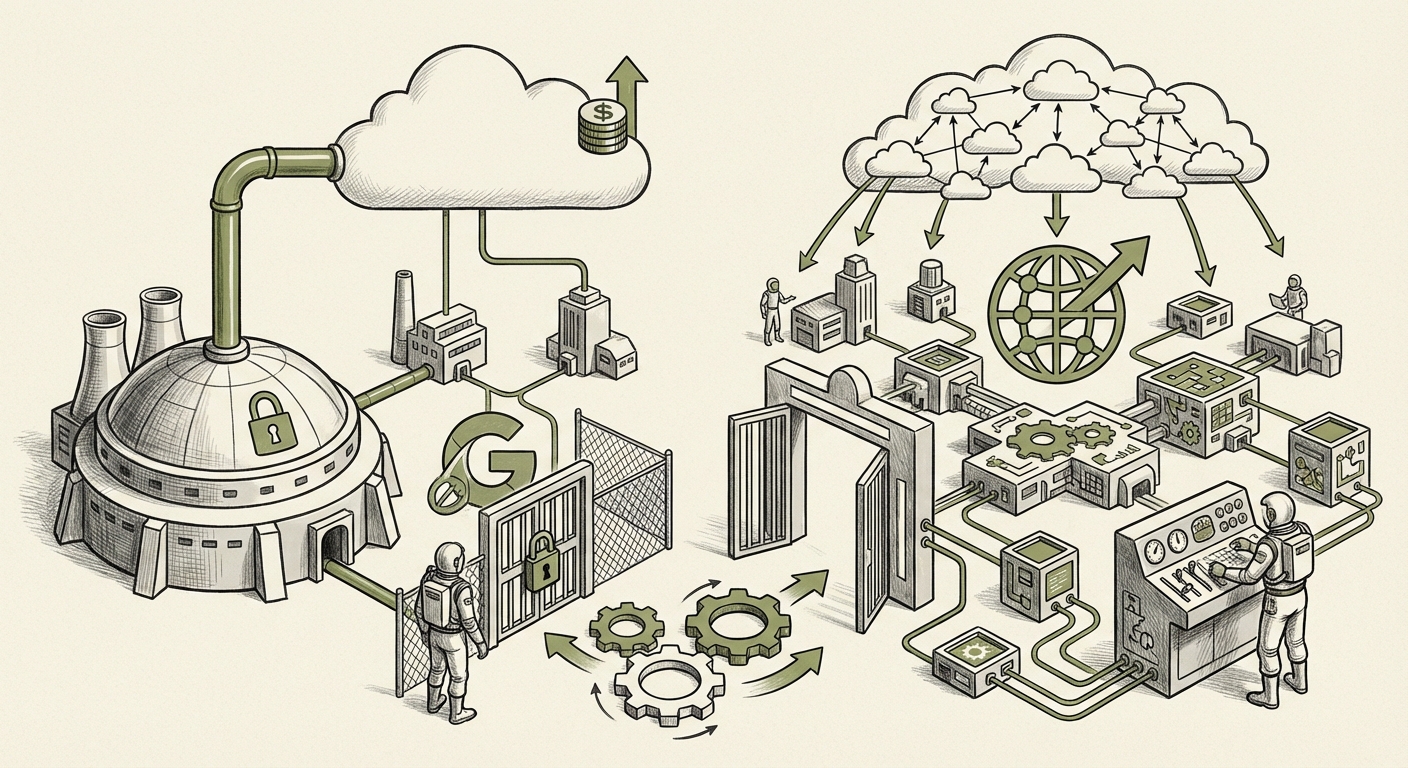

This is where AI policy analysts and legal experts come in, because the move directly challenges the prevailing philosophy of the proprietary leaders. When powerful models are locked behind APIs, providers keep control through pricing, usage policies, and access limits. By releasing GLM-5 under MIT, Zhipu gives up that control in exchange for the widest possible global footprint, encouraging developers worldwide to adopt and build upon its architecture.

Practical Implications for Businesses and Society

For businesses, the MIT license offers unprecedented flexibility:

- Data Sovereignty: Companies handling sensitive data (finance, healthcare) can run GLM-5 entirely on their own hardware, ensuring data never leaves their controlled environment.

- Customization and Fine-Tuning: Deep specialization becomes far cheaper. Instead of paying per-token for a proprietary model, a firm can invest once in fine-tuning the open weights for their specific internal documentation or technical jargon.

- Avoiding Vendor Lock-in: Businesses are no longer tethered to the pricing structure or sudden policy changes of a single provider (like OpenAI or Google). If one service gets too expensive or restricts certain use cases, they have a fully capable local alternative ready to deploy.
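The "invest once instead of paying per token" argument is at heart a break-even calculation. The sketch below shows its shape; the function name and every dollar figure in the usage example are hypothetical placeholders, not real pricing for GLM-5 or any provider, and the model deliberately includes ongoing hosting cost because self-hosting is not free after the initial investment.

```python
import math
from typing import Optional


def breakeven_months(
    upfront_cost: float,          # one-time fine-tuning and setup spend
    monthly_hosting_cost: float,  # recurring cost of running the model yourself
    monthly_api_cost: float,      # recurring per-token spend on a hosted API
) -> Optional[int]:
    """Return the first month in which cumulative self-hosting cost is no
    higher than cumulative API cost, or None if that never happens."""
    monthly_saving = monthly_api_cost - monthly_hosting_cost
    if monthly_saving <= 0:
        return None  # hosting costs at least as much as the API every month
    return math.ceil(upfront_cost / monthly_saving)


# Hypothetical placeholder figures, for illustration only.
print(breakeven_months(120_000, 30_000, 50_000))  # 6
print(breakeven_months(120_000, 50_000, 30_000))  # None
```

The second call is the important caveat: if running the model in-house costs more per month than the API bill it replaces, the one-time investment never pays back.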

However, this accessibility also introduces misuse risks. With no usage restrictions attached, analysts will need to watch closely how such a powerful model is deployed downstream.

The Geopolitical Chessboard: Strategy vs. Openness

The release of a leading-edge Chinese model to the global community under a US-originating open-source license cannot be viewed outside the context of ongoing technological competition. It feeds directly into the debate over whether China's LLM strategy favors open-source or proprietary development.

In the face of Western export controls aimed at slowing China's progress in cutting-edge hardware (like advanced GPUs), releasing a world-class model openly serves a dual purpose. It secures global developer adoption, fostering an ecosystem around Zhipu’s technology stack, and subtly undercuts the walled-garden approach favored by many US competitors. It’s a soft-power play using technological excellence.

For geopolitical tech strategists and investors, this indicates a shift. While much R&D in China remains highly centralized and proprietary, strategically powerful tools are being pushed into the open so that the underlying architecture can become a global standard. This contrasts sharply with the cautious, tiered release strategies of leading Western labs, where models are typically rolled out in stages or with significant guardrails.

Actionable Insights for Global Technology Leaders

The emergence of GLM-5 forces executives everywhere to update their AI strategy:

- Audit Your Cloud Dependency: If your AI strategy relies 100% on proprietary APIs, you are exposed to future price hikes or geopolitical friction. Begin architecting solutions that can seamlessly switch between proprietary and powerful open-source backends.

- Invest in Open-Source Talent: The value is shifting from simply *using* models to *adapting* and *hosting* them. Talent skilled in fine-tuning, quantization, and deploying large open models will become premium assets.

- Prepare for Benchmark Realism: Do not wait for official announcements from Western labs. Actively test models like GLM-5 against your specific business use cases now. The performance parity may already be here for your needs.
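Two of the recommendations above, switching between backends and testing against your own use cases, can share one small piece of architecture. The following is a minimal sketch under stated assumptions: `ChatBackend`, `CallableBackend`, `EvalCase`, `pass_rate`, and `pick_backend` are hypothetical names invented for illustration, and the two backends are canned stubs rather than real clients for GLM-5 or any hosted API.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Protocol, Sequence


class ChatBackend(Protocol):
    """Anything that turns a prompt into text, whether hosted or self-run."""
    name: str

    def complete(self, prompt: str) -> str: ...


@dataclass
class CallableBackend:
    """Adapter that wraps a plain function; swap in a real client here."""
    name: str
    fn: Callable[[str], str]

    def complete(self, prompt: str) -> str:
        return self.fn(prompt)


@dataclass
class EvalCase:
    """One business-specific check: a prompt plus a pass/fail predicate."""
    prompt: str
    check: Callable[[str], bool]


def pass_rate(backend: ChatBackend, cases: Sequence[EvalCase]) -> float:
    """Fraction of cases whose output satisfies the case's predicate."""
    if not cases:
        raise ValueError("need at least one evaluation case")
    passed = sum(1 for c in cases if c.check(backend.complete(c.prompt)))
    return passed / len(cases)


def pick_backend(
    backends: Sequence[ChatBackend], cases: Sequence[EvalCase], threshold: float
) -> Optional[ChatBackend]:
    """Return the first backend, in preference order, that meets the threshold."""
    for backend in backends:
        if pass_rate(backend, cases) >= threshold:
            return backend
    return None


# Canned stub backends standing in for real model clients (illustrative only).
backend_a = CallableBackend("backend-a-stub", lambda p: "4" if "2 + 2" in p else "unknown")
backend_b = CallableBackend(
    "backend-b-stub",
    lambda p: "4" if "2 + 2" in p else ("Paris" if "France" in p else "unknown"),
)

cases = [
    EvalCase("What is 2 + 2?", lambda out: "4" in out),
    EvalCase("What is the capital of France?", lambda out: "paris" in out.lower()),
]

print(pass_rate(backend_a, cases))  # 0.5
print(pass_rate(backend_b, cases))  # 1.0
chosen = pick_backend([backend_a, backend_b], cases, threshold=0.9)
print(chosen.name if chosen else "no backend met the threshold")  # backend-b-stub
```

Because application code depends only on the `complete` method, replacing a proprietary API with self-hosted open weights (or the reverse) becomes a configuration change, and the same evaluation cases can be rerun whenever a new model appears.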

The Future: Decentralization and Accelerated Innovation

What does the confluence of these three factors—SOTA performance, permissive licensing, and geopolitical competition—mean for the long-term trajectory of AI? It signals an acceleration toward decentralization.

The era where only three or four companies could truly advance the frontier of LLMs may be ending. When a 744B parameter model, capable of complex reasoning, is freely available for anyone to run and modify, the pace of derivative innovation skyrockets. We can expect an explosion in specialized, highly efficient, and domain-specific models built upon the GLM-5 foundation.

While proprietary models will continue to lead on the absolute bleeding edge, often leveraging access to unique, secret data or superior compute infrastructure, the *utility curve* for the general public and most enterprises will flatten much faster due to open-source releases like GLM-5. The gap between the best proprietary system and the best *available open* system is shrinking rapidly, fundamentally shifting the economic calculus for AI adoption across the globe.