The Great AI Model Purge: Why OpenAI Is Retiring GPT-4o and What It Signals for the Future

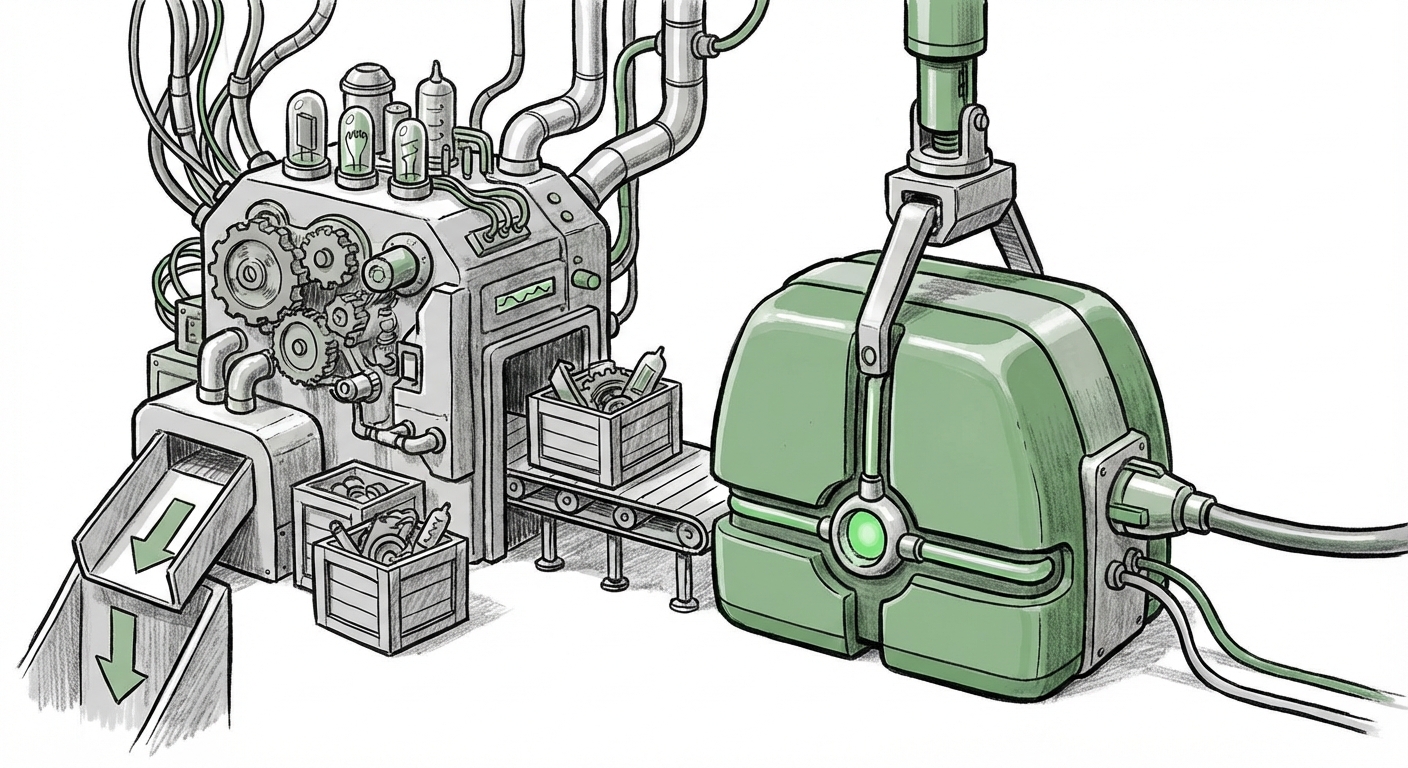

The pace of innovation in Artificial Intelligence is not just fast; it’s bordering on frantic. Yesterday’s state-of-the-art is today’s legacy system, destined for the digital scrapyard. A recent announcement detailing the retirement of OpenAI’s GPT-4o alongside several older models underscores this relentless cycle. While this might appear to be a simple, mundane technical cleanup—a footnote in the release notes—the decision to sunset a model as significant as GPT-4o hints at deeper strategic maneuvers shaping the very foundation of the next generation of AI.

As an AI technology analyst, my focus shifts immediately from *what* is being removed to *why* it is being removed, and what the removal allows OpenAI to build next. This is less about saying goodbye to old code and more about setting the stage for an accelerated future.

The Economics of Speed: Why Consolidation Trumps Legacy Support

The most immediate, non-sentimental reason for dropping older models is infrastructure and operational cost. Think of it like an auto manufacturer trying to service two different engine types from two different decades: it doubles the complexity and the bill. GPT-4o was introduced as the "omni-model," boasting faster speeds and lower operational costs than its predecessors, particularly GPT-4 Turbo. The same logic that let GPT-4o displace those predecessors now applies to GPT-4o itself.

When a company like OpenAI supports multiple active endpoints, each requiring distinct model weights, fine-tuning pathways, and serving clusters, the cost multiplies. If the vast majority of users have already migrated to a superior, cheaper option, every older endpoint left running is technical debt that the provider pays for in hardware and engineering time.

We must consider the economics of running these systems. Inference, the process of getting an answer from the AI, is expensive at scale. If a successor requires 20% less computation to deliver a 10% better result than the model it is replacing, the financial argument for immediate retirement is overwhelming. This is consistent with industry trends that prioritize **efficiency and cost-per-token** above all else for long-term sustainability. For developers relying on the API, this rapid turnover means that AI deployment is no longer a "set it and forget it" operation; it demands continuous re-evaluation to capture cost savings and performance gains.
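To make the hypothetical figures above concrete, here is a minimal sketch of the arithmetic. It assumes that "quality" can be collapsed into a single score and that cost scales linearly with computation; both are simplifications, and the function name is illustrative only.

```python
def relative_cost_per_quality(compute_ratio: float, quality_ratio: float) -> float:
    """Cost per unit of quality for the new model, relative to the old one.

    compute_ratio: new compute / old compute (0.80 means 20% less computation)
    quality_ratio: new quality / old quality (1.10 means a 10% better result)
    """
    return compute_ratio / quality_ratio


# The article's hypothetical: 20% less computation, 10% better result.
ratio = relative_cost_per_quality(0.80, 1.10)
savings = 1 - ratio

print(f"Relative cost per unit of quality: {ratio:.3f}")  # 0.727
print(f"Effective savings: {savings:.1%}")                # 27.3%
```

Under these assumptions the two improvements compound: the newer model delivers each unit of quality for roughly 27% less, which is a larger gap than either headline number suggests on its own.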

Actionable Insight for Businesses (Technical Audience)

CTOs and infrastructure leads must design AI pipelines with built-in abstraction layers. Your application logic should interface with a conceptual model layer (e.g., "Use the best current multimodal model") rather than hard-coding API calls to specific, named versions (e.g., `gpt-4o-2024-05-13`). This shields your operations from sudden deprecations, allowing you to benefit from cost reductions without breaking production builds.
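One way to sketch such an abstraction layer is a small registry that maps a role (what the application wants) to an ordered list of concrete model versions. Everything below is hypothetical: the registry layout, the `resolve_model` helper, the placeholder retirement date, and the `example-successor-model` name are assumptions for illustration, not part of any vendor API.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class ModelEntry:
    model_id: str
    retires_on: Optional[date] = None  # None means no retirement announced


# Hypothetical registry; in practice this would live in configuration,
# so a deprecation becomes a config change rather than a code change.
MODEL_REGISTRY = {
    "best-multimodal": [
        # The retirement date here is a placeholder, not an announced date.
        ModelEntry("gpt-4o-2024-05-13", retires_on=date(2026, 1, 1)),
        ModelEntry("example-successor-model"),  # made-up model name
    ],
}


def resolve_model(role: str, today: date) -> str:
    """Return the first model configured for `role` that is still active."""
    for entry in MODEL_REGISTRY[role]:
        if entry.retires_on is None or today < entry.retires_on:
            return entry.model_id
    raise LookupError(f"no active model configured for role {role!r}")


# Application code asks for a role, never for a pinned version string.
print(resolve_model("best-multimodal", date(2025, 6, 1)))
print(resolve_model("best-multimodal", date(2026, 6, 1)))
```

The design choice is that application code only ever names the role. When a version is retired, the fallback takes over on the configured date, and the change can be tested ahead of time by passing a future `today`.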

The Emotional Interface: Backlash and the Humanization of AI

The second, more nuanced layer to this story involves user perception. The source article noted the "emotional attachments" users form. This is amplified when dealing with highly expressive models like those powering GPT-4o’s voice interface.

When GPT-4o launched, its responsiveness and eerily human tone sparked significant public conversation, even triggering controversy over likenesses (e.g., the Scarlett Johansson voice concern). Users were quick to attribute personalities to these models. When an established interface, even a technically inferior one, is abruptly removed, it feels like a betrayal or a forced change in a working relationship.

For the average user, the retirement of a model isn't about optimizing GPU utilization; it's about the sudden disappearance of a familiar tool. This dynamic highlights a critical future implication: **as AI becomes seamlessly integrated into daily workflows, its personality and consistency become part of the user experience.** Companies like OpenAI must become masters of managing this "AI persona lifecycle" to avoid alienating their user base, even when technical superiority dictates a change.

The Competitive Mirror: How Rivals Handle Lifecycle Management

This aggressive deprecation pace is also a direct response to competitive pressure. Google’s Gemini and Anthropic’s Claude are relentlessly closing the gap. In this environment, stagnation means death. If OpenAI leaves an older, slightly less capable model available, it gives competitors an opening to poach users looking for stability or specific feature sets.

By forcing the pivot to its newest standard, OpenAI ensures its developer ecosystem is concentrated on the bleeding edge, where its current advantages lie. Comparing this with how rivals manage their own model versions, often with longer transition periods, reveals the inherent risk OpenAI takes by adopting this 'rip the band-aid off' strategy. A maturing market demands that companies quickly prove the *next* model is worth the switch, or risk user churn.

The Future Implication: Consolidation Precedes Specialization

What comes after the sunsetting of the powerful, generalist GPT-4o? The industry often follows a pattern: build a massive, general intelligence, consolidate it for efficiency, and then use that efficient base to launch specialized versions.

This massive cleanup likely precedes the widespread rollout of next-generation, potentially smaller, more focused models. We are already seeing significant industry interest in **Mixture of Experts (MoE)** architectures, which activate only a subset of a model's parameters for each token, and in models optimized for **edge deployment** (running directly on phones or laptops). Efficient models of this kind are well suited to specific tasks, such as processing voice commands instantly or handling quick summarization, without the overhead of a full-size GPT-4o.

If OpenAI consolidates its main cloud offering onto a single, highly efficient architecture, it frees up resources and architectural blueprints to aggressively push smaller, domain-specific models. This aligns perfectly with the broader trend of LLMs moving off the massive cloud servers and onto our personal devices.

The Shift to Edge AI

For developers, this means future innovation won't just be about calling the biggest model in the cloud. It will be about knowing which small, fast, task-specific model to deploy locally for maximum speed and data privacy. The retirement of a large model suggests the focus is moving from *general capability* to *efficient deployment*. This is a fundamental shift from prioritizing raw power to prioritizing ubiquitous accessibility.
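As a rough illustration of that decision, the sketch below routes a task either to a local model or to a cloud model. The task kinds, the 2,000-token limit, and the routing rules are all assumptions chosen for the example; a real deployment would tune them against measured latency, accuracy, and privacy requirements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    kind: str          # e.g. "voice_command", "summarize", "long_reasoning"
    sensitive: bool    # True if the input contains private data
    input_tokens: int


# Assumed values for illustration only.
LOCAL_KINDS = {"voice_command", "summarize"}
LOCAL_TOKEN_LIMIT = 2_000


def choose_target(task: Task) -> str:
    """Return "local" or "cloud" for a task, using simple assumed rules."""
    # Inputs too large for the on-device model must go to the cloud,
    # even if they are sensitive (a real system might redact them first).
    if task.input_tokens > LOCAL_TOKEN_LIMIT:
        return "cloud"
    # Keep private data and lightweight task kinds on the device.
    if task.sensitive or task.kind in LOCAL_KINDS:
        return "local"
    return "cloud"


print(choose_target(Task("voice_command", sensitive=False, input_tokens=50)))
print(choose_target(Task("long_reasoning", sensitive=False, input_tokens=500)))
```

The point of the sketch is that the routing decision becomes ordinary application logic: speed and privacy push work toward the device, and only tasks that exceed the local model's limits fall back to the cloud.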

Societal Impact: Dealing with Digital Amnesia

On a broader, societal level, the rapid deprecation of models creates a problem of digital history and reproducibility. Researchers, journalists, and even hobbyists who relied on the specific output characteristics of a retired model—its tone, its knowledge cutoff, or its biases—suddenly find their historical records invalidated or difficult to replicate.

If an AI chatbot gave a specific set of instructions last year, and that model version is retired, proving what the AI *actually* said or recommended becomes a challenge of accessing archived—and often costly—APIs or obscure private logs.

This forces us to confront the ethics of digital amnesia in AI development. If the AI we interact with today is fundamentally different from the one we used six months ago, how do we maintain accountability? The industry must standardize better version logging and perhaps offer long-term, read-only access to critical retired models for archival and auditing purposes.
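On the application side, better version logging can start with something as simple as storing the exact model identifier next to every response. The sketch below is one hypothetical way to do that, with a SHA-256 digest added so later edits to a record are detectable; the record fields and function names are assumptions, not an industry standard.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional


def _digest(body: dict) -> str:
    """SHA-256 over a canonical (sorted-key) JSON encoding of the record."""
    canonical = json.dumps(body, sort_keys=True, ensure_ascii=False)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def make_audit_record(model_id: str, prompt: str, response: str,
                      timestamp: Optional[datetime] = None) -> dict:
    """Build a log record tying a response to the exact model version."""
    ts = timestamp or datetime.now(timezone.utc)
    record = {
        "model_id": model_id,          # the pinned version, not an alias
        "timestamp": ts.isoformat(),
        "prompt": prompt,
        "response": response,
    }
    record["sha256"] = _digest(record)
    return record


def verify_audit_record(record: dict) -> bool:
    """Check that the record's content still matches its stored digest."""
    body = {k: v for k, v in record.items() if k != "sha256"}
    return _digest(body) == record.get("sha256")


rec = make_audit_record("gpt-4o-2024-05-13", "How do I reset my router?",
                        "Hold the reset button for ten seconds.")
print(verify_audit_record(rec))
```

A record like this does not bring a retired model back, but it does let an organization show what a specific version said at a specific time, which is the accountability gap described above.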

Conclusion: Embracing the Velocity of Change

The retirement of GPT-4o is a high-visibility indicator of the underlying forces driving the AI industry: cost optimization, competitive warfare, and architectural evolution toward multimodal efficiency. This isn't just OpenAI cleaning house; it’s a signal flare to the entire ecosystem.

For businesses, the takeaway is clear: **Agility is the new moat.** Your technical stack must be nimble enough to absorb these sudden leaps in performance and efficiency without fracturing your operations. For developers, it means mastering the art of abstraction and preparing for a world where AI logic is modular, rapidly evolving, and increasingly deployed where you least expect it—on the edge.

We are moving past the era of proving large models *can* exist; we are entering the era of proving they can exist *affordably, fast, and everywhere*. The quick sunsetting of GPT-4o is the sound of the gate closing on the previous generation, pushing us all toward the next, faster frontier.