The Reasoning Race: How Gemini 3's "Deep Think" Redefines AI for Science and Engineering

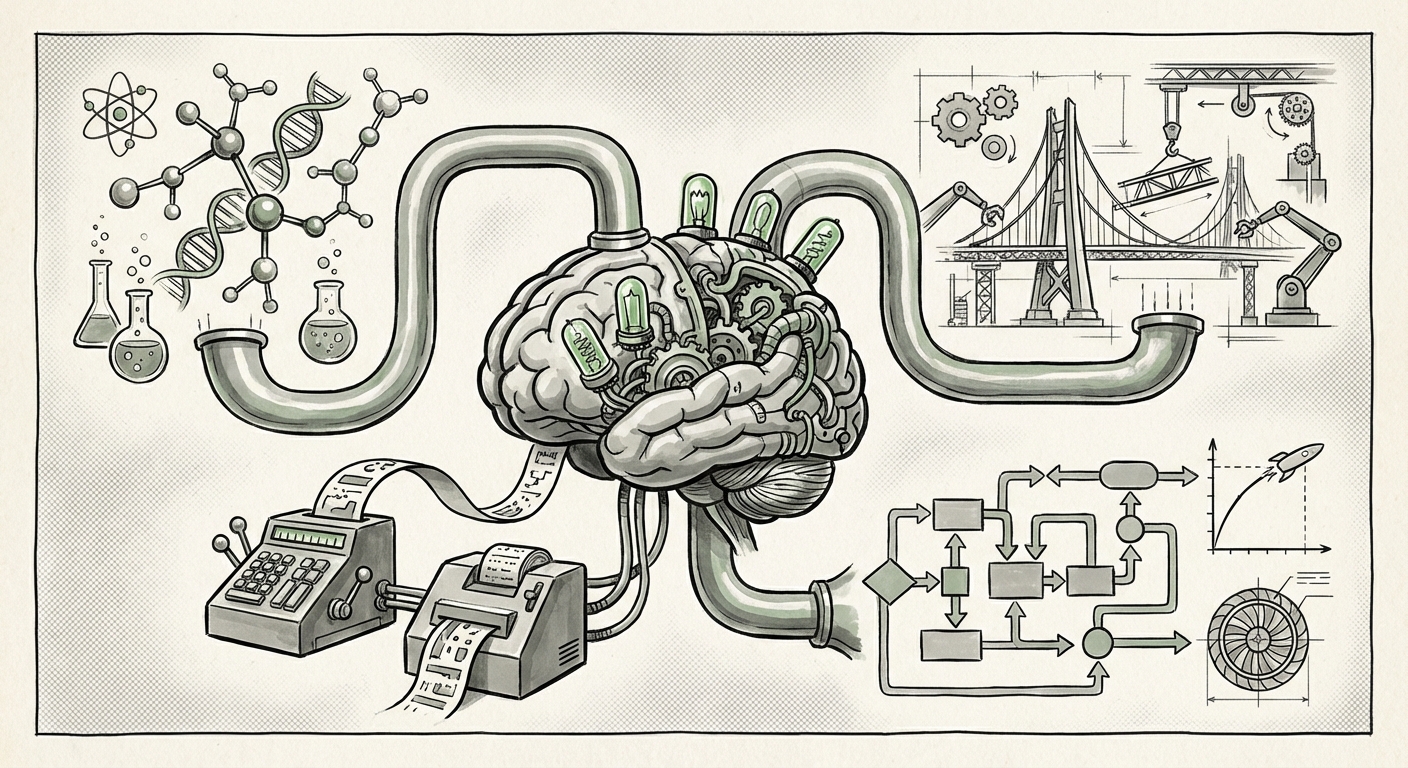

The field of artificial intelligence has often been characterized by breathtaking leaps in scale: more parameters, more data, bigger models. However, Google DeepMind's recent announcement of an upgraded Gemini 3, specifically its enhanced "Deep Think" mode, suggests the industry is entering a crucial new phase: the **Age of Reliable Reasoning**.

This isn't just about building a better chatbot. The upgrade challenges the status quo: it aims to turn foundation models from sophisticated assistants into genuine partners in complex scientific discovery and rigorous engineering design. A vendor's claim of major gains on reasoning and coding benchmarks does not prove such a shift by itself, but it is significant enough to warrant close analysis.

The Shift: From Chatbot to Scientific Collaborator

For years, the primary interaction with Large Language Models (LLMs) centered on creativity, summarization, and general knowledge retrieval. LLMs are powerful at these tasks, which often tolerate a degree of "hallucination" or inaccuracy. That tolerance is acceptable in brainstorming but catastrophic in applied science or critical infrastructure design.

"Deep Think" appears designed to close this gap. Think of it like upgrading from a sharp student who can ace multiple-choice tests to a seasoned engineer who can design a stable bridge. Engineering tasks and complex scientific modeling demand:

- Multi-Step Planning: Breaking down massive problems into sequential, verifiable steps.

- Constraint Adherence: Respecting the rigid laws of physics, chemistry, or computational limits.

- Symbolic Manipulation: Accurately managing abstract mathematical and logical relationships.
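
As a minimal sketch of the first two requirements, the Python below models a plan as an ordered list of steps, each paired with a check that must pass before the next step runs. The `Step` type, the `run_plan` helper, and the rod-sizing numbers are hypothetical illustrations, not part of Gemini 3 or any Google API.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

State = Dict[str, float]

@dataclass
class Step:
    name: str
    action: Callable[[State], State]  # computes new values from the current state
    check: Callable[[State], bool]    # constraint that must hold after the action

def run_plan(steps: List[Step], state: State) -> State:
    """Run steps in order; stop at the first step whose check fails."""
    for step in steps:
        state = {**state, **step.action(state)}
        if not step.check(state):
            raise ValueError(f"step '{step.name}' violated its constraint")
    return state

# Hypothetical example: check a square rod under an axial load.
plan = [
    Step("area",
         lambda s: {"area_m2": s["side_m"] ** 2},
         lambda s: s["area_m2"] > 0),
    Step("stress",
         lambda s: {"stress_pa": s["load_n"] / s["area_m2"]},
         lambda s: s["stress_pa"] <= s["allowable_pa"]),
]

result = run_plan(plan, {"side_m": 0.02, "load_n": 50_000.0, "allowable_pa": 150e6})
```

Because every step carries its own check, a bad intermediate value stops the plan where it occurs instead of silently propagating to the final answer.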

If Gemini 3’s Deep Think lives up to its promises, it moves AI into a high-stakes domain. As we investigate this development, we must look beyond marketing and examine the competitive evidence and architectural underpinnings.

1. Validating the Claims: The New Benchmark Battleground

Any major model release is immediately scrutinized against its peers. The true measure of Gemini 3’s prowess will be its performance relative to OpenAI’s latest frontier models, such as GPT-4o, and Anthropic’s Claude models. We must look for corroborating data that places these models head-to-head in rigorous tests.

The key question is which benchmarks are used to measure complex reasoning. Are we talking about improved performance on MMLU (Massive Multitask Language Understanding), which tests broad knowledge, or on specialized mathematical reasoning and coding tests like GSM8K or HumanEval? For science and engineering, the latter are far more relevant.
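
For context on how a coding benchmark such as HumanEval is typically scored, results are usually reported as pass@k: the probability that at least one of k sampled solutions passes the unit tests. The sketch below implements the standard unbiased estimator, 1 - C(n-c, k)/C(n, k), for n samples of which c are correct. The sample counts in the usage lines are made up for illustration and are not results for any model.

```python
from math import comb

def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate from n samples with c correct, for 1 <= k <= n."""
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        return 1.0  # every size-k subset contains at least one correct sample
    return 1.0 - comb(n - c, k) / comb(n, k)

def benchmark_score(per_problem: list[tuple[int, int]], k: int) -> float:
    """Average pass@k over problems, each given as (n_samples, n_correct)."""
    return sum(pass_at_k(n, c, k) for n, c in per_problem) / len(per_problem)

# Made-up counts for three problems, 10 samples each.
counts = [(10, 10), (10, 3), (10, 0)]
score_k1 = benchmark_score(counts, k=1)
score_k5 = benchmark_score(counts, k=5)
```

The same model can look very different at k=1 and k=5, which is one reason a headline score is hard to compare without knowing the sampling setup.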

When analysts review these metrics, they seek evidence that the architectural improvements are not merely iterative, but transformative in their ability to maintain coherence over deep, multi-layered problem-solving sessions. The market keenly watches these comparisons because, in the world of AI investment, demonstrable, superior reasoning capability translates directly into market leadership.

(Readers interested in granular metric comparisons should track independent performance analyses, which break down the specific reasoning scores needed to confirm or refute these claims.)

2. The Scientific Horizon: From Data Crunching to Hypothesis Generation

The potential for LLMs in scientific discovery is perhaps the most exciting implication of a "Deep Think" mode. Currently, AI excels at synthesizing existing literature or analyzing vast datasets. The next frontier, which Gemini 3 aims to breach, is **autonomous scientific hypothesis generation and simulation validation**.

Imagine an AI not just reading every paper ever written on protein folding, but proposing novel, computationally feasible molecular configurations that human scientists haven't yet conceived. This requires an understanding of complex systems that mirrors specialized domain expertise.

Reports on the broader role of LLMs in R&D suggest that the bottleneck is shifting from data collection to high-fidelity simulation and interpretation. If Gemini 3 can reliably draft, execute, and interpret complex simulations (for instance, testing aerodynamic properties or designing new chemical catalysts), it could shorten R&D cycles substantially. Such a capability would be more than an efficiency gain; it would accelerate progress in fields like medicine and sustainable energy.

(The trajectory for AI in these fields is well-documented; articles exploring recent breakthroughs in AI-driven materials science offer a real-world view of the problems a model like Deep Think is designed to solve.)

3. The Architectural Secret: Tool Use and Planning Agents

How does a model move from "thinking" to "doing"? The secret often lies not just in the size of the model but in its ability to interact with the world—a concept known as **tool use** or **function calling**.

For an AI to solve a complex engineering problem, it cannot rely solely on its internal memory (its training data). It must be able to decide: "I need to calculate the stress coefficient; I will call the specialized internal Python interpreter with this code." Or, "I need to check the latest market data; I will call the live web search API."

Google DeepMind’s focus on "Deep Think" implies significant advances in the system architecture that manages these tool calls. If so, the model is getting better at planning and self-correction: it can map out a difficult task, realize at step three that it needs an external resource, use that resource, and then fold the result back into its original line of reasoning.
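
The loop described above can be sketched in a few lines of Python. Everything here is a hypothetical stand-in: `fake_model` is a scripted stub rather than a real Gemini call, and the `TOOLS` registry and message format are invented for illustration, not Google's function-calling API.

```python
from typing import Callable, Dict, List

# Hypothetical tool registry: tool name -> plain Python function.
TOOLS: Dict[str, Callable[..., float]] = {
    "axial_stress": lambda force_n, area_m2: force_n / area_m2,
}

def fake_model(history: List[dict]) -> dict:
    """Scripted stand-in for a model: request a tool first, then answer."""
    tool_results = [m for m in history if m["role"] == "tool"]
    if not tool_results:
        return {"type": "tool_call", "name": "axial_stress",
                "args": {"force_n": 50_000.0, "area_m2": 0.0004}}
    stress_mpa = tool_results[-1]["content"] / 1e6
    return {"type": "final", "text": f"Axial stress is {stress_mpa:.1f} MPa."}

def run_agent(question: str, max_turns: int = 5) -> str:
    """Alternate between model turns and tool executions until a final answer."""
    history: List[dict] = [{"role": "user", "content": question}]
    for _ in range(max_turns):
        reply = fake_model(history)
        if reply["type"] == "final":
            return reply["text"]
        result = TOOLS[reply["name"]](**reply["args"])  # execute the requested tool
        history.append({"role": "tool", "name": reply["name"], "content": result})
    raise RuntimeError("no final answer within max_turns")

answer = run_agent("What is the stress in the rod?")
```

The `max_turns` cap is a deliberate design choice: an agent that can call tools needs a hard stop so a confused plan cannot loop indefinitely.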

For CTOs and architects, this points toward the future of enterprise AI deployment: **Reasoning Agents**. These agents don't just answer questions; they execute long-running projects by orchestrating multiple specialized tools.

Practical Implications: Reshaping Industry Workflows

The ability to reliably handle complex logic and engineering code has immediate, tangible effects across several sectors:

For Software Development

If Gemini 3 can lead in coding benchmarks, it signals a future where AI moves from generating boilerplate code snippets to architecting entire microservices or debugging massive legacy systems. The implication is a massive acceleration in software delivery, provided developers trust the reasoning behind the suggested architecture. The bar for "good enough code" is rapidly rising.

For Engineering and Manufacturing

Engineers can offload the first-pass iteration on designs. Whether optimizing airflow over a turbine blade or refining the cooling loop for a data center, the AI may be able to explore the solution space far faster in early iterations than traditional computational fluid dynamics (CFD) workflows typically allow. This could democratize advanced simulation by making high-level optimization accessible to smaller firms.

For Financial Modeling

In finance, complex tasks involve intricate modeling, risk assessment, and regulatory compliance checks. A reasoning model that excels at symbolic math and detailed logical structure is crucial for building reliable, auditable quantitative trading strategies or complex derivative pricing models.

The Road Ahead: Accuracy, Safety, and Trust

This move toward high-stakes application introduces critical considerations that must be addressed by both providers and users. When an AI is tasked with designing a critical component, a failure in reasoning is no longer an annoyance—it’s a liability.

This is why the market sentiment regarding specialized reasoning models is so important. Investors and enterprise buyers are not just looking for speed; they are looking for **trustworthiness**. Google and its competitors face immense pressure to provide transparency into *how* the "Deep Think" process arrives at its conclusion. The ability to trace the chain of reasoning (interpretability) becomes as important as the final answer itself.

As these models become deeply integrated into the R&D pipelines of major corporations, the focus will shift from broad LLM deployment to rigorous **AI Validation and Verification (V&V)** protocols. Businesses must establish internal processes to test AI-generated engineering solutions against real-world constraints before deployment.
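
One way to picture such a process is an acceptance gate that never takes the model's numbers on trust. In the sketch below, verification recomputes a claimed value with an independent formula, and validation checks the design against a requirement. The `Proposal` fields, the tolerance, and the cantilever example are assumptions chosen for illustration, not an established V&V standard.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class Proposal:
    """Hypothetical AI-generated design: inputs plus the value the model claims."""
    load_n: float
    length_m: float
    youngs_modulus_pa: float
    second_moment_m4: float
    claimed_deflection_m: float

def cantilever_tip_deflection(p: Proposal) -> float:
    """Independent check: end-loaded cantilever, delta = F * L^3 / (3 * E * I)."""
    return p.load_n * p.length_m ** 3 / (3 * p.youngs_modulus_pa * p.second_moment_m4)

def review(p: Proposal, max_deflection_m: float, rel_tol: float = 0.01) -> List[str]:
    """Return a list of findings; an empty list means the proposal passes the gate."""
    findings = []
    recomputed = cantilever_tip_deflection(p)
    # Verification: does the claimed number match an independent calculation?
    if abs(recomputed - p.claimed_deflection_m) > rel_tol * abs(recomputed):
        findings.append(f"claimed deflection {p.claimed_deflection_m:.4g} m "
                        f"disagrees with recomputed {recomputed:.4g} m")
    # Validation: does the design meet the real-world requirement?
    if recomputed > max_deflection_m:
        findings.append(f"deflection {recomputed:.4g} m exceeds limit {max_deflection_m:.4g} m")
    return findings

good = Proposal(1000.0, 2.0, 200e9, 8.0e-6, claimed_deflection_m=1.667e-3)
bad = Proposal(1000.0, 2.0, 200e9, 8.0e-6, claimed_deflection_m=0.5e-3)
good_findings = review(good, max_deflection_m=5e-3)
bad_findings = review(bad, max_deflection_m=5e-3)
```

Returning a list of findings rather than a single pass/fail flag keeps the review auditable: each rejection records which check failed and by how much.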

Actionable Insights for Tomorrow's Leaders

For those building teams and setting technological strategy, the Gemini 3 upgrade is a clear signal:

- Invest in Tooling Literacy: Focus training not just on prompt engineering, but on how your teams can effectively integrate and verify the outputs from AI tool-use pipelines. Know which external tools your AI agents will need to call.

- Identify High-Leverage Reasoning Gaps: Pinpoint the bottlenecks in your R&D or engineering workflows that involve multi-step, logic-heavy processes. These are the areas where advanced reasoning models will offer immediate, asymmetrical advantages.

- Demand Benchmark Specificity: When evaluating vendors, press beyond generic MMLU scores. Demand proof-of-concept results on complex reasoning tasks from your specific domain. The "major reasoning" claim must be quantifiable in your context.
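
A domain-specific evaluation does not need heavy infrastructure to start. The sketch below scores any candidate solver, passed in as a plain function, against a small set of in-house problems with known numeric answers. The `Case` type, the tolerance, and the toy Ohm's-law cases are hypothetical placeholders for your own domain data.

```python
from dataclasses import dataclass
from math import isclose
from typing import Callable, Dict, List

@dataclass
class Case:
    prompt: str
    inputs: Dict[str, float]
    expected: float

def evaluate(solver: Callable[[Case], float], cases: List[Case],
             rel_tol: float = 1e-3) -> float:
    """Fraction of cases where the solver's answer is within rel_tol of expected."""
    passed = 0
    for case in cases:
        try:
            if isclose(solver(case), case.expected, rel_tol=rel_tol):
                passed += 1
        except Exception:
            pass  # a crash counts as a failed case
    return passed / len(cases)

cases = [
    Case("Current through a 10 ohm resistor at 5 V?", {"volts": 5.0, "ohms": 10.0}, 0.5),
    Case("Current through a 4 ohm resistor at 12 V?", {"volts": 12.0, "ohms": 4.0}, 3.0),
]

def reference_solver(case: Case) -> float:
    return case.inputs["volts"] / case.inputs["ohms"]

def sloppy_solver(case: Case) -> float:
    return case.inputs["volts"] * case.inputs["ohms"]  # deliberately wrong

accuracy_ok = evaluate(reference_solver, cases)
accuracy_bad = evaluate(sloppy_solver, cases)
```

In practice the solver would wrap a call to the vendor's model and parse its answer; keeping it as a plain function means the same cases can be rerun against each vendor unchanged.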

We are witnessing the maturation of AI capabilities. The race is no longer about who can sound the most human, but who can solve the hardest problems reliably. Google DeepMind’s emphasis on "Deep Think" suggests they believe the future of AI competition lies not in generalized intelligence, but in specialized, trustworthy, high-fidelity reasoning designed to push the boundaries of science and engineering.