The Deep Think Era: How Gemini 3's Scientific Leap Redefines AI's Role in Engineering and Discovery

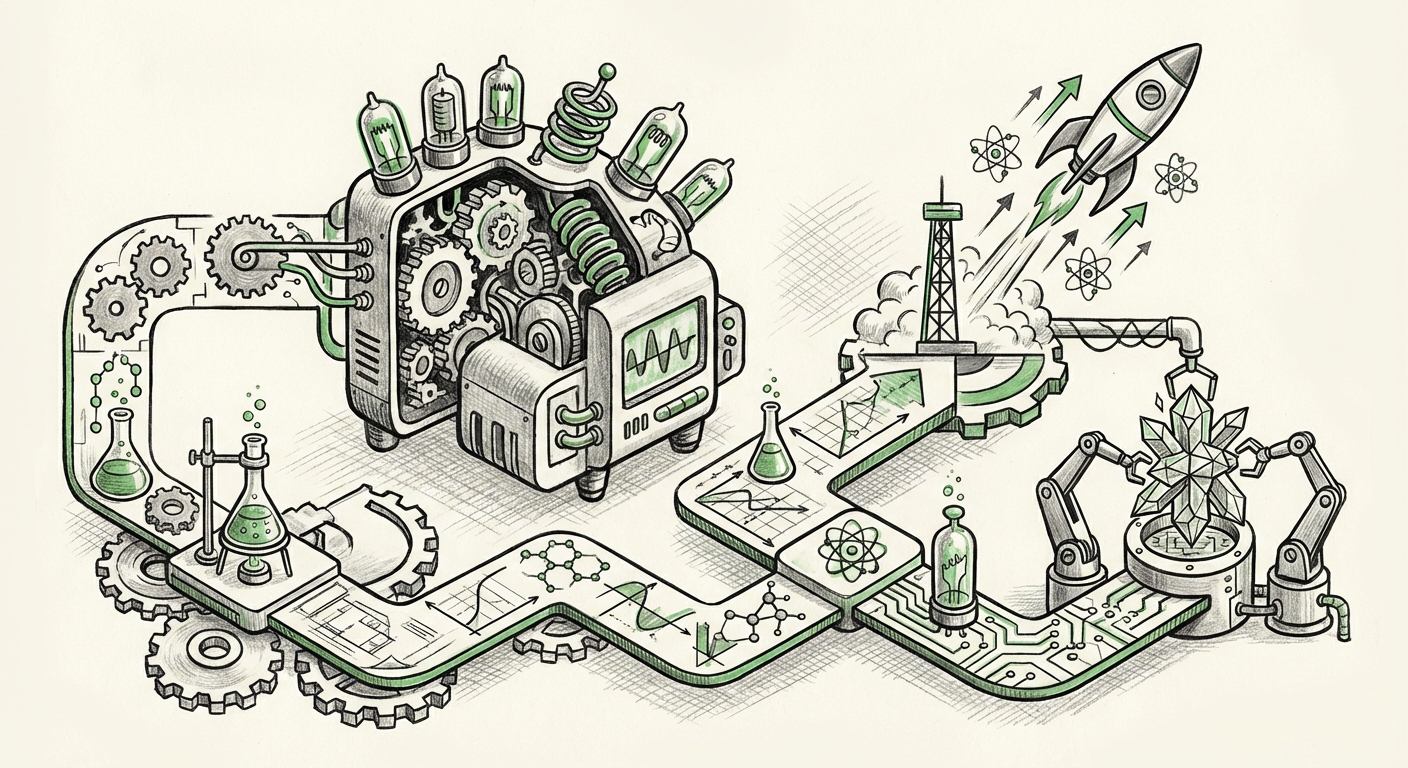

The recent announcement from Google DeepMind regarding the upgrade to Gemini 3, specifically its enhanced "Deep Think" mode focused on complex science and engineering tasks, marks a critical inflection point in the development of Artificial Intelligence. For years, the narrative around large language models (LLMs) has centered on their prowess in generating human-like text, summarizing data, and excelling at creative tasks. Now, the goalposts are moving dramatically. We are witnessing a decisive pivot from generalized intelligence to specialized, high-fidelity *reasoning* in domains that demand mathematical rigor and systematic logic.

The Shift from Eloquence to Expertise: What is "Deep Think"?

When a system like Gemini reaches state-of-the-art performance on major reasoning and coding benchmarks, that is rarely a small update; it usually reflects substantive changes in architecture, training, or how much computation the model spends reasoning before it answers. The name "Deep Think" is suggestive. It implies that the model is doing more than pattern-matching or retrieving information: it is working through sequential, verifiable steps of logic to solve novel, complex problems, the kind of thinking traditionally reserved for highly trained human experts.

Consider a high school student writing an essay versus a Ph.D. candidate deriving a new thermodynamic equation. Early LLMs were excellent at the former. The success of Gemini 3’s Deep Think suggests progress toward the latter. This capability relies on overcoming two major hurdles:

- Error Propagation Management: In multi-step reasoning (like solving a complex engineering problem), a small mistake early on can invalidate the final answer. "Deep Think" suggests the model can catch and correct its own errors, or at least maintain higher fidelity across many logical steps.

- Grounding in Formal Systems: Science and engineering rely on immutable laws (physics, mathematics, strict coding syntax). The model must deeply internalize these rules, rather than just knowing about them statistically.
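To make the error-propagation point concrete, here is a toy calculation under a simplifying assumption of our own: each step in a reasoning chain is independently correct with probability `p_step`, so the whole chain is correct with probability `p_step ** n_steps`. The self-correction model (a wrong step is caught with probability `detect_rate` and redone once) is likewise an illustrative assumption, not a description of how Deep Think works; the function names are hypothetical.

```python
def chain_success_probability(p_step: float, n_steps: int) -> float:
    """Probability that every one of n_steps independent steps is correct."""
    if not 0.0 <= p_step <= 1.0:
        raise ValueError("p_step must be in [0, 1]")
    if n_steps < 0:
        raise ValueError("n_steps must be non-negative")
    return p_step ** n_steps


def per_step_with_self_check(p_step: float, detect_rate: float) -> float:
    """Toy model of self-correction: a wrong step is caught with probability
    detect_rate and redone once, succeeding on the retry with probability p_step."""
    return p_step + (1.0 - p_step) * detect_rate * p_step


if __name__ == "__main__":
    for p in (0.99, 0.95, 0.90):
        print(f"p={p:.2f}: 20-step success = {chain_success_probability(p, 20):.3f}")
    # Same 95% per-step reliability, but with a checker that catches 80% of errors.
    p_eff = per_step_with_self_check(0.95, 0.80)
    print(f"with self-check: per-step {p_eff:.3f}, "
          f"20-step {chain_success_probability(p_eff, 20):.3f}")
```

Even at 95% per-step reliability, a 20-step chain comes out fully correct only about 36% of the time; in this toy model, catching 80% of errors lifts that to roughly 79%. That gap is why self-correction matters more than raw per-step accuracy on long derivations.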

Contextualizing the Leap: The Benchmark Battleground

Claims of superiority in AI are only as strong as the tests used to measure them. The initial reports cite leading standard benchmarks, but true validation requires a close look at evaluation methodology. As the focus of searches like **"LLM benchmarks for scientific reasoning MMLU vs MATH"** suggests, the industry is acutely aware that older tests are losing their ability to distinguish between frontier models like Gemini 3.

If the model merely excels on the classic MMLU (Massive Multitask Language Understanding) test, that shows breadth. Real scientific advancement, however, demands depth. We must look for evidence in tests that stress multi-step deduction, which often involves mathematics: GSM8K covers grade-school math word problems, MATH covers competition-level mathematics, and the same demands carry over to college-level physics problems. For technical audiences, the real metric is whether the model performs well on benchmarks that simulate real-world complexity, where solutions cannot be retrieved from the training data but must be *constructed* logically.

The Need for Specialized Metrics

The challenge facing researchers is that generic benchmarks are saturated. This forces labs either to develop proprietary, highly difficult tests (which outsiders cannot independently verify) or to rely on published, verifiable challenges in specific fields (which risk leaking into training data, a problem known as data contamination). Gemini 3’s gains will prove meaningful only if they hold up on these newer, more rigorous, domain-specific evaluations that test logical coherence over mere memorization.
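Data contamination, where benchmark test items leak into a model's training data, is a central concern when judging results on published challenges. One common, if crude, screen is to measure how many of a test item's word n-grams also appear in a reference corpus. The sketch below assumes simple lowercase alphanumeric tokenization, an in-memory corpus, and illustrative defaults (`n=8`, `threshold=0.5`) chosen for demonstration rather than taken from any lab's methodology; the function names are hypothetical. In practice the relevant corpus is the model's training data, which outside evaluators usually cannot inspect.

```python
import re
from typing import Iterable, List, Set, Tuple

NGram = Tuple[str, ...]


def word_ngrams(text: str, n: int) -> Set[NGram]:
    """Lowercased alphanumeric word n-grams of `text` (empty if it has fewer than n words)."""
    tokens = re.findall(r"[a-z0-9]+", text.lower())
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def overlap_ratio(item: str, corpus_ngrams: Set[NGram], n: int) -> float:
    """Fraction of the item's n-grams that also occur somewhere in the corpus."""
    item_ngrams = word_ngrams(item, n)
    if not item_ngrams:
        return 0.0
    return len(item_ngrams & corpus_ngrams) / len(item_ngrams)


def flag_contaminated(items: Iterable[str], corpus: Iterable[str],
                      n: int = 8, threshold: float = 0.5) -> List[int]:
    """Indices of benchmark items whose n-gram overlap with the corpus meets the threshold."""
    corpus_ngrams: Set[NGram] = set()
    for doc in corpus:
        corpus_ngrams |= word_ngrams(doc, n)
    return [i for i, item in enumerate(items)
            if overlap_ratio(item, corpus_ngrams, n) >= threshold]


if __name__ == "__main__":
    benchmark = [
        "A block slides down a frictionless incline of angle theta; "
        "find its acceleration along the slope.",
        "Compute the entropy change when two ideal gases mix isothermally "
        "at constant total volume.",
    ]
    scraped_corpus = [
        "Homework 3: a block slides down a frictionless incline of angle theta; "
        "find its acceleration along the slope. Show your work.",
    ]
    print(flag_contaminated(benchmark, scraped_corpus, n=5))  # [0]
```

Exact n-gram matching misses paraphrased leaks, so a low overlap score is only weak evidence that a benchmark is clean, while a high one is a strong signal worth investigating.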

The Competitive Dynamics: An AI Arms Race in Specialized Reasoning

The advancement of Gemini 3 immediately reshapes the strategic landscape for its main competitors. Rivals are not standing still, and a release like this is a direct catalyst for a response. Understanding where competitors like OpenAI stand, as queries such as **"OpenAI GPT-5 expected capabilities scientific coding"** suggest, is crucial for market forecasting.

If Google DeepMind has in fact embedded superior reasoning into Gemini 3, rivals will be under pressure to shift resources away from incremental general improvements and toward deep architectural specialization. For the software development sector, this means the focus shifts from models that can write *boilerplate* code to models that can design complex, robust systems from scratch.

This escalating competition ensures that specialized LLMs—those tailored for chemistry, finance, or high-level software architecture—will become the next major competitive front. The era of the single, monolithic, general-purpose model dominating all tasks might be receding, replaced by optimized engines built for specific, high-value cognitive loads.

Real-World Velocity: Implications for Science and Industry

The true measure of Gemini 3’s "Deep Think" mode is its potential to accelerate real-world discovery. This is where the focus moves beyond the lab and into industries like those explored in searches concerning **"Impact of advanced LLMs on chemical simulation and drug discovery."**

Accelerating Scientific Discovery

In fields like drug discovery, the bottleneck isn't just collecting data; it's generating and testing plausible hypotheses based on complex molecular interactions, protein folding, and reaction kinetics. An AI capable of deep scientific reasoning can:

- Analyze massive, disparate research papers to synthesize novel, testable hypotheses about drug targets.

- Estimate, potentially more accurately than current tools, the likely efficacy and toxicity of millions of candidate molecules.

- Streamline the theoretical design phase of novel materials by predicting atomic arrangements and stability.

For domains requiring deep coding expertise, such as building new operating systems or optimizing complex industrial control software, the implications are equally profound. Engineers will use these models not just for debugging but for architectural validation: asking the model to check the security or efficiency of a proposed system design against thousands of known failure modes.

The Convergence of Data: Multimodality as the Next Frontier

Deep reasoning in engineering cannot exist purely in text. A structural engineer needs to understand force vectors shown in a diagram; a chemist needs to interpret a spectrum. This makes the trend suggested by searches into **"Multimodality in AI for engineering design synthesis"** a necessity.

A true "Deep Think" mode for engineering must be multimodal. It needs to ingest CAD files, 3D models, circuit schematics, and complex mathematical visualizations, reason across these formats, and output refined plans or code. Gemini 3’s success in science likely relies on leveraging its multimodal roots to connect abstract concepts (textual theory) with concrete representations (code or simulation data). This convergence means that future AI assistants will not just describe how to build something; they will dynamically revise the blueprint itself based on real-time simulation feedback.

Actionable Insights for Technology Leaders

For businesses operating in technical sectors, the rise of deeply reasoned AI is not a future event; it is a present competitive mandate. Here are actionable takeaways:

- Audit Your Reasoning Bottlenecks: Identify the complex, multi-step analytical tasks within your R&D or engineering pipeline where human experts are slow or prone to systematic error. These are the first tasks ripe for integration with advanced reasoning models like Gemini 3.

- Invest in Validation Frameworks: Do not accept benchmark scores at face value. Develop internal, proprietary evaluation suites that mirror your hardest, most distinctive engineering challenges. If a competitor tunes its AI use against its own core technical problems while you rely on generic tooling, you will fall behind.

- Prioritize Multimodal Data Infrastructure: If your company uses specialized visual data (diagrams, schematics, sensor feeds), begin structuring this data for AI consumption now. The models that can reason across text, code, *and* visual engineering artifacts will offer the highest ROI.

- Upskill for AI Collaboration: The role of the engineer or scientist is changing from primary solver to expert validator. Train teams not just on *using* LLMs, but on how to prompt them for verifiable logical proofs and how to spot subtle, non-obvious errors introduced by deep reasoning chains.
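The validation and collaboration advice above can start small. A minimal sketch, assuming each internal task can be paired with a programmatic checker (here, a numeric answer within a relative tolerance; in practice it might be a unit test or a simulation run), could look like the following. `stub_model`, `DEMO_TASKS`, and the other names are hypothetical placeholders, not any vendor's API; the stub stands in for a real model call so the example runs offline.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class EvalTask:
    name: str
    prompt: str
    check: Callable[[str], bool]  # True if the model's answer is acceptable


def within_tolerance(expected: float, rel_tol: float = 1e-3) -> Callable[[str], bool]:
    """Build a checker that parses a numeric answer and compares it to `expected`."""
    def check(answer: str) -> bool:
        try:
            value = float(answer.strip())
        except ValueError:
            return False  # unparseable answers count as failures, not skips
        return abs(value - expected) <= rel_tol * max(abs(expected), 1e-12)
    return check


def run_suite(tasks: List[EvalTask], ask_model: Callable[[str], str]) -> Dict[str, bool]:
    """Query the model once per task and record pass/fail per task name."""
    return {task.name: task.check(ask_model(task.prompt)) for task in tasks}


def stub_model(prompt: str) -> str:
    """Hypothetical stand-in for a real model API so the example runs offline."""
    if "kinetic" in prompt:
        return "9.0"
    if "resistor" in prompt:
        return "forty ohms"  # right value, wrong format: the checker rejects it
    return ""


DEMO_TASKS = [
    EvalTask("kinetic_energy",
             "What is the kinetic energy in joules of a 2 kg mass moving at 3 m/s? "
             "Answer with a number.",
             within_tolerance(9.0)),
    EvalTask("series_resistance",
             "Two 20-ohm resistors are connected in series. What is the total "
             "resistance in ohms? Answer with a number.",
             within_tolerance(40.0)),
]


if __name__ == "__main__":
    results = run_suite(DEMO_TASKS, stub_model)
    print(results)  # {'kinetic_energy': True, 'series_resistance': False}
    print(f"pass rate: {sum(results.values()) / len(results):.0%}")  # pass rate: 50%
```

Requiring answers that a program can check, rather than grading free text by eye, is what makes such a suite repeatable. It also surfaces format failures like the second task, which a human reviewer might wave through.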

Conclusion: The Ascent of the Synthetic Engineer

The upgrade to Gemini 3’s Deep Think mode signals that the primary race in AI today is about cognitive depth, not just breadth. The industry is making real progress toward systems capable of the rigorous, systematic thought previously exclusive to specialized human cognition. This development moves LLMs from powerful assistants toward nascent synthetic colleagues capable of contributing meaningfully to foundational scientific and engineering endeavors.

As these systems become more adept at tackling the "hard problems"—those requiring deep logical chaining, complex coding, and cross-domain synthesis—we will see an acceleration in discovery that far outpaces what manual human effort alone could achieve. The future belongs to the organizations that can seamlessly integrate these powerful, reasoning engines into the core of their most complex technical workflows, ushering in an era defined by synthetic expertise.