The Reasoning Revolution: Why Google DeepMind's Gemini 3 "Deep Think" Signals AI's Move Beyond Fluency

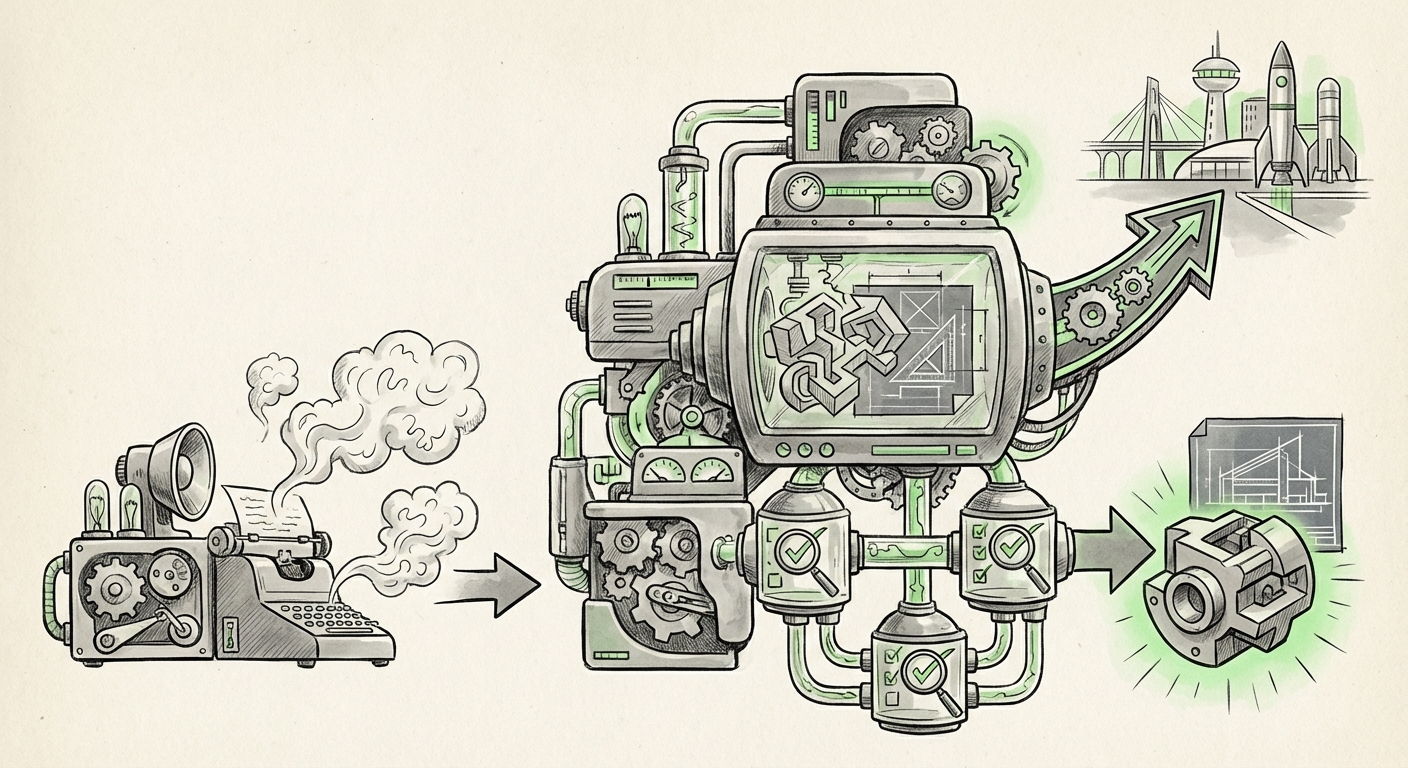

For years, public perception of artificial intelligence (AI), particularly large language models (LLMs), centered on fluency: the ability to write poetry, summarize articles, or carry on natural conversations. Impressive as that is, it amounts to highly sophisticated pattern matching. The industry is now pivoting toward reasoning, and Google DeepMind’s unveiling of an upgraded Gemini 3 with a "Deep Think" mode is one of the clearest signals of that shift.

This upgrade is not just about making the model slightly smarter; it signals a strategic commitment to reliable, deep-reasoning AI, the ingredient needed to tackle the world's most complex multi-step scientific and engineering challenges.

The End of the Fluency Era: Why Deep Thinking Matters

Think of earlier LLMs as a brilliant, fast-talking intern. They sound convincing and know a lot of facts. Ask them to design a microchip and they might give you a beautiful, eloquent essay on chip design. Ask them to actually *write the optimized Verilog code* for a novel gate array that must meet strict latency constraints, however, and they might introduce subtle but catastrophic logic errors.

The "Deep Think" mode directly addresses this gap. Its name suggests that the model dedicates more computation to each problem, perhaps through internal iterative loops or search over candidate solutions, working step by step and verifying intermediate conclusions before arriving at a final answer. This shift is crucial because in complex domains accuracy cannot be guessed; it must be derived.

Corroboration 1: The Unforgiving Arena of Benchmarks

Any significant AI advancement is immediately put to the test on public leaderboards. The key to validating Google's announcement lies in rigorous benchmark comparisons of Gemini 3 Deep Think against GPT-4 and Claude 3. If Gemini 3 leads on reasoning and coding benchmarks, that would indicate its internal logic engine is outperforming the current champions, GPT-4 and Claude 3 Opus.

For technical audiences, this means looking beyond general knowledge tests (like MMLU) and focusing intensely on benchmarks that demand:

- Advanced Logical Deduction: Multi-hop reasoning where conclusions build upon one another, testing consistency over dozens of steps.

- Complex Code Generation & Debugging: Benchmarks such as HumanEval, which scores whether generated functions pass unit tests, and specialized programming exams that demand not just functional code but code optimized for efficiency and correctness in specific environments.

- Scientific Problem Solving: Exams mimicking undergraduate or graduate-level physics, chemistry, or advanced mathematics problems that require applying established laws, not just recalling definitions.
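HumanEval-style coding results are usually reported as pass@k: the probability that at least one of k sampled solutions passes the unit tests. The sketch below implements the unbiased estimator described in the paper that introduced HumanEval, assuming n samples are generated per problem and c of them pass; the function names are our own.

```python
from math import comb

def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate for one problem.

    n: samples generated, c: samples that passed the tests, k: attempt budget.
    Computes 1 - C(n - c, k) / C(n, k): one minus the chance that k samples
    drawn without replacement are all failures.
    """
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        # Fewer than k failures exist, so any k draws must include a pass.
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)

def benchmark_pass_at_k(results: list[tuple[int, int]], k: int) -> float:
    """Average pass@k over a benchmark, each problem given as (n, c)."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)

print(pass_at_k(10, 5, 1))  # 0.5
print(pass_at_k(10, 2, 5))  # about 0.778
```

Because pass@k rewards any single success among k tries, a pass@1 score is the stricter measure of whether a model gets hard code right the first time.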

A superior score on these benchmarks would show that the extra computation behind "Deep Think" produces measurable gains, moving the conversation from "Is it good?" to "How much better is it at hard math?"
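Answering "How much better?" also means checking whether a gap between two scores exceeds sampling noise, since many reasoning benchmarks contain only a few hundred problems. A minimal sketch using the standard Wilson score interval for a proportion; the scores in the usage lines are made-up illustrations, not reported results for any model, and `clearly_better` is a hypothetical helper.

```python
from math import sqrt

def wilson_interval(correct: int, total: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for accuracy correct/total (z = 1.96 gives roughly 95%)."""
    if total <= 0 or not 0 <= correct <= total:
        raise ValueError("require total > 0 and 0 <= correct <= total")
    p = correct / total
    denom = 1 + z**2 / total
    center = (p + z**2 / (2 * total)) / denom
    margin = z * sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return center - margin, center + margin

def clearly_better(a: tuple[int, int], b: tuple[int, int]) -> bool:
    """Conservative check: True only if a's interval lies entirely above b's."""
    a_low, _ = wilson_interval(*a)
    _, b_high = wilson_interval(*b)
    return a_low > b_high

# Hypothetical scores on a 200-problem benchmark, as (correct, total).
print(wilson_interval(160, 200))
print(clearly_better((160, 200), (150, 200)))  # False: the intervals overlap
print(clearly_better((180, 200), (140, 200)))  # True
```

Requiring non-overlapping intervals is stricter than a formal significance test, which suits a quick sanity check on leaderboard claims.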

The Mechanism of Mastery: Tool Use and Augmentation

While internal computation is vital, breakthrough reasoning in modern AI rarely happens in isolation. A powerful engine still needs the right tools. This brings us to the second vital trend: the maturation of tool use and external augmentation for complex scientific tasks.

For an LLM to solve real-world engineering problems, it must reliably use external resources. It must know when to:

- Stop generating text and execute Python code in a code interpreter to run a fluid-dynamics calculation.

- Query a verified external database (like a materials science repository) for precise physical constants.

- Formulate a multi-step plan, execute Step 1, analyze the results, and use those results to inform Step 2 (Agentic Behavior).
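The plan, execute, analyze pattern above can be sketched as a loop in which the model picks a tool, the tool runs, and its result is fed back before the next decision. This is a toy under loud assumptions: `scripted_model` stands in for a real LLM call, the tool names and the constants table are hypothetical, and no real Gemini or tool-calling API is implied.

```python
from typing import Callable

# Hypothetical stand-in for a verified constants database (SI units).
CONSTANTS = {"g": 9.80665, "c": 299_792_458.0}

def multiply(args: str) -> str:
    """Toy 'code interpreter': multiplies comma-separated numbers.
    A real system would execute model-written code in a sandbox instead."""
    result = 1.0
    for part in args.split(","):
        result *= float(part)
    return str(result)

# Tool registry: each tool takes a string argument and returns a string observation.
TOOLS: dict[str, Callable[[str], str]] = {
    "lookup_constant": lambda name: str(CONSTANTS[name]),
    "calculator": multiply,
}

def scripted_model(observations: list[str]) -> tuple[str, str]:
    """Stand-in for an LLM: chooses the next action from the observations so far."""
    if not observations:
        return "lookup_constant", "g"        # Step 1: fetch g from the database
    if len(observations) == 1:
        g = observations[0]
        return "calculator", f"0.5,{g},2,2"  # Step 2: d = 0.5 * g * t^2 with t = 2 s
    return "final", observations[-1]         # Step 3: report the result

def run_agent(max_steps: int = 5) -> str:
    observations: list[str] = []
    for _ in range(max_steps):
        action, arg = scripted_model(observations)
        if action == "final":
            return arg
        observations.append(TOOLS[action](arg))  # each result informs the next step
    raise RuntimeError("agent did not finish within max_steps")

print(run_agent())  # distance fallen in 2 s, in metres
```

The `max_steps` cap is a deliberate design choice: an agent loop without a step budget can spin indefinitely if the model never decides it is done.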

If Gemini 3’s "Deep Think" successfully integrates these tools (executing code, debugging its own failed attempts, and then rerunning the calculation), it moves from being a sophisticated calculator to a reliable scientific assistant. For software architects, this means less time spent patching the model’s output and more time trusting its proposed solutions.

Transforming Industry: The Real-World Impact of Reasoning AI

When an LLM masters complex, verifiable reasoning, the implications spill out of the lab and directly into high-value industries. The sectors that rely on iterative, high-stakes problem-solving stand to be revolutionized.

Corroboration 3: Accelerating R&D from Molecules to Metal

The ability to handle complex science directly targets the bottlenecks in research and development (R&D), in engineering as well as in the lab. Consider the pharmaceutical industry. Drug discovery is incredibly expensive and slow, bottlenecked by trial-and-error experimentation. An LLM that can reliably reason about molecular interactions, predict toxicity from complex structural analysis, and optimize synthesis pathways could shave years and billions of dollars off development.

The same holds for engineering. Designing a next-generation battery requires modeling thousands of chemical reactions under varying stresses. If Gemini 3 can manage these simulations with greater accuracy and speed than current computational methods, innovation in energy storage and aerospace materials design could accelerate dramatically.

For business leaders in these fields, the actionable insight is clear: investing in custom fine-tuning or application layers atop reasoning-capable models is no longer a futuristic gamble; it is rapidly becoming a competitive necessity.

The Competitive Crucible: Navigating the Frontier Race

Google DeepMind’s advancement does not happen in a vacuum. It is both a response to and a challenge against the work being done at OpenAI and Anthropic. Reasoning is the new battleground.

Corroboration 4: Watching the Rivals Keep Pace

Understanding the pressure on Google requires looking at competitor activity, from OpenAI's next steps after GPT-4 to Anthropic's work on advanced reasoning. Anthropic has already made significant claims about Claude 3 Opus's analytical capabilities and long-context proficiency, which suit tasks such as ingesting massive engineering specification documents.

The market views this as a continuous leapfrogging contest. When one lab demonstrates a breakthrough in reasoning (via a "Deep Think" mode or a similar proprietary technique), the others are forced to respond immediately, often by leveraging their own research breakthroughs in scaling laws or training efficiency.

This intense competition is good news for end users. It drives rapid commoditization of advanced features: what was cutting-edge reasoning six months ago becomes standard today, pushing the development frontier further out toward true AGI capabilities.

Practical Implications: What Businesses Should Do Now

For organizations looking to leverage this new generation of reasoning AI, the approach must shift from basic integration to strategic deployment.

1. Embrace Verification, Not Blind Trust

Even with "Deep Think," LLMs are not infallible oracles. For engineering and scientific tasks, you must build verification loops. If the AI proposes a design or a block of code, the next step in the workflow should involve testing that output against a known simulation environment or established physical laws. This hybrid human-AI verification pipeline is the sweet spot for maximum productivity gain.
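A verification step of this kind can be as simple as checking an AI-proposed function against reference values from an established law before it enters the workflow. A minimal sketch, assuming the model proposed `proposed_fall_distance` (a hypothetical name) and using the textbook free-fall relation d = g * t^2 / 2 as the reference; the candidate contains a deliberate bug to show how a failure surfaces.

```python
import math
from typing import Callable

G = 9.80665  # standard gravity, m/s^2

def reference_fall_distance(t: float) -> float:
    """Established law: distance fallen from rest after t seconds, ignoring drag."""
    return 0.5 * G * t**2

def proposed_fall_distance(t: float) -> float:
    """Hypothetical model output with a subtle bug (t instead of t**2)."""
    return 0.5 * G * t

def verify(candidate: Callable[[float], float],
           reference: Callable[[float], float],
           inputs: list[float],
           rel_tol: float = 1e-9) -> list[float]:
    """Return the inputs where candidate disagrees with reference; empty means it passed."""
    return [x for x in inputs if not math.isclose(candidate(x), reference(x), rel_tol=rel_tol)]

failures = verify(proposed_fall_distance, reference_fall_distance, [0.0, 1.0, 2.0, 3.0])
print(failures)  # [2.0, 3.0]
```

Note that the bug slips past t = 0 and t = 1, where t and t**2 coincide; test inputs should cover more than the trivial cases.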

2. Prioritize Problem Decomposition

Complex problems that previously required days of specialized analysis can now be broken down into smaller, sequential reasoning steps suitable for an LLM. Teach your teams to write prompts that make the AI show its work, outputting the steps, the calculations, and the resulting data before delivering the final answer. This taps directly into the mechanism the "Deep Think" mode is designed to enhance.
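One way to make "show its work" enforceable is to request a fixed output layout and reject responses that skip the intermediate steps. A sketch under assumptions: the `Step N:` / `Final answer:` format, `PROMPT_TEMPLATE`, and `parse_worked_answer` are our own conventions, not a format any model requires.

```python
import re

PROMPT_TEMPLATE = (
    "Solve the problem below. Write each intermediate step on its own line as "
    "'Step N: <reasoning and calculation>', then finish with one line "
    "'Final answer: <value>'.\n\nProblem: {problem}"
)

STEP_RE = re.compile(r"^Step (\d+):\s*(.+)$", re.MULTILINE)
FINAL_RE = re.compile(r"^Final answer:\s*(.+)$", re.MULTILINE)

def parse_worked_answer(response: str, min_steps: int = 2) -> tuple[list[str], str]:
    """Split a response into its steps and final answer; raise if the work is missing."""
    steps = STEP_RE.findall(response)
    numbers = [int(n) for n, _ in steps]
    if len(steps) < min_steps or numbers != list(range(1, len(steps) + 1)):
        raise ValueError("response does not show numbered intermediate steps")
    final = FINAL_RE.search(response)
    if final is None:
        raise ValueError("response has no 'Final answer:' line")
    return [text for _, text in steps], final.group(1).strip()

prompt = PROMPT_TEMPLATE.format(problem="A 2 kg mass moves at 3 m/s. What is its kinetic energy?")
reply = "Step 1: KE = m * v^2 / 2\nStep 2: KE = 2 * 9 / 2 = 9\nFinal answer: 9 J"
print(parse_worked_answer(reply))
```

Rejecting unstructured replies at parse time gives the verification loop described earlier concrete intermediate values to check.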

3. Re-Evaluate Your Talent Needs

The value of a pure executor (someone who merely carries out known procedures) will diminish. The value of the problem framer, the person who can ask the AI the right, precise, and deeply technical questions, will skyrocket. Upskilling in prompt engineering, specifically for scientific and coding tasks, is now a core competency.

Looking Ahead: The Road to Agentic Systems

The move to deep reasoning is the essential bridge between current generative AI and truly autonomous agentic systems. An autonomous agent capable of running a software project, managing a complex experiment, or navigating a logistics network requires near-perfect reliability in its internal logic.
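A short calculation shows why "near-perfect" is not an exaggeration. Assume, purely for illustration, that each step of an n-step task succeeds independently with probability p; the whole task then succeeds with probability p^n:

$$
P(\text{task succeeds}) = p^{n}, \qquad 0.99^{50} \approx 0.605, \qquad 0.999^{50} \approx 0.951
$$

Under that simplifying independence assumption, a 1% per-step error rate causes roughly two in five 50-step runs to fail, while cutting the error rate to 0.1% brings failures down to about one in twenty.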

Gemini 3’s focus suggests that the next iteration of AI won't just suggest answers; it will confidently assert verified solutions built through deliberate, methodical thought. We are moving from a world where AI helps us draft documents to one where AI actively designs and builds complex, functional realities. The race is no longer about who can generate the most text, but who can reliably solve the hardest problems.