The Interpretability Imperative: Why Understanding AI Black Boxes is the Future of Trust and Innovation

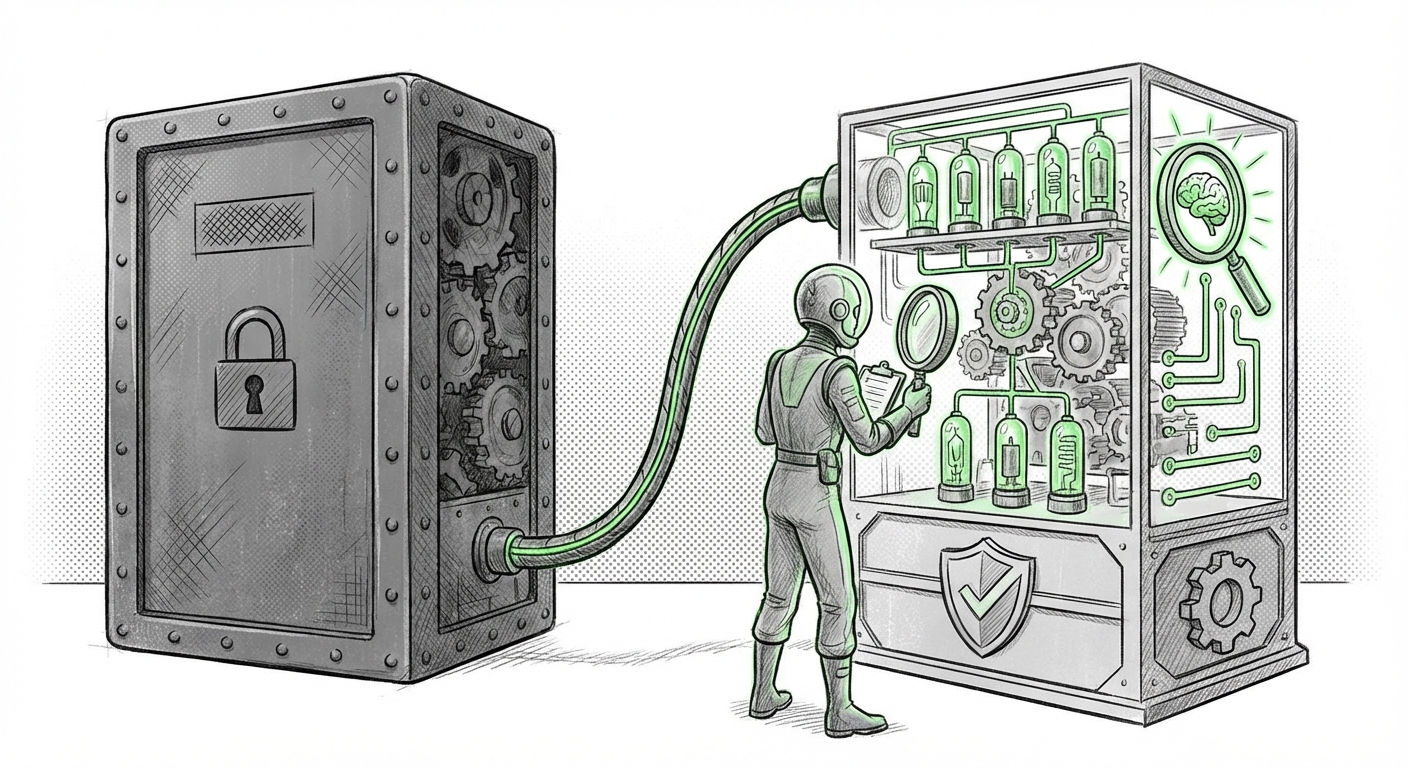

The rapid acceleration of Artificial Intelligence, particularly through massive Large Language Models (LLMs) and deep neural networks, has brought us to an inflection point. We are building systems that can perform tasks once thought impossible, but in doing so we have deepened the mystery surrounding how they reach their decisions. This opacity, the "black box" problem, is no longer a niche technical annoyance; it is shaping up to be one of the defining challenges of the next decade in AI development.

Recent coverage, such as the spotlight on the specialized lab Goodfire in "The Sequence AI of the Week #805," suggests that we are entering an era of AI interpretability, a field often grouped under the label explainable AI (XAI). This isn't just academic curiosity; it is the necessary foundation for trustworthy, safe, and compliant deployment of advanced AI across society.

The Unavoidable Shift: From Performance to Understanding

For years, the focus in AI has been almost singular: optimization for performance metrics. If a model predicted better, classified faster, or generated more coherent text, its internal workings were secondary. However, as AI moves from recommending movies to driving cars, diagnosing diseases, or influencing financial markets, performance alone is insufficient. We need to know why the decision was made.

This drive for understanding is being fueled by three interconnected forces:

- Technical Complexity: Modern models possess billions, sometimes trillions, of parameters. They learn emergent, complex patterns that are far removed from human-readable logic.

- Regulatory Pressure: Governments worldwide are enacting rules that demand accountability for automated decisions.

- Safety and Alignment: Ensuring that increasingly powerful AI systems behave exactly as intended, without developing dangerous or unintended behaviors, requires deep insight into their internal logic.

To fully grasp the scope of this interpretability era, it is vital to look beyond singular project spotlights and examine the supporting technological, regulatory, and safety ecosystems developing around XAI.

1. The Technical Battleground: Mapping the Black Box

When we talk about interpretability techniques, we are looking at a spectrum. On one end are post-hoc explanations: methods applied after the model has made a decision to approximate why it happened (for example, highlighting the input words that most influenced a text prediction). At the other end, on the cutting edge, is a much deeper pursuit: understanding the computation inside the model itself.
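To make the post-hoc end of the spectrum concrete, here is a minimal sketch of occlusion-based word attribution: remove one word at a time and record how much the model's score changes. The scoring function `toy_score` and its keyword weights are hypothetical stand-ins for a real model, chosen only so the example runs on its own.

```python
from typing import Callable, List, Tuple

# Hypothetical stand-in for a real model: a keyword-weighted sentiment score.
TOY_WEIGHTS = {"great": 2.0, "good": 1.0, "bad": -1.0, "terrible": -2.0}


def toy_score(tokens: List[str]) -> float:
    """Sum of per-word weights; unknown words contribute 0."""
    return sum(TOY_WEIGHTS.get(tok.lower(), 0.0) for tok in tokens)


def occlusion_attribution(
    tokens: List[str], score_fn: Callable[[List[str]], float]
) -> List[Tuple[str, float]]:
    """Importance of each token = drop in score when that token is removed."""
    baseline = score_fn(tokens)
    attributions = []
    for i, tok in enumerate(tokens):
        reduced = tokens[:i] + tokens[i + 1:]  # the input without token i
        attributions.append((tok, baseline - score_fn(reduced)))
    return attributions


if __name__ == "__main__":
    sentence = "The plot was great but the ending was terrible".split()
    for token, importance in occlusion_attribution(sentence, toy_score):
        print(f"{token:>10}  {importance:+.1f}")
```

The method treats the model purely as a function from input to score, which is exactly why it is called post-hoc: it says which words mattered, not how the model combined them.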

A survey of state-of-the-art interpretability techniques as of 2024 reveals a clear trend: researchers are striving for inherent interpretability and mechanistic understanding. This matters for Data Scientists and ML Engineers, many of whom are no longer satisfied with pointing to a feature importance score and moving on; they want something closer to circuit diagrams for computation.

The technical audience demands rigor. Attribution methods such as SHAP or LIME are valuable for debugging, but their explanations can be unstable or misleading when applied to highly non-linear deep learning systems, because they summarize input-output behavior rather than the computation in between. The modern requirement is to deconstruct the network itself: to find the specific group of neurons and connections (the "circuit") responsible for identifying concepts like "dog," "author intent," or "financial risk." This depth is what dedicated labs are targeting.
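The gap between attribution and mechanism can be illustrated with a small sketch. The code below computes exact Shapley values by brute force over all feature orderings, which is the quantity that SHAP-style methods approximate; it does not use the `shap` library's API. The model `toy_model` and its three features are hypothetical, chosen to include one interaction term.

```python
from itertools import permutations
from typing import Callable, Dict

Features = Dict[str, float]


def toy_model(x: Features) -> float:
    """Hypothetical model: one additive term plus one interaction term."""
    return 3.0 * x["a"] + 2.0 * x["b"] * x["c"]


def exact_shapley(
    model: Callable[[Features], float], x: Features, baseline: Features
) -> Features:
    """Average each feature's marginal contribution over every ordering.

    Brute force: cost grows factorially with the number of features,
    so this is only practical for a handful of inputs.
    """
    names = list(x)
    totals = {name: 0.0 for name in names}
    orderings = list(permutations(names))
    for order in orderings:
        current = dict(baseline)      # start from the reference input
        previous = model(current)
        for name in order:            # switch features on one at a time
            current[name] = x[name]
            updated = model(current)
            totals[name] += updated - previous
            previous = updated
    return {name: total / len(orderings) for name, total in totals.items()}


if __name__ == "__main__":
    point = {"a": 1.0, "b": 1.0, "c": 1.0}
    zeros = {"a": 0.0, "b": 0.0, "c": 0.0}
    print(exact_shapley(toy_model, point, zeros))
```

For this input the credit for the `b * c` term is split evenly between `b` and `c`. The attribution is faithful as a summary, yet nothing in it reveals that the two features are multiplied together, which is the kind of structural question circuit-level analysis tries to answer.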

What This Means for the Future of AI and How It Will Be Used:

Technically, better interpretability leads directly to better debugging. If an autonomous system makes an error, engineers could, instead of retraining on millions of new data points, locate the flawed internal logic, fix that component, and deploy a safer patch faster. AI iteration cycles would then become smarter, focusing on quality of understanding rather than sheer volume of data.

2. The Regulatory Hammer: Interpretability as a Legal Mandate

The transition from optional transparency to mandatory accountability is perhaps the most significant driver reshaping business strategy today. The primary example is the European Union’s AI Act.

The Act's transparency and oversight requirements mean that for any system deemed "high-risk" (such as those used in hiring, credit scoring, or critical infrastructure), opaque decision-making carries legal consequences: providers must document how the system works and enable human oversight, and non-compliance can draw penalties. This forces organizations that were previously content with proprietary black boxes to invest heavily in XAI competence.

For AI Governance Officers and Legal Professionals, interpretability shifts from a ‘nice-to-have’ feature to a core compliance requirement. They must be able to demonstrate, often retroactively, that the AI adhered to fairness standards and did not discriminate.
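As one illustration of what a retroactive fairness check might look like, the sketch below compares approval rates across groups in a decision log and reports the ratio of the lowest rate to the highest. The article does not name a metric, so this selection-rate ratio is an illustrative choice, and the log format, group labels, and function names are all hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def selection_rates(decisions: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Approval rate per group from (group, approved) records."""
    counts = defaultdict(lambda: [0, 0])  # group -> [approved, total]
    for group, approved in decisions:
        counts[group][0] += int(approved)
        counts[group][1] += 1
    return {group: ok / total for group, (ok, total) in counts.items()}


def selection_rate_ratio(rates: Dict[str, float]) -> float:
    """Lowest group rate divided by highest; 1.0 means equal rates."""
    highest = max(rates.values())
    if highest == 0.0:
        return 1.0  # nobody was approved in any group, so rates are equal
    return min(rates.values()) / highest


if __name__ == "__main__":
    # Hypothetical audit log: group A approved 8 of 10, group B 4 of 10.
    log = [("A", True)] * 8 + [("A", False)] * 2
    log += [("B", True)] * 4 + [("B", False)] * 6
    rates = selection_rates(log)
    print(rates, selection_rate_ratio(rates))
```

A low ratio does not by itself prove discrimination, and a high one does not rule it out; it flags where a deeper explanation of individual decisions is needed.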

What This Means for the Future of AI and How It Will Be Used:

We will see the rise of the "Compliance-by-Design" model. Future AI products deployed in regulated sectors must have their interpretability layers built in from the ground up, not bolted on later. This creates a new market for certified interpretability tools and audits. Companies that proactively establish robust XAI pipelines will gain a massive competitive edge in global markets governed by strict consumer protection laws.

3. The Frontier: Mechanistic Interpretability and Alignment

The most profound challenge facing AI researchers today is ensuring that increasingly capable models remain aligned with human values. This concern drives the intense focus on mechanistic interpretability (MI), pursued by leading labs like Anthropic and DeepMind.

MI is the engineering effort to reverse-engineer the AI’s internal computations. Instead of asking, "What input caused this output?" MI asks, "Which learned weights, activations, and algorithmic steps inside the network led to this outcome?" This approach is essential when dealing with emergent behaviors that were never explicitly trained for, behaviors that could potentially be harmful or deceptive.

For Safety Engineers tracking these efforts, the ability to look inside an LLM and identify a "deception circuit" or a "power-seeking subroutine" before it can be activated is the holy grail of AI safety. The work by Anthropic on dissecting LLM features is a prime example of this crucial endeavor.
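One of the simplest causal probes in this style of work is ablation: switch off an internal unit and measure how the output changes. The sketch below applies zero-ablation to a tiny hand-built ReLU network. The weights are hypothetical and were set by hand so that one hidden unit fires only when both inputs are active; research on real models relies on more refined tools than this.

```python
from typing import List, Optional

# Hand-set weights for a hypothetical 2-input, 3-hidden-unit, 1-output network.
W_HIDDEN = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
B_HIDDEN = [0.0, 0.0, -1.0]
W_OUT = [0.5, 0.5, 4.0]


def relu(value: float) -> float:
    return max(0.0, value)


def forward(x: List[float], ablate: Optional[int] = None) -> float:
    """Run the network, optionally forcing one hidden unit's activation to 0."""
    hidden = []
    for i, (weights, bias) in enumerate(zip(W_HIDDEN, B_HIDDEN)):
        activation = relu(weights[0] * x[0] + weights[1] * x[1] + bias)
        hidden.append(0.0 if i == ablate else activation)
    return sum(w * h for w, h in zip(W_OUT, hidden))


def ablation_effects(x: List[float]) -> List[float]:
    """Output change caused by zeroing each hidden unit in turn."""
    baseline = forward(x)
    return [baseline - forward(x, ablate=i) for i in range(len(W_HIDDEN))]


if __name__ == "__main__":
    print(ablation_effects([1.0, 1.0]))  # both inputs active
    print(ablation_effects([1.0, 0.0]))  # only the first input active
```

In this toy network, hidden unit 2 accounts for most of the output when both inputs are on and contributes nothing when only one is, which marks it as the component implementing the "both present" behavior. Locating a unit like this by intervention, rather than by correlation with inputs, is the basic move behind circuit discovery.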

What This Means for the Future of AI and How It Will Be Used:

As models approach AGI-level capabilities, interpretability becomes a core part of existential risk management. MI research will directly inform the safety guardrails of the next generation of foundation models. If we cannot understand the internal workings of a system more intelligent than us, we cannot reliably control it. The future of powerful AI therefore depends heavily on the success of mechanistic interpretability.

4. The Business Case: Trust as the Ultimate Differentiator

While regulation forces compliance and technical teams seek precision, the broader market is driven by a simpler need: trust. The question of how to build trust in black box AI systems beyond mere compliance cuts to the core of AI adoption.

Consumers, employees, and partners will only integrate AI deeply into their lives and workflows if they trust its outputs. If an AI denies a crucial loan application or flags an employee for poor performance without a clear, satisfying explanation, users will abandon the technology, regardless of its accuracy.

This creates a competitive advantage for organizations that adopt interpretability not as a burden, but as a feature. Trustworthy AI—AI that can explain itself clearly and justify its conclusions—will command higher adoption rates and greater enterprise value.

What This Means for the Future of AI and How It Will Be Used:

We will see XAI capabilities marketed like traditional software features. "Our platform offers auditable, explainable risk assessment models" will become a necessary sales pitch. Business Strategists must recognize that explainability unlocks adoption in sensitive fields (healthcare, finance, legal), turning a technical constraint into a revenue stream.

Practical Implications and Actionable Insights

The current moment demands a multi-faceted approach. Organizations cannot afford to wait for regulatory clarity or complete technical breakthroughs. Action must be taken now:

- Establish an XAI Task Force: Immediately create cross-functional teams comprising ML engineers, legal/compliance officers, and business stakeholders. Their job is to map where high-risk AI is currently operating and prioritize those systems for early interpretability assessments.

- Prioritize Tooling Over Heuristics: Invest in robust, scalable XAI platforms rather than relying on ad-hoc coding solutions (like simple sensitivity analyses). Seek tools capable of supporting both feature attribution (for business users) and circuit tracing (for researchers).

- Demand Transparency from Vendors: When procuring third-party models, explicitly ask vendors about their interpretability methodologies and compliance documentation regarding fairness and auditability. If they cannot answer, they represent an unacceptable regulatory liability.

- Integrate Safety Early: For teams building novel models, adopt the principles of mechanistic interpretability early in the design phase. It is far harder (and more expensive) to retrofit deep understanding into a trillion-parameter model than to bake foundational traceability into a smaller one.

The attention now focused on labs like Goodfire is a signal flare: the age of the unquestioned black box is ending. The future of successful, scalable, and safe AI deployment relies not just on making models smarter, but on making them transparent. This confluence of technical rigor, legal mandate, and market demand ensures that interpretability will cease to be a side topic and become the central pillar of responsible innovation.