The Algorithmic Watchdog: How AI is Transforming Corporate Surveillance and Employee Trust

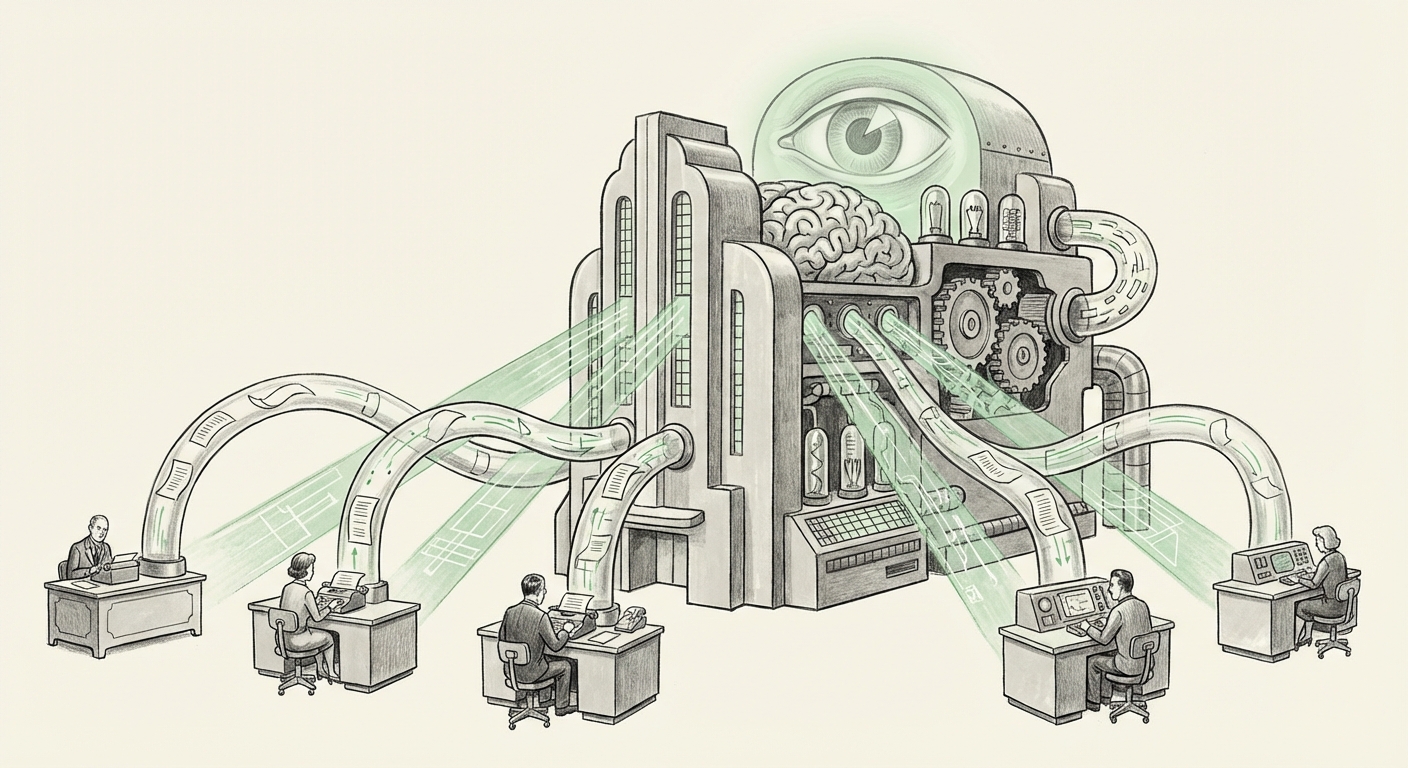

The narrative surrounding Large Language Models (LLMs) like ChatGPT has primarily focused on their creative potential, productivity gains, and disruption of white-collar work. However, a more immediate and perhaps darker application is emerging in the realm of corporate security. Recent reports suggest that leading AI developers themselves are deploying highly specialized versions of these powerful tools not to build the future, but to police their present—specifically, hunting down internal information leakers by scanning employee communications.

This development is a profound turning point. It signals the rapid adoption of advanced AI for internal governance and control, marking the transition from simple keyword monitoring to sophisticated, contextual surveillance. This shift necessitates a critical look at the broader technological trends, the ethical tightrope companies must walk, and what this means for the future relationship between employees and employers in the digital age.

The New Frontier: AI-Powered Internal Monitoring

When we discuss AI in security, we usually think of external threats—stopping hackers or identifying malware. The focus has now moved inward. The use of a "special version" of ChatGPT to sift through the massive, unstructured data streams of modern corporate life (emails, instant messages, code commits) is not just an incremental security upgrade; it is a qualitative leap.

Moving Beyond Keywords: Contextual Awareness

Traditional insider-threat monitoring and data loss prevention (DLP) programs rely largely on static rules. If an employee types "secret project file location," the system flags it. But sophisticated leakers are savvy: they use coded language, discuss information in fragments across multiple channels, or leak proprietary concepts without using specific forbidden terms.
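To make the limitation concrete, here is a minimal sketch of static-rule flagging. The pattern list and the sample messages are hypothetical, invented purely for illustration; real rule sets are far larger, but they share the same blind spot.

```python
import re

# Hypothetical watchlist of forbidden phrases (illustrative only).
FORBIDDEN_PATTERNS = [
    re.compile(r"secret project file location", re.IGNORECASE),
    re.compile(r"\bconfidential\b", re.IGNORECASE),
]


def flag_message(text: str) -> bool:
    """Return True if any static pattern matches the message text."""
    return any(pattern.search(text) for pattern in FORBIDDEN_PATTERNS)


# Two invented messages: one uses a watched phrase, one uses coded language.
messages = [
    "Here is the secret project file location you asked for.",
    "The thing we talked about is in the usual place, grab it tonight.",
]
flags = [flag_message(m) for m in messages]
```

The first message is flagged and the second is not, even though a human reader might find the second one more suspicious. That gap is what contextual models are meant to close.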

This is where the LLM excels. By fine-tuning the model on large amounts of internal context (company jargon, project names, and past communications), the specialized system can approximate a human reviewer's reading of intent. It can correlate a seemingly innocent mention in a Slack channel about "the blue widget specs" with a subsequent draft document shared via encrypted email, flagging the apparent *intent* to leak rather than just the specific words used. This capability reflects a broader technological trend: the integration of LLMs into internal monitoring systems designed to defend intellectual property against insider threats.
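The cross-channel correlation described above can be illustrated with a deliberately simple sketch that does not use an LLM. It assumes an upstream model has already assigned a `topic` label to each message; the `Event` type, the channel names, and the 24-hour window are all hypothetical choices, not a description of any real system.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical set of channels treated as leaving the company boundary.
EXTERNAL_CHANNELS = {"external_email"}


@dataclass(frozen=True)
class Event:
    user: str
    channel: str         # e.g. "slack" or "external_email" (assumed labels)
    timestamp: datetime
    topic: str           # assumed to be assigned upstream by a model


def correlate(events, window=timedelta(hours=24)):
    """Pair each internal mention with a later external event by the
    same user on the same topic, within the given time window."""
    ordered = sorted(events, key=lambda e: e.timestamp)
    pairs = []
    for i, first in enumerate(ordered):
        if first.channel in EXTERNAL_CHANNELS:
            continue  # only internal mentions start a pair
        for later in ordered[i + 1:]:
            if later.timestamp - first.timestamp > window:
                break  # events are sorted, so nothing later can match
            if (later.user == first.user
                    and later.topic == first.topic
                    and later.channel in EXTERNAL_CHANNELS):
                pairs.append((first, later))
    return pairs


events = [
    Event("alice", "slack", datetime(2024, 1, 1, 9), "blue_widget"),
    Event("bob", "slack", datetime(2024, 1, 1, 10), "blue_widget"),
    Event("alice", "external_email", datetime(2024, 1, 1, 15), "blue_widget"),
    Event("alice", "external_email", datetime(2024, 1, 5, 9), "blue_widget"),
]
pairs = correlate(events)
```

Only the Slack mention and the same-day external email from the same user are paired. A pairing like this is a lead for review, not evidence of intent; many legitimate workflows produce the same pattern.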

The Technical Implementation: A Customized LLM

The phrase "special version" is key. This isn't just running the consumer-facing ChatGPT against internal emails. It implies a secure, potentially air-gapped deployment where the model has been customized, or fine-tuned, on proprietary internal data. Fine-tuning for this purpose involves training the general model to become an expert in the specific nuances of that company’s communications.

For engineering teams, this means the LLM can distinguish between a standard engineering discussion and a conversation where sensitive architecture details are being solicited or shared outside approved channels. For ML practitioners, this raises the question of governance: how do you secure the model that is designed to secure your secrets, especially when that model has been exposed to highly sensitive metadata and personal communication?

The Erosion of Trust: Ethical and Cultural Implications

The moment a company deploys an AI agent to read every message—even if it's only flagging potential risks—the dynamic of employee trust fundamentally shifts. Employees, knowing an impartial, tireless, and context-aware intelligence is watching their every word, are likely to self-censor. This chills open communication, which is ironically the lifeblood of innovation in high-tech fields.

The Policy Vacuum and Legal Gray Zones

Who sets the rules for algorithmic monitoring? Legal experts examining generative AI policy and employee data privacy find that existing regulations often lag behind the technology. In many jurisdictions, employers have significant rights to monitor communications conducted on company-owned equipment and networks. However, the *depth* of this monitoring (analyzing intent, sentiment, and subtle contextual linkage across platforms) tests the boundaries of the reasonable expectation of privacy.

If an LLM misinterprets a joke or a frustration as a genuine threat to leak data, the resulting internal investigation, fueled by an AI 'whistleblower,' can destroy careers. This introduces the concept of algorithmic liability. Is the error the fault of the employee, the security team, or the team that fine-tuned the language model?

The Corporate Motivation: High Stakes Security

To understand why a company might adopt such an intense measure, we must consider the inherent value of their intellectual property. Companies developing frontier AI models are sitting on some of the most valuable, sensitive, and potentially world-altering data in existence. The motivation isn't just protecting source code; it’s protecting the years of research, the emergent safety protocols, and the competitive advantage.

The context of organizations like OpenAI—which has seen internal disagreements and high-profile departures—suggests that the drive for this level of control stems from a perception of extreme risk. When the secret sauce is the core product, zero-trust security extends to the very fabric of internal communication.

What This Means for the Future of AI Deployment

The deployment of AI for internal surveillance is not an isolated incident; it's a precedent. As this technology matures, it will move beyond the high-stakes world of frontier AI labs and into every major corporation, defining the next generation of enterprise risk management.

Trend 1: The Rise of the Private, Specialized Model

We are moving away from relying solely on massive, public foundational models for enterprise tasks. The future involves highly secure, internally hosted, or VPC-gated LLMs (often based on smaller, open-source architectures or specialized versions of proprietary models) that are trained exclusively on internal documentation. These "Digital Twins" of the corporate knowledge base will serve functions from automated compliance checking to complex contract review, and yes, leak detection.

Trend 2: Algorithmic Governance Over Human Oversight

As these monitoring systems become more accurate, human review of initial flags will decrease, leading to faster, automated actions against employees based on algorithmic suspicion. This shift toward algorithmic governance demands robust explainability frameworks (XAI). If an AI flags an employee, that employee—and their legal counsel—will need to know *why* based on concrete, traceable data points, not simply "the model determined intent."
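One way to picture the "concrete, traceable data points" requirement is a flag record that cannot be explained, and therefore should not be acted on, unless every claim points at a specific source. This is a minimal sketch under assumed names (`Evidence`, `Flag`, and their fields are hypothetical), not an established XAI framework.

```python
from dataclasses import dataclass, field


@dataclass
class Evidence:
    source: str    # e.g. a message ID or document reference (hypothetical)
    excerpt: str   # the exact text the flag relies on
    reason: str    # the rule or policy clause the excerpt is said to touch


@dataclass
class Flag:
    employee_id: str
    evidence: list[Evidence] = field(default_factory=list)

    def is_explainable(self) -> bool:
        """True only if there is evidence and every item is fully populated."""
        return bool(self.evidence) and all(
            e.source and e.excerpt and e.reason for e in self.evidence
        )

    def explain(self) -> str:
        """Render the evidence trail, refusing to explain an empty flag."""
        if not self.is_explainable():
            raise ValueError("flag has no traceable evidence; do not act on it")
        return "\n".join(
            f"[{e.source}] {e.excerpt!r}: {e.reason}" for e in self.evidence
        )
```

A flag whose only justification is a model score would fail `is_explainable()` here, which is the point: the record forces the system to surface what it actually saw.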

Trend 3: The Battle for Workplace Digital Rights

The use of these monitoring tools will inevitably fuel significant labor and legal battles. We can expect to see new legislation specifically addressing the use of generative AI in employee monitoring, potentially requiring transparency notices, audit rights for employees, and limits on the types of communications that can be analyzed for intent versus content.

Actionable Insights for Business Leaders and Technologists

For organizations looking to navigate this new landscape, proactive strategy is essential:

- Establish Clear AI Usage Policies Now: Before deploying any AI for monitoring, HR and Legal must collaborate to define precisely what data AI can access, what constitutes a policy violation based on AI analysis, and how an employee can appeal an AI-generated flag. Ambiguity breeds distrust and legal risk.

- Prioritize Transparency in Monitoring: If you are implementing LLM-based monitoring, clearly communicate its scope to employees. A lack of transparency often causes more damage to morale than the monitoring itself. Consider implementing a "Trust But Verify" approach where the AI flags high-risk communications, but a human analyst always makes the final decision.

- Invest in Secure LLM Infrastructure: For any organization fine-tuning models on sensitive internal data, securing the deployment environment is paramount. Ensure that data used for training remains segmented and protected, as the LLM itself becomes a high-value target for adversaries.

- Look Beyond Leak Detection: While surveillance is a current application, encourage teams to focus on LLM integration that *builds* trust, such as AI assistants that automate rote compliance checks or help synthesize complex regulatory documents, improving productivity rather than policing output.
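The "Trust But Verify" recommendation in the list above, where the AI flags and a human decides, can be sketched as a small review gate. The threshold value, the `Review` type, and the decision labels are hypothetical; the only property the sketch is meant to show is that no final decision exists without a named human reviewer.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Decision(Enum):
    PENDING = "pending"
    DISMISSED = "dismissed"
    ESCALATED = "escalated"


@dataclass
class Review:
    flag_id: str
    risk_score: float                     # assumed model output in [0, 1]
    decision: Decision = Decision.PENDING
    reviewer: Optional[str] = None        # set only when a human decides


RISK_THRESHOLD = 0.8  # hypothetical cut-off for routing to an analyst


def needs_human_review(review: Review) -> bool:
    """The model only routes; it never sets a final decision itself."""
    return review.risk_score >= RISK_THRESHOLD


def decide(review: Review, reviewer: str, decision: Decision) -> Review:
    """Record a final decision, which must come from a named human."""
    if not reviewer:
        raise ValueError("a human reviewer is required for a final decision")
    if decision is Decision.PENDING:
        raise ValueError("a final decision cannot be PENDING")
    review.decision = decision
    review.reviewer = reviewer
    return review
```

For example, `decide(Review("f-1", 0.91), "analyst_a", Decision.DISMISSED)` records a dismissal, while calling it with an empty reviewer raises an error. An appeal path could reuse the same gate with a second, independent reviewer.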

Conclusion: The Double-Edged Sword of Intelligence

The deployment of specialized LLMs to root out internal threats is a stark realization of AI's dual-use potential. The same technology capable of synthesizing novel scientific hypotheses or accelerating software development is now being repurposed to maintain corporate orthodoxy and control the flow of information.

For the industry developing this technology, it highlights a deep-seated tension: how do you foster radical transparency and open collaboration internally while simultaneously protecting secrets that could reshape global competition? The answer may define the sustainability of trust in the knowledge economy. As AI becomes woven into the fabric of our communication channels, the definition of professional privacy and the boundaries of corporate oversight are being rewritten, one scanned email at a time.