The Inference Revolution: Why OpenAI’s GPT-Codex-Spark and Cerebras Signal Real-Time AI Dominance

For the last few years, the story of Artificial Intelligence has been dominated by size. Bigger models, more parameters, and exponentially larger data sets promised greater general intelligence. However, a recent announcement from OpenAI—the unveiling of GPT-5.3-Codex-Spark, a specialized coding model achieving over 1,000 tokens per second, powered by Cerebras chips—marks a profound strategic pivot. This isn't just an incremental update; it’s a declaration that the future of AI isn't just about being smarter, but about being instantaneously useful.

This development signifies a critical convergence point: the marriage of highly optimized, smaller models with custom, high-performance inference hardware. To fully grasp the implications, we must analyze this move across hardware specialization, the technical definition of "real-time," and the shift away from monolithic general models.

The Hardware Shift: Why Cerebras Matters More Than Parameters

The most fascinating technical detail in the Codex-Spark announcement is its dependence on Cerebras chips. This signals a departure from the industry's default reliance on massive GPU clusters (primarily NVIDIA) for large-scale inference. For the non-expert, think of it this way: GPUs are like incredibly versatile, powerful sports cars built for general racing. Cerebras, with its wafer-scale architecture, is like a custom-built drag racer designed for one specific, blindingly fast task.

Why does this matter for speed? When an LLM generates code or other long sequences, it produces one token at a time, and each step must read the model's weights from memory. As a result, generation is often bottlenecked not by arithmetic throughput but by memory bandwidth: how fast those weights can be read and fed into the compute units. Cerebras's Wafer-Scale Engine (WSE) builds a single chip out of an entire silicon wafer and keeps data in large amounts of on-chip memory close to the compute cores. This drastically reduces the distance data has to travel and eases the "memory wall" that constrains many GPU setups during large-scale inference. Because inference runs on every single request, efficiency in this phase largely determines the cost of deployment.
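To make the memory-bandwidth argument concrete, here is a back-of-the-envelope estimate in Python. It assumes single-stream decoding (batch size 1) in which every generated token requires reading all of the model's weights once, and it ignores KV-cache traffic, compute time, and batching. The model size and bandwidth figures are hypothetical, not published specifications for Codex-Spark or any particular chip.

```python
def max_decode_tokens_per_second(num_params: float, bytes_per_param: float,
                                 bandwidth_bytes_per_s: float) -> float:
    """Upper bound on single-stream decode speed when weight reads dominate.

    Assumes each token streams every weight from memory exactly once
    (batch size 1) and ignores KV-cache reads, compute time, and overlap.
    """
    bytes_per_token = num_params * bytes_per_param
    return bandwidth_bytes_per_s / bytes_per_token


def required_bandwidth(num_params: float, bytes_per_param: float,
                       target_tokens_per_s: float) -> float:
    """Memory bandwidth (bytes/s) needed to reach a target decode speed, same assumptions."""
    return target_tokens_per_s * num_params * bytes_per_param


if __name__ == "__main__":
    # Hypothetical model: 8 billion parameters stored as 16-bit (2-byte) values.
    params, bytes_each = 8e9, 2
    # Hypothetical off-chip memory bandwidth of 3 TB/s.
    print(max_decode_tokens_per_second(params, bytes_each, 3e12))  # 187.5 tokens/s
    # Bandwidth the same model would need to stream 1,000 tokens/s.
    print(required_bandwidth(params, bytes_each, 1000))  # 16000000000000.0 bytes/s (16 TB/s)
```

Batching lets one weight read serve several requests at once, which is why aggregate throughput can exceed this single-stream bound; the sketch only shows why per-user speed tracks memory bandwidth so closely.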

For the Business Audience: Moving to specialized hardware shifts the cost structure. While the initial investment may be high, the cost per query (inference cost) for a task like coding assistance can fall sharply when the same hardware serves far more tokens per second. This allows companies to offer services at a speed and scale that were previously uneconomical.
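The cost argument reduces to simple arithmetic, sketched here in Python: for a fixed hourly hardware cost, the cost per token falls in direct proportion to throughput. The $10/hour figure and the two throughputs are hypothetical, and the function assumes the hardware is fully utilized.

```python
def cost_per_million_tokens(hourly_cost_usd: float, tokens_per_second: float) -> float:
    """Serving cost per one million generated tokens.

    Assumes the hardware runs fully utilized at the given aggregate throughput;
    real deployments also pay for idle time, networking, and other overhead.
    """
    tokens_per_hour = tokens_per_second * 3600
    return hourly_cost_usd / tokens_per_hour * 1_000_000


if __name__ == "__main__":
    # Hypothetical: the same $10/hour of hardware cost at two different throughputs.
    for tps in (100, 1000):
        print(tps, round(cost_per_million_tokens(10.0, tps), 2))
```

In this model a tenfold throughput gain at the same hourly cost cuts the cost per token tenfold; in practice specialized hardware usually costs more per hour, so the real saving depends on how much faster it is relative to its price.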

Beyond Autocomplete: Defining True Real-Time AI Programming

The headline promises "real-time programming." But what does that truly mean in the context of software creation? Current AI assistants, like the widely adopted GitHub Copilot, offer powerful suggestions, but they often operate on a slight delay—a pause while you finish a line or hit Enter. This is near-real-time, but it still requires the developer to consciously wait or prompt.

Achieving 1,000 tokens per second moves the needle from "helpful assistant" to "active collaborator." At that rate a new token arrives roughly every millisecond, so a 50-token suggestion can stream in about 50 milliseconds, plus the time to the first token and any network overhead. Independent benchmarks against tools such as GitHub Copilot would be needed to pin down the competitive landscape. If Codex-Spark can suggest the next five lines of boilerplate code, refactor an entire function signature, or correct a syntax error instantly, before your fingers have even moved to the next key, the interaction fundamentally changes.
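The arithmetic behind "real-time" can be sketched in a few lines of Python using a simple model: a fixed delay before the first token, then tokens arriving at a steady rate. The 50-token suggestion length and 100 ms time to first token are hypothetical values, not measurements of Codex-Spark or Copilot.

```python
def suggestion_latency_s(num_tokens: int, tokens_per_second: float,
                         time_to_first_token_s: float) -> float:
    """Wall-clock seconds until a streamed suggestion is complete.

    Simple model: a fixed delay before the first token (queueing, prompt
    processing, network), then tokens arrive at a constant rate.
    """
    return time_to_first_token_s + num_tokens / tokens_per_second


if __name__ == "__main__":
    # Hypothetical 50-token suggestion with a hypothetical 100 ms time to first token.
    for tps in (50, 1000):
        print(f"{tps:>5} tok/s -> {suggestion_latency_s(50, tps, 0.1):.3f} s")
```

One consequence of this model: at 1,000 tokens per second the generation itself (50 ms) is shorter than the assumed 100 ms startup delay, so time to first token, not raw throughput, becomes the main thing to optimize.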

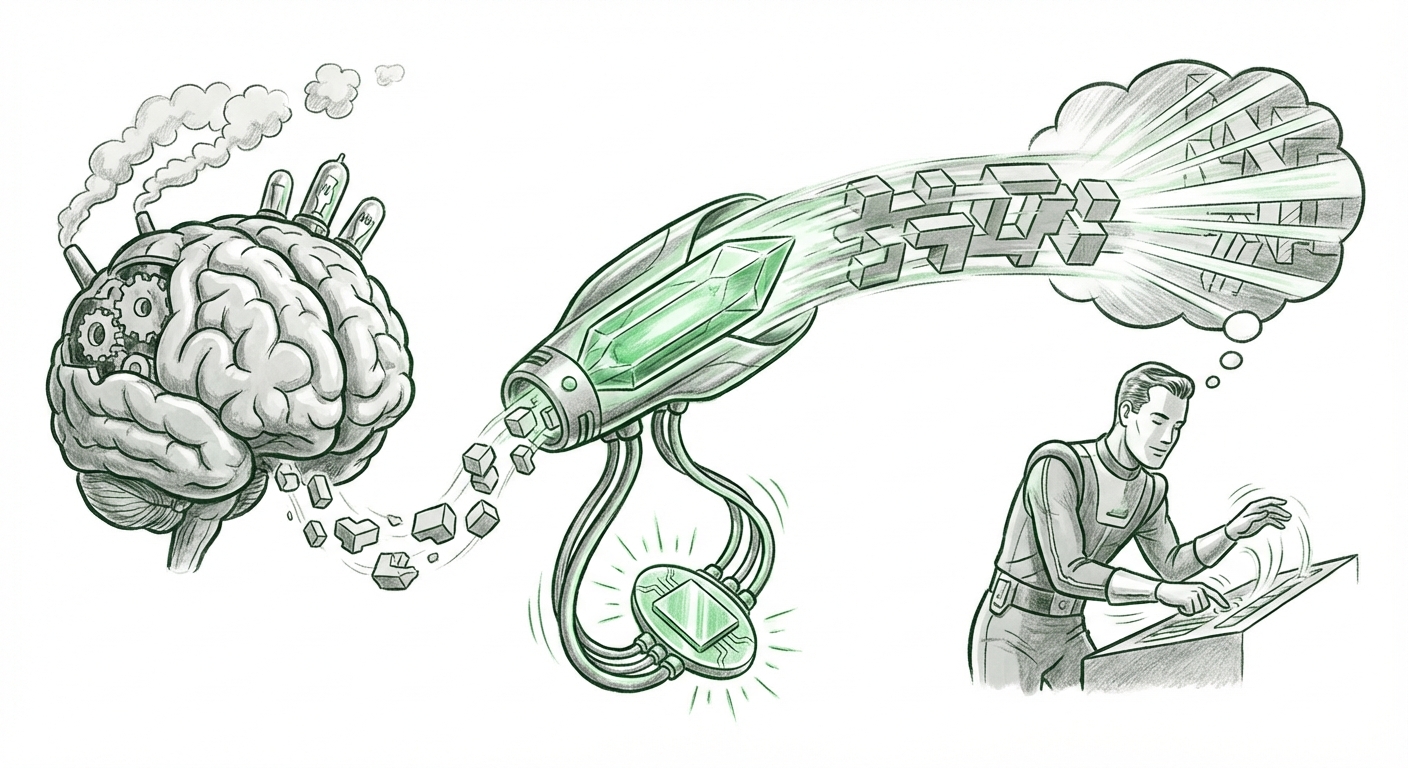

Implication for Developers: This immediacy preserves the developer's "flow state." Flow is the peak psychological state where creativity and productivity soar. Any interruption, even a small technological lag, breaks this state. True real-time AI eliminates this friction, making the AI an invisible, integrated extension of the programmer’s cognitive process.

The Pivot: Smaller Models for Sharper Focus

The most significant strategic signal is the explicit focus on a "smaller coding model." This challenges the conventional wisdom that only large generalist models like GPT-4 or GPT-5 can handle complex tasks. Codex-Spark suggests that when you trade general knowledge for deep specialization (in this case, programming syntax, best practices, and debugging patterns), you can achieve superior speed, and potentially competitive accuracy, within that specific domain.

This trend toward specialization, often pursued through techniques such as model distillation, reflects a broader industry push for efficiency. Companies are realizing that running a frontier-scale generalist model to answer a simple SQL query is incredibly wasteful. The future likely involves an ecosystem:

- The Giant Model (e.g., GPT-5): For novel research, complex reasoning, and ambiguous creative tasks.

- Specialized Models (e.g., Codex-Spark): Highly efficient, cost-effective models deployed for high-volume, defined tasks like coding, customer service triage, or medical charting.
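A minimal Python sketch of how such an ecosystem might dispatch work: narrow, known task types go to a specialist, and everything else goes to the generalist. The task labels and model identifiers are hypothetical placeholders, not OpenAI product names, and a real system would need a classifier (or the caller) to assign the task type in the first place.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    model: str   # hypothetical model identifier
    reason: str


# Hypothetical mapping from well-defined, high-volume task types to specialist models.
SPECIALISTS = {
    "code_completion": "coding-specialist",
    "refactor": "coding-specialist",
    "sql_query": "coding-specialist",
    "support_triage": "triage-specialist",
    "medical_charting": "charting-specialist",
}

# Hypothetical identifier for the large general-purpose model.
GENERALIST = "general-frontier-model"


def route(task_type: str) -> Route:
    """Send narrow, known task types to a specialist; everything else to the generalist."""
    specialist = SPECIALISTS.get(task_type)
    if specialist is not None:
        return Route(specialist, "narrow, high-volume task")
    return Route(GENERALIST, "novel, open-ended, or ambiguous task")


if __name__ == "__main__":
    for task in ("sql_query", "support_triage", "research_proposal"):
        print(task, "->", route(task).model)
```

Keeping the routing table as plain data makes it easy to move a task type from the generalist tier to a specialist as cheaper, faster models become good enough for it.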

Codex-Spark is OpenAI betting that, in the productivity sector, specialization wins on deployment efficiency.

Shaping the Software Development Lifecycle (SDLC)

If high-speed, specialized coding AI becomes the norm, what does the future of building software look like? We move beyond mere suggestion toward co-creation that happens at the speed of thought.

1. Instantaneous Code Auditing and Security

Imagine an AI scanning every line you type, not just for errors, but for potential security vulnerabilities (SQL injection, buffer overflows) the moment they are typed—and correcting them instantly. This proactive, real-time auditing powered by Codex-Spark could dramatically raise the baseline security of all deployed code.
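The source doesn't describe how Codex-Spark audits code, but the kind of check an assistant could run on every keystroke can be illustrated with a deliberately simple, rule-based sketch built on Python's standard `ast` module. It flags calls to a method named `execute` or `executemany` whose query argument is built with an f-string, `+`, `%`, or `.format()`, the classic pattern behind SQL injection. This is a toy heuristic (it doesn't know which objects are database cursors and will miss many cases), not how any specific product works.

```python
from __future__ import annotations

import ast

# Method names treated as "runs a SQL query" (a simplifying assumption).
SQL_METHODS = {"execute", "executemany"}


def _is_dynamic_string(node: ast.expr) -> bool:
    """True if the expression builds a string from runtime values."""
    if isinstance(node, ast.JoinedStr):  # f-string with at least one {placeholder}
        return any(isinstance(v, ast.FormattedValue) for v in node.values)
    if isinstance(node, ast.BinOp) and isinstance(node.op, (ast.Add, ast.Mod)):
        return True  # "..." + value  or  "..." % value
    if (isinstance(node, ast.Call) and isinstance(node.func, ast.Attribute)
            and node.func.attr == "format"):
        return True  # "...".format(value)
    return False


def find_unsafe_sql_calls(source: str) -> list[int]:
    """Return line numbers of execute()-style calls whose query is built dynamically."""
    flagged = []
    for node in ast.walk(ast.parse(source)):
        if (isinstance(node, ast.Call) and isinstance(node.func, ast.Attribute)
                and node.func.attr in SQL_METHODS and node.args
                and _is_dynamic_string(node.args[0])):
            flagged.append(node.lineno)
    return sorted(flagged)


if __name__ == "__main__":
    snippet = (
        'cur.execute(f"SELECT * FROM users WHERE name = \'{name}\'")\n'
        'cur.execute("SELECT * FROM users WHERE name = %s", (name,))\n'
        'cur.execute("SELECT * FROM users WHERE id = " + user_id)\n'
    )
    print(find_unsafe_sql_calls(snippet))  # [1, 3]
```

Line 2 is not flagged because it passes the value as a separate parameter and lets the database driver handle quoting, which is the fix a real-time assistant would suggest for lines 1 and 3.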

2. Democratization of Complex Engineering

When the mechanical aspects of coding (syntax, repetitive scaffolding) are handled instantly, engineering becomes less about memorizing libraries and more about architecture and problem decomposition. A developer can spend most of their time on the unique business logic, letting the specialized AI handle the universal plumbing. This lowers the barrier to entry for junior engineers and accelerates expert output.

3. The New Speed Barrier

The competition is likely to shift. The race will no longer be to integrate AI tools, but to ensure those tools operate seamlessly within the developer’s real-time workflow. Firms that lag in adoption or rely on slower inference models may find their engineering teams less productive.

Actionable Insight for CTOs: The time to evaluate proprietary inference stacks (like Cerebras partnerships) or invest heavily in model distillation for internal tasks is now. Relying solely on large, general-purpose APIs will become an infrastructure liability due to cost and speed constraints for critical tasks.
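For teams weighing the distillation route, the core idea can be sketched in dependency-free Python: a smaller "student" model is trained to match the softened output distribution of a larger "teacher." The loss below is the standard soft-target formulation (KL divergence between temperature-scaled softmax outputs, multiplied by the squared temperature). It is a generic illustration, not a description of how Codex-Spark was built; the temperature of 2.0 and the example logits are arbitrary, hypothetical values.

```python
from __future__ import annotations

import math


def softmax(logits: list[float], temperature: float = 1.0) -> list[float]:
    """Temperature-scaled softmax; subtracting the max keeps exp() numerically stable."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits: list[float], student_logits: list[float],
                      temperature: float = 2.0) -> float:
    """KL(teacher || student) on softened distributions, scaled by temperature**2.

    The temperature**2 factor keeps gradient magnitudes comparable across temperatures.
    """
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return temperature ** 2 * kl


if __name__ == "__main__":
    teacher = [4.0, 1.0, 0.5]        # hypothetical next-token logits from the large model
    close_student = [3.8, 1.1, 0.4]  # student that already mimics the teacher well
    far_student = [0.5, 1.0, 4.0]    # student that prefers a different token
    print(distillation_loss(teacher, close_student))
    print(distillation_loss(teacher, far_student))
```

In practice this term is usually combined with an ordinary loss on ground-truth labels and computed over batches inside a training framework rather than in pure Python.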

Navigating the Trade-offs: Accuracy vs. Speed

While 1,000 tokens per second is revolutionary for speed, we must address the elephant in the room: Is it as accurate as the behemoths?

GPT-5.3-Codex-Spark is smaller, which usually implies less breadth of knowledge. However, because it is trained intensively on code, its accuracy *within the domain of coding* might exceed that of a general model of similar size. The trade-off is clear: if you need a philosophical essay, use the giant GPT; if you need production-ready Python that adheres to company standards, the specialized, lightning-fast Spark model is likely the better tool.

This echoes the hardware insight: specialized tools outperform generalized ones when the job is narrowly defined. The deployment architecture (Cerebras) enables the speed, and the model specialization (Codex-Spark) ensures the utility matches that speed.
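One way to make the speed-versus-accuracy trade-off concrete is a simple expected-time model. If each attempt independently produces an acceptable result with probability p, the expected number of attempts is 1/p (a geometric distribution), so the expected time to an accepted result is (generation time + review time) / p. The Python sketch below assumes that model; all timings and success rates are hypothetical, not benchmark results for Codex-Spark or any GPT model.

```python
def expected_time_to_accept(gen_time_s: float, review_time_s: float,
                            p_success: float) -> float:
    """Expected seconds until an acceptable result, assuming independent attempts.

    Each attempt costs generation plus human review time and succeeds with
    probability p_success, so the expected number of attempts is 1 / p_success.
    """
    if not 0 < p_success <= 1:
        raise ValueError("p_success must be in (0, 1]")
    return (gen_time_s + review_time_s) / p_success


if __name__ == "__main__":
    fast = dict(gen_time_s=0.2, p_success=0.6)  # hypothetical fast specialist
    slow = dict(gen_time_s=2.0, p_success=0.9)  # hypothetical slower generalist
    for review in (1.0, 10.0):                 # quick glance vs. careful review
        f = expected_time_to_accept(review_time_s=review, **fast)
        s = expected_time_to_accept(review_time_s=review, **slow)
        print(f"review {review:>4}s: fast {f:.2f}s, slow {s:.2f}s")
```

In this toy model the faster model wins when each suggestion is quick to check, while the more accurate one can pull ahead as review time grows; where the crossover falls depends entirely on real success rates, which only benchmarks can supply.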

Conclusion: The Era of Invisible Intelligence

The introduction of GPT-5.3-Codex-Spark is more than just a new product release; it represents a maturing phase of the AI industry. We are moving past the "wow factor" of massive parameter counts and into the practical, deployable era of instantaneous utility. The focus is shifting from slow-but-smart general-purpose inference to specialized, blindingly fast execution.

For businesses, this means competitive advantages will increasingly stem from how efficiently they can deploy specialized AI capabilities at the point of need. For developers, it promises an evolution of the craft in which cognitive burden decreases and creative output accelerates. The latency gap is closing, and as it disappears, AI stops feeling like an external tool and starts feeling like an intrinsic capability of modern engineering.