The Inference Revolution: Why OpenAI's Ultra-Fast, Specialized Chips Signal AI's Next Era

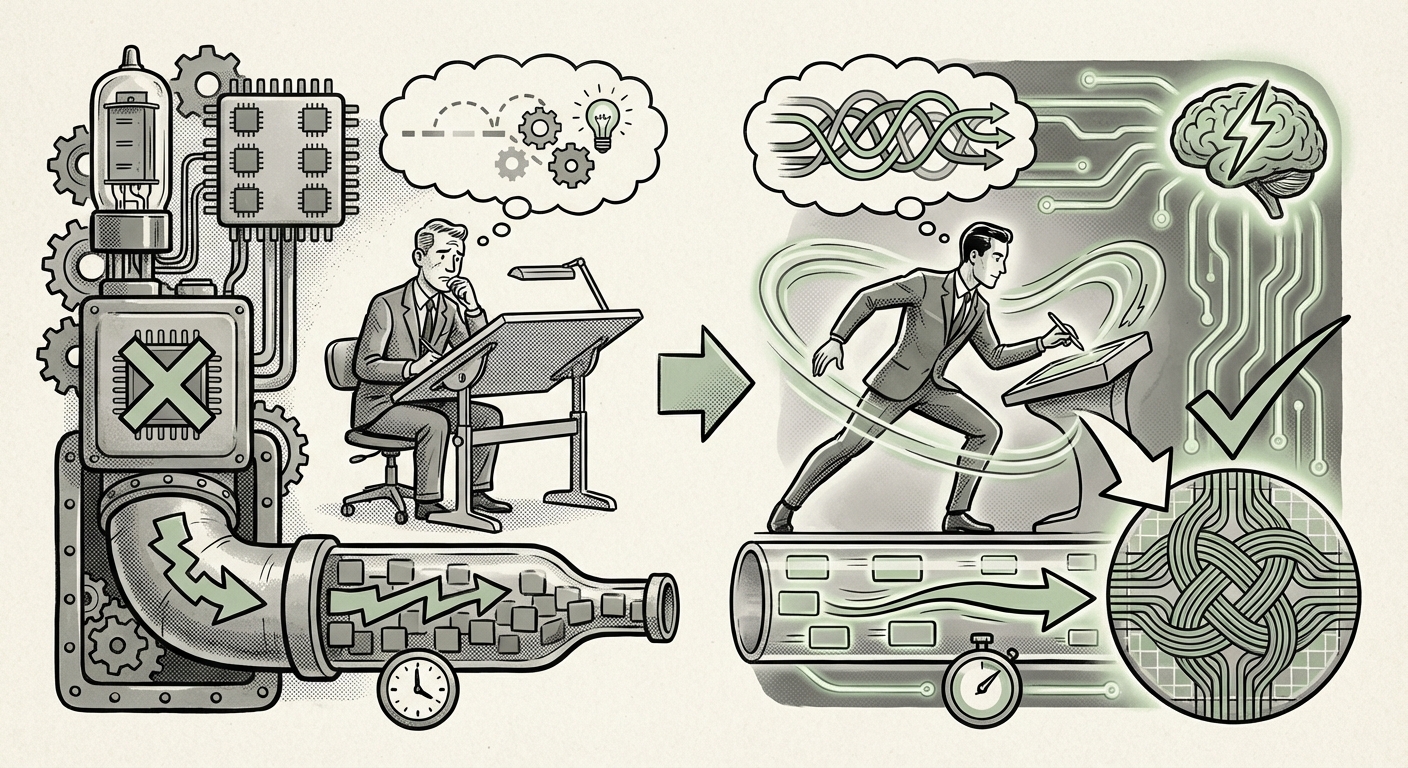

The artificial intelligence landscape is often defined by sheer scale: the massive parameter counts and gargantuan training datasets of foundation models like GPT-4. However, the recent announcement of OpenAI’s GPT-5.3-Codex-Spark tells a different, perhaps more critical, story about where the industry is heading. That story is about speed, specialization, and hardware diversification.

This new coding model, capable of pushing over 1,000 tokens per second thanks to its deployment on Cerebras chips, is not just another incremental update. It represents a clear strategic pivot toward optimizing AI for inference—the phase where models are actually used—especially in latency-sensitive environments like live programming. For developers, businesses, and infrastructure planners, this development forces a re-evaluation of AI deployment strategies.

The Triple Threat: Specialization, Speed, and Silicon Diversification

The significance of the Codex-Spark model rests on three interconnected pillars that redefine how we think about deploying powerful AI:

- Specialization: It’s a model built *specifically* for coding, rather than a generalist model dabbling in code.

- Real-Time Performance: Hitting 1,000 tokens per second moves the interaction from "suggestion" to seamless "collaboration."

- Hardware Diversification: Moving beyond the ubiquitous GPU ecosystem to leverage specialized silicon like Cerebras’ wafer-scale engines.

To truly understand the implications of this move, we must look beyond the initial announcement and examine the surrounding trends in hardware, developer experience, and model optimization.

Pillar 1: The Need for Speed—Killing Latency in Development

For any tool to feel natural, the lag must disappear. When using a coding assistant, waiting even a fraction of a second for an auto-complete suggestion can break a programmer's concentration—their "flow state." The goal of real-time programming is to make the AI feel less like a search engine and more like a genuine pair programmer.

This push for sub-second, or even sub-100-millisecond, latency is driving intense competition. One industry analysis, "What Low Latency Means for Programmers," argues that low latency correlates with higher developer satisfaction and adoption of AI coding tools, and that beyond certain latency thresholds helpful suggestions turn into frustrating interruptions.

This means that the next generation of AI coding tools won't just be judged on the quality of their code output, but on their ability to keep pace with human thought. For businesses, this translates directly into higher developer velocity and reduced cognitive load.
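
To put rough numbers on that, the arithmetic is simple: the time to stream a completion is roughly its length divided by the decode rate, plus any fixed overhead. The sketch below assumes a 60-token suggestion and 30 ms of network and queueing overhead, and compares the 1,000 tokens per second cited above with a hypothetical baseline of 50 tokens per second. Only the 1,000 figure comes from the announcement; everything else is an illustrative assumption.

```python
def completion_latency_ms(num_tokens: int,
                          tokens_per_second: float,
                          overhead_ms: float = 0.0) -> float:
    """Estimate how long a user waits for a full completion, in milliseconds.

    Model: fixed overhead + (tokens / decode rate). This ignores prompt
    processing time and any variance, so treat it as a rough lower bound.
    """
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return overhead_ms + 1000.0 * num_tokens / tokens_per_second


if __name__ == "__main__":
    SUGGESTION_TOKENS = 60   # assumed length of one code suggestion
    OVERHEAD_MS = 30.0       # assumed network + queueing overhead

    fast = completion_latency_ms(SUGGESTION_TOKENS, 1000.0, OVERHEAD_MS)
    slow = completion_latency_ms(SUGGESTION_TOKENS, 50.0, OVERHEAD_MS)
    print(f"1,000 tok/s: {fast:.0f} ms")  # 90 ms
    print(f"   50 tok/s: {slow:.0f} ms")  # 1230 ms
```

Under these assumptions the faster setup stays under 100 ms, while the slower one takes more than a second, which is the gap between an inline suggestion and an interruption.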

Pillar 2: The Great Shrink—The Rise of Specialized, Smaller Models (SLMs)

Historically, AI progress was synonymous with adding more parameters. GPT-5.3-Codex-Spark, described as a "smaller coding model," suggests that OpenAI recognizes the diminishing returns, and the skyrocketing costs, of running its largest foundation models for every routine task. This fits the broader industry trend toward Small Language Models (SLMs).

The argument for SLMs is compelling. As detailed in analyses like "The Rise of SLMs: Why Efficiency Outweighs Scale in Edge AI Deployment," not every task requires the vast general knowledge embedded in a trillion-parameter model. A coding model needs deep knowledge of syntax, libraries, and logic, but perhaps not the nuance required for creative writing or philosophical debate.

For Business Leaders: Deploying a smaller, heavily fine-tuned SLM for a core business function (like generating SQL queries or specific API boilerplate) is dramatically cheaper to run, faster to deploy, and easier to secure than routing every request through a massive, general-purpose model.

Codex-Spark embodies this efficiency: a sharp tool for a sharp job, optimized for its specific domain.

Pillar 3: The Hardware Arms Race Beyond the GPU

Perhaps the most revolutionary aspect of this announcement is the specific mention of Cerebras chips. For years, the AI hardware market has been dominated by NVIDIA GPUs. While GPUs excel at the massive, parallel computations required for training, they can be less efficient for low-latency inference, where tokens are generated one at a time and performance often depends more on how quickly model weights can be moved than on raw compute.

Cerebras specializes in wafer-scale computing, which effectively means building one giant chip instead of many smaller ones linked together. Keeping compute and memory on a single piece of silicon reduces the data movement between separate chips, a critical factor for sustaining speeds above 1,000 tokens per second.

Commentary on the ongoing "AI Hardware War" points toward diversification. We are entering an environment where the "best" chip depends on the task:

- Training LLMs: Still heavily GPU-dependent, though specialized ASICs are emerging.

- General Inference: Mix of GPUs and specialized TPUs/Inference Accelerators.

- Hyper-Specialized, Low-Latency Inference (like Codex-Spark): Opening the door for alternatives like Cerebras' Wafer Scale Engines, which promise efficiency by minimizing communication bottlenecks between smaller processing units.

This signals that reliance on a single vendor for all AI infrastructure is becoming a strategic vulnerability. Companies are now exploring multiple paths to silicon to secure necessary performance envelopes.

Future Implications: What This Means for Developers and Infrastructure

The convergence of these three trends—ultra-speed, tailored models, and diverse hardware—is setting the stage for the next phase of enterprise AI integration.

Implication 1: The Death of the 'Generalist' AI Interface

We are moving away from a future where one massive chat interface handles everything. Instead, expect specialized AI agents embedded directly into workflow tools. Your IDE will have a dedicated, lightning-fast coding engine in the style of Codex-Spark. Your CRM will have an equally fast sales-pitch generator. These small, dedicated engines will run constantly, watching your keystrokes or analyzing your data stream, ready to act instantly.

This requires IT departments to shift focus from simply procuring massive GPU clusters to strategically selecting the right accelerator for the right workload. The skill set needed shifts from "training large models" to "optimizing inference pipelines across heterogeneous hardware."
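
As a starting point for that kind of selection, a team might simply sort its workloads by how long a response can take. The sketch below is a hypothetical triage, not a vendor recommendation: the `Workload` type, the group names, and the 200 ms cutoff are all assumptions chosen for illustration.

```python
from dataclasses import dataclass

# Assumed cutoff: anything that must answer within this budget is treated
# as interactive. Pick a value that matches your own users and tools.
REALTIME_BUDGET_MS = 200.0


@dataclass(frozen=True)
class Workload:
    name: str
    latency_budget_ms: float  # longest acceptable wait for a response


def triage(workloads, realtime_budget_ms: float = REALTIME_BUDGET_MS) -> dict:
    """Split workloads into latency-sensitive and batch groups by budget."""
    groups = {"low_latency_inference": [], "batch_inference": []}
    for w in workloads:
        if w.latency_budget_ms <= realtime_budget_ms:
            groups["low_latency_inference"].append(w.name)
        else:
            groups["batch_inference"].append(w.name)
    return groups


if __name__ == "__main__":
    portfolio = [
        Workload("IDE code completion", 100.0),
        Workload("live support replies", 150.0),
        Workload("monthly report generation", 3_600_000.0),
    ]
    print(triage(portfolio))
```

The low-latency group is where specialized accelerators and smaller models are most worth benchmarking; the batch group can usually stay on whatever general-purpose capacity is cheapest.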

Implication 2: Code Security and Proprietary Logic

When specialized models run locally or on dedicated, private infrastructure, data security can improve significantly. Running a small, internal coding model on privately hosted Cerebras hardware would mean that sensitive intellectual property (the code being written) never has to leave the corporate firewall to achieve high performance.

This addresses a major hurdle for widespread AI adoption in highly regulated or IP-sensitive industries like finance, defense, and healthcare. Real-time performance without sacrificing security is a massive competitive advantage.

Implication 3: Re-evaluating Total Cost of Ownership (TCO)

While the initial capital expenditure for specialized hardware like wafer-scale engines can be significant, the operational cost savings for high-volume inference are potentially transformative. If Codex-Spark can deliver 10x the tokens per second for the same power consumption as a traditional setup handling the same task, the TCO for 24/7 coding assistance plummets.

This economic reality will accelerate the adoption of AI tooling across an even wider swath of the enterprise, moving it from a novelty to a standard operational expense.
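
A back-of-the-envelope calculation shows why: if power draw is held constant, the energy cost of each token falls in direct proportion to throughput. The sketch below assumes a 10 kW system, electricity at $0.10 per kWh, and a baseline of 100 tokens per second. All three numbers are placeholders rather than vendor figures, and the calculation deliberately ignores hardware purchase price, cooling, and staffing, which a real TCO model would need to include.

```python
def energy_cost_per_million_tokens(power_kw: float,
                                   tokens_per_second: float,
                                   usd_per_kwh: float) -> float:
    """Energy-only cost (USD) of generating one million tokens."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    seconds = 1_000_000 / tokens_per_second   # time to produce 1M tokens
    kwh = power_kw * seconds / 3600.0         # energy used in that time
    return kwh * usd_per_kwh


if __name__ == "__main__":
    POWER_KW = 10.0       # assumed system power draw
    USD_PER_KWH = 0.10    # assumed electricity price
    BASELINE_TPS = 100.0  # assumed baseline throughput

    baseline = energy_cost_per_million_tokens(POWER_KW, BASELINE_TPS, USD_PER_KWH)
    faster = energy_cost_per_million_tokens(POWER_KW, 10 * BASELINE_TPS, USD_PER_KWH)
    print(f"baseline:       ${baseline:.2f} per 1M tokens")  # $2.78
    print(f"10x throughput: ${faster:.2f} per 1M tokens")    # $0.28
```

The tenfold drop applies only to the energy line. Whether total cost falls as sharply depends on how the up-front hardware cost is amortized over the tokens actually served.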

Actionable Insights for Technology Leaders

How should businesses prepare for this specialized, hyper-fast future?

- Audit Your Inference Workloads: Identify which tasks require real-time interaction (e.g., coding, live customer service responses) versus batch processing (e.g., generating monthly reports). Real-time tasks are the immediate targets for optimization via SLMs and specialized hardware.

- Explore Hardware Partnerships: Do not wait for GPU manufacturers to solve every problem. Begin testing and benchmarking alternative accelerators like Cerebras, Groq, or specialized startups for your most latency-sensitive deployment scenarios.

- Invest in Model Distillation and Fine-Tuning: Focus R&D budgets on techniques that efficiently shrink large, capable models into smaller, domain-expert SLMs. The future of enterprise AI lies in precision, not just raw size.

- Prioritize Flow State Maintenance: When evaluating new AI tools, mandate performance testing that measures latency in the context of human interaction. If the tool slows the user down, it’s not ready for prime time, regardless of output quality.
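
The latency testing in the last point can start small. The sketch below times repeated calls to any function and checks the 95th-percentile latency against a budget. It uses only the Python standard library. `fake_completion` is a hypothetical stand-in for a real call to a coding assistant, and the 100 ms budget is an assumption rather than an established threshold.

```python
import statistics
import time
from typing import Callable


def measure_latency_ms(call: Callable[[], object], runs: int = 50) -> dict:
    """Time `call` repeatedly and summarize wall-clock latency in ms."""
    if runs < 2:
        raise ValueError("need at least 2 runs to compute percentiles")
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        samples.append((time.perf_counter() - start) * 1000.0)
    samples.sort()
    # n=100 yields 99 cut points; index 94 is the 95th percentile.
    # method="inclusive" interpolates within the observed range only.
    cuts = statistics.quantiles(samples, n=100, method="inclusive")
    return {
        "p50_ms": statistics.median(samples),
        "p95_ms": cuts[94],
        "max_ms": samples[-1],
    }


def within_budget(stats: dict, budget_ms: float) -> bool:
    """Judge on tail latency: users notice the slow responses."""
    return stats["p95_ms"] <= budget_ms


def fake_completion() -> str:
    """Hypothetical placeholder; swap in a real request to your tool."""
    time.sleep(0.005)
    return "print('hello')"


if __name__ == "__main__":
    stats = measure_latency_ms(fake_completion, runs=50)
    print(stats)
    print("meets 100 ms budget:", within_budget(stats, budget_ms=100.0))
```

Checking the 95th percentile rather than the average is a deliberate choice here: a tool that is usually fast but occasionally stalls still breaks concentration.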

OpenAI’s Codex-Spark, powered by Cerebras, is more than a fast code generator; it is a bellwether. It signals that the era of monolithic, generalized AI powering everything is giving way to an ecosystem of purpose-built, lightning-fast AI agents running on optimized, diverse silicon. The race is no longer just about building the biggest brain; it's about building the fastest nervous system.