The AI Internal Investigator: How Specialized LLMs are Redefining Corporate Security and Privacy

The world watched as Large Language Models (LLMs) like ChatGPT revolutionized public interaction, creativity, and information processing. Now, the focus is shifting inward. A recent report suggesting that OpenAI—a leader in developing this technology—is employing a highly customized version of its own AI to hunt down internal leakers marks a pivotal moment. This isn't just corporate IT beefing up its security; it’s the adoption of next-generation AI for internal monitoring, creating a profound collision point between cutting-edge capability, corporate necessity, and fundamental employee privacy rights.

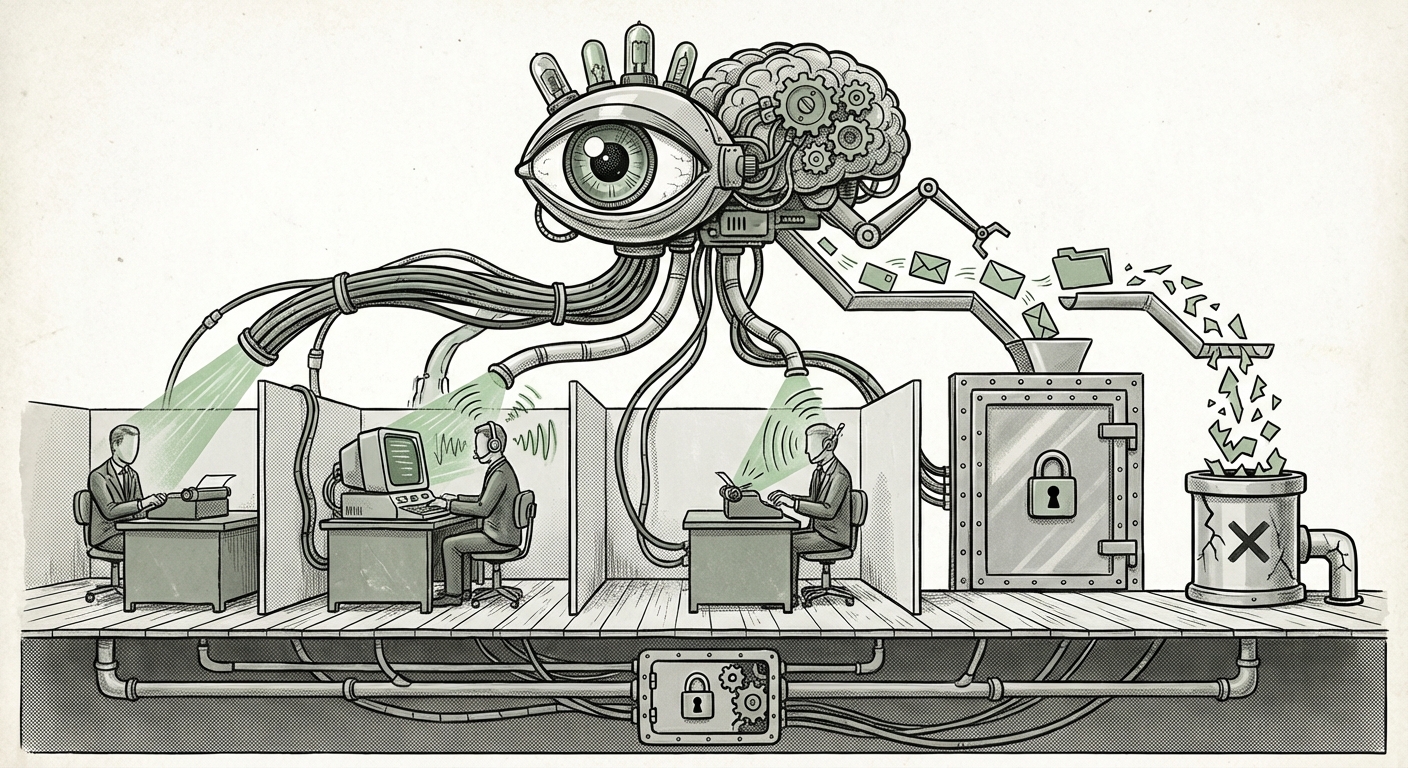

As an AI analyst, what strikes me most is the transition: we are moving decisively away from older monitoring techniques toward **contextual, semantic surveillance**. This development doesn't just affect OpenAI; it sets a powerful precedent for how nearly every major enterprise will manage sensitive data and monitor internal discourse in the coming years.

The End of Simple Keyword Alerts: The Rise of Semantic Surveillance

For decades, Data Loss Prevention (DLP) systems worked based on simple rules: if a document contained a Social Security Number pattern, a specific project codename, or an explicit keyword combination, an alarm would sound. This was clumsy, prone to high rates of false positives, and easily bypassed by employees who simply used synonyms or coded language.
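To make that weakness concrete, here is a minimal sketch of a rule-based scanner. It is an illustration only, not any vendor's actual DLP engine: the rule names, the "Phoenix" codename, and the sample messages are all hypothetical.

```python
import re

# Hypothetical rule set: one pattern for SSN-shaped strings and one for a
# made-up project codename. Real DLP products ship far larger rule libraries.
RULES = {
    "ssn_pattern": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "codename_phoenix": re.compile(r"\bphoenix\b", re.IGNORECASE),
}


def scan(message: str) -> list[str]:
    """Return the names of every rule whose pattern appears in the message."""
    return [name for name, pattern in RULES.items() if pattern.search(message)]


direct = "I'm sending the Phoenix file to the press."
reworded = "I'm passing the firebird notes to a reporter."
benign = "Order 123-45-6789 shipped from the Phoenix warehouse."

print(scan(direct))    # the codename rule fires
print(scan(reworded))  # nothing fires: a synonym slips past the rules
print(scan(benign))    # both rules fire on an innocent shipping note
```

The scanner never looks at meaning, only at surface patterns, which is why a reworded leak passes silently while a harmless logistics message trips two alarms.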

The reported use of a "special version" of ChatGPT changes the game entirely. This specialized model, likely fine-tuned on vast amounts of internal, proprietary context, can perform **semantic analysis**. Imagine the difference:

- Old System: Flags the message "I'm sending the Phoenix file to the press."

- New LLM System: Understands that "Phoenix file" is code for the unreleased Q4 strategy, and flags the message based on the *intent* to share classified information, even if the leaker used phrases like, "I’m giving the background data for the *early bird report* to my friend in media."

This capability represents a significant technological leap. It means that corporate security can now interpret the *meaning* behind conversations on Slack or in emails, understanding jargon, relationships, and the context of information flow. This sophistication is what makes the tool so powerful for security teams but simultaneously so worrying for employees.
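The general shape of such a pipeline can be sketched as follows. Nothing here reflects how OpenAI's reported system actually works: the glossary, the prompt wording, the `FLAG`/`OK` reply format, and every function name are assumptions made for illustration. The model is passed in as a plain callable, and `stub_model` is a canned stand-in with no semantic ability of its own; it exists only so the plumbing runs end to end.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Verdict:
    flagged: bool
    reason: str


def build_prompt(message: str, glossary: dict[str, str]) -> str:
    """Combine internal context and the message into one classification prompt."""
    context = "\n".join(f"- {term}: {meaning}" for term, meaning in glossary.items())
    return (
        "Internal glossary:\n" + context + "\n\n"
        "Message:\n" + message + "\n\n"
        "Answer FLAG or OK, then a colon and a one-sentence reason."
    )


def review(message: str, glossary: dict[str, str], model: Callable[[str], str]) -> Verdict:
    """Send the prompt to the supplied model and parse its 'LABEL: reason' reply."""
    reply = model(build_prompt(message, glossary))
    label, _, reason = reply.partition(":")
    return Verdict(flagged=label.strip().upper() == "FLAG", reason=reason.strip())


def stub_model(prompt: str) -> str:
    """Canned stand-in for a real model call (assumption: not a real LLM)."""
    message = prompt.split("Message:\n", 1)[1].split("\n\nAnswer", 1)[0]
    if "early bird report" in message:
        return "FLAG: The message describes sharing restricted material externally."
    return "OK: Nothing in the message relates to restricted material."


# Hypothetical internal context the model would be given or fine-tuned on.
GLOSSARY = {"early bird report": "informal name for the unreleased Q4 strategy"}

verdict = review(
    "I'm giving the background data for the early bird report to my friend in media.",
    GLOSSARY,
    stub_model,
)
print(verdict)
```

In a real deployment the callable would wrap a fine-tuned model, and the quality of the verdict would depend entirely on that model and on the internal context supplied to it.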

The Broader Trend: AI Moving Inside the Firewall

The OpenAI story is an accelerant, not an anomaly. The technological groundwork for internal AI monitoring has been laid by major software providers. If we examine the wider enterprise landscape, we see security suites from giants like Microsoft and Google rapidly integrating generative AI capabilities designed to understand content contextually for compliance and DLP.

For Chief Information Security Officers (CISOs), the value proposition is clear: these LLM-based tools promise a dramatic reduction in false positives and a significant increase in the ability to detect nuanced insider threats, which are often among the most damaging sources of data breaches. These tools are marketed as essential defenses against IP theft, sabotage, and compliance violations.

For the AI industry, this signifies a crucial expansion: moving from customer-facing, public models to highly sensitive, *private* enterprise deployments. The development of these specialized internal models—trained on the very data they are meant to protect—demonstrates that the most impactful applications of LLMs might occur not on the open internet, but deep within corporate networks.

Implications for Business and Technology Architects

For technology architects, the challenge is twofold: building the security tool and securing the security tool itself. A fine-tuned LLM is now a crown jewel asset. If a specialized leak-detection model is compromised, the attacker gains deep insight into how the company monitors itself, and potentially into the proprietary data the model was trained on. The model therefore becomes a new asset to defend, one that needs strong encryption and tightly restricted access.

The Ethical Abyss: Privacy, Trust, and Legal Uncertainty

While security teams see a necessary tool, labor advocates and legal experts see a potential erosion of trust and a major privacy challenge. When an AI system is built to constantly infer intent across all employee communications, the nature of workplace trust fundamentally shifts.

The debate centers on where the line between protecting company assets and invading personal space is drawn. Employees often use company communication channels for necessary, non-work-related interaction. An AI capable of understanding sarcasm, personal context, or vague frustrations about the company could easily misclassify innocent venting as a security risk.

This is amplified by geographical and legal differences:

- In the United States, workplace monitoring standards often lean in favor of the employer, provided employees are aware that they are using company property or systems.

- In jurisdictions governed by GDPR or similar privacy frameworks, continuous, invasive monitoring without explicit, narrow consent faces much higher legal hurdles. The scope and necessity of the data collection must be strictly justified.

The risk here is that the rapid deployment of powerful AI monitoring technology precedes the establishment of clear regulatory and ethical guardrails. If a major tech firm like OpenAI leads with this implementation, it puts immense pressure on other companies to follow suit, potentially setting a low bar for digital oversight worldwide.

Case Study in Scrutiny: OpenAI's Internal Paradox

OpenAI’s position makes this story particularly fascinating. This is a company dedicated to ensuring AI remains "beneficial to humanity." Yet, its internal security protocol employs the most advanced surveillance technology available to police its own staff. This raises questions about internal transparency and policy:

- Transparency of Deployment: Was this system deployed with full internal consultation, or was it quietly implemented as a response to past security incidents?

- Model Governance: How is the data used to train this internal hunting model protected? If the model learns to perfectly mimic an employee’s writing style to detect leaks, that profile of the employee’s communication style itself becomes highly sensitive data.

- Alignment with Mission: Can a company whose mission is openness and beneficial AI justify using opaque, powerful tools to monitor its workforce? The trust employees place in their employer is directly challenged by the deployment of a technology designed to find their perceived intent to betray that trust.

Industry observers are keenly watching for any official statements or policy clarifications from OpenAI regarding the deployment and scope of this "special version." How they navigate this paradox will offer vital clues about the future governance of AI tools within the organizations that create them.

Actionable Insights for Businesses Navigating the AI Monitoring Era

Regardless of your industry, the trend towards semantic monitoring is inevitable. Here is what businesses need to do now:

1. Re-evaluate and Clarify Acceptable Use Policies (AUPs)

Vague monitoring policies are no longer sufficient. Businesses must clearly articulate what level of communication analysis is being performed (keyword vs. semantic), what data is retained, and how long it is kept. Employees must be explicitly notified that their communications are being analyzed not just for prohibited words, but for contextual intent related to security and compliance.

2. Prioritize Explainability (XAI) in Monitoring Tools

If a system flags an employee for potential leak activity, the security team must be able to immediately show *why*. If the LLM offers an assessment without a clear, auditable path back to the source text and the reasoning behind the classification, you are inviting legal liability and internal chaos. Demand explainable AI (XAI) in all purchased or developed security software.
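One way to picture that auditable path is a flag record that cannot exist unless its evidence is a verbatim quote from the source message. The sketch below is a hypothetical data structure, not a feature of any particular product; the field names, the `make_record` helper, and the sample values are all assumptions.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class FlagRecord:
    message_id: str
    source_text: str
    evidence_start: int  # character offset into source_text
    evidence_end: int    # exclusive end offset
    classification: str
    rationale: str
    model_version: str

    def evidence(self) -> str:
        """Return the exact span of the source text that triggered the flag."""
        return self.source_text[self.evidence_start:self.evidence_end]


def make_record(
    message_id: str,
    source_text: str,
    quoted_evidence: str,
    classification: str,
    rationale: str,
    model_version: str,
) -> FlagRecord:
    """Build a record only if the evidence appears verbatim in the source text."""
    start = source_text.find(quoted_evidence)
    if not quoted_evidence or start < 0:
        raise ValueError("evidence must be a verbatim quote from the source text")
    return FlagRecord(
        message_id, source_text, start, start + len(quoted_evidence),
        classification, rationale, model_version,
    )


record = make_record(
    message_id="msg-001",
    source_text="I'm giving the background data for the early bird report to my friend in media.",
    quoted_evidence="early bird report",
    classification="possible_external_disclosure",
    rationale="Refers to restricted material and an external recipient.",
    model_version="example-model-v0",
)
print(json.dumps(asdict(record), indent=2))  # what an audit log entry might hold
```

Rejecting any assessment whose evidence cannot be located in the original message is a cheap guard against a model that paraphrases or invents its justification, and the stored offsets let a reviewer jump straight to the passage in question.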

3. Balance Security Investment with Culture Investment

The most secure company is one where employees feel respected and trusted. Over-reliance on powerful surveillance tools signals distrust, which can lead to low morale, quiet quitting, or *actual* malicious intent among those who feel constantly watched. Security must be balanced with proactive measures like better internal communication channels, clearer executive direction, and strong internal grievance procedures.

4. Prepare for Regulatory Scrutiny

Regulatory bodies worldwide are catching up to AI capabilities. Businesses deploying these tools must document their necessity exhaustively. They must prove the monitoring is strictly proportionate to the security risk being mitigated. Assume that any internal surveillance system you implement today will be subject to public and regulatory review tomorrow.

The Future Landscape: Pervasive Intelligence, Pervasive Oversight

The deployment of specialized LLMs for internal threat hunting is a milestone. It confirms that the next frontier of AI application is the optimization and policing of internal organizational processes. These tools will become cheaper, more powerful, and more integrated into standard enterprise software suites.

The future of work will involve an invisible, always-on layer of AI intelligence analyzing organizational flow, productivity metrics, and security posture. While this promises unprecedented levels of corporate safety against external threats and insider malice, it concurrently demands an urgent, serious conversation about the societal contract between employer and employee. The sophistication of our monitoring tools is rapidly outpacing our consensus on digital rights. The winners in the next decade will be the companies that master this technology ethically, while the losers will be those who adopt it purely for control without considering the human cost.