The AI Watchdog: How Specialized LLMs Are Becoming the New Frontier in Corporate Surveillance

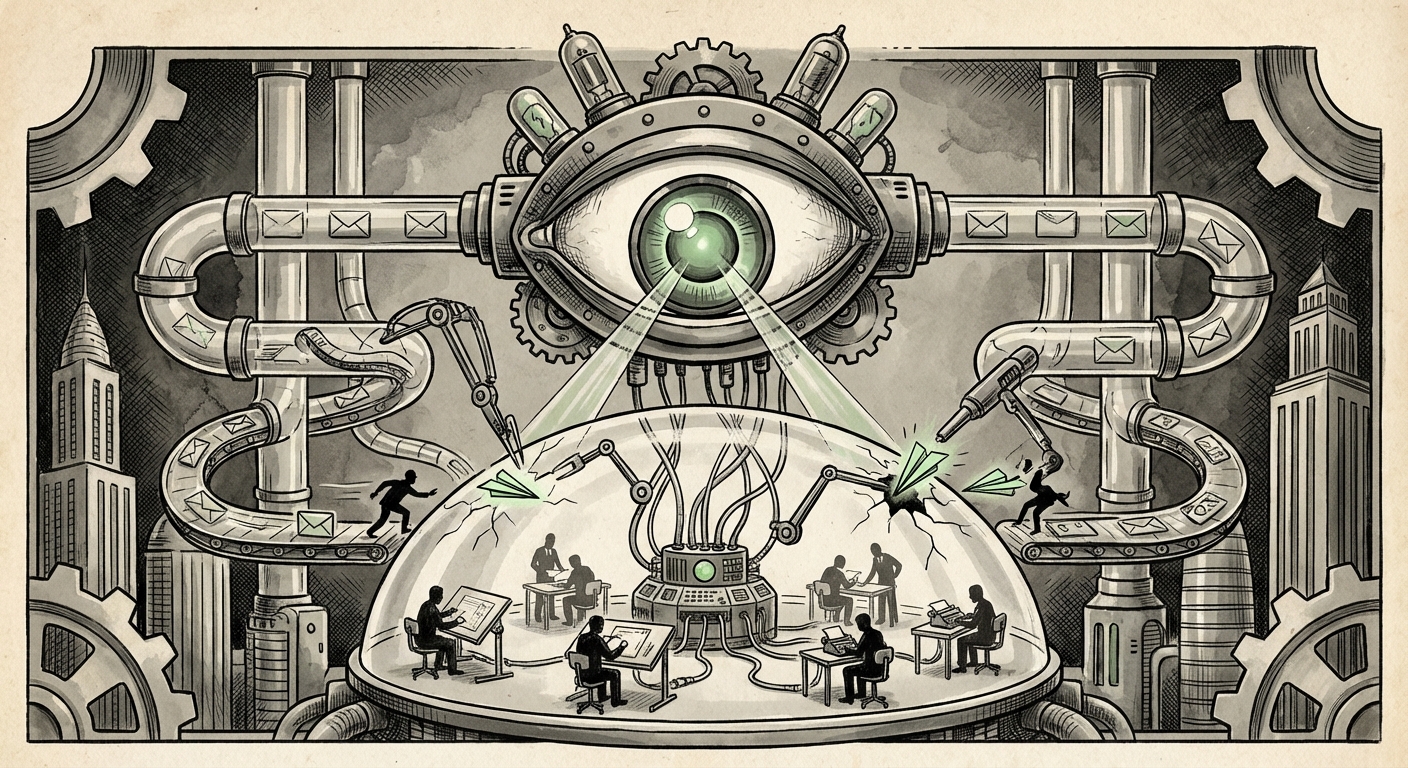

Recent reports suggest that OpenAI is deploying a highly customized version of its own technology, a 'special version of ChatGPT', to scan internal Slack messages and emails for information leaks. If accurate, this is more than just corporate drama. It is a powerful signal about the immediate future of security, data protection, and employee trust in the age of artificial intelligence.

For companies building world-changing technology, information security is not a secondary concern; it is existential. When the intellectual property (IP) is the model itself (its code, its training data, its proprietary research breakthroughs), a rogue employee or an espionage operation can threaten the company's survival. This move, however, positions AI itself as the primary internal security enforcer, creating a powerful, yet deeply controversial, double-edged sword.

The Technological Shift: From Keywords to Context

To understand why using a specialized LLM is such a leap beyond traditional security tools, we must look at the technology itself. Older security systems, known as Data Loss Prevention (DLP) software, primarily relied on **keyword matching** or rigid rule sets. If an employee typed "Project Chimera confidential pricing," the system flagged it. This is like using a metal detector—it catches obvious items but misses things hidden in plain sight.
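To make the limitation concrete, here is a minimal sketch of keyword-style matching. The pattern list and the `keyword_flag` helper are hypothetical illustrations, not the rule set of any real DLP product.

```python
import re

# Hypothetical blocklist; real DLP products ship far larger rule sets.
BLOCKED_PATTERNS = [
    re.compile(r"\bproject chimera\b", re.IGNORECASE),
    re.compile(r"\bconfidential pricing\b", re.IGNORECASE),
]


def keyword_flag(message: str) -> bool:
    """Return True if any blocklisted phrase appears in the message."""
    return any(p.search(message) for p in BLOCKED_PATTERNS)


literal = "Project Chimera confidential pricing is attached."
paraphrase = "Sending over the numbers for the lion-goat thing we don't share."

print(keyword_flag(literal))     # True: the exact phrase is present
print(keyword_flag(paraphrase))  # False: same intent, no listed keyword
```

The second message carries the same intent as the first but contains none of the listed phrases, so a purely lexical rule lets it through.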

A fine-tuned LLM, however, operates on something closer to semantic understanding. Because it has been trained on vast amounts of language, it can often pick up on intent, context, and paraphrasing. In practice, this means:

- Identifying Code Words: It can spot when employees subtly shift jargon or use coded language to discuss proprietary information, even if those words have never been explicitly listed as forbidden.

- Analyzing Flow and Pattern: It can track the natural flow of conversation across emails and Slack, looking for anomalous activity—like an engineer suddenly discussing customer pricing structures with a marketing colleague outside of standard channels.

- Distinguishing Noise from Signal: For technical audiences, imagine training a model not just on what proprietary data *looks like*, but on the *style* of communication used by the researchers who normally handle that data. It becomes an expert internal linguist dedicated solely to security.
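The "flow and pattern" idea above can be sketched without any model at all, as a baseline of who normally discusses what with whom. This is a simplified illustration under stated assumptions: the `Message` type, the team names, and the `topic` label are all hypothetical, and the topic is assumed to come from an upstream classifier such as an LLM.

```python
from collections import Counter
from typing import Iterable, NamedTuple


class Message(NamedTuple):
    sender_team: str
    recipient_team: str
    topic: str  # assumed to be assigned upstream, e.g. by an LLM classifier


def build_baseline(history: Iterable[Message]) -> Counter:
    """Count how often each (sender team, recipient team, topic) triple occurred."""
    return Counter(history)


def is_anomalous(msg: Message, baseline: Counter, min_seen: int = 1) -> bool:
    """Flag a message whose team/topic combination was seen fewer than min_seen times."""
    return baseline[msg] < min_seen


history = (
    [Message("engineering", "engineering", "model training")] * 5
    + [Message("sales", "marketing", "customer pricing")] * 3
)
baseline = build_baseline(history)

routine = Message("sales", "marketing", "customer pricing")
unusual = Message("engineering", "marketing", "customer pricing")

print(is_anomalous(routine, baseline))  # False: this pairing is common
print(is_anomalous(unusual, baseline))  # True: never seen in the history
```

A flag here only means "this pairing is new", which is why it should feed a human review step rather than stand as evidence of a leak.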

This appears to be the direction much of the security industry is moving: toward **LLM-based internal data leak detection systems**. Many security vendors are integrating Large Language Models (LLMs) into their security stacks to move beyond rigid rules toward proactive, contextual threat hunting.

The Context: AI Labs Under Siege

The reason this is happening at OpenAI first is straightforward: the value of its IP is astronomical. A leak of the parameters of GPT-5, or of the secret methodology behind a new alignment technique, could be worth billions and hand a significant competitive advantage to a rival. For these foundational labs, the risk profile is unique.

This reinforces a broader trend: **Security Becomes Inseparable from Product.** For traditional manufacturing, security focused on the factory floor or physical servers. For the AI industry, the battlefield is the communication channel. Protecting the research process is now just as critical as securing the cloud infrastructure.

The Ethical Minefield: Privacy vs. Protection

While the need for robust security in high-stakes environments is undeniable, the implementation of AI surveillance ignites severe ethical and practical concerns. This development forces a confrontation between corporate necessity and fundamental workplace expectations.

The Chilling Effect on Innovation

For technical and creative employees—the very people generating the IP—the knowledge that an AI is perpetually analyzing every casual Slack message can be devastating. Innovation thrives on open, informal communication, brainstorming, and sometimes, venting frustration or questioning internal processes. If employees fear that an algorithm, without human nuance, might misinterpret a joking comment or a half-formed idea as a policy violation, they will self-censor.

This **"trust erosion"** can drive away top talent. High-performing engineers and researchers often prioritize environments where they feel respected and autonomous. Overly invasive monitoring, however sophisticated the underlying AI, risks creating a toxic environment. The same concern runs through the broader debate over the **ethical implications of AI used for internal surveillance**.

The Fairness and Bias Question

Who audits the AI watchdog? If the specialized ChatGPT flags an employee for potential leakage, what is the appeal process? LLMs are powerful, but they are not infallible; they can inherit or amplify biases present in their training data. If the model learns that communication patterns common among certain teams or demographic groups are statistically correlated with past leaks (even if the correlation is spurious), it could lead to disproportionate targeting.
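One simple way an auditor might begin to check for disproportionate targeting is to compare flag rates across groups. The sketch below uses made-up records and hypothetical helper names (`flag_rates`, `disparity_ratio`); it is a starting point for review, not a complete fairness methodology.

```python
from collections import defaultdict


def flag_rates(records):
    """records: iterable of (group, was_flagged) pairs -> {group: flag rate}."""
    totals = defaultdict(int)
    flagged = defaultdict(int)
    for group, was_flagged in records:
        totals[group] += 1
        flagged[group] += int(was_flagged)
    return {g: flagged[g] / totals[g] for g in totals}


def disparity_ratio(rates):
    """Highest flag rate divided by lowest; 1.0 means perfectly even."""
    lowest = min(rates.values())
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was flagged, so there is no disparity to measure
    return float("inf") if lowest == 0 else highest / lowest


# Illustrative, fabricated-for-the-example data: 100 messages per team.
records = (
    [("research", True)] * 2 + [("research", False)] * 98
    + [("support", True)] * 8 + [("support", False)] * 92
)

rates = flag_rates(records)
print(rates)
print(disparity_ratio(rates))
```

A high ratio does not prove bias on its own, since teams may legitimately differ in how much sensitive material they handle. It does indicate where human reviewers should look first, and what threshold triggers that review is a policy choice.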

This is a critical concern for HR and legal professionals responsible for **AI-powered employee monitoring compliance**. Establishing a clear, transparent, and auditable framework for AI-driven monitoring is essential to avoid lawsuits related to unfair termination or invasion of privacy.

Practical Implications for Businesses Everywhere

While OpenAI is operating at the extreme edge of data value, its actions set a precedent that is likely to cascade down to medium and large enterprises. This is not just a problem for AI labs; it is a problem for pharma, defense contractors, high finance, and any sector dealing with significant competitive secrets.

1. The Inevitability of Contextual DLP

The industry is moving toward **"enterprise DLP tools using generative AI."** Businesses must start auditing their current security tools. If they are still relying only on static keyword blocking, they are vulnerable to sophisticated insider threats. The actionable insight here is that security budgets must shift from simple detection to **contextual analysis**, leveraging AI to understand *what* is being said and *why*.

2. Re-evaluating Acceptable Use Policies

Companies need immediate, transparent updates to their Acceptable Use Policies (AUPs). Employees must know, in clear terms, *what* is being monitored, *how* the AI makes decisions, and *what* happens after an alert is triggered. Vagueness is the enemy of trust.

For a technology team, this means developing technical guardrails. For HR, it means defining the human intervention points. For employees, it means understanding the new social contract of work.

3. Investing in Trust Infrastructure

The most advanced security infrastructure in the world cannot compensate for a complete breakdown of internal trust. The counter-strategy to surveillance must be empowerment and transparency. This means:

- Data Segmentation: Minimizing the number of employees who have access to the most sensitive information in the first place.

- Positive Reinforcement: Creating cultures where employees feel valued enough not to leak, rather than monitored enough to stay silent.

- Open Auditing: If an internal AI is being used, external or internal teams must regularly audit its decisions to ensure it is not biased or overreaching.

The Road Ahead: Specialized AI and the New Labor Landscape

The deployment of a custom ChatGPT to find leakers solidifies a crucial realization: **The next wave of AI application isn't just external product development; it's internal optimization and enforcement.** We are witnessing the creation of specialized, highly potent LLMs for hyper-specific corporate roles—the AI legal counsel, the AI compliance officer, and now, the AI internal security agent.

For those in technology and development, this underscores the necessity of engaging with security from the earliest stages of model design. For business leaders, it is a call to action: the tools for surveillance are evolving faster than the frameworks for governance. The adoption of AI surveillance technology—while potentially necessary for protecting revolutionary IP—must be accompanied by robust, human-centered governance.

The future of work in high-stakes industries will be defined by how effectively leaders can wield this new digital watchdog without destroying the very innovation culture that fuels their success. The challenge is not stopping leaks; the challenge is maintaining an environment where people feel compelled to stay and build, rather than compelled to leave and expose.