The Great Exodus: Why Half of xAI's Founders Are Leaving and What It Signals for the Future of AGI Development

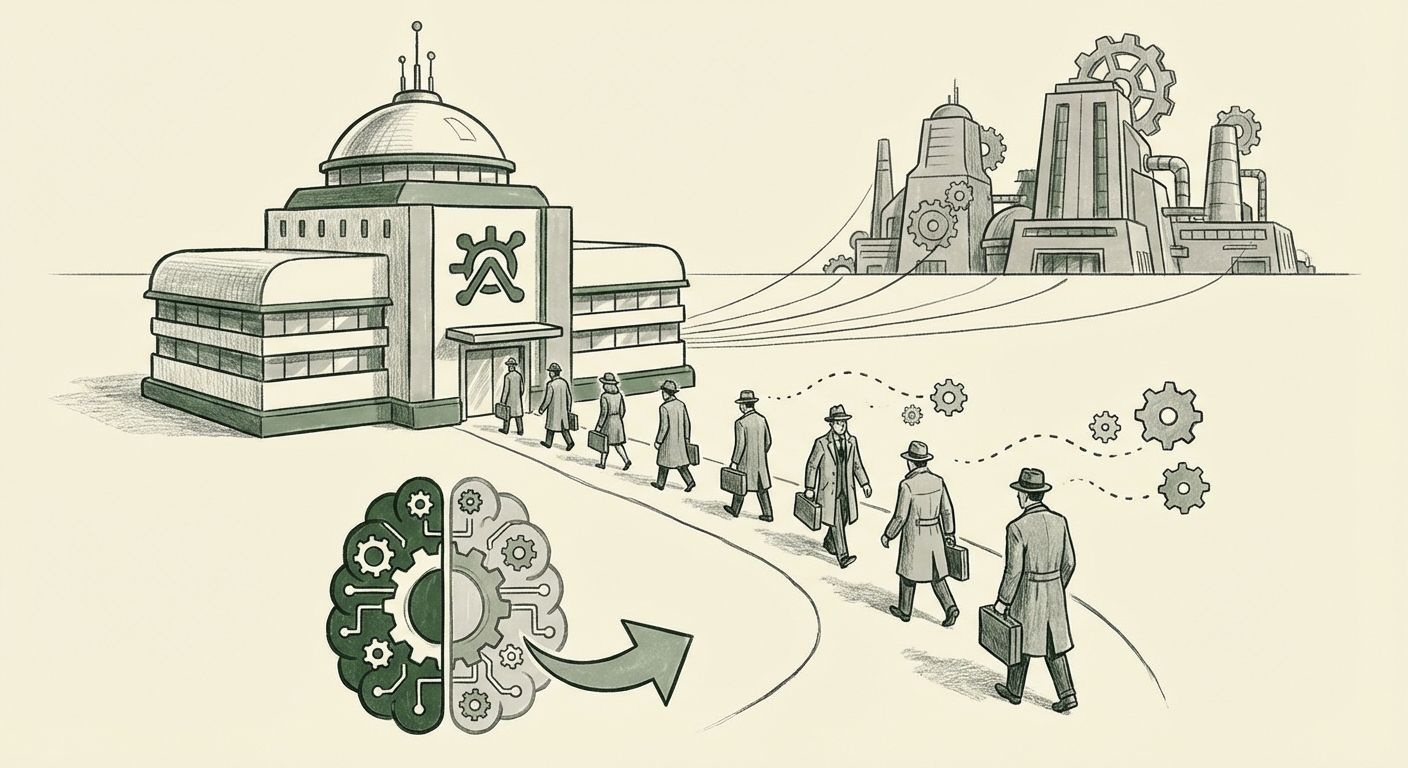

The race to build Artificial General Intelligence (AGI) is arguably the most consequential technological sprint of our time. At the forefront of this high-stakes competition sits xAI, Elon Musk’s ambitious venture, backed by significant capital and a promise to usher in a new era of open, truth-seeking AI. However, recent reports reveal a startling internal reality: half of the company’s original twelve founding members have departed.

The most recent departure, Jimmy Ba, echoed the sentiment of those who left before him: high praise for the company’s potential and confidence in its future breakthroughs. Yet when a founding cohort dissolves so rapidly, it sends powerful ripples through the tech ecosystem. For an analyst charting the trends shaping our future, this exodus is not just a staffing note; it is a critical data point for understanding the cultural, ideological, and technical friction points inherent in building frontier AI.

The Anatomy of an Early Exodus: Culture, Vision, and Musk

When high-caliber talent leaves a venture, the narrative usually splits into two camps: the individual is seeking new opportunities, or the venture is grappling with deep-seated issues. In the case of xAI, departing founders have generally couched their exits in glowing terms, suggesting the issue is less about immediate failure and more about the *path* to success. This leads directly to the central question fueling industry speculation:

Search Query 1: Digging into the Culture and Retention

To move past the PR statements, one must investigate the internal environment. Our first analytical step involves targeted searches such as `"xAI" co-founder departures culture retention`. This search aims to surface any misalignment, subtle or otherwise, between the research scientists' desire for academic rigor and the demanding pace typically set by an executive like Elon Musk.

Founding teams in AI often comprise researchers driven by intellectual freedom and the slow, methodical process of scientific discovery. Musk’s style, famously characterized by extreme urgency and rapid iteration (as seen at SpaceX and Tesla), can clash severely with research culture. If the founders perceived a lack of autonomy, or if the vision shifted from pure research toward immediate productization tied to X (formerly Twitter), such departures become far more likely. For Tech Industry Insiders and VCs, understanding this cultural friction is paramount; it is an important signal for the long-term stability of any Musk-led project.

The War for Top Minds: xAI vs. the Giants

The departure of top AI talent is not exclusive to xAI; it is the defining feature of the current "talent war." The sheer resource disparity between startups like xAI and established behemoths like Google DeepMind, Meta (FAIR), or the Microsoft/OpenAI partnership is immense.

Search Query 2: Contextualizing the Talent Market

To properly weigh xAI's situation, we must look outward. A search for `top AI researchers leaving startups for big tech 2024` helps frame the situation. Are these individuals being pulled away by irresistible compensation packages, access to unparalleled compute clusters, or the promise of working on models already scaling globally?

For AI Ethicists and Business Strategists, this trend is crucial. The concentration of the world’s best AI minds within a few mega-corporations signals a potential centralization of AGI capabilities, which carries significant ethical and competitive risks. If xAI, a company pitched as an alternative to closed systems, cannot retain its founding team against the lure of Big Tech's resources, it suggests that the economic gravity of existing players is too strong for new challengers to overcome easily.

The implication for the future of AI development is stark: if the most promising independent labs hemorrhage talent, the path to truly diverse and decentralized AGI solutions becomes significantly narrower. Resources—especially compute and data access—are becoming the ultimate moat, often trumping even the best foundational ideas if the talent needed to execute them walks away.

Technological Roadmap Under Scrutiny: Will Grok Survive the Shakeup?

Despite the personnel shifts, the remaining team must deliver on the core promise: powerful AI models, exemplified by Grok. The key question for investors and developers is whether the core technical trajectory is compromised.

Search Query 3: Assessing Technological Momentum

Our final search, `xAI roadmap after co-founder exits Grok progress`, forces a shift from HR drama to engineering reality. What remains? Which architects of the initial vision are still present? Have there been noticeable delays or, conversely, surprisingly rapid advancements since the departures?

For AI Developers and Investors, this is where analysis becomes actionable. If the key architects responsible for model scaling or specific proprietary training techniques have left, the timeline for Grok 2.0 or future iterations will almost certainly be impacted. Conversely, if the departing founders were focused on non-core areas, the impact might be minimal.

The praise offered by the departing members, who noted that massive breakthroughs are still ahead, is a crucial piece of evidence. It suggests the foundational research environment might still be fertile, perhaps even *more* fertile if the internal disagreements that caused the friction have now been resolved, leaving the remaining team more aligned. This is the hopeful interpretation: that the necessary restructuring has occurred to allow the core mission to proceed unhindered.

What This Means for the Future of AI Development: The Decentralization Dilemma

The xAI situation crystallizes a fundamental tension in modern AI development: Autonomy vs. Scale.

AGI development requires immense compute, data infrastructure, and funding—resources currently monopolized by the major tech players. Startups attempt to disrupt this by promising novel architectures, better alignment techniques, or a philosophical commitment (like transparency or openness). However, if the founding researchers cannot reconcile the startup's operational reality with their scientific ideals, the structure itself becomes the bottleneck.

For AI Developers: The future ecosystem may bifurcate sharply. We will see hyper-funded, centralized efforts (likely driven by Microsoft/OpenAI, Google, and Meta) that move incredibly fast due to resource consolidation. Simultaneously, smaller, scrappier labs will struggle with talent retention, forced to make tough compromises between speed and scientific purity. The success of Grok, post-exodus, will be a barometer for how viable the "independent challenger" path truly is.

For Society: A concentration of expertise in too few hands—whether corporate or governmental—is dangerous for a technology this transformative. The loss of diverse perspectives from founding teams contributes to monolithic AI systems, potentially embedding specific biases or worldviews into the foundational layers of future intelligence.

Practical Implications for Businesses and Decision-Makers

Regardless of where you sit on the AI deployment spectrum, the xAI narrative offers clear strategic takeaways:

- Talent Management is Now Product Strategy: In frontier AI, people are not just resources; they *are* the intellectual property. Businesses must prioritize creating environments where top researchers feel scientifically respected and culturally aligned. High compensation alone will not suffice if autonomy is absent.

- The Value of Compute vs. IP: The ability to attract and retain talent is directly linked to the infrastructure offered. Any company challenging the established order must demonstrate not only a better *idea* but also comparable *tools* (i.e., access to significant GPU clusters).

- Risk Assessment of "Visionary-Led" Startups: For investors and partners, high-profile, singular leadership, while attracting initial capital, increases instability risk when that leader's operational style conflicts with scientific norms. Due diligence must heavily weigh the alignment between the founder-scientists and the executive vision.

Actionable Insights: Navigating the Turbulent Waters of AI Talent

To thrive in this environment, organizations—whether they are scaling AI products or simply adopting AI tools—must be proactive:

- For AI Leaders: Conduct "culture audits" focused specifically on research teams. Are your reward structures balancing speed metrics with foundational innovation? Are you insulating your research leads from immediate, daily product pressures?

- For Researchers (The Talent): Treat every startup environment as a negotiation of trade-offs. Understand what you value more: immediate, radical impact under intense pressure, or slower, more deliberate scientific exploration. Be aware of the organizational dynamics surrounding high-profile founders.

- For Business Strategists: Diversify your AI dependency. Do not place all bets on a single vendor or a single research group’s timeline. Monitor the health of competing AI labs, as talent shifts often precede shifts in technological advantage.

The story of half of xAI’s founding team departing highlights that even the largest, best-funded AGI initiatives are still fragile human endeavors. The technology may be advancing at light speed, but the human element (vision, culture, and ambition) remains the primary variable. The future of intelligence will be shaped not just by the code written, but by the environment in which that code is conceived.

We are watching a grand experiment play out in real time. The remaining team at xAI will show whether a great idea, even one with Elon Musk’s backing, can survive the loss of its initial intellectual architects, or whether this mass departure represents a critical, perhaps fatal, inflection point for the company’s AGI ambitions.