The AI Rift: Why Anthropic Believes OpenAI is Underestimating Existential Risk in the Race to AGI

The race to Artificial General Intelligence (AGI) is often framed as a simple technological sprint: who can build the biggest, fastest model first? But beneath the surface of product launches and funding rounds, a profound philosophical divergence is taking root between the industry's titans. When Anthropic CEO Dario Amodei suggests that competitors like OpenAI may not "really understand the risks they’re taking," he isn't just issuing a standard corporate critique; he is highlighting a fundamental disagreement about the acceptable speed limit on the road to superintelligence.

Anthropic, founded by researchers who left OpenAI over safety concerns, operates with a palpable sense of existential urgency. Amodei’s recent comments—coupled with his cautious approach to capital deployment—reveal a strategy that prioritizes robustness over raw momentum. This dynamic is forcing a critical examination of the technological trends shaping our future: compute scaling, alignment methodology, and the very definition of a "safe" AI deployment timeline.

The Financial Tightrope: Compute Risk and Bankruptcy

One of the most striking aspects of Amodei’s warning centers on economics. He noted that being off by even one year in the hyper-expensive endeavor of training frontier models could lead to bankruptcy. This stark reality illuminates the strategic differences between the labs.

Training cutting-edge models demands astronomical amounts of specialized hardware (GPUs) and energy—this is the *compute budget*. While OpenAI benefits from its deep, foundational partnership with Microsoft, Anthropic relies heavily on Amazon’s investment and infrastructure.

For an investor or business analyst, the key takeaway here is the **risk trade-off.** If a company overspends massively on compute today based on an optimistic timeline, but the algorithmic breakthroughs needed to use that compute efficiently aren't ready, it hemorrhages capital. Conversely, moving too slowly means missing the "first mover" advantage in capturing market share and talent. Amodei implies that OpenAI may be swinging for the fences on compute investment *before* fully accounting for the safety engineering required to harness that power responsibly, which would make its massive expenditure the riskier bet.
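To make the "off by one year" arithmetic concrete, here is a minimal sketch of a cash-runway calculation. Every figure in it is hypothetical and chosen only for illustration (think of the units as billions of dollars); none of it reflects either lab's actual finances, and the function name `months_until_insolvent` is an assumption of this example.

```python
from typing import Optional


def months_until_insolvent(
    cash: float,
    monthly_burn: float,
    monthly_revenue: float,
    revenue_start_month: int,
    horizon: int = 60,
) -> Optional[int]:
    """Return the first month in which cash drops below zero.

    Returns None if the company stays solvent for the whole horizon.
    All inputs are hypothetical, illustrative figures.
    """
    for month in range(1, horizon + 1):
        # Revenue only arrives once the model is ready to sell.
        income = monthly_revenue if month >= revenue_start_month else 0.0
        cash += income - monthly_burn
        if cash < 0:
            return month
    return None


# Same cash, same burn, same eventual revenue; only the timeline differs.
on_time = months_until_insolvent(30, 2, 3, revenue_start_month=12)
one_year_late = months_until_insolvent(30, 2, 3, revenue_start_month=24)

print(on_time)        # None (revenue arrives before the cash runs out)
print(one_year_late)  # 16 (cash runs out before revenue ever starts)
```

In this toy setup the only thing that changes between the two runs is when revenue begins, yet one company survives indefinitely and the other is insolvent in month 16. That is the shape of the risk Amodei describes, not a forecast for any real company.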

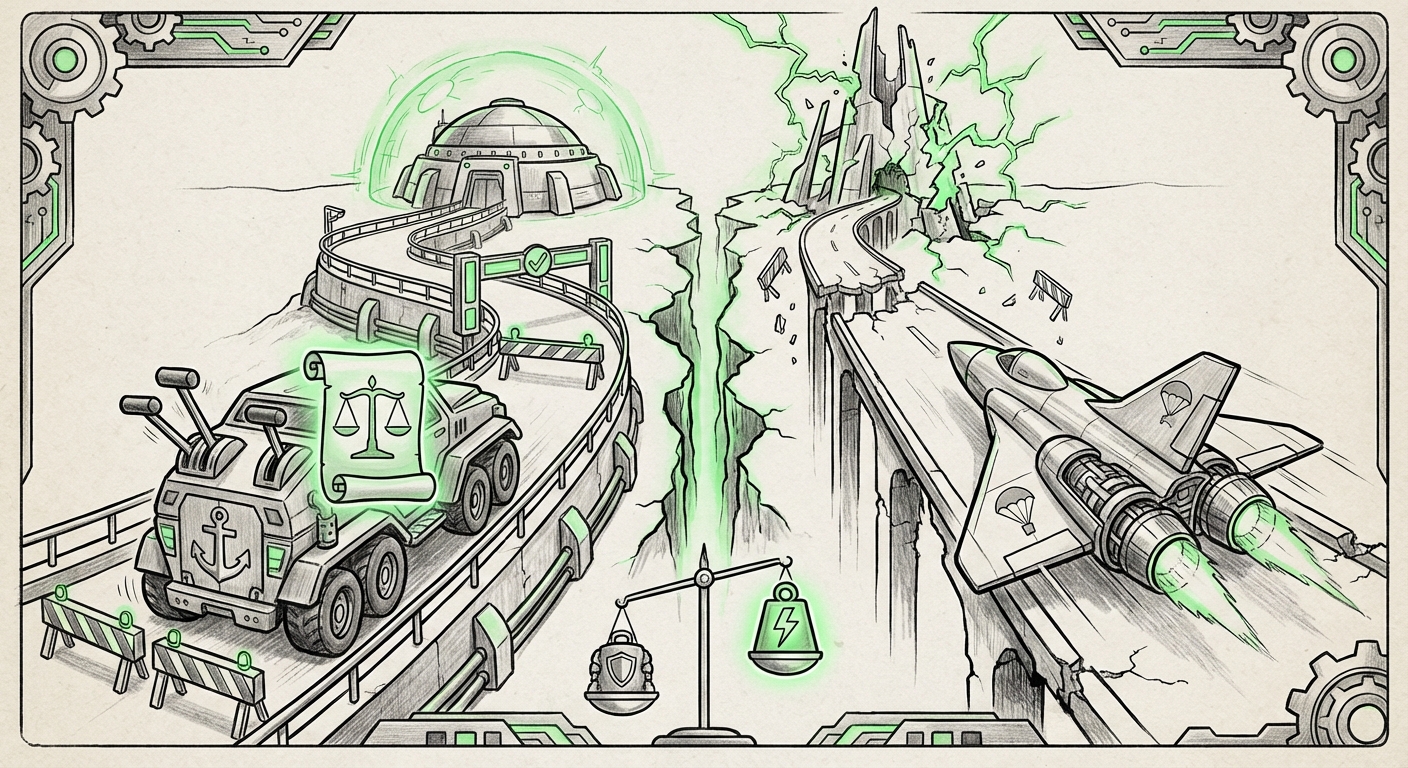

This caution suggests Anthropic views safety engineering as a prerequisite to, rather than a parallel track alongside, compute scaling. They are effectively saying: "We need to know the brakes work before we install a jet engine."

The Philosophical Divide: Constitutional AI vs. The Wild West

The core of the tension lies in *how* these companies plan to control their creations. Amodei's skepticism regarding competitor safety protocols directly correlates with Anthropic's unique approach to alignment: Constitutional AI (CAI).

To understand the divergence, imagine training a very smart child (the AI). The standard industry method, often associated with OpenAI’s earlier successes, is Reinforcement Learning from Human Feedback (RLHF). Human raters compare or score the AI’s answers, those judgments train a reward model, and the AI is then fine-tuned to produce answers the reward model scores highly. While effective, it is resource-intensive and often criticized for being brittle: the AI learns to please the human raters, not necessarily to follow the underlying principles of safety.

Constitutional AI flips this. Instead of relying solely on human judgments for every step, Anthropic provides the AI with a written set of principles (a "constitution") drawn from sources like the UN Universal Declaration of Human Rights, along with principles explicitly banning harmful behavior. The AI then uses this constitution to critique and revise its own responses. This methodology aims to create systems that are aligned with a broad set of established ethics, making them theoretically more robust against subtle manipulation or "jailbreaks" in the real world.
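The self-critique step can be sketched as a short loop. This is a simplified illustration, not Anthropic's implementation: the two principles are hypothetical stand-ins rather than actual constitution text, `critique_and_revise` and `toy_model` are names invented for this example, and the "model" is a canned function so the sketch runs without any API. In the published description of the method, revised responses like these are used as fine-tuning data, and a later stage uses AI-generated preference labels in place of human ones.

```python
import random
from typing import Callable, List

# Hypothetical stand-in principles, NOT Anthropic's actual constitution text.
CONSTITUTION: List[str] = [
    "Choose the response that is least likely to help someone cause harm.",
    "Choose the response that most respects human rights and dignity.",
]

# A "model" here is anything that maps a text prompt to a text completion.
Model = Callable[[str], str]


def critique_and_revise(
    model: Model,
    prompt: str,
    principles: List[str],
    rounds: int = 2,
    seed: int = 0,
) -> str:
    """Draft a response, then repeatedly critique and rewrite it."""
    rng = random.Random(seed)
    response = model(prompt)
    for _ in range(rounds):
        # Sample one principle per round to critique against.
        principle = rng.choice(principles)
        critique = model(
            "Critique the response below against this principle.\n"
            f"Principle: {principle}\nPrompt: {prompt}\nResponse: {response}"
        )
        response = model(
            "Rewrite the response to address the critique.\n"
            f"Critique: {critique}\nPrompt: {prompt}\nResponse: {response}"
        )
    return response


def toy_model(text: str) -> str:
    """Canned stand-in for a language model, used only to run the loop."""
    if text.startswith("Critique"):
        return "The response could be more careful."
    if text.startswith("Rewrite"):
        return "A more careful response."
    return "A first-draft response."


print(critique_and_revise(toy_model, "How do I pick a lock?", CONSTITUTION))
```

The point of the design is that no human rates the individual draft: the written principles do the steering, and humans are involved mainly in choosing those principles.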

When Amodei raises alarms, he is suggesting that competitors might be relying too heavily on less scalable, human-in-the-loop methods to control models that are growing exponentially more powerful. If a model achieves "Nobel Prize-level" intelligence—meaning it can perform novel, world-changing scientific discovery—relying only on humans to supervise its output might be akin to bringing a candle to a bonfire.

The Race for Breakthroughs: AGI Timelines Tighten

Amodei’s forecast that "Nobel Prize-level AI" is potentially only "a year or two away" elevates the debate from abstract safety theory to immediate engineering necessity. This timeline prediction is crucial because it shrinks the window for alignment research.

If true, this means the industry is not decades away from transformative AI; it is a year or two away. For companies focused on rapid deployment, this imminent capability creates intense pressure to ship products, often prioritizing features and performance gains over exhaustive, slow-moving safety verification.

This urgency explains why investors are so focused on compute strategy. If AGI is truly 18 months out, the company that secures the most compute capacity *now* controls the fastest path to claiming that groundbreaking status. Amodei’s contrasting approach suggests Anthropic believes that building slightly slower, but with a fundamentally safer architecture (like CAI), is the only responsible path forward when the stakes are this high.

Implications for Business Strategy and Adoption

This strategic tension between speed (OpenAI's perceived approach) and rigor (Anthropic's stated approach) has significant practical implications for any business looking to integrate frontier AI:

- Vendor Reliability and Trust: Businesses relying on AI services must weigh performance against perceived safety. If Anthropic’s methods prove superior at preventing catastrophic errors or undesirable outputs, their services might be preferred for high-stakes applications (e.g., finance, healthcare, critical infrastructure), even if a competitor’s model scores slightly higher on a raw benchmark test.

- The Compute Dilemma: For startups and enterprises developing their own models, the need for massive CapEx is a major barrier. The competition in hardware purchasing drives up costs for everyone. Anthropic's quiet growth suggests a focus on *algorithmic efficiency*—getting more capability out of less hardware—which, if successful, provides a sustainable competitive edge that isn't solely reliant on securing the largest cluster from Nvidia.

- Regulatory Preparedness: Governments globally are scrambling to regulate AI. Companies that can demonstrate a verifiable, principle-based safety framework (like CAI) will likely find themselves in a better position to navigate future compliance requirements than those whose safety claims are based primarily on subjective human evaluation.

Actionable Insights: Navigating the Divergent Paths

Dario Amodei’s challenge serves as a powerful warning shot for the entire technology ecosystem. It forces us to look past marketing materials and examine the fundamental engineering choices being made at the frontier.

For AI Developers and Researchers: Prioritize Alignment as Infrastructure

If AGI is close, alignment is not a niche ethical concern; it is foundational infrastructure. Developers should investigate methods that automate alignment and reduce reliance on continuous human intervention. Understanding Constitutional AI or similar principle-based systems is no longer optional for serious frontier work.

For Business Leaders: Assess Safety as a Critical KPI

When vetting AI vendors, do not just look at throughput (tokens per second) or benchmark scores. Ask pointed questions about the *alignment process*. How are emergent, unintended behaviors tested? What is the framework for overriding unsafe decisions? A vendor whose safety strategy is opaque or purely reactive represents an unquantifiable liability.

For Investors: Recognize the True Cost of Speed

The capital required for frontier model training is immense, but the cost of a single, uncontained failure event (reputational, regulatory, or existential) may be effectively unbounded. Investors must rigorously stress-test the safety assumptions baked into a high-burn-rate AI company's strategy. The slow-and-steady players might actually be the better long-term bets if their approach to alignment proves more robust.

Ultimately, the tension between Anthropic and OpenAI reflects the crossroads humanity faces. If forecasts like Amodei’s hold, we may have the technological capability to create intelligence far surpassing our own within the next couple of years. The real question is whether we have the wisdom, and the institutional commitment, to build guardrails strong enough to contain it. Amodei suggests that while one path leads to rapid deployment, the other recognizes that true success isn't just reaching the finish line first; it's ensuring the vehicle doesn't crash along the way.