The Compute Cold War: Why Anthropic is Building a 10GW Infrastructure Empire and What It Means for AI’s Future

In the world of artificial intelligence, compute—the sheer processing power needed to train and run massive models—is the new oil. For years, AI companies operated like digital nomads, renting space on the supercomputers owned by Google, Amazon (AWS), and Microsoft (Azure). This was efficient, fast, and flexible. But as frontier models like Claude and GPT become exponentially more complex, the terms of engagement are changing.

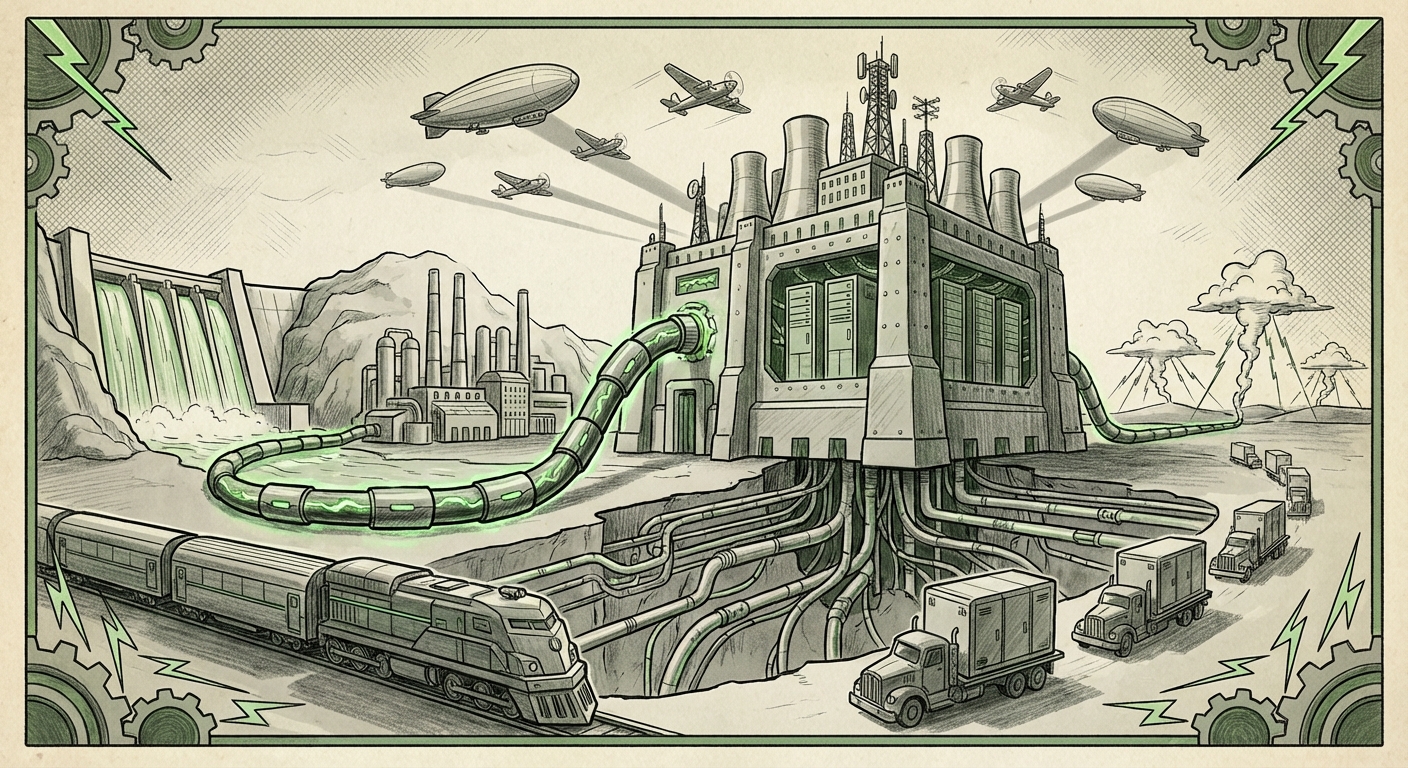

The recent news that Anthropic, a leading developer of frontier AI, is aggressively planning to build its own data center capacity—potentially reaching a staggering **10 gigawatts (GW)** of power capacity—signals a monumental shift. This isn't just about buying more servers; it’s about taking direct control of the entire physical foundation of AI. It’s a declaration that for the elite few pushing the boundaries of intelligence, relying on external cloud providers is no longer tenable. Anthropic is going vertical, and the technology world must pay attention.

The Scale of Ambition: Why 10 Gigawatts Matters

To understand the magnitude of Anthropic’s move, we must first translate 10 GW into relatable terms. A gigawatt is enough power for roughly 750,000 typical US homes running simultaneously, so 10 GW corresponds to roughly 7.5 million homes. Anthropic is aiming to construct the digital equivalent of a small, dedicated power utility, just for training and running its AI models.
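As a rough check on that scale, the arithmetic can be sketched in a few lines of Python. The homes-per-gigawatt figure is the article's own approximation, and the default of round-the-clock full-load operation is purely an illustrative assumption that overstates real utilization; the function names are hypothetical.

```python
# Back-of-the-envelope scale check for a 10 GW build-out.
HOMES_PER_GW = 750_000      # the article's rough figure, not a measured value
HOURS_PER_YEAR = 24 * 365   # 8,760 hours, ignoring leap years


def homes_equivalent(capacity_gw: float) -> float:
    """Number of typical US homes the capacity could power at once."""
    return capacity_gw * HOMES_PER_GW


def annual_energy_twh(capacity_gw: float, utilization: float = 1.0) -> float:
    """Energy drawn over one year, in terawatt-hours, at a given utilization."""
    return capacity_gw * HOURS_PER_YEAR * utilization / 1_000  # GWh -> TWh


if __name__ == "__main__":
    print(f"{homes_equivalent(10):,.0f} homes")
    print(f"{annual_energy_twh(10):.1f} TWh per year at full load")
```

Passing a lower `utilization` (for example 0.7) gives a sense of how sensitive the annual energy figure is to how hard the facilities are actually run.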

This level of investment confirms one of the technology sector’s worst-kept secrets: the cost curve for frontier AI development is vertical, not just steep.

Contextualizing the Compute Demand

The sheer appetite for processing power driven by models with trillions of parameters is unprecedented. Industry projections of AI infrastructure spending for 2024 and 2025 anticipate investments soaring into the hundreds of billions of dollars globally, driven primarily by GPU and specialized chip procurement.

- Training vs. Inference: Training a model like Claude 3 Opus requires months on tens of thousands of high-end accelerators. Once trained, running the model for millions of users (inference) still demands massive, always-on power. Anthropic needs its own infrastructure to manage both phases economically and reliably.

- Cost Control: Cloud compute is expensive because hyperscalers must amortize the cost of building, cooling, and maintaining specialized hardware across all their customers. By building its own, Anthropic aims to dramatically reduce its operational cost per computation, freeing up capital for more research.

This is where the expertise comes in. Anthropic isn't just booking real estate; they are hiring the masters of the game. The recruitment of ex-Google data center veterans is not a coincidence. These are the individuals who have managed the physical realities of scaling Google's global computing footprint—a skill set far scarcer than software engineering talent. They understand power density, thermal management at scale, and the logistics of equipping facilities for specialized AI hardware.

The Shifting Balance of Power: Challenging the Hyperscalers

Historically, the relationship between cloud providers and AI innovators was mutually beneficial: hyperscalers provided the racks and GPUs, and the AI companies provided massive, high-margin revenue streams. Anthropic’s infrastructure push directly challenges this ecosystem.

The Strategic Tension

When a company is both an investor (as Google is in Anthropic) and a primary infrastructure provider, strategic tension is inevitable. By building its own "empire," Anthropic gains:

- Autonomy: Freedom from cloud vendor roadmaps, pricing hikes, and capacity limitations.

- Optimization: The ability to design hardware layouts and cooling systems perfectly tuned for their specific chips (whether NVIDIA, custom ASICs, or future silicon), achieving peak efficiency that a multi-tenant cloud environment cannot match.

This necessitates a close examination of how cloud providers are reacting to independent AI infrastructure builds. Hyperscalers simply cannot afford to lose their largest, most forward-looking customers. We are seeing cloud providers accelerate their own differentiation:

- Custom Silicon Push: AWS deepening its investment in chips like Trainium and Inferentia, and Google leveraging its custom TPUs (Tensor Processing Units), are defensive measures designed to make their proprietary hardware so compelling that the incentive to leave diminishes.

- Sovereign AI & Partnerships: Cloud providers are pivoting to offer highly secure, localized infrastructure solutions for governments and large enterprises, segmenting the market where Anthropic may not want to play immediately.

For the business world, this signals that owning the "last mile" of compute (the physical building and power) is becoming the ultimate competitive moat, potentially creating a two-tiered AI landscape: those who rent the highest-end compute, and those who own it.

The Practical Realities: Talent, Energy, and Execution Risk

While the ambition is clear, the path to operating a 10 GW infrastructure fleet is fraught with complexity. This isn't just about writing a big check; it's about mastering physical engineering and geopolitical risk.

The Talent Bottleneck Deepens

The emphasis on hiring data center leadership from Google underscores that the critical shortage isn't just specialized AI chips, but specialized people who know how to run those chips 24/7 without failure. Scaling compute is an infrastructure game, not just a software game. Companies must secure land rights, negotiate massive power contracts, and design facilities that manage extreme heat density. The move validates the thesis that the "AI Wars" are being fought on the factory floor and in utility company boardrooms as much as in coding environments.

The Energy Tsunami

The most profound implication lies in energy demand. The energy requirements of 10 GW of AI data centers pose a significant societal challenge. If one leading AI lab demands power equivalent to several major cities, the immediate questions become:

- Grid Stress: Where will this power come from, and how quickly can utilities build the necessary transmission infrastructure? Regions that aggressively court AI development (like parts of the US South or Ireland) will face rapid grid saturation.

- Sustainability Commitments: Companies like Anthropic must secure massive, long-term renewable energy contracts (PPAs) to maintain their stated ESG commitments. Building a sustainable AI future requires integrating directly with renewable generation, a complex logistical undertaking far beyond standard data center procurement.

For regulators and energy analysts, Anthropic's plan is a flashing warning light. AI compute is about to become a primary driver of national and regional energy policy.

Future Implications: Fragmentation and Specialization

Anthropic’s vertical integration strategy sets a precedent that will ripple across the entire technology stack. What does this mean for the next five years of AI?

1. The Fragmentation of the Cloud

We will see a clear split. The vast majority of businesses, startups, and researchers will continue to rely on the hyperscalers for accessibility, scalability, and cost-effectiveness for smaller or specialized models. However, the absolute frontier—the next generation of AGI development—will likely pull away into bespoke, self-owned infrastructure.

This could lead to a world where the most powerful models (developed by a handful of well-funded labs) run on entirely different physical infrastructure than the models powering your local enterprise applications.

2. Increased Focus on Energy Efficiency (Hardware Wars)

When power becomes the single greatest ongoing operating cost, the focus shifts intensely toward efficiency. We can expect an acceleration in the development of lower-power inference chips and exotic cooling solutions (like liquid immersion cooling) that can maximize computation per kilowatt. The design mandate changes from "fastest possible training" to "most efficient training over the lifespan of the data center."
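To make "computation per kilowatt" a little more concrete, the sketch below shows how facility overhead eats into the power that actually reaches the chips. It uses PUE (power usage effectiveness, defined as total facility power divided by IT equipment power), a standard data center metric that the article itself does not mention. Both PUE values are hypothetical placeholders chosen only to show the effect, not figures for any real facility, and the function name is illustrative.

```python
# Illustrative only: how facility overhead affects power available to chips.
def it_power_gw(facility_gw: float, pue: float) -> float:
    """Power reaching IT equipment, given total facility power and PUE.

    PUE = total facility power / IT equipment power, so it is never below 1.0.
    """
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return facility_gw / pue


if __name__ == "__main__":
    # Hypothetical PUE values, assumed purely for illustration.
    scenarios = [("higher-overhead cooling (assumed)", 1.5),
                 ("lower-overhead cooling (assumed)", 1.1)]
    for label, pue in scenarios:
        print(f"{label}: {it_power_gw(10, pue):.2f} GW available for compute")
```

Under these assumed numbers, the same 10 GW grid connection delivers noticeably more power to the accelerators when cooling overhead is lower, which is why efficiency gains at this scale matter as much as raw capacity.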

3. The Consolidation of Capital

Building a 10 GW footprint requires *hundreds of billions* in capital, a figure only achievable through deep, persistent financial backing (like the relationship with Google). This move acts as a powerful moat, making it exponentially harder for new AI entrants to compete at the frontier level. The capital required for infrastructure now rivals the capital required for talent acquisition, solidifying the dominance of the already well-capitalized players.

Actionable Insights for the Technology Ecosystem

This infrastructure race offers clear takeaways for different stakeholders:

For Enterprise Leaders:

Actionable Insight: Diversify Compute Strategy. Do not anchor your long-term AI roadmap exclusively to one cloud provider. Recognize that capacity constraints are real. Start experimenting with hybrid approaches today, exploring specialized hardware vendors or co-locating smaller, dedicated AI clusters if your internal workloads justify the expense and operational complexity.

For Infrastructure & Energy Companies:

Actionable Insight: Target AI Power Solutions. The demand for power delivery, grid modernization, and advanced cooling solutions dedicated to high-density AI racks will explode. Utility companies, specialized construction firms, and cooling technology vendors should pivot their strategy toward servicing these concentrated, high-value AI clients.

For Investors:

Actionable Insight: Look Beyond the GPUs. While semiconductor firms remain crucial, the next major investment opportunity might be in the "picks and shovels" of physical infrastructure: specialized data center REITs focused on AI loads, high-voltage transmission equipment, and energy storage solutions positioned near planned AI hubs.

Conclusion: Ownership Defines the Next Era of AI

Anthropic’s foray into building its own infrastructure empire is more than a financial maneuver; it is a strategic declaration of independence and a necessary step for maintaining competitive parity in the race toward advanced AI. The shift from renting compute to owning it redefines the barriers to entry, dramatically increases the industry's energy footprint, and creates new friction points with the cloud giants.

The future of AI will not solely be written in code; it will be forged in concrete, cooled by innovative engineering, and powered by massive, dedicated energy supplies. The companies that master the physics of scale—the operational excellence exemplified by those ex-Google veterans—will be the ones that ultimately dictate the speed and shape of artificial intelligence innovation for the next decade.