The $380 Billion AI Arms Race: Why Anthropic's Mega-Funding Signals the Next AI Era

The world of Artificial Intelligence has entered a phase best described as hyper-capitalization. The recent announcement that Anthropic, the research lab focused on safe AI development, secured a colossal $30 billion Series G funding round, setting its post-money valuation at an eye-watering $380 billion, is a seismic event. This figure is not just a headline grabber; it is a concrete signal of where the future value in technology resides and how aggressively players are willing to fund the race for superior intelligence.
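To make the headline figures concrete, the arithmetic behind a post-money valuation can be sketched in a few lines. This is a simplified illustration that assumes a plain priced round in which the full $30 billion is new primary capital; real rounds can include secondary sales, tranches, or other terms that change the picture. The function name `round_terms` is hypothetical.

```python
def round_terms(investment: float, post_money: float) -> tuple[float, float]:
    """Return (pre_money, new_investor_fraction) for a simple priced round.

    Assumes the whole investment is new primary capital (an assumption,
    not something the announcement spells out).
    """
    if not 0 < investment <= post_money:
        raise ValueError("investment must be positive and no larger than post_money")
    pre_money = post_money - investment   # value implied before the new cash
    stake = investment / post_money       # share held by the new money
    return pre_money, stake


pre_money, stake = round_terms(investment=30e9, post_money=380e9)
print(f"pre-money: ${pre_money / 1e9:.0f}B, new-investor stake: {stake:.1%}")
# prints: pre-money: $350B, new-investor stake: 7.9%
```

Under those assumptions, the round implies a pre-money valuation of $350 billion, with the new capital buying just under 8% of the company.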

For context, this investment dwarfs the capital required to run many successful, established tech companies. It underscores a critical reality: building truly capable, next-generation Large Language Models (LLMs) requires resources so immense that only the largest pools of global capital can participate. This article dissects what this valuation means for the industry, the immediate infrastructure demands it creates, and the strategic positioning it affords Anthropic in an increasingly competitive landscape dominated by giants like Google and Microsoft-backed OpenAI.

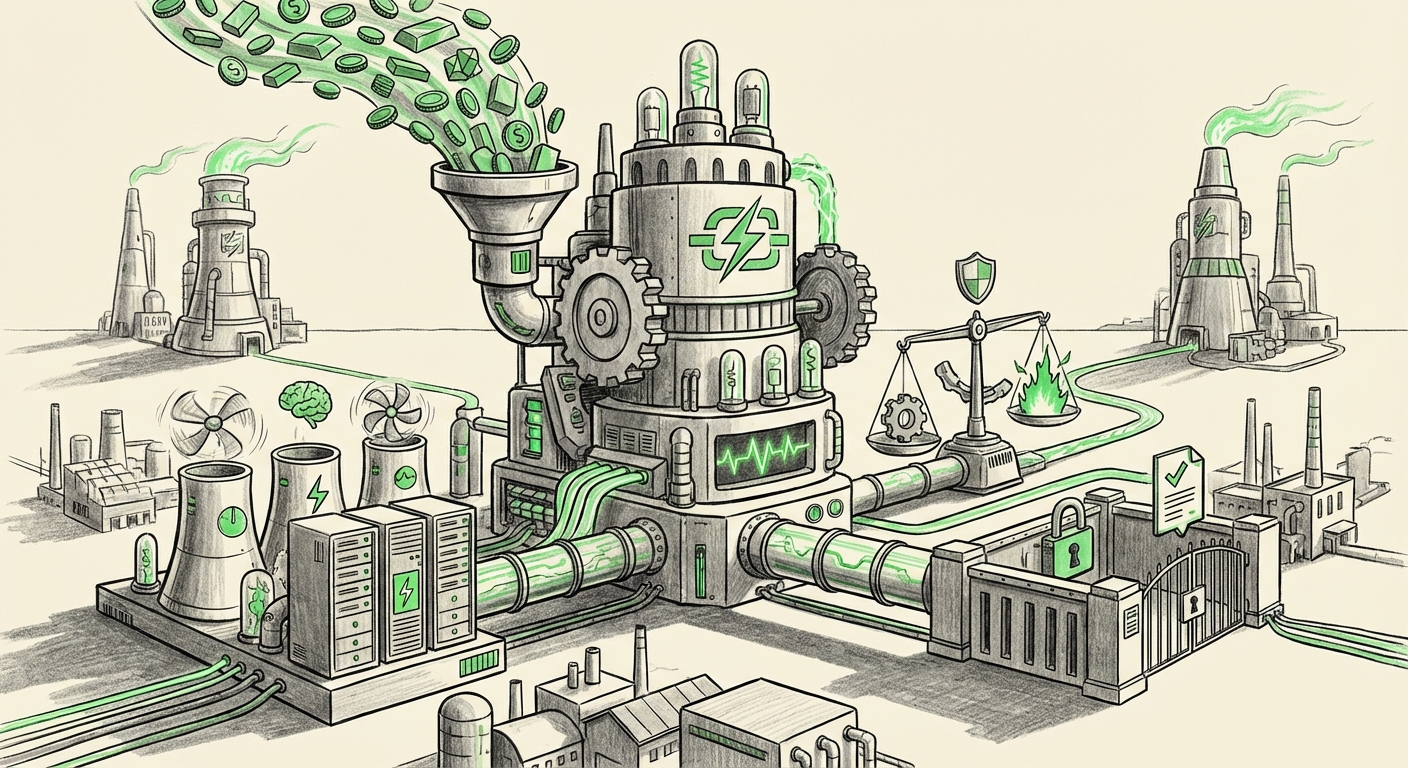

The New Math of AI Development: Compute as Currency

In the early days of the internet, growth was measured by user adoption or clicks. Today, the currency of AI dominance is compute: the raw processing power, supplied largely by Graphics Processing Units (GPUs) and similar accelerators, needed to train increasingly complex models. Anthropic’s $30 billion infusion directly translates into securing staggering amounts of this scarce resource.

To understand the scale, imagine the training run for a state-of-the-art model. It can require tens of thousands of specialized chips running for weeks or months, which makes the process astronomically expensive. The new capital helps Anthropic bypass frustrating waitlists and secure dedicated clusters, giving it a clear runway for the next generation of Claude models.
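As a back-of-envelope sketch of why such runs are expensive, rented compute cost scales roughly as chips × hours × hourly price. The function name and every input value below are hypothetical placeholders chosen to keep the arithmetic easy to follow; they are not figures from Anthropic or any hardware vendor.

```python
def training_run_cost(num_chips: int, days: float, usd_per_chip_hour: float) -> float:
    """Rough rental cost of a training run: chips x hours x hourly price.

    Ignores storage, networking, failed runs, and staff, so it is a
    lower bound on the real bill at best.
    """
    chip_hours = num_chips * days * 24
    return chip_hours * usd_per_chip_hour


# Placeholder inputs (assumptions, not reported data).
cost = training_run_cost(num_chips=10_000, days=90, usd_per_chip_hour=2.0)
print(f"${cost / 1e6:.1f}M")
# prints: $43.2M
```

Because the cost is linear in each input, doubling the cluster size or the run length doubles the bill, which is why each new model generation pushes capital requirements up so quickly.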

This concentration of capital has two significant secondary effects:

- The Nvidia Dependency: The massive deployment of capital directly impacts the GPU supply chain. Market reports indicate that companies like Anthropic are placing multi-billion-dollar orders that shape Nvidia’s revenue forecasts for years to come. This creates a bottleneck where access to hardware dictates the pace of innovation.

- The Consolidation Effect: When funding rounds become this large (well beyond the $10 billion mark), they effectively lock out smaller startups. Only companies backed by strategic partners, often major cloud providers such as Amazon and Google, both of which are investors in Anthropic, can compete at this tier. This means the future of foundational AI is rapidly consolidating into the hands of a few well-funded entities.

For the business audience, this means that frontier AI capabilities, the engine behind competitive advantage, are becoming increasingly proprietary and expensive to access, solidifying the lead of the well-funded incumbents.

Constitutional AI vs. Open Competition: The Strategic Stance

Anthropic was founded by former OpenAI researchers with a core mission: to develop AI systems that are helpful, harmless, and honest, chiefly through a methodology they term "Constitutional AI" (CAI). This involves aligning models not just through reinforcement learning from human feedback (RLHF) but also through a set of explicit, written principles: a constitution.

The $380 billion valuation validates the market's belief in the *viability* of this differentiated safety approach. Comparisons of Anthropic and OpenAI often pit OpenAI’s aggressive commercialization against Anthropic’s deliberate, safety-first scaling. The massive cash infusion suggests investors believe Anthropic can achieve parity, or even superiority, in performance while maintaining a stronger ethical framework, which is increasingly attractive to enterprise clients concerned about brand risk.

Safety as a Product Differentiator

In a world where synthetic content runs rampant, the promise of a more reliable model that is less prone to hallucination is immensely valuable. For regulated industries such as finance, healthcare, and defense, a model whose guardrails are shaped by an explicit, written constitution offers a higher degree of auditability and predictability.

The implication for the future is clear: Safety is no longer a feature; it is a required license to operate at scale. Companies that can demonstrate superior alignment might win the crucial, high-value enterprise contracts, even if their raw performance metrics are marginally behind the most cutting-edge, but riskier, open models.

Navigating the Regulatory Maze: Capital for Compliance

The era of building first and asking forgiveness later is over, particularly in Europe. The rise of comprehensive legislation like the EU AI Act forces developers of powerful general-purpose AI systems to shoulder significant responsibility.

For large language model developers, the EU AI Act makes compliance a massive, long-term operational cost. It requires dedicated legal teams, specialized safety auditing infrastructure, and extensive technical documentation of how models are built and evaluated. Anthropic’s deep war chest leaves it well positioned to absorb these costs.

This funding allows Anthropic to build regulatory resilience into its core product from the ground up. While competitors might retroactively apply safety layers or face substantial fines for non-compliance, Anthropic is effectively funding its own path to global regulatory acceptance.

What This Means for Business Adoption

Businesses looking to integrate foundational AI into core operations need partners they can trust for the long haul. This capital commitment signals stability and longevity. If a small startup’s AI partner folds or pivots due to insufficient funding, the business utilizing that AI faces massive operational disruption. Anthropic, with this level of backing, signals it will be a major player for the next decade, de-risking the partnership proposition for major corporate clients.

Practical Implications: What Should Businesses Do Now?

The escalating valuations and fierce competition demand strategic shifts from organizations attempting to leverage this technology:

- Embrace Multi-Model Strategy: Relying on a single provider, especially one valued this highly, carries platform risk. Businesses must design architectures flexible enough to utilize models from Anthropic, OpenAI, Google, and specialized open-source alternatives. This mitigates reliance on any single provider's pricing, availability, or alignment decisions.

- Invest in AI Governance Early: Do not wait for regulation to force your hand. Use the clarity provided by Anthropic’s safety focus as a template. Create internal AI ethics boards and data governance protocols immediately. This preparation will be critical when integrating these powerful, expensive models into sensitive workflows.

- Focus on Application Layer Moats: If the foundational models are becoming commodities (albeit expensive ones), the real defensible value lies in the application layer. Businesses must focus intensely on proprietary data ingestion, unique user interface design, workflow integration, and fine-tuning specific models to their proprietary knowledge bases. The model is the engine; your data and processes are the car itself.
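The multi-model recommendation above can be sketched as a thin, provider-agnostic layer with ordered fallback. This is a minimal illustration, not a production design: `Provider`, `complete_with_fallback`, and the two stub functions are hypothetical names, and the stubs stand in for real vendor SDK calls, which are deliberately not shown.

```python
from dataclasses import dataclass
from typing import Callable, Sequence

# A provider is modeled as a plain function from prompt text to completion text.
CompletionFn = Callable[[str], str]


@dataclass
class Provider:
    name: str
    complete: CompletionFn


class AllProvidersFailed(RuntimeError):
    """Raised when every configured provider raised an error."""


def complete_with_fallback(prompt: str, providers: Sequence[Provider]) -> tuple[str, str]:
    """Try providers in priority order; return (provider_name, completion)."""
    errors = []
    for provider in providers:
        try:
            return provider.name, provider.complete(prompt)
        except Exception as exc:  # any failure moves on to the next provider
            errors.append(f"{provider.name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Hypothetical stubs; in practice each would wrap one vendor's client library.
def flaky_primary(prompt: str) -> str:
    raise TimeoutError("simulated outage")


def stable_secondary(prompt: str) -> str:
    return f"[secondary] echo: {prompt}"


providers = [
    Provider("primary", flaky_primary),
    Provider("secondary", stable_secondary),
]
name, text = complete_with_fallback("Summarize Q3 risks", providers)
print(name, "->", text)
# prints: secondary -> [secondary] echo: Summarize Q3 risks
```

Keeping application code dependent only on the `CompletionFn` shape means a provider can be swapped or reordered through configuration rather than a rewrite, which is the practical meaning of reducing platform risk.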

The Road to Trillion-Dollar AI

The jump from a high valuation to the much-discussed trillion-dollar market capitalization for an AI entity seems less science fiction and more an inevitable outcome of this capital influx. The current race is less about incremental performance gains and more about securing the *right* to define the next technological platform.

The $380 billion valuation for Anthropic demonstrates that the market is pricing in massive future revenue streams derived from enterprise licensing, specialized API access, and cloud compute services built around these foundational models. It shows a collective belief that generative AI is not just another software cycle; it is a fundamental shift in how value is created and captured.

The crucial tension moving forward will be balancing speed and safety. Can Anthropic leverage this massive war chest to advance safety protocols *faster* than competitors advance raw capability, or will the sheer weight of capital push everyone toward a speed-at-all-costs mentality? This latest funding round ensures that whatever path the industry takes, Anthropic will be charting a highly funded, high-visibility course.