The AI Infrastructure Showdown: Why Anthropic Is Building a 10GW Empire and What It Means for Compute Power

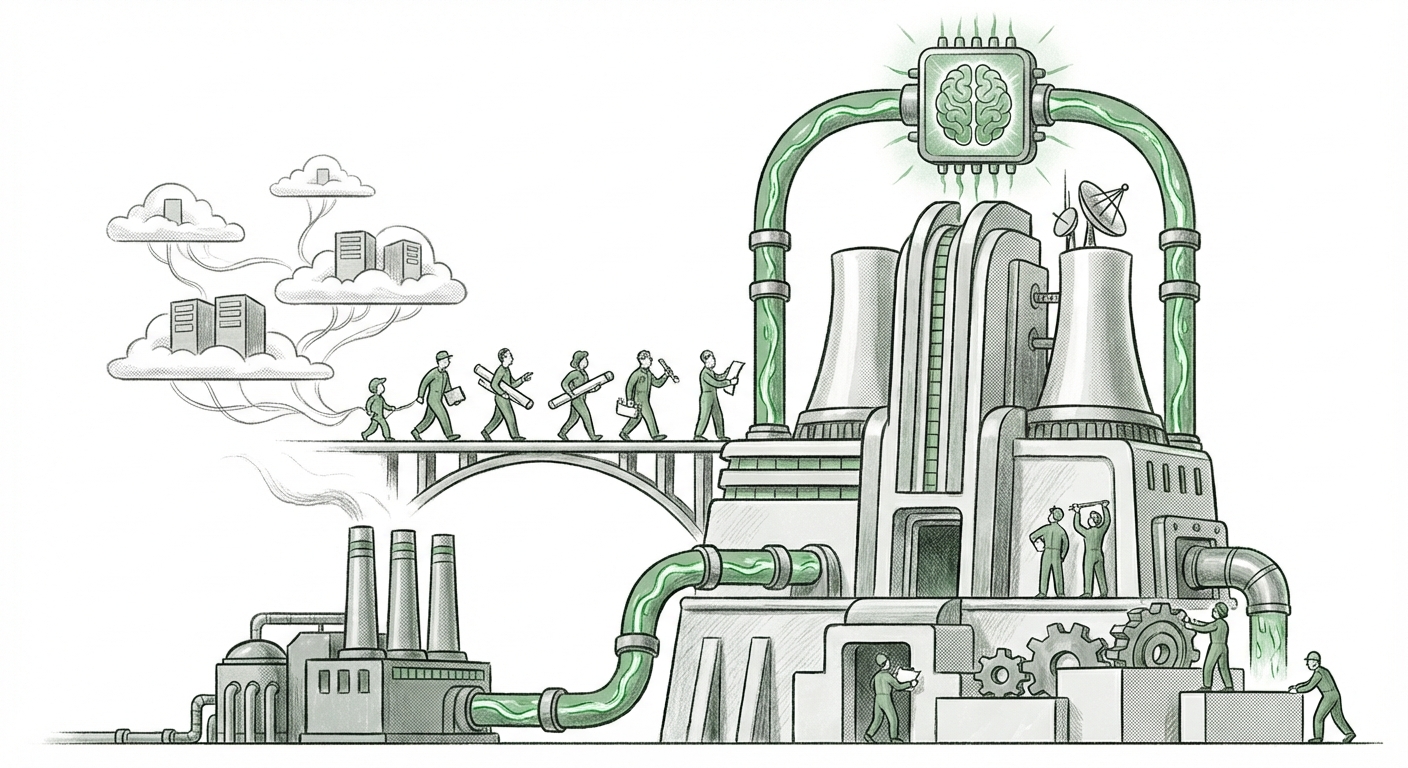

The race to build the next generation of Artificial Intelligence is no longer just about algorithms and model parameters; it has fundamentally become a battle over physical resources. The recent news that Anthropic—a leading developer of powerful Large Language Models (LLMs) like Claude—is making massive moves to construct its own colossal data center capacity, potentially reaching 10 gigawatts (GW), is not just a footnote in the tech news cycle. It is a declaration of intent that signals a seismic shift in how foundational AI is developed and deployed.

This scale of ambition—hundreds of billions of dollars—and the recruitment of top-tier infrastructure talent from established giants like Google underscore a critical realization in the AI industry: reliance on third-party cloud providers, while necessary today, is becoming a strategic vulnerability for tomorrow’s AI giants.

The Capacity Crunch: Why Cloud Leasing Isn't Enough

For years, AI startups outsourced their massive computational needs—the processing power required to train models—to the "Hyperscalers": Amazon Web Services (AWS), Microsoft Azure, and Google Cloud Platform (GCP). This was efficient: pay-as-you-go compute meant less upfront capital expenditure (CapEx) and faster time-to-market.

However, as frontier models scale toward trillions of parameters, demand for specialized hardware, particularly high-end GPUs, has outstripped supply. The global AI data center buildout now faces severe supply constraints. For a lab building frontier models, waiting for reserved compute time from a cloud provider is like waiting in a line that stretches around the block.

Anthropic’s 10 GW target suggests it is attempting to jump the line entirely. To put 10 GW into perspective: as a measure of power, it rivals the electricity demand of a small industrialized nation, or the combined output of roughly ten large nuclear reactors at about 1 GW each. This move transforms Anthropic from a purely software-focused AI lab into an integrated hardware-software entity.
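To make the scale concrete, here is a back-of-the-envelope sketch. It assumes the full capacity is drawn continuously unless a lower utilization is given, and it takes about 1 GW as the electrical output of one large nuclear reactor. Both are simplifying assumptions for illustration, not figures from Anthropic, and the function names are hypothetical.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_energy_twh(capacity_gw: float, utilization: float = 1.0) -> float:
    """Energy used in one year, in terawatt-hours (TWh).

    utilization is the assumed average fraction of capacity actually drawn.
    """
    gwh = capacity_gw * utilization * HOURS_PER_YEAR
    return gwh / 1000  # 1 TWh = 1,000 GWh


def reactor_equivalents(capacity_gw: float, reactor_gw: float = 1.0) -> float:
    """Number of reactors of the assumed size needed to supply the capacity."""
    return capacity_gw / reactor_gw


if __name__ == "__main__":
    print(f"{annual_energy_twh(10):.1f} TWh per year at full load")
    print(f"{reactor_equivalents(10):.0f} reactors of 1 GW each")
```

At full load this works out to 87.6 TWh per year. Real facilities run below nameplate capacity, so passing a lower utilization gives a more realistic figure.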

Corroborating the Trend: The Hardware Bottleneck

Broader market analysis makes the need for dedicated infrastructure clear. Industry analysts frequently report on the astronomical CapEx that every major tech player is pouring into compute. This scramble for chips and physical space is driving intense competition in which even the largest cloud vendors struggle to secure enough supply to meet demand.

For an independent lab like Anthropic, securing guaranteed, exclusive access to this volume of power and hardware keeps it from being held hostage by cloud pricing fluctuations, service disruptions, or competition from its own cloud landlords (e.g., Microsoft competing with OpenAI, or Google working on its own internal models).

The Human Factor: Talent Migrating to Build the Future

A massive infrastructure buildout requires infrastructure expertise. The fact that Anthropic is actively recruiting high-level data center veterans, specifically from Google, is telling. These individuals are the seasoned professionals who know how to design, deploy, and operate data centers at massive scale—managing everything from cooling and power distribution to network topology.

Why would someone leave the stability of a hyperscaler for an ambitious, high-risk startup endeavor?

The answer lies in the promise of *building something new* with a clear mandate. These veterans are likely attracted by the opportunity to architect a compute environment optimized specifically for Anthropic's next-generation models, free of the compromises built into general-purpose cloud architecture. They are moving from managing generalized cloud infrastructure to designing AI-native compute from the ground up. This talent shift suggests that the next competitive edge will come not just from model optimization, but from infrastructure efficiency.

Vertical Integration: Owning the Stack for Strategic Dominance

This development places Anthropic squarely in the camp of companies pursuing deep vertical integration. Vertical integration means controlling multiple stages of the production process. In AI, this stack includes:

- Hardware Design (Potentially): Designing specialized chips or server racks.

- Infrastructure: Building and owning the power and physical data centers (Anthropic’s 10 GW focus).

- Software/Model Training: Developing the core LLMs.

- Deployment/Inference: Serving the model to end-users.

When a company owns its entire stack, it gains unparalleled advantages:

- Cost Control: While initial CapEx is enormous, the marginal cost of running inference for billions of queries can fall well below cloud rates over time, provided utilization stays high enough to amortize the investment.

- Optimization: The hardware can be custom-tuned for the specific demands of Anthropic’s models, leading to faster training times and lower latency during real-time use (inference).

- Data Sovereignty and Security: Keeping sensitive training data and proprietary model architecture entirely within self-managed facilities mitigates external security risks.
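The cost-control argument can be illustrated with a simple break-even sketch. Everything here is hypothetical: the function name, the figures (in millions of dollars), and the model itself, which ignores discounting, hardware refresh cycles, and utilization risk.

```python
from typing import Optional


def breakeven_months(capex: float, owned_monthly_opex: float,
                     cloud_monthly_cost: float) -> Optional[float]:
    """Months until cumulative cloud spend matches CapEx plus owned OpEx.

    Returns None when owning never pays off, i.e. when running your own
    facility costs at least as much per month as renting.
    """
    monthly_saving = cloud_monthly_cost - owned_monthly_opex
    if monthly_saving <= 0:
        return None
    return capex / monthly_saving


if __name__ == "__main__":
    # Hypothetical figures in millions of dollars, for illustration only.
    months = breakeven_months(capex=1200.0, owned_monthly_opex=20.0,
                              cloud_monthly_cost=70.0)
    print(months)  # 24.0
```

With these made-up inputs, owning breaks even after 24 months. The result is highly sensitive to the gap between cloud pricing and owned operating cost, which is why the advantage only appears at sustained, very high volume.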

This is the playbook that companies like Meta have already aggressively pursued, designing custom silicon and dedicated AI supercomputers. Anthropic’s move suggests they view this level of infrastructure control not as an optional benefit, but as a mandatory prerequisite for remaining at the cutting edge.

The Hidden Cost: Energy, Real Estate, and Geopolitics

While controlling compute is powerful, controlling 10 GW of power brings a host of new, non-technical challenges. Building this empire is as much an energy and real estate play as it is a technology play.

Securing access to 10 GW of reliable, affordable electricity means engaging directly with utility companies, navigating complex energy regulations, and likely signing long-term Power Purchase Agreements (PPAs). This requires negotiating power on a scale typically reserved for heavy industries like aluminum smelting or semiconductor fabrication.

For governments and local communities, a 10 GW facility is a massive injection of investment, but also an enormous draw on local resources, particularly water (for cooling), and a strain on grid stability. This pulls organizations like Anthropic into the center of energy policy debates sooner than almost any enterprise software company has had to face them.

We are seeing localized impacts already, as evidenced by discussions around AI strain on regional power grids in places like Northern Virginia or Oregon. Anthropic’s decision to build signals that it is ready to commit to these long-term physical realities, effectively betting that future demand for AI models will justify the infrastructure investment.

Future Implications for the AI Ecosystem

This infrastructure arms race fundamentally changes the barriers to entry for developing truly frontier AI.

1. The Bifurcation of AI Developers

The AI landscape will likely cleave into two tiers:

- The Integrated Giants: Companies like Google, Microsoft (via OpenAI), Meta, and now Anthropic, which can afford the hundreds of billions required to own the silicon, the data center, and the model. They will train the most advanced, proprietary models.

- The Application Layer Builders: Startups and smaller firms that will rely on API access from the giants. They will compete fiercely on user experience, niche applications, and speed of deployment, but will always be constrained by the pricing and policies of the infrastructure owners.

2. Shifting Cloud Revenue Streams

While Hyperscalers still dominate today, this move signals a long-term threat to their revenue growth from top-tier LLM training clients. Cloud providers will need to pivot quickly toward optimized inference services and toward supplying the foundational components, such as networking fabric and AI-optimized chips, that these integrated players still need to purchase.

3. Democratization Through Hardware Innovation

Paradoxically, Anthropic’s move might spur innovation that benefits smaller players. When the demand for custom infrastructure becomes this visible, it creates massive opportunities for alternatives to NVIDIA. We may see a surge in demand for specialized AI accelerators (e.g., custom ASICs) from startups, designed to offer better price-performance in these purpose-built data centers and eventually lowering the cost of access for everyone else.

Actionable Insights for Business Leaders

What should technology leaders and investors take away from Anthropic’s bold infrastructure declaration?

- Assess Compute Dependency: If your business relies on cutting-edge AI, calculate how vulnerable you are to price hikes or service limitations from your current cloud provider. Look for models that can run efficiently on diverse hardware, not just the latest, most expensive specialized chips.

- Invest in Infrastructure Talent: Data center design, power management, and specialized networking talent are now high-value, strategic assets. These skills are critical for managing the next generation of AI workloads, even if you aren't building 10 GW yourself.

- Prepare for Cost Normalization: As leaders like Anthropic bring massive capacity online, the marginal cost of running AI will eventually decrease. Look for opportunities to transition high-volume use cases from high-cost API calls to self-managed, optimized inference environments when the technology matures.

- Watch the Power Maps: Infrastructure location matters. Companies securing long-term, sustainable power agreements in favorable regulatory environments will have a distinct cost advantage for the next decade.
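The compute-dependency check in the first point can start as a one-line calculation. The sketch below is a minimal illustration with a hypothetical function name and made-up figures: it estimates the extra annual cost of a provider price increase and expresses it as a share of revenue.

```python
from typing import Tuple


def price_hike_exposure(annual_ai_spend: float, annual_revenue: float,
                        hike_pct: float) -> Tuple[float, float]:
    """Return (extra annual cost, extra cost as a fraction of revenue)."""
    if annual_revenue <= 0:
        raise ValueError("annual_revenue must be positive")
    extra_cost = annual_ai_spend * hike_pct / 100
    return extra_cost, extra_cost / annual_revenue


if __name__ == "__main__":
    # Hypothetical company: $2M yearly AI spend, $40M revenue, 25% price hike.
    extra, share = price_hike_exposure(2_000_000, 40_000_000, 25)
    print(f"Extra cost: ${extra:,.0f} ({share:.2%} of revenue)")
```

Running the same calculation across a few hike scenarios gives a rough sense of whether diversifying providers or hardware targets is worth the engineering effort.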

Anthropic isn’t just hiring veterans to build a bigger server farm; they are securing their competitive future. By controlling the energy, the physical plant, and the human capital required to run it, they are transitioning from being a tenant in the digital real estate owned by the Hyperscalers to becoming a peer landowner in the emerging map of global AI power.

The age of AI infrastructure supremacy has officially begun.