The Great Leap: How Bird Training Unlocked Whale Detection in AI Generalization

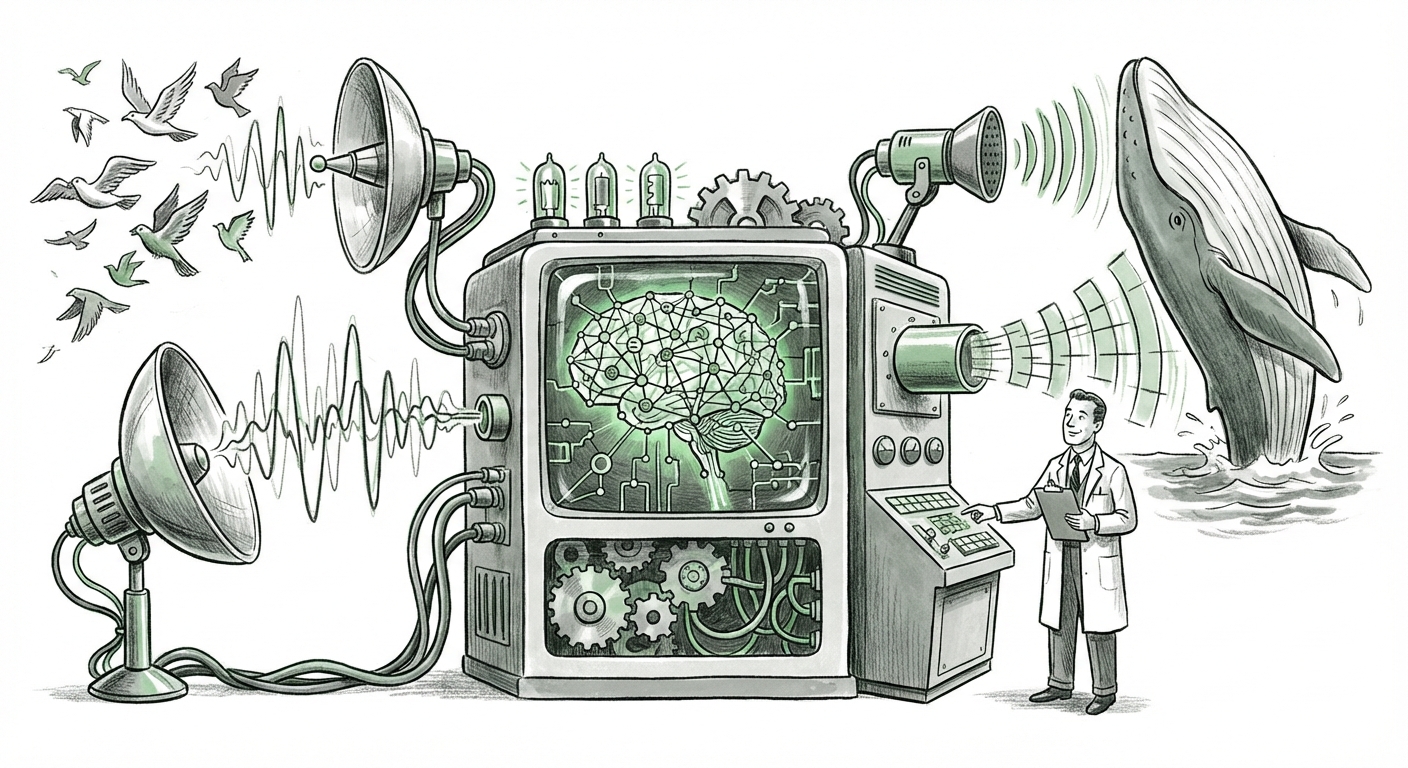

We stand at a fascinating inflection point in artificial intelligence. For years, the focus was on building specialized tools: an AI to read X-rays, an AI to play chess, or an AI to identify one specific dog breed. These systems were brittle; they worked well on their assigned, narrow task but failed spectacularly outside those boundaries. Now, thanks to major advances in how we train these models, that era is ending. The recent news that a general-purpose bioacoustic model, trained mostly on **bird calls**, successfully detected **whale sounds** with state-of-the-art accuracy is not just a neat ecological story; it is a strong signal about the future architecture of AI.

As an AI technology analyst, I see this demonstration, spearheaded by Google DeepMind, as proof of concept for the next generation of technology: Foundation Models capable of broad, adaptable intelligence. This is the moment AI stopped learning vocabulary and started learning grammar. This article dives into the technological pillars supporting this breakthrough and analyzes what this cross-domain generalization means for business, science, and the technological landscape ahead.

The Shift: From Specific Tasks to Universal Understanding

Imagine teaching a child to identify every single type of bird in the forest. You show them pictures, play recordings, and label them: Robin, Sparrow, Blue Jay. If you then ask that child to identify a humpback whale call, they would likely fail unless you specifically trained them on whale sounds. Traditional AI worked the same way.

The DeepMind model largely skipped the "specific training" step. It learned the *structure* of sound so deeply while studying birds that it could recognize fundamentally similar patterns in the vastly different medium of underwater whale communication. This is the triumph of generalization and transfer learning.

Contextualizing the Breakthrough: The Pillars of Power

To fully grasp the significance, we must look at the underlying technological trends that made this cross-domain leap possible. These trends are not isolated; they reinforce each other to create models that are powerful, adaptable, and less data-hungry for new tasks.

1. Foundation Models: The Universal Engine

The bird-whale example is a perfect, real-world illustration of the power inherent in **Foundation Models**. These are massive AI systems (like large language models, LLMs) trained on colossal, broad datasets. Instead of training from scratch for every application, you start with this giant, pre-trained model. Its internal structures have already learned complex patterns of the world—be it human language, visual geometry, or, in this case, acoustic physics.

The ability of this bioacoustic model to jump domains supports the hypothesis driving current research: the richer the initial training, the better the model transfers knowledge. When you build an AI system today, the question is shifting from "What data do I need for this task?" to "Which foundation model can I adapt to this task?"

- For Strategists: This means investing in generalized AI platforms yields better returns than investing in dozens of siloed, narrow AIs. Adaptability is the new moat.

- For Researchers: This is consistent with a pattern seen in LLMs: training at scale on massive, diverse data can produce capabilities that transfer to domains the model was never explicitly trained on.

2. Self-Supervised Learning (SSL): Learning Without Labels

How was this model trained so effectively on birds without needing every single bird call perfectly labeled? The secret often lies in **Self-Supervised Learning (SSL)**. Think of SSL as training the AI to play "fill in the blank" with its own data. For an audio model, this might involve masking out a few milliseconds of a sound clip and asking the model to predict what was missing, or asking it to determine if two different segments of audio came from the same recording.

This process forces the AI to learn the fundamental building blocks of sound—pitch contours, rhythm, spectral density—rather than just memorizing that "Chirp A = Robin." Because the model learns these underlying acoustic features from vast amounts of *unlabeled* environmental recordings, it builds a robust internal representation of *sound itself*. This representation, once learned, is highly portable, explaining why the patterns necessary to identify a complex bird song are transferable enough to spot a whale click or moan.
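The two pretext tasks described above can be sketched in a few lines. This is a minimal illustration of how training examples might be built from unlabeled audio, not the method used by the DeepMind model; the function names `make_masked_example` and `make_same_recording_pair` are hypothetical, and the "recordings" are plain lists of numbers standing in for waveforms.

```python
import random


def make_masked_example(samples, mask_len, rng):
    """Hide one contiguous span of a clip.

    Returns (masked_input, start, target): the model would see
    masked_input and be trained to predict target.
    """
    if not 0 < mask_len < len(samples):
        raise ValueError("mask_len must be between 1 and len(samples) - 1")
    start = rng.randrange(len(samples) - mask_len + 1)
    target = list(samples[start:start + mask_len])
    masked = list(samples)
    masked[start:start + mask_len] = [0.0] * mask_len  # zero out the hidden span
    return masked, start, target


def make_same_recording_pair(recordings, seg_len, rng):
    """Build one example for the 'same recording or not?' task.

    Returns (segment_a, segment_b, label), where label is 1 if both
    segments were cropped from the same recording and 0 otherwise.
    """
    if len(recordings) < 2:
        raise ValueError("need at least two recordings")
    same = rng.random() < 0.5
    if same:
        i = j = rng.randrange(len(recordings))
    else:
        i, j = rng.sample(range(len(recordings)), 2)

    def crop(rec):
        s = rng.randrange(len(rec) - seg_len + 1)
        return rec[s:s + seg_len]

    return crop(recordings[i]), crop(recordings[j]), int(same)


# Toy stand-ins for two unlabeled recordings (assumed data, for illustration only).
rng = random.Random(0)
recordings = [[0.1 * i for i in range(100)], [-0.1 * i for i in range(100)]]
masked, start, target = make_masked_example(recordings[0], mask_len=8, rng=rng)
seg_a, seg_b, same = make_same_recording_pair(recordings, seg_len=16, rng=rng)
```

Note that neither function needs a species label: the supervision signal comes entirely from the audio itself, which is what lets this kind of training scale to large unlabeled archives.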

3. Zero-Shot and Few-Shot Power

The ultimate test of generalization is how well a model performs on a task for which it has seen little or no direct training data. With no labeled examples of the new task, this is called zero-shot learning; with only a handful, it is called few-shot learning.

In the DeepMind case, the model likely required very few whale recordings, perhaps none, to achieve high performance. It didn't need a million labeled whale recordings; it mostly needed the general auditory structure gleaned from the birds. This is a massive technological leap for fields suffering from data scarcity, like rare species monitoring.
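One common way to do few-shot classification on top of a frozen pre-trained model is a nearest-centroid (prototype) classifier over its embeddings. The sketch below assumes that approach; it is not taken from the DeepMind work, and the three-dimensional vectors are made-up stand-ins for the embeddings a real audio model would produce.

```python
import math


def centroid(vectors):
    """Element-wise mean of a list of equal-length vectors."""
    n = len(vectors)
    return [sum(v[d] for v in vectors) / n for d in range(len(vectors[0]))]


def cosine(a, b):
    """Cosine similarity between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def fit_prototypes(support):
    """support maps each label to a handful of example embeddings."""
    return {label: centroid(vecs) for label, vecs in support.items()}


def classify(embedding, prototypes):
    """Return the label whose prototype is most similar to the embedding."""
    return max(prototypes, key=lambda label: cosine(embedding, prototypes[label]))


# Two labeled examples per class: the "few shots" (hypothetical embeddings).
support = {
    "whale": [[0.9, 0.1, 0.0], [0.8, 0.2, 0.1]],
    "background": [[0.1, 0.9, 0.2], [0.0, 0.8, 0.3]],
}
prototypes = fit_prototypes(support)
prediction = classify([0.85, 0.15, 0.05], prototypes)  # "whale"
```

The pre-trained model itself is never updated here. All of the new-task learning is the averaging step in `fit_prototypes`, which is why a few examples can be enough when the embeddings are good.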

Practical Implications: The Future of Cross-Domain AI

This ability to generalize across domains has profound implications that extend far beyond tracking marine mammals. It suggests that many "hard" problems requiring massive amounts of specialized data may become significantly easier simply by leveraging our existing, large, general-purpose models.

The Biological Connection: Shared Acoustic Principles

The initial article hints at evolutionary biology, and this is where the technical meets the fundamental. Why did it work? Because complex communication, whether in air or water, obeys similar physical rules. Bird songs and whale songs are both incredibly rich in temporal complexity (how sounds change over time) and spectral complexity (the mixture of frequencies).

The AI is not just classifying birds; it is mastering the physics of complex modulation. This suggests that if we train models broadly enough on human speech, music, machinery noise, and natural environments, they could potentially be adapted to new, entirely alien acoustic environments with minimal adjustment.
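To make "temporal" and "spectral" complexity concrete, the sketch below tracks the dominant frequency of a signal frame by frame, which is roughly what one row-wise peak of a spectrogram would show. It uses a naive discrete Fourier transform from the standard library for clarity rather than speed; the function names and the synthetic two-tone signal are assumptions made for illustration.

```python
import cmath
import math


def frame_spectrum(frame):
    """Magnitudes of DFT bins 0..N/2 for one frame (naive O(N^2) DFT)."""
    n = len(frame)
    return [
        abs(sum(frame[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n)))
        for k in range(n // 2 + 1)
    ]


def peak_frequency_track(samples, sample_rate, frame_len, hop):
    """Dominant frequency in Hz for each successive frame of the signal."""
    track = []
    for start in range(0, len(samples) - frame_len + 1, hop):
        mags = frame_spectrum(samples[start:start + frame_len])
        k = max(range(len(mags)), key=mags.__getitem__)  # index of the loudest bin
        track.append(k * sample_rate / frame_len)
    return track


# Synthetic "call": 256 samples at 500 Hz followed by 256 samples at 1500 Hz.
sample_rate = 8000
signal = [
    math.sin(2 * math.pi * (500 if n < 256 else 1500) * n / sample_rate)
    for n in range(512)
]
track = peak_frequency_track(signal, sample_rate, frame_len=64, hop=64)
# The track holds 500 Hz for the first four frames, then jumps to 1500 Hz.
```

Each frame's spectrum captures the mixture of frequencies at one moment (spectral structure), and the sequence of frames captures how that mixture changes (temporal structure). Both a bird song and a whale call can be described in these terms, only at different frequency ranges and time scales.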

Revolutionizing Scientific Monitoring

The most immediate impact is in environmental science and ecology. Monitoring biodiversity is incredibly expensive and time-consuming. Deploying hydrophones to listen for endangered whales or setting up sensor arrays in remote rainforests generates oceans of raw audio data. Manual classification is impossible at scale.

- Cost Reduction: Conservation groups may no longer need to spend years labeling data for every new species discovered in a region. A model trained on known local fauna can often be adapted to flag unfamiliar species with surprising accuracy from only a few labeled examples.

- Scalability: We can deploy comprehensive acoustic monitoring globally, catching rare events (like the presence of an endangered whale population) far faster than traditional methods allow.

This democratization of analysis capability means that the bottleneck shifts from *collecting and labeling data* to *asking the right questions* of the pre-trained models.

The Business Angle: Faster, Cheaper Industrial Adaptation

This concept of generalization isn't just for the ocean or the air; it is the future of industrial AI. Consider predictive maintenance in manufacturing. Currently, you train an AI specifically on the sound signature of a failing bearing in Machine A.

With generalized acoustic foundation models, an AI trained on recordings of thousands of different industrial pumps, motors, and ventilation systems (the "birds") can be quickly adapted to diagnose a novel failure mode in a brand-new piece of equipment (the "whale") with only a handful of new sound samples.

This dramatically shortens deployment cycles. Instead of spending six months building and tuning a custom model, you might reach performance close to that of a custom model in a week, significantly reducing the time-to-value for automation and operational technology (OT) departments.

Navigating the Next Steps: Actionable Insights for Leaders

The DeepMind experiment serves as a clear waypoint. The path forward involves embracing large, general models rather than pursuing endless specialization. Leaders must position their organizations to harness this emergent adaptability.

Insight 1: Prioritize Data Breadth Over Depth (Initially)

For teams embarking on new AI projects, the initial investment should focus on creating the *broadest possible* dataset related to the domain, even if it is loosely labeled. A large, general audio dataset from a factory floor is likely more valuable long-term than a small, perfectly labeled dataset of just one failure mode. The broad data trains the foundation; the narrow data provides the final polish.

Insight 2: Invest in Cross-Modal Talent

The biggest constraint moving forward will not be processing power, but talent that understands how to bridge domains. We need engineers fluent in both the mechanics of signal processing (acoustic physics) and the architecture of large models (such as transformers). This interdisciplinary expertise is crucial for successfully fine-tuning generalized models for niche, high-value tasks.

Insight 3: Prepare for "Black Box" Auditing

As models become more generalized, they often exhibit behaviors that are less intuitive to trace back to specific training examples. If the bird-trained model spots a whale due to subtle frequency shifts learned from a rare tropical insect sound, explaining that precise causal link becomes difficult. Businesses utilizing these systems for critical decisions (e.g., monitoring pipeline integrity via sound) must invest heavily in **Explainable AI (XAI)** techniques tailored to these complex feature representations to ensure reliability and meet regulatory scrutiny.

Conclusion: The Dawn of Fluent AI

The detection of whales by a bird-trained AI model is more than just an exciting headline; it is evidence that Artificial Intelligence is beginning to develop *fluency*. It’s moving from rote memorization to genuine pattern recognition that transcends superficial differences. Just as humans use fundamental principles of physics, emotion, or language structure to navigate new situations, our advanced AI systems are beginning to build those same foundational cognitive tools.

This generalization capability—fueled by massive scale and Self-Supervised Learning—promises a future where AI systems adapt faster, cost less to deploy in new environments, and unlock scientific and industrial capabilities previously thought impossible due to data limitations. The message is clear: the future belongs to those who build and master the generalized foundation, for they will be the ones capable of speaking the language of every new challenge the world throws at them, whether it chirps or sings deep beneath the waves.