The 18-Month Reckoning: Analyzing Microsoft AI CEO's Bold Prediction on White-Collar Automation

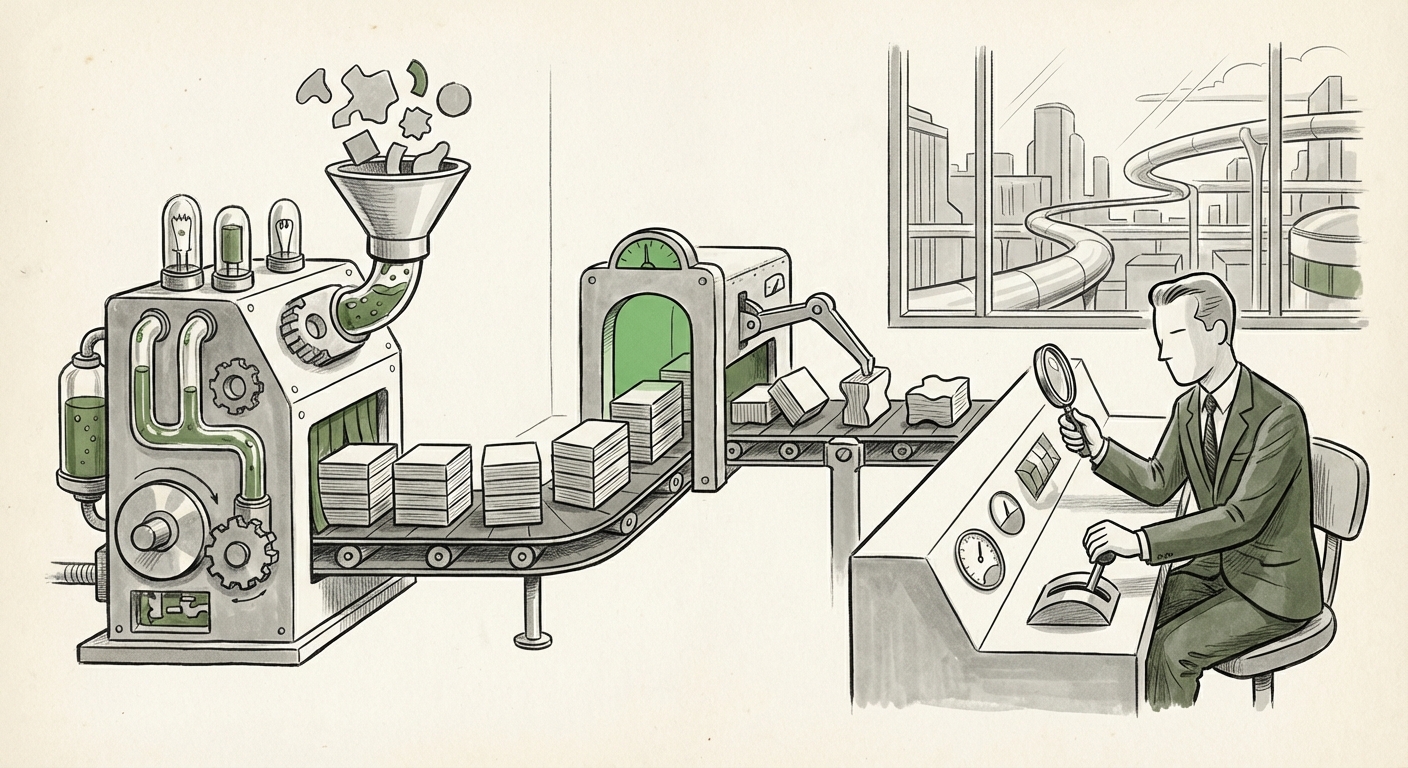

The pace of technological change has always been hard to predict, but when leaders of the companies building the future issue stark warnings, the market listens. Microsoft AI CEO Mustafa Suleyman recently dropped a bombshell, suggesting that the vast majority of traditional white-collar tasks could be automated within the next 18 months.

This is not merely an incremental upgrade; it suggests an *inflection point*—a moment where the capabilities of Generative AI leapfrog from being helpful assistants to near-autonomous executors of knowledge work. For business strategists, tech developers, and everyday professionals, this claim demands rigorous analysis. Is this fear-mongering, visionary foresight, or a realistic roadmap for technological adoption? To answer this, we must move beyond the headline and examine the underlying technological basis, the inevitable organizational friction, and the societal shifts required to meet this aggressive timeline.

The Technological Basis: Why 18 Months Seems Possible

Suleyman’s prediction is rooted firmly in the accelerating performance of modern AI models. A year ago, AI was great at summarizing emails. Today, advanced models are tackling multi-step reasoning, complex coding, and creative synthesis. The core question we address is: What technological leaps make this 18-month window thinkable?

1. The Rise of Integrated Enterprise AI

The biggest change isn't just the raw intelligence of the models, but their integration into workflow infrastructure. Suleyman is speaking from the vantage point of Microsoft, a company embedding AI deep within tools that a very large number of people use daily (like Microsoft 365, formerly Office 365, as well as Dynamics and Azure). This "in-workflow" deployment removes the friction of having to switch to a separate tool.

Recent studies of generative AI's impact on knowledge-worker productivity frequently cite gains of 30% to 50% in specific coding and writing tasks. When an AI can draft a complete first pass of a legal brief, a market analysis, or a software module in minutes (work that traditionally took a junior associate hours), these small gains add up to substantial time savings across an organization. Scaled across the hundreds of tasks a white-collar worker performs daily, that productivity gain makes the "automation of tasks" premise plausible.
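To make the aggregation argument concrete, here is a minimal back-of-the-envelope sketch. The task mix, the hours, and the per-task savings are hypothetical numbers chosen for illustration (the savings sit in the 30% to 50% range cited above); they are not measurements from any study.

```python
# Hypothetical weekly task mix for one knowledge worker.
# Each entry: (task name, hours per week, fraction of that time saved with AI).
# All figures are illustrative assumptions, not measured data.
TASKS = [
    ("first-draft writing", 8.0, 0.40),
    ("code or spreadsheet work", 6.0, 0.30),
    ("meetings and negotiation", 10.0, 0.00),  # assumed not automatable
]


def weekly_hours_saved(tasks):
    """Sum the hours saved per week across all tasks for one worker."""
    return sum(hours * saving for _, hours, saving in tasks)


def org_hours_saved(tasks, headcount):
    """Scale one worker's weekly saving to a team, assuming an identical task mix."""
    return weekly_hours_saved(tasks) * headcount


if __name__ == "__main__":
    per_worker = weekly_hours_saved(TASKS)
    print(f"Per worker: {per_worker:.1f} hours/week")
    print(f"Team of 200: {org_hours_saved(TASKS, 200):.0f} hours/week")
```

Under these assumptions, a few hours saved per person turns into a large weekly total once multiplied by headcount, which is the mechanism the paragraph above describes. Real task mixes vary widely, so the output is only as good as the inputs.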

2. Advancements in Multimodality and Reasoning

White-collar work is rarely just text in, text out. It involves looking at charts, understanding diagrams, cross-referencing codebases, and interpreting stakeholder tone. The current state of multimodal AI shows that models are becoming proficient at interpreting mixed data types simultaneously. When an AI can read a spreadsheet, understand the accompanying technical drawing, and write the descriptive report based on both, it crosses the threshold from simple automation to complex task execution.

For technical audiences, this means AI agents are developing better "memory" and "planning," allowing them to sustain complex projects—the hallmark of many professional roles—without constant human intervention.

The Friction Points: Why Reality Might Be Slower Than the Prediction

While the technological ceiling might allow for 80% task automation, the organizational floor usually prevents immediate implementation. This is where expert skepticism about the 18-month automation timeline becomes critical. The timeline is likely aggressive because it discounts human factors.

1. The Bottleneck of Trust and Verification

In high-stakes fields—law, medicine, finance, engineering—automation cannot simply be deployed; it must be *trusted*. A programmer might accept an AI-written code snippet if it passes unit tests, but a financial planner cannot approve a complex derivative strategy without rigorous human validation. The process of verifying AI output, often called "AI auditing" or "human-in-the-loop verification," ironically consumes a significant portion of the time saved by the AI itself.

For the next 18 months, this oversight function will remain firmly human. This shifts the job from "doer" to "validator." While tasks are automated, the *responsibility* for the final product remains with the human, creating a complex hybrid environment.
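The verification trade-off can be sketched with simple arithmetic. The function below is an illustrative model, not an established formula: it assumes review time can be expressed as a fraction of the original manual effort, and the parameter names and sample values are hypothetical.

```python
def net_hours_saved(baseline_hours, ai_saving, review_fraction):
    """Estimate the net time saved on a task once human review is counted.

    baseline_hours:  hours the task took when done manually
    ai_saving:       fraction of baseline time the AI removes (0 to 1)
    review_fraction: human verification time as a fraction of baseline (assumed)
    """
    gross_saved = baseline_hours * ai_saving
    review_cost = baseline_hours * review_fraction
    return gross_saved - review_cost


if __name__ == "__main__":
    # Hypothetical 10-hour task: AI removes half the work, review adds back 20%.
    print(net_hours_saved(10, 0.5, 0.2))
    # If review is as costly as the saving, the net benefit disappears.
    print(net_hours_saved(10, 0.5, 0.5))
```

In high-stakes fields the review fraction is likely to be large, which is why the net gain can be much smaller than the headline productivity figure suggests.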

2. Regulatory Compliance and Liability

Deploying AI at scale requires navigating a patchwork of global regulations concerning data privacy, algorithmic bias, and professional conduct. An AI performing tasks that could lead to legal or financial liability requires stringent governance that simply doesn't exist uniformly today. Industry analyses often highlight that deployment speed, not capability, is the bottleneck. Building the necessary guardrails and receiving sign-off from legal and compliance departments often takes far longer than the time needed to train the underlying model.

3. Organizational Inertia and Cultural Resistance

Companies do not overhaul their processes overnight. Implementing new AI systems requires infrastructure upgrades, data sanitization, and, most importantly, overcoming employee resistance. If workers fear job loss, they are less likely to champion the adoption of the very tools threatening their roles. This cultural adoption curve ensures that while the technology might be ready in 18 months, the enterprise might only be 50% ready.

The Economic Earthquake: Implications for Business and Society

If we accept Suleyman’s premise as a *target* rather than a guaranteed outcome, the implications for capital allocation, education, and economic structure are profound.

1. The Shift from Task Automation to Job Transformation

The conversation must pivot from job elimination to task recombination. An accountant whose primary task was reconciliation may indeed see that task vanish. However, their new, elevated role might involve interpreting the strategic insights the AI generates from that reconciled data, focusing on advisory services instead of data entry. This is the core theme emerging from economic and labor-market forecasts of generative AI adoption.

The value proposition for white-collar workers will rapidly shift from *execution* to *prompting, strategy, and ethical oversight*. The successful professional of 2026 will be defined by their ability to manage an AI co-pilot effectively.

2. The Rising Premium on "Human-Centric" Skills

As routine cognitive work becomes commoditized by AI, skills that remain uniquely human will command a premium. These include:

- Complex Negotiation and Diplomacy: Negotiating mergers, handling interdepartmental conflict, or building client relationships.

- Unstructured Problem Solving: Tackling entirely novel problems where no training data exists (i.e., true innovation).

- Emotional Intelligence and Empathy: Roles requiring genuine human connection, such as high-level leadership or patient care coordination.

3. Urgent Need for Workforce Reskilling

This rapid change places an enormous burden on educational institutions and corporate training departments. Training priorities need to align quickly with research on the skills gap and workforce retraining that follow generative AI adoption. Companies cannot wait for the external education system to catch up; they must proactively develop internal academies focused not just on how to use new software, but on how to think critically *through* the AI.

For workers, complacency is the single greatest risk. The required transition demands continuous learning, moving from domain-specific mastery to meta-skills in AI interaction and prompt engineering. If the 18-month window is accurate, the time to begin retraining is not next year; it is now.

Actionable Insights: Navigating the Next 18 Months

For organizations and individuals hoping to thrive—not just survive—in this impending acceleration, the strategy must be proactive and dual-focused: optimize for today’s capabilities while preparing for tomorrow’s integration.

For Business Leaders and Executives:

- Identify "Task Clusters," Not "Job Titles": Stop analyzing which *jobs* will be eliminated. Instead, map out daily tasks within every department. Which 30% are purely data manipulation or first-draft generation? These are your immediate automation targets for pilot programs.

- Invest in AI Governance Early: Establish internal policies for data security, accuracy verification, and responsible use *before* wide-scale deployment. Leaving governance until compliance teams raise objections risks stalling your adoption past the 18-month window.

- Incentivize AI Fluency: Tie performance reviews and bonuses to the demonstrable effective use of new AI tools. If adopting AI saves an employee 10 hours a week, ensure that time is immediately redirected to high-value, human-centric work, not simply left idle.

For White-Collar Professionals:

- Master the AI Interface: Become an expert prompt engineer for your specific domain. Learn the nuances of telling advanced LLMs exactly what you need in terms of structure, tone, and constraints.

- Focus on the "Last Mile": Understand that AI handles the first 80% (drafting, summarizing, analyzing data). Your irreplaceable value lies in the final 20%—the judgment call, the stakeholder negotiation, and the ethical stamp of approval.

- Cross-Train in Adjacent Fields: If you are a technical writer, learn basic data visualization. If you are a financial analyst, study communication strategy. Broaden your portfolio beyond the core tasks now most susceptible to automation.

Conclusion: Transformation, Not Annihilation

Mustafa Suleyman’s 18-month prediction acts as a powerful stress test for the entire white-collar economy. Whether the timeline is met exactly is less important than the undeniable fact that the *direction* of travel is fixed. The tools are not just becoming better; they are becoming cheaper, faster, and more deeply integrated into the operating systems of business.

The future of white-collar work is not one of mass redundancy, but of radical redefinition. The tasks that define traditional roles are indeed fading, making way for roles focused on governance, strategy, creativity, and complex human interaction. The next year and a half will be defined by which organizations and individuals embrace this transformation with urgency, treating AI not as a threat to be managed, but as the most significant lever for productivity and role evolution in a generation.