The Price War Begins: How MiniMax M2.5 Is Shattering Western AI Dominance with "Too Cheap to Meter" Intelligence

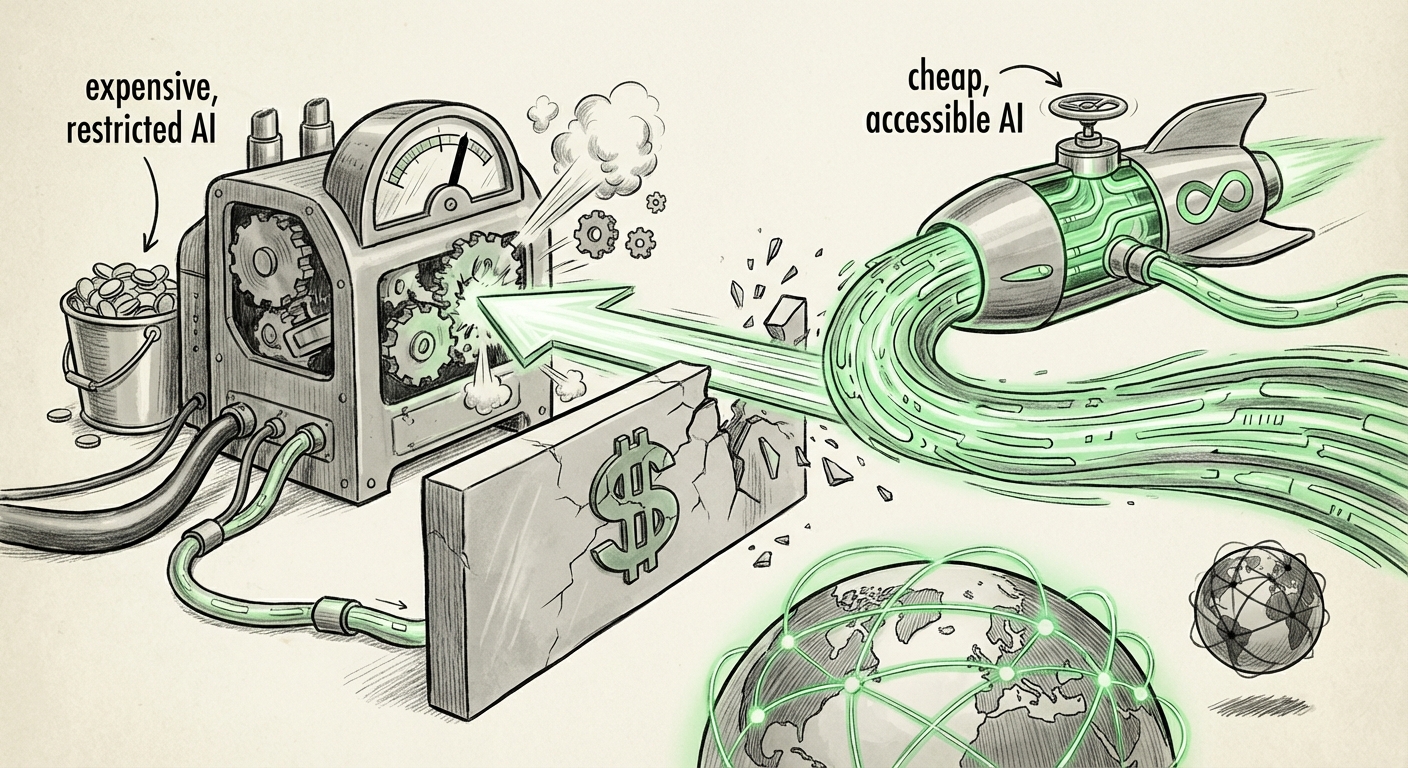

The artificial intelligence landscape, long dominated by a handful of well-funded Western labs, just experienced a seismic shift. Shanghai-based MiniMax has released its new foundation model, M2.5, not just as a competitor, but as a potential market disruptor. Released under the highly permissive MIT license and carrying the provocative claim of making intelligence "too cheap to meter," M2.5 is more than just another LLM; it is a strategic maneuver challenging the established economic structure of generative AI.

As an AI technology analyst, I see this as the opening salvo in a new era of hyper-efficient, globally distributed AI development. To truly understand the implications, we must examine the model's context—its performance, its licensing, and the geopolitical currents driving this price aggression.

The Core Challenge: Performance Meets Affordability

For years, the industry operated on a tiered structure: proprietary, closed-source models offered peak performance at a premium price, while open-source models offered accessibility in exchange for a performance gap. MiniMax M2.5 appears designed to collapse this dichotomy. The goal isn't just to be good; it's to be *good enough* for the vast majority of enterprise tasks while being so inexpensive to run that usage volume skyrockets.

This concept—"too cheap to meter"—is the ultimate scaling strategy. If the cost of running inference drops precipitously, companies can afford to use AI for tasks previously deemed too expensive, such as summarizing every internal document, running highly granular, real-time sentiment analysis on customer service calls, or deploying localized, specialized AI assistants for every mid-level manager.

Corroborating Context: The Open Model Squeeze

This move doesn't exist in a vacuum. Closed-source API providers already face intense pricing competition. As Meta (with Llama) and Mistral AI release increasingly capable open alternatives, the pricing leverage of closed API providers weakens. MiniMax is taking this pressure to its logical extreme by releasing a high-quality model whose weights cost nothing to license, leaving compute as the main deployment expense.

For AI engineers and data scientists, the crucial question is performance. Is M2.5 just cheap, or is it truly competitive? Initial technical evaluations comparing it against the latest open models, such as Llama 3 or Mistral's releases, will determine whether it can handle complex reasoning, coding, and multilingual tasks efficiently. If it scores even 90% as well as a top proprietary model but at 10% of the deployment cost, the economic decision for businesses becomes obvious.

The Power of Permissiveness: Why the MIT License Matters

The choice of the MIT License is almost as significant as the model weights themselves. Unlike more restrictive licenses that might only allow non-commercial use or require disclosure of modifications, the MIT license is exceptionally permissive. Its only real condition is that the copyright and license notice travel with copies of the work; beyond that, it essentially says: "Do what you want with this, just don't sue us."

This choice directly targets the apprehension felt by CTOs and legal teams regarding AI governance and data sovereignty. For enterprises looking to adopt cutting-edge AI without being locked into a single vendor’s pricing structure or subjecting sensitive internal data to third-party API scrutiny, an MIT-licensed model offers supreme flexibility. They can fine-tune it on their proprietary data, host it entirely on their own secure infrastructure, and modify it for niche needs without licensing hurdles.

This is crucial for future enterprise AI adoption. If M2.5 comes to be seen as stable and trustworthy, a judgment that depends in part on how the broader Chinese AI ecosystem matures, its open licensing transforms it from a risky alternative into a foundational utility.

Geopolitical Implications: A New Front in Tech Competition

The rise of powerful, aggressively priced, and openly distributed models from Chinese labs injects a new dynamic into the existing AI rivalry between the US and China. This isn't simply about who has the biggest model; it's about who can deploy the most *efficient* and *widespread* intelligence infrastructure globally.

Reports on China's open-source LLM strategy suggest a concerted effort to democratize access to cutting-edge technology domestically and increase technological influence abroad. By offering high-quality models cheaply and openly, MiniMax is positioning itself as a global infrastructure provider, potentially bypassing traditional trade friction associated with closed, state-of-the-art systems.

For Policy Analysts, this signals a pivot. The battleground shifts from foundational research supremacy to implementation efficiency and ecosystem control. If the world begins building its specialized AI applications on a highly capable, openly licensed Chinese foundation, it fundamentally changes the leverage held by US-based AI leaders.

The Mechanics of Cheap Inference: Efficiency is the New Scaling Law

How does one achieve "too cheap to meter"? The answer lies deeply in infrastructure and optimization. For businesses, running LLMs is costly because of the vast memory and computational power (GPUs) required for inference—that is, making the model *think* and generate an answer.

The key is the falling cost of LLM inference. Advances in techniques like 4-bit or 2-bit quantization, speculative decoding, and highly optimized kernels mean that a powerful model can now run efficiently on less expensive hardware. If M2.5 is built to take advantage of these techniques, its operational cost plummets, directly enabling the low-cost promise.
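To make the quantization point concrete, here is a rough back-of-the-envelope sketch of how weight precision affects memory footprint. The parameter count is a hypothetical round number chosen for illustration, not a published figure for M2.5, and the estimate covers weights only, ignoring the KV cache, activations, and runtime overhead.

```python
def weight_memory_gb(num_params: float, bits_per_weight: int) -> float:
    """Approximate memory needed to hold model weights, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


# Hypothetical parameter count, used only for illustration.
HYPOTHETICAL_PARAMS = 100e9

for bits in (16, 4, 2):
    size = weight_memory_gb(HYPOTHETICAL_PARAMS, bits)
    print(f"{bits:>2}-bit weights: {size:.0f} GB")
```

Under these assumptions, moving from 16-bit to 4-bit weights cuts the footprint to a quarter, which is the difference between needing several high-end accelerators and needing one or two. Quantization usually costs some accuracy, so the savings have to be weighed against measured quality on your own tasks.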

Imagine a company that currently pays $10,000 a month for premium API access. If they can deploy M2.5 on their own cloud cluster for a fixed cost of $1,500 a month (or even less, depending on volume) and receive comparable output quality, their internal cost calculation fundamentally changes. They transition AI from a usage-based expense dictated by a vendor to a fixed, predictable infrastructure cost that they control.
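The arithmetic behind this scenario can be sketched in a few lines. The $10,000 and $1,500 figures come from the example above; the one-time migration cost is a hypothetical number added here to show how a break-even point might be estimated.

```python
from typing import Optional


def monthly_savings(api_monthly: float, self_hosted_monthly: float) -> float:
    """Monthly cost difference between paying for an API and self-hosting."""
    return api_monthly - self_hosted_monthly


def breakeven_months(
    api_monthly: float,
    self_hosted_monthly: float,
    one_time_migration_cost: float,
) -> Optional[float]:
    """Months needed to recoup the migration cost; None if self-hosting saves nothing."""
    savings = monthly_savings(api_monthly, self_hosted_monthly)
    if savings <= 0:
        return None
    return one_time_migration_cost / savings


API_MONTHLY = 10_000.0          # from the scenario above
SELF_HOSTED_MONTHLY = 1_500.0   # from the scenario above
MIGRATION_COST = 25_500.0       # hypothetical one-time engineering cost

savings = monthly_savings(API_MONTHLY, SELF_HOSTED_MONTHLY)
print(f"Monthly savings: ${savings:,.0f} ({savings / API_MONTHLY:.0%} of API spend)")
print(f"Break-even after {breakeven_months(API_MONTHLY, SELF_HOSTED_MONTHLY, MIGRATION_COST):.1f} months")
```

The point of the sketch is that the headline saving is only part of the picture: a realistic comparison also needs the up-front cost of migrating, which determines how long the switch takes to pay for itself.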

Future Implications: Restructuring the AI Value Chain

The M2.5 release forces a hard reset on several industry assumptions. What does this mean for the future of AI development and usage?

1. Democratization of Customization

The era in which only the largest corporations could afford to build on top-tier models is ending. Smaller companies, startups, academic researchers, and even independent developers can now access strong baseline model weights at no licensing cost. This will likely lead to an explosion of niche, highly specialized applications built upon this foundation. Innovation cycles should also speed up, because lowering the cost of testing a new idea lets teams iterate more often.

2. The Shift from API Provider to Infrastructure Specialist

For Western giants, the primary revenue stream, API calls, faces an existential threat. Their future defense may need to shift away from selling raw model access via API toward providing superior custom fine-tuning, enterprise-grade security wrappers, or specialized, proprietary data moats that open-source models cannot easily replicate.

3. New Risks in Trust and Bias

While the MIT license offers freedom, it also disclaims warranty and liability on the licensor's side, which shifts the burden of responsibility largely to the end user. If M2.5 harbors subtle biases or safety vulnerabilities inherent in its training data, the deploying enterprise bears the consequences. Businesses should bolster their internal governance, auditing, and red-teaming processes for any self-hosted, open-weight model. Transparency regarding training data provenance becomes paramount.

4. Hardening the Open-Source Ecosystem

The presence of a powerful, aggressively priced model from China will likely spur its Western counterparts (Meta, Mistral) to accelerate their own high-performance open releases to maintain market share. This competitive pressure is excellent for developers, ensuring that open-source models continue to close the gap with proprietary leaders at an unprecedented pace.

Actionable Insights for Business Leaders

The release of M2.5 should trigger immediate strategic reviews within any organization currently relying heavily on paid, closed-source LLM APIs for core processes.

- Conduct a Cost-Benefit Audit: Identify your top five highest-volume AI usage cases (e.g., summarization, classification, internal knowledge retrieval). Compare the current API cost of these tasks against the estimated total cost of ownership (TCO) for self-hosting a model of M2.5’s caliber. This analysis must include GPU procurement/rental, maintenance overhead, and engineering time.

- Establish a Dual-Strategy Roadmap: Do not immediately abandon current vendors, but dedicate engineering resources to testing and fine-tuning M2.5 (or similar high-value open models) for non-critical or internal workloads. Create a phased roadmap to migrate cost-sensitive workloads to self-hosted infrastructure over the next 6-12 months.

- Prioritize Governance and Compliance: If you choose the "too cheap to meter" route by self-hosting, you inherit the full responsibility for model safety and data privacy. Invest immediately in internal alignment tuning layers and rigorous input/output filtering systems to mitigate risk.

- Monitor Performance Benchmarks Closely: Track independent evaluations. A model that is 95% as good today might be 99% as good in three months due to community iteration. Your evaluation criteria must remain dynamic.
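The cost-benefit audit in the first item can start as a very small spreadsheet-style calculation. The sketch below is one possible shape for it; the workload names follow the examples given above, and every dollar figure, hour count, and rate is a hypothetical placeholder to be replaced with your own numbers.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class SelfHostEstimate:
    """Monthly self-hosting cost components (all values hypothetical)."""
    gpu_rental: float
    maintenance: float
    engineering_hours: float
    hourly_rate: float

    def monthly_tco(self) -> float:
        # TCO = infrastructure + upkeep + the engineering time spent running it.
        return self.gpu_rental + self.maintenance + self.engineering_hours * self.hourly_rate


def audit(api_costs: Dict[str, float], estimate: SelfHostEstimate) -> Dict[str, object]:
    """Compare total monthly API spend for the listed workloads with self-hosted TCO."""
    api_total = sum(api_costs.values())
    tco = estimate.monthly_tco()
    return {
        "api_total": api_total,
        "self_host_tco": tco,
        "monthly_delta": api_total - tco,
        "self_hosting_cheaper": tco < api_total,
    }


# Placeholder monthly API spend per workload.
api_costs = {
    "summarization": 4_000.0,
    "classification": 2_500.0,
    "knowledge_retrieval": 3_500.0,
}
estimate = SelfHostEstimate(
    gpu_rental=1_500.0, maintenance=500.0, engineering_hours=20.0, hourly_rate=100.0
)

for key, value in audit(api_costs, estimate).items():
    print(f"{key}: {value}")
```

Including engineering time in the TCO matters: in this illustrative setup it is the largest single line item, and leaving it out would make self-hosting look cheaper than it is.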

MiniMax M2.5 serves as a powerful market barometer. It signifies that the foundational layer of AI capability is rapidly commoditizing. The future value in AI will increasingly shift away from *who has the best base model* and toward *who can best apply, secure, and innovate on top of highly efficient, accessible infrastructure.* The era of paying premium prices for basic intelligence is officially under siege.