The AI Price War Begins: How MiniMax M2.5's "Too Cheap to Meter" Model Shifts Global Tech Power

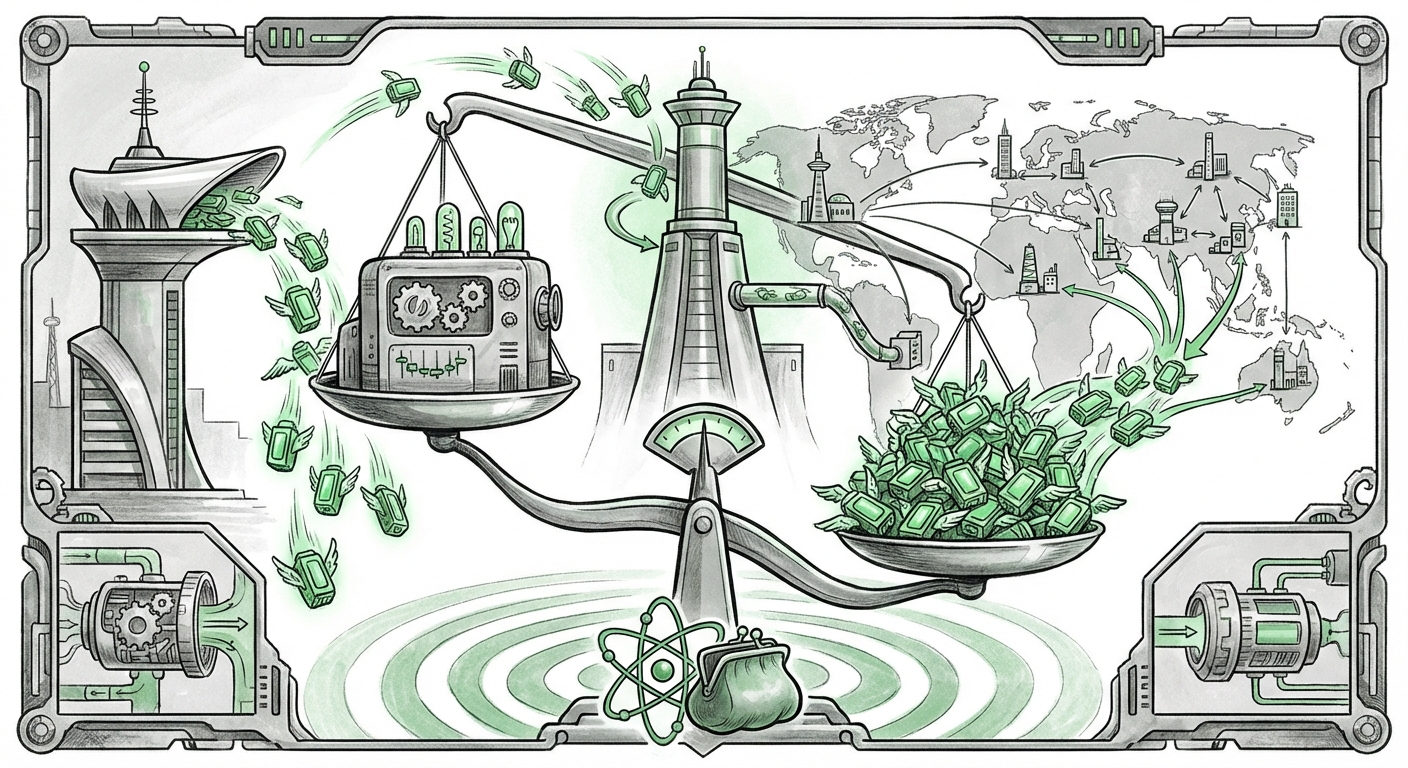

The Artificial Intelligence landscape is undergoing its most significant market realignment since the launch of GPT-4. Shanghai-based MiniMax has released its latest large language model, M2.5, under the highly permissive MIT license. This move is not just another technological iteration; it is an economic declaration: intelligence is about to become too cheap to meter. This development forces a direct confrontation with the business models of Western AI giants, intensifying the technological race while simultaneously democratizing access to powerful AI tools.

For years, the leading edge of AI development has been defined by proprietary models guarded behind expensive Application Programming Interfaces (APIs). MiniMax, by releasing M2.5 openly and aggressively pricing its services below market expectations, is attempting to hijack the adoption curve. To truly understand the gravity of this moment, we must analyze the competitive pressure, the strategic significance of Chinese open-source contributions, and the long-term geopolitical fallout.

1. The Squeeze: Price Compression and Competitive Reactions

The fundamental business model for companies like OpenAI, Anthropic, and Google has relied on training extremely expensive, state-of-the-art models and monetizing access through pay-per-token APIs. MiniMax’s M2.5 enters this ecosystem as a deflationary shockwave. The explicit goal is to make the cost of running competent AI infrastructure so low that it becomes a commodity, similar to cloud storage or basic web hosting.

The Open-Weights Challenge

When a powerful model is released with open weights under a permissive license such as MIT, developers can download the trained weights, run the model on their own servers, fine-tune it for specific tasks, and, crucially, avoid paying usage fees to the original creator. This directly undermines the Western giants’ ability to maintain high margins.

For AI Strategists and VCs, this signals a crucial strategic pivot. If a model like M2.5 achieves 85-90% of GPT-4’s performance—a benchmark that increasingly seems plausible for top open models—enterprises will naturally migrate to the option that saves them substantial operational expenditure (OpEx).

We must anticipate two main reactions from Western incumbents:

- Doubling Down on Frontier Models: Focusing development almost exclusively on models that offer capabilities (like true multimodal reasoning or complexity handling) that are demonstrably superior to any open-source alternative. If they cannot win on cost, they must win on absolute capability.

- Price Erosion: Inevitably, API costs for existing models will drop as the perceived value of "good enough" intelligence decreases. This commoditization phase will put severe pressure on startups built entirely on high-cost API consumption.

2. The Significance of Chinese Open-Weight Models: A Pattern Emerges

MiniMax is not operating in a vacuum. The release of M2.5 should be viewed through the lens of an evolving "China Open Source AI Strategy." For years, Western focus was on the secrecy of proprietary development. Now, Chinese labs are strategically contributing powerful, performant models to the global open-source ecosystem.

Technical Validity: Performance vs. Price

The crucial question for Engineers and Researchers is: Is M2.5 actually good enough? Independent technical reviews and benchmark results are vital here. If M2.5 proves highly efficient, meaning it needs less computing power (fewer GPUs) to complete tasks quickly, its "too cheap to meter" claim becomes technically sound, irrespective of the API price. Such efficiency typically comes from better data curation or an optimized model architecture.

The implications for the global AI landscape are profound. A robust ecosystem of open, high-quality models originating from China ensures that the foundation layers of future AI applications are not exclusively controlled by US-based entities. This decentralizes innovation and accelerates iteration speeds worldwide, as global developers can build upon these shared foundations.

Geopolitical Standardization

From a Policy Maker's perspective, open-sourcing is a powerful tool for soft power and standardization. By making their models accessible under licenses like MIT, Chinese labs effectively export their preferred standards for safety alignment, model structure, and even inference optimization. This subtly influences the global developer base, potentially creating dependencies on Chinese-backed open frameworks, even if the hardware supply chain remains contested.

3. The Economic Earthquake: Implications for Enterprise Adoption

The MIT license is the accelerant in this scenario. It lowers the two primary barriers to massive enterprise adoption: cost, because there are no licensing or per-token fees for self-hosted use, and vendor lock-in. For businesses, from small SaaS providers to large financial institutions, the implications are immediate and require urgent strategic review.

The Shift from API Consumption to Self-Hosting

Historically, smaller companies could only afford to experiment with cutting-edge AI via APIs. Now, the equation shifts. If MiniMax M2.5 offers competitive performance and the necessary weights are public, companies can assess the total cost of ownership (TCO) of self-hosting versus API calls.
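The TCO comparison described above can be sketched as a simple break-even calculation. This is a minimal illustration, not a pricing model: the class names, the 730-hour month, and every number in the usage example are hypothetical assumptions, and the sketch assumes the rented GPUs have enough throughput to serve the whole monthly volume.

```python
from dataclasses import dataclass


@dataclass
class ApiPlan:
    """Pay-per-token API pricing (hypothetical figures only)."""
    usd_per_million_tokens: float


@dataclass
class SelfHostPlan:
    """Fixed monthly cost of running an open-weights model yourself."""
    gpu_usd_per_hour: float       # rental price per GPU (assumed)
    gpu_count: int                # GPUs kept running all month (assumed)
    fixed_usd_per_month: float    # engineering, monitoring, storage (assumed)
    hours_per_month: float = 730.0


def api_monthly_cost(plan: ApiPlan, tokens_per_month: float) -> float:
    """API spend scales linearly with token volume."""
    return plan.usd_per_million_tokens * tokens_per_month / 1_000_000


def self_host_monthly_cost(plan: SelfHostPlan) -> float:
    """Self-hosting spend is treated as flat, independent of volume."""
    gpu_cost = plan.gpu_usd_per_hour * plan.gpu_count * plan.hours_per_month
    return gpu_cost + plan.fixed_usd_per_month


def break_even_tokens(api: ApiPlan, host: SelfHostPlan) -> float:
    """Monthly token volume above which self-hosting is cheaper."""
    return self_host_monthly_cost(host) / api.usd_per_million_tokens * 1_000_000


if __name__ == "__main__":
    api = ApiPlan(usd_per_million_tokens=2.0)
    host = SelfHostPlan(gpu_usd_per_hour=2.0, gpu_count=4,
                        fixed_usd_per_month=4160.0)
    print(f"Self-hosting: ${self_host_monthly_cost(host):,.0f} per month")
    print(f"Break-even:   {break_even_tokens(api, host):,.0f} tokens per month")
```

Below the break-even volume the API remains cheaper; above it, the flat self-hosting cost wins, provided the assumed hardware can actually carry the load.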

For Business Development Teams, this means:

- Customization is King: Enterprises can fine-tune M2.5 on proprietary data without fear of that data leaving their secure environment or incurring high token costs during iterative testing.

- Inference Latency Control: Running models locally or in a private cloud allows companies to optimize for ultra-low latency—critical for real-time applications like customer service bots or algorithmic trading—something API calls cannot guarantee.

The threat to incumbents is vendor lock-in erosion. If the foundational model becomes a utility, the value shifts entirely to the application layer and the proprietary data used for fine-tuning. The ability to switch between MiniMax-derived architectures and Meta’s Llama derivatives, or even future models from startups, becomes seamless.

Future Trajectory: What Happens Next in the AI Arms Race

The MiniMax M2.5 launch is the bellwether for the "post-API era" of AI commoditization. The next 18 months will likely define the surviving business models in the sector.

Actionable Insight for Businesses: Audit Your AI Dependencies

Every technology leader should immediately initiate an "AI Dependency Audit."

- Categorize Use Cases: Segment all current AI workloads into two categories: Frontier Needs (requiring the absolute best, currently proprietary performance) and Commodity Needs (tasks where 85% accuracy at 10% of the cost is acceptable).

- Pilot Open-Source Tiers: Begin testing M2.5 or similar high-performing open models against your commodity needs. If M2.5 demonstrates strong inference efficiency, begin allocating R&D resources toward internal fine-tuning and deployment pipelines.

- Re-evaluate Vendor Lock-in: Assess how difficult it would be to switch from a proprietary provider to a self-hosted open model. The MIT license makes this switching cost extremely low from a legal perspective, but the engineering cost of migration must be accounted for now.
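The first audit step, splitting workloads into Frontier and Commodity needs, can be sketched in a few lines. This is an illustrative sketch only: the `Workload` fields, the 0.85 quality threshold (taken from the "85% accuracy" framing above), and the idea of scoring quality relative to the best proprietary model are all assumptions, and real audits would need their own evaluation data.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    # Minimum acceptable quality, relative to the best proprietary
    # model (0.0 to 1.0). A hypothetical score from your own evaluation.
    min_quality: float
    monthly_api_spend_usd: float


def categorize(w: Workload, open_model_quality: float = 0.85) -> str:
    """Commodity if the assumed open-model quality meets the workload's bar."""
    return "commodity" if w.min_quality <= open_model_quality else "frontier"


def audit(workloads: list[Workload],
          open_model_quality: float = 0.85) -> dict[str, list[Workload]]:
    """Group workloads by category, largest API spend first in each group."""
    result: dict[str, list[Workload]] = {"frontier": [], "commodity": []}
    for w in workloads:
        result[categorize(w, open_model_quality)].append(w)
    for bucket in result.values():
        bucket.sort(key=lambda w: w.monthly_api_spend_usd, reverse=True)
    return result


if __name__ == "__main__":
    # Hypothetical workloads and spend figures.
    report = audit([
        Workload("ticket triage", 0.80, 5000.0),
        Workload("contract review", 0.98, 12000.0),
        Workload("email drafts", 0.70, 9000.0),
    ])
    for category, items in report.items():
        print(category, [w.name for w in items])
```

Sorting each group by spend puts the largest commodity workloads at the top, which is where a pilot on an open model would save the most.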

The Next Frontier: Efficiency Over Scale

For researchers and engineers, the focus shifts from simply "bigger models are better" to "smarter, smaller, and faster models rule the enterprise." The race is now on to optimize performance-per-watt and performance-per-dollar. Models that can achieve high utility on smaller, cheaper hardware (edge computing or mid-range data centers) will become the default enterprise choice.
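Performance-per-dollar and performance-per-watt can be made concrete with two small ratios. The sketch below assumes throughput is measured in generated tokens per second, which is one common but not universal choice; the deployment names and all figures are hypothetical.

```python
def tokens_per_dollar(tokens_per_second: float, usd_per_hour: float) -> float:
    """Tokens generated for each dollar of hardware rental."""
    return tokens_per_second * 3600 / usd_per_hour


def tokens_per_joule(tokens_per_second: float, watts: float) -> float:
    """Tokens generated per joule of energy (1 watt = 1 joule per second)."""
    return tokens_per_second / watts


def rank_by_cost_efficiency(
    deployments: dict[str, tuple[float, float]],
) -> list[str]:
    """Order deployment names by tokens per dollar, best first.

    Each value is (tokens_per_second, usd_per_hour).
    """
    return sorted(
        deployments,
        key=lambda name: tokens_per_dollar(*deployments[name]),
        reverse=True,
    )


if __name__ == "__main__":
    # Hypothetical throughput and price figures for two setups.
    candidates = {
        "large model, 8 GPUs": (400.0, 16.0),
        "small model, 1 GPU": (150.0, 2.0),
    }
    print(rank_by_cost_efficiency(candidates))
```

Under these made-up numbers the smaller setup wins on tokens per dollar despite lower raw throughput, which is the trade-off the paragraph above describes.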

This movement, spearheaded by releases like MiniMax M2.5, signals that the foundational layer of generative AI is democratizing at an unprecedented pace. While the leading proprietary labs will continue to push the absolute boundaries of intelligence, the vast majority of commercial value will soon be generated by applications running on models that are "too cheap to meter." The playing field is leveling, and innovation will now move faster than ever, driven by accessibility rather than exclusivity.

The global AI chessboard has just seen a major move, shifting the focus from proprietary control to accessible, high-efficiency innovation emanating from new global centers of power.