The Structured Web: How Google's WebMCP Is Forging the Internet for AI Agents

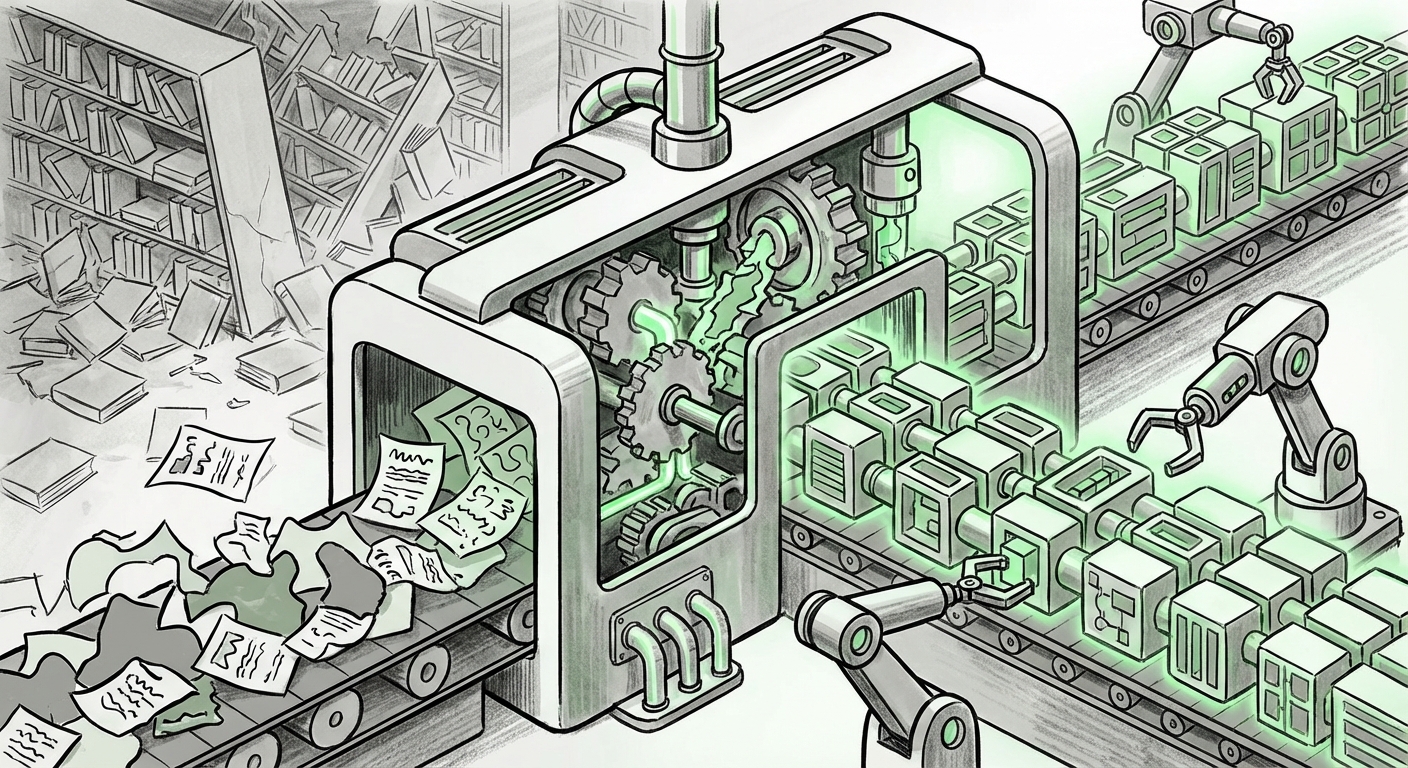

For decades, the World Wide Web has been a vast, glorious, and messy library built primarily for human consumption. We read text, we click buttons, and we scroll through pages designed around our visual perception and our patience. Look closely at the trajectory of artificial intelligence, however, and a tectonic shift is underway: the internet is being redesigned not for us, but for our digital successors, AI agents.

A leading indicator of this transition is Google’s WebMCP initiative, whose name builds on the Model Context Protocol (MCP), an open protocol for connecting AI models to external tools and data. As reported by The Decoder, WebMCP aims to transform websites from unstructured content hubs into standardized, machine-readable interfaces. This isn't a minor upgrade to search engine optimization; it is a blueprint for the next generation of the internet, where AI doesn't just *find* information but actively *uses* the web to complete complex, multi-step tasks.

The Great Transition: From Browsing to Executing

To understand WebMCP’s significance, we must first understand the evolution of AI interaction. Early AI assistants were like librarians: you asked for a book (information retrieval), and they pointed you to the shelf (a link). Modern LLMs are like highly knowledgeable research assistants who can summarize that book.

The next phase, **Agentic AI**, turns these assistants into operational employees. An agentic AI doesn't just search for flight prices; it browses Kayak, selects the cheapest non-stop option, checks your calendar, books the ticket using your stored payment information, and emails you the confirmation—all autonomously. For this to happen reliably across millions of disparate websites, those websites must speak a common machine language. That is WebMCP’s core promise.
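The difference between finding and executing is easiest to see as a chain of structured calls. Below is a minimal TypeScript sketch of the flight example, assuming a travel site and a calendar each exposed their steps as callable tools. `searchFlights`, `isCalendarFree`, and `bookFlight` are hypothetical in-memory stubs, not WebMCP, Kayak, or calendar APIs, and the email step is left out.

```typescript
// Hypothetical in-memory stand-ins for tools a compliant site might expose.
// None of these names come from WebMCP or any real service.
interface Flight {
  id: string;
  price: number;     // in USD
  stops: number;
  departure: string; // ISO 8601 date, e.g. "2025-06-01"
}

interface Booking {
  confirmation: string;
  flightId: string;
}

const catalog: Flight[] = [
  { id: "F1", price: 320, stops: 1, departure: "2025-06-01" },
  { id: "F2", price: 410, stops: 0, departure: "2025-06-01" },
  { id: "F3", price: 380, stops: 0, departure: "2025-06-02" },
];

// Dates on which the user already has commitments.
const busyDates = new Set<string>(["2025-06-02"]);

function searchFlights(): Flight[] {
  return catalog;
}

function isCalendarFree(date: string): boolean {
  return !busyDates.has(date);
}

function bookFlight(flight: Flight): Booking {
  return { confirmation: `CONF-${flight.id}`, flightId: flight.id };
}

// The agent's plan: search, keep non-stop flights, drop calendar clashes,
// pick the cheapest remaining option, then book it.
function planAndBook(): Booking | null {
  const candidates = searchFlights()
    .filter((f) => f.stops === 0)
    .filter((f) => isCalendarFree(f.departure))
    .sort((a, b) => a.price - b.price);
  return candidates.length > 0 ? bookFlight(candidates[0]) : null;
}

console.log(planAndBook()); // { confirmation: 'CONF-F2', flightId: 'F2' }
```

The cheapest non-stop flight (`F3`) is skipped because it clashes with the calendar, so the agent books `F2`. Each step consumes the structured output of the previous one, which only works reliably if every site returns data in a predictable shape.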

This movement is not limited to Google. Competitors such as OpenAI and Microsoft are also prioritizing ways for their models to interact robustly with external tools and interfaces, and there is broad industry interest in standardizing how agents browse and act on the web. WebMCP is Google’s proposed standard for that common API layer: a protocol that would let any AI agent, regardless of who built it, interact predictably with any compliant website.

In essence, we are moving from a system optimized for the $\text{Human} \rightarrow \text{Interface} \rightarrow \text{Action}$ workflow to one optimized for the $\text{AI Agent} \rightarrow \text{Protocol} \rightarrow \text{Action}$ workflow. This transition fundamentally redefines what the "front end" of the web will be.

Technical Deep Dive: WebMCP vs. The Old Guard (Schema.org)

How does this standardization work? For years, webmasters have used **Schema.org** markup—small bits of code that describe what content *is* (e.g., "this is a Product Price," "this is a Review Rating"). Schema helps search engines understand content contextually.
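For comparison, here is what descriptive Schema.org markup looks like as JSON-LD, built as a TypeScript object. `Product`, `Offer`, `price`, `priceCurrency`, and `availability` are standard Schema.org vocabulary; the shoe and its price are invented. The markup states what the item is and what it costs, but on its own it gives an agent no callable way to buy it.

```typescript
// Descriptive Schema.org markup for a product page, expressed as JSON-LD.
// The vocabulary is standard Schema.org; the product data is made up.
const productJsonLd = {
  "@context": "https://schema.org",
  "@type": "Product",
  name: "Trail Runner 2",
  offers: {
    "@type": "Offer",
    price: "89.99",
    priceCurrency: "USD",
    availability: "https://schema.org/InStock",
  },
};

// Sites typically embed JSON-LD in the page inside a script tag of this type.
const scriptTag =
  `<script type="application/ld+json">${JSON.stringify(productJsonLd)}</script>`;

console.log(scriptTag);
```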

WebMCP appears to be a deeper, more *prescriptive* layer. It seeks to define not just what the data is, but which actions a site offers and how an agent should invoke them. If Schema.org tells the AI, "This is a product, and this is its price," WebMCP aims to tell the AI, "This is the standardized identifier for the checkout function, these are the inputs it accepts, and calling it initiates the secure transaction sequence according to these rules."
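A functional layer, by contrast, describes an action an agent can call. The sketch below is hypothetical: the `ToolDefinition` shape (a name, a description, a JSON-Schema-style `inputSchema`, and an `execute` function) is loosely modelled on how MCP describes tools and is not taken from the WebMCP specification, and `startCheckout` is an invented tool. What it illustrates is the shift described above: the site declares which inputs an action accepts and rejects calls that do not match.

```typescript
// Hypothetical tool shape, loosely modelled on MCP tool descriptions.
// This is NOT the WebMCP API.
type JsonType = "string" | "number";

interface ToolDefinition {
  name: string;
  description: string;
  inputSchema: {
    type: "object";
    properties: Record<string, { type: JsonType }>;
    required: string[];
  };
  execute: (args: Record<string, unknown>) => string;
}

// An invented checkout tool a shop might expose to agents.
const startCheckout: ToolDefinition = {
  name: "startCheckout",
  description: "Begin checkout for a product in the given quantity.",
  inputSchema: {
    type: "object",
    properties: { productId: { type: "string" }, quantity: { type: "number" } },
    required: ["productId", "quantity"],
  },
  execute: (args) => `checkout started for ${args.quantity} x ${args.productId}`,
};

// Minimal check of required keys and primitive types before dispatch.
function validate(tool: ToolDefinition, args: Record<string, unknown>): string[] {
  const errors: string[] = [];
  for (const key of tool.inputSchema.required) {
    if (!(key in args)) errors.push(`missing ${key}`);
  }
  for (const [key, spec] of Object.entries(tool.inputSchema.properties)) {
    if (key in args && typeof args[key] !== spec.type) {
      errors.push(`${key} should be a ${spec.type}`);
    }
  }
  return errors;
}

// The agent-facing entry point: validate first, then run the action.
function callTool(tool: ToolDefinition, args: Record<string, unknown>): string {
  const errors = validate(tool, args);
  if (errors.length > 0) throw new Error(`${tool.name}: ${errors.join("; ")}`);
  return tool.execute(args);
}

console.log(callTool(startCheckout, { productId: "shoe-42", quantity: 1 }));
// checkout started for 1 x shoe-42
```

The hand-rolled `validate` only checks required keys and primitive types; a real site would use a complete JSON Schema validator and would still need authentication and user confirmation before charging anyone.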

For developers, this requires an unprecedented level of specificity. Comparing WebMCP with Schema.org as a way of serving AI agents reveals a crucial technical fork in the road. Will WebMCP become the necessary successor to Schema.org, forcing a total overhaul of how sites are built? Or will it act as an additional, functional layer on top of existing semantic markup? Either way, web developers and SEO specialists must prepare for a shift from semantic labeling to functional protocol adoption.

The Economic Shockwave: Disruption for Digital Publishers

While the technological elegance of a structured web is appealing, the economic implications for current web operators are stark. Websites often rely on friction—the necessary steps a human must take—to generate value. This value is typically captured via advertising impressions, time-on-site metrics, and lead generation forms.

If an AI agent can bypass the content entirely to achieve a goal, the current digital revenue model breaks down. Imagine an agent shopping for shoes. Instead of navigating ten different e-commerce sites, clicking through product galleries, and viewing ads, the agent uses WebMCP to identify the lowest price and execute the purchase in milliseconds.

This resembles "headless browsing," in which automated browsers load pages without a human ever viewing them, but goes further: with WebMCP, the site itself offers the shortcut. Reports on the economic impact of headless browsing on digital publishing revenue already signal concern over automated traffic. With WebMCP, that kind of automated access becomes the intended function rather than an abuse. Content creators must face a difficult reality: if their primary value proposition is guiding human eyeballs to a transaction, and AI agents can jump straight to the transaction, their existing monetization strategies may become obsolete.

Businesses may need to pivot rapidly to a subscription model, direct data licensing, or B2B data services, rather than relying on ad-supported or human-traffic models. The value proposition shifts from "the best content" to "the most reliable, structured data endpoints."

Navigating the New Frontier: Ethics, Security, and Governance

With great operational power comes significant systemic risk. If AI agents are empowered to act across the web using standardized protocols, the potential for large-scale errors, exploitation, and fraud grows sharply. That makes the ethical concerns raised by AI agents acting on the web an urgent topic of discussion.

1. Liability and Error Propagation

If an agent, operating under the WebMCP standard, misinterprets a structured command and books ten incorrect hotel rooms instead of one, who is liable? Is it the AI developer, the website operator who provided the faulty protocol structure, or the agent owner? The current legal framework for contractual agreements and consumer protection is ill-equipped for autonomous digital execution.
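Whatever the legal answer turns out to be, one engineering safeguard against the ten-rooms scenario is to make booking calls idempotent. In the hypothetical sketch below, the agent attaches an idempotency key to each booking intent; if the same key arrives again (for example on a retry), the site returns the original booking instead of creating a new one, and it refuses unusually large quantities unless the user has explicitly confirmed. `HotelBookingService`, `maxUnconfirmedRooms`, and the request shape are invented for illustration.

```typescript
interface BookingRequest {
  idempotencyKey: string; // generated once per user intent, reused on retries
  hotelId: string;
  rooms: number;
  userConfirmed: boolean; // true only if the human approved this exact request
}

interface BookingResult {
  bookingId: string;
  rooms: number;
}

// Hypothetical site-side booking service with two guards.
class HotelBookingService {
  private readonly results = new Map<string, BookingResult>();
  private readonly maxUnconfirmedRooms: number;
  private nextId = 1;

  constructor(maxUnconfirmedRooms: number) {
    this.maxUnconfirmedRooms = maxUnconfirmedRooms;
  }

  book(req: BookingRequest): BookingResult {
    // Guard 1: a request with a known key returns the original booking.
    const previous = this.results.get(req.idempotencyKey);
    if (previous !== undefined) return previous;

    if (!Number.isInteger(req.rooms) || req.rooms < 1) {
      throw new Error("rooms must be a positive integer");
    }
    // Guard 2: unusually large orders need explicit human confirmation.
    if (req.rooms > this.maxUnconfirmedRooms && !req.userConfirmed) {
      throw new Error(`booking ${req.rooms} rooms requires user confirmation`);
    }

    const result: BookingResult = { bookingId: `B${this.nextId++}`, rooms: req.rooms };
    this.results.set(req.idempotencyKey, result);
    return result;
  }
}

const service = new HotelBookingService(3);
const request: BookingRequest = { idempotencyKey: "trip-123", hotelId: "H9", rooms: 1, userConfirmed: false };
const first = service.book(request);
const retry = service.book(request); // e.g. the agent retries after a timeout
console.log(first.bookingId === retry.bookingId); // true: still only one booking
```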

2. Security and Rate Limiting

A standardized protocol also creates a single, predictable attack surface. Bad actors or poorly controlled agents could overwhelm services by executing high-frequency, automated transactions. Web operators will need robust new security layers designed specifically to distinguish a legitimate, high-volume agent from a denial-of-service attack, potentially requiring new forms of AI-centric authentication.
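Rate limiting is the most familiar of these defenses. Below is a minimal token-bucket limiter keyed by an agent identifier: each agent gets a bucket of `capacity` tokens that refills at `refillPerSecond`, and each request spends one token. How an agent is reliably identified is exactly the open authentication question raised above, so the `agentId` string here is an assumption; the current time is passed in explicitly so the behavior is easy to test.

```typescript
interface Bucket {
  tokens: number;
  lastRefillMs: number;
}

// Token-bucket rate limiter with one bucket per (assumed) agent identifier.
class AgentRateLimiter {
  private readonly buckets = new Map<string, Bucket>();
  private readonly capacity: number;
  private readonly refillPerSecond: number;

  constructor(capacity: number, refillPerSecond: number) {
    this.capacity = capacity;
    this.refillPerSecond = refillPerSecond;
  }

  // Returns true if the request may proceed, false if it should be rejected
  // (for example with HTTP 429 Too Many Requests).
  allow(agentId: string, nowMs: number): boolean {
    const bucket = this.buckets.get(agentId) ?? { tokens: this.capacity, lastRefillMs: nowMs };
    // Add tokens for the time elapsed since the last refill, capped at capacity.
    const elapsedSeconds = (nowMs - bucket.lastRefillMs) / 1000;
    bucket.tokens = Math.min(this.capacity, bucket.tokens + elapsedSeconds * this.refillPerSecond);
    bucket.lastRefillMs = nowMs;
    this.buckets.set(agentId, bucket);

    if (bucket.tokens >= 1) {
      bucket.tokens -= 1;
      return true;
    }
    return false;
  }
}

const limiter = new AgentRateLimiter(2, 1); // burst of 2, then 1 request per second
console.log([0, 0, 0, 1000].map((t) => limiter.allow("agent-a", t)));
// [ true, true, false, true ]
```

A token bucket tolerates short bursts while capping the sustained rate, which suits agents that legitimately fire a few calls at once; telling a well-behaved agent from an attacker still depends on trustworthy identification.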

3. Digital Literacy Redefined

For the average user, digital literacy will need to expand beyond knowing how to spot a phishing email. Users will need to understand which of their activities are being delegated to agents, and what level of trust they are extending to these automated employees. The ability to audit an agent's actions will become a crucial life skill.

Actionable Insights for the Transition

The shift toward an AI-executable internet is inevitable, driven by the sheer efficiency gains it offers. For stakeholders across the technological ecosystem, proactive adaptation is key:

- For Web Developers & Architects: Begin exploring protocols that dictate *functionality* over mere semantics. Monitor WebMCP specifications closely and develop internal sandboxes to test how existing site functions (checkout, registration, inventory checks) could be exposed via standardized agent interfaces.

- For E-commerce & Publishers: Immediately audit your revenue streams against the threat of "headless" consumption. Start experimenting with direct data subscription models or tiered access where agents pay for bulk, structured access, while human users retain the traditional, ad-supported experience.

- For AI Developers: Focus on building sophisticated internal planning and error-checking mechanisms. Reliable agentic AI requires agents that can safely interpret and verify the structured data provided by WebMCP interfaces before executing irreversible actions.

- For Policy Makers: Begin drafting flexible regulatory frameworks that address autonomous digital contracting and liability. The speed of technological adoption will far outpace traditional legislative cycles.
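For AI developers, "verify before executing" can start as simply as re-checking a site's structured quote against the user's constraints before calling an irreversible tool. The sketch below is hypothetical: `Quote`, `UserConstraints`, and `verifyQuote` are invented names, amounts are kept in integer cents to avoid floating-point rounding, and a real agent would check far more (merchant identity, refund terms, delivery dates).

```typescript
interface QuoteLine {
  sku: string;
  unitPrice: number; // in cents
  quantity: number;
}

interface Quote {
  currency: string;
  lines: QuoteLine[];
  total: number; // in cents, as reported by the site
}

interface UserConstraints {
  currency: string;
  maxTotal: number; // in cents
  maxQuantityPerItem: number;
}

// Returns a list of problems; an empty list means the quote may be executed.
function verifyQuote(quote: Quote, limits: UserConstraints): string[] {
  const problems: string[] = [];
  // Recompute the total instead of trusting the site's figure.
  const recomputed = quote.lines.reduce((sum, l) => sum + l.unitPrice * l.quantity, 0);
  if (recomputed !== quote.total) {
    problems.push(`site total ${quote.total} does not match line items ${recomputed}`);
  }
  if (quote.currency !== limits.currency) {
    problems.push(`unexpected currency ${quote.currency}`);
  }
  if (quote.total > limits.maxTotal) {
    problems.push(`total ${quote.total} exceeds budget ${limits.maxTotal}`);
  }
  for (const line of quote.lines) {
    if (line.quantity > limits.maxQuantityPerItem) {
      problems.push(`${line.sku}: quantity ${line.quantity} exceeds ${limits.maxQuantityPerItem}`);
    }
  }
  return problems;
}

const limits: UserConstraints = { currency: "USD", maxTotal: 20000, maxQuantityPerItem: 1 };
const quote: Quote = {
  currency: "USD",
  lines: [{ sku: "trail-runner-2", unitPrice: 8999, quantity: 10 }],
  total: 89990,
};
// Reports the over-budget total and the excess quantity, so the agent stops.
console.log(verifyQuote(quote, limits));
```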

Google's WebMCP is more than just a new standard; it is a declaration of intent. It signals that the next trillion dollars of digital value will flow not through pages visited by humans, but through interactions executed by machines. The internet is being reforged into a global, shared database ready for its new, autonomous workforce.