The Operational Web: How Google's WebMCP is Forging the Internet's Backend for Autonomous AI Agents

For over three decades, the World Wide Web has been built primarily for human eyes. We navigate using hyperlinks, interpret visual layouts, and decipher meaning through context and graphic design. This massive, shared repository of information is fundamentally unstructured, relying on human intuition to bridge the gap between raw data and actionable intent. This era is rapidly drawing to a close.

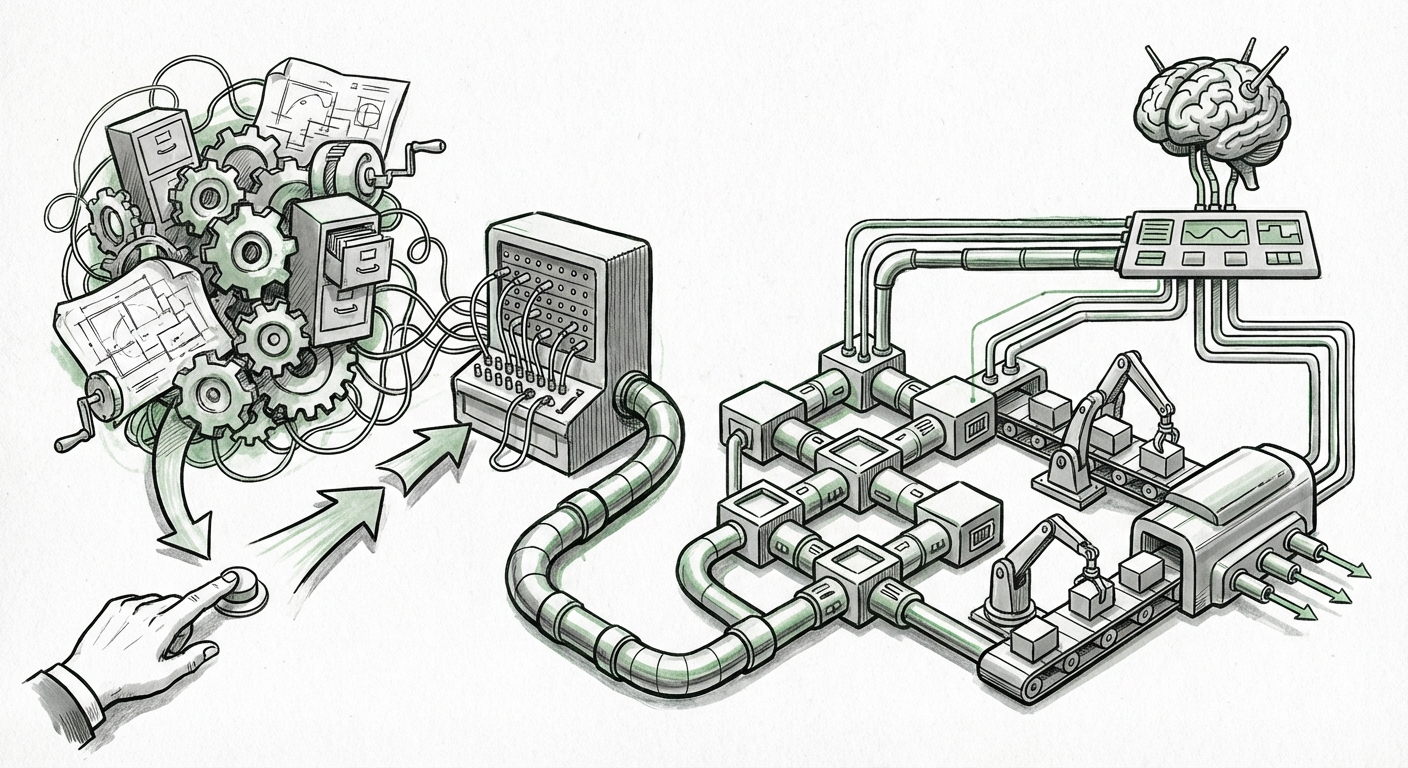

The arrival of sophisticated AI models capable of complex reasoning and planning marks a technological inflection point. Big AI no longer just wants to read the web; it wants to use it. That ambition has produced major infrastructure projects, most notably Google’s WebMCP (Web Model Context Protocol), which seeks to turn websites into standardized, machine-readable interfaces. This is not merely an SEO update; it is the blueprint for transforming the internet into the operational backend for a global network of autonomous AI agents.

The Great Restructuring: From Documents to Databases

Imagine needing to order a pizza. A human opens a browser, looks for the menu, finds the customization options, locates the checkout button, and enters payment details. This process involves constant, real-time decision-making based on visual cues.

For an AI agent, this is chaos. Without standardization, an agent must rely on brittle screen-scraping techniques: it interprets the visual layout of a site today, only to fail tomorrow when the site owner moves a button slightly. This is why WebMCP is crucial. It represents a push, possibly building on extended **Schema.org** vocabularies (a direction suggested by ongoing discussion of Schema.org structured data for AI agents), to embed explicit instructions directly into web code. The goal is to move from "Here is a picture of a button" to "This element is the `OrderConfirmationAction` node, and clicking it executes the transaction protocol."
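To make the contrast with screen-scraping concrete, here is a minimal sketch of an agent reading a declared action instead of hunting for a button. It assumes the site publishes Schema.org JSON-LD; the page, the restaurant, and the `pizza.example` URL are invented for illustration, and the real `OrderAction` type stands in for the illustrative `OrderConfirmationAction` above. It does not show how WebMCP itself exposes actions.

```python
import json
from html.parser import HTMLParser

# Hypothetical page: the markup and the URL are made up for illustration.
PAGE = """
<html><body>
  <button id="btn-17">Place order</button>
  <script type="application/ld+json">
  {
    "@context": "https://schema.org",
    "@type": "Restaurant",
    "name": "Example Pizza",
    "potentialAction": {
      "@type": "OrderAction",
      "target": {
        "@type": "EntryPoint",
        "urlTemplate": "https://pizza.example/api/orders",
        "httpMethod": "POST"
      }
    }
  }
  </script>
</body></html>
"""


class JsonLdExtractor(HTMLParser):
    """Collects the parsed contents of every application/ld+json script tag."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.documents = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self.documents.append(json.loads("".join(self._buffer)))
            self._in_jsonld = False


def find_action(page_html, action_type):
    """Return the first declared action of the given type, or None."""
    parser = JsonLdExtractor()
    parser.feed(page_html)
    parser.close()
    for doc in parser.documents:
        actions = doc.get("potentialAction", [])
        if isinstance(actions, dict):
            actions = [actions]
        for action in actions:
            if action.get("@type") == action_type:
                return action
    return None


# The lookup depends on the declared type, not on where the button sits.
action = find_action(PAGE, "OrderAction")
```

Moving or restyling the button leaves this lookup untouched, because the agent never reads the layout at all.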

This is the essential technical challenge: If we want AI agents to reliably complete tasks—like booking a complex itinerary or managing subscription renewals across multiple services—they must interact with these services through predictable, standardized Application Programming Interfaces (APIs), whether those APIs are explicitly coded or inferred through standardized metadata. WebMCP pushes the entire web toward this API-like state.
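An API "inferred through standardized metadata" can be pictured as follows: once a page declares where and how an action is performed, an agent can assemble the HTTP call without any site-specific code. This is a sketch under assumptions. The `billing.example` endpoint, the payload fields, and the choice of `UpdateAction` are hypothetical, and the request is only built, never sent.

```python
import json
import urllib.request


def build_request(action, payload):
    """Turn a declared EntryPoint into an unsent urllib Request object."""
    target = action["target"]
    body = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(
        url=target["urlTemplate"],
        data=body,
        headers={"Content-Type": target.get("contentType", "application/json")},
        method=target.get("httpMethod", "POST"),
    )


# Hypothetical metadata a subscription service might publish.
renewal_action = {
    "@type": "UpdateAction",
    "target": {
        "@type": "EntryPoint",
        "urlTemplate": "https://billing.example/api/renewals",
        "httpMethod": "POST",
        "contentType": "application/json",
    },
}

# Build (but do not send) the renewal request.
request = build_request(renewal_action, {"subscriptionId": "sub-001", "term": "P1Y"})
print(request.get_method(), request.full_url)
```

The same `build_request` function would work for any service that publishes the same metadata shape, which is the point of standardizing it.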

The Technical Blueprint: Schema.org’s Agent Upgrade

The technology driving this is rooted in the concept of the Semantic Web, made practical. For WebMCP to work, website operators must adopt formal ontologies. When we look at how major players are integrating structure, we see this focus intensify. For example, recent industry discussions suggest Schema.org vocabulary is being extended far beyond simple product descriptions or recipes. We are seeing the formalization of vocabularies for "Agent Capabilities," "Required Permissions," and "Action Flows."
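What such vocabularies might look like in practice is still an open question. The sketch below models the three ideas named above (capabilities, required permissions, and action flows) as plain Python data. Every name in it is hypothetical; none of it is taken from Schema.org or from the WebMCP proposal.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Capability:
    """A hypothetical description of one thing a site lets agents do."""
    name: str
    required_permissions: frozenset
    flow: tuple  # ordered step names the agent must follow


def is_allowed(capability, granted_permissions):
    """True when the user has granted every permission the capability needs."""
    return capability.required_permissions <= set(granted_permissions)


def next_step(capability, completed_steps):
    """Return the next step in the flow, or None once the flow is finished."""
    done = tuple(completed_steps)
    if done != capability.flow[:len(done)]:
        raise ValueError("steps were completed out of order")
    if len(done) == len(capability.flow):
        return None
    return capability.flow[len(done)]


order_pizza = Capability(
    name="order_pizza",
    required_permissions=frozenset({"read_menu", "charge_payment"}),
    flow=("select_items", "confirm_address", "pay"),
)

print(is_allowed(order_pizza, ["read_menu"]))       # payment not granted yet
print(next_step(order_pizza, ["select_items"]))     # what to do next
```

Keeping permissions and flow in the declaration means an agent can refuse a task up front instead of failing halfway through a checkout.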

This transition satisfies a core requirement of the emerging **Agent Economy**, in which models depend on tools and access to get work done. Without this structure, an AI agent is merely a sophisticated calculator; with it, the internet becomes its toolbox, capable of executing the instructions we give it.

The Agent Economy: Agents That Browse, Shop, and Execute

The shift from search engine to task agent is perhaps the most significant development since the arrival of generative AI itself. Search engines retrieve information; agents retrieve information and then execute the necessary steps based on it. This shift from web search to web agents that browse and execute fundamentally changes the nature of online interaction.

Consider the competitive landscape. Microsoft and OpenAI are not standing still. Their strategies, often framed as Microsoft's Bing agent infrastructure versus Google's, also point toward equipping their large models with robust, reliable methods of interacting with the external world. If one major platform standardizes agent interaction through WebMCP, the pressure mounts on others to either comply or develop competing infrastructure. This pushes the industry toward convergence on machine-executability.

From Browsing to Acting

For the end-user, the benefit is profound: delegation. Instead of spending hours comparing prices, filling out forms, and managing logistics, a user instructs their agent: "Book me a flight to London next Tuesday and a hotel within walking distance of the financial district for under $300 a night." The agent, using structured data provided via protocols like WebMCP, can carry out these tasks in seconds and far more reliably than it could by scraping pages.
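The hotel half of that request reduces to a filter once the offers arrive as structured data. The sketch below assumes each offer carries a nightly price and coordinates, and it treats "walking distance" as one kilometer. The hotels, prices, coordinates, and threshold are all invented for illustration.

```python
import math

# Approximate point in the City of London, used as the "financial district".
FINANCIAL_DISTRICT = (51.5155, -0.0922)

# Hypothetical structured offers an agent might have collected.
HOTELS = [
    {"name": "Example Inn", "price_usd": 250, "lat": 51.5150, "lon": -0.0900},
    {"name": "Riverside Example", "price_usd": 180, "lat": 51.5033, "lon": -0.1195},
    {"name": "Grand Example", "price_usd": 420, "lat": 51.5160, "lon": -0.0910},
]


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points, in kilometers."""
    earth_radius_km = 6371.0
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = (math.sin(d_phi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2)
    return 2 * earth_radius_km * math.asin(math.sqrt(a))


def find_hotels(hotels, center, max_price_usd, max_walk_km):
    """Keep hotels priced under the cap and within the walking radius."""
    lat0, lon0 = center
    return [
        h for h in hotels
        if h["price_usd"] < max_price_usd
        and haversine_km(lat0, lon0, h["lat"], h["lon"]) <= max_walk_km
    ]


matches = find_hotels(HOTELS, FINANCIAL_DISTRICT, max_price_usd=300, max_walk_km=1.0)
print([h["name"] for h in matches])
```

The hard part is not the filter but getting every hotel site to publish price and location in a shape the agent can trust.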

This capability moves AI from being a creative assistant to a true digital colleague, fundamentally shifting productivity curves across every industry.

The Disruption: Who Gets Paid in the Operational Web?

While the technological promise is immense, the economic disruption for the established web ecosystem cannot be overstated. This brings us to the serious implications noted for website operators who rely on human traffic.

The current web economy is largely predicated on the human visitor. Revenue streams such as display advertising, affiliate commissions based on human clicks, and even site conversions are all measured by human engagement. What happens when the majority of "traffic" is machine-driven, autonomous execution?

Analyses of the impact of AI agents on website monetization and direct traffic point to a looming crisis for mid-tier publishers and e-commerce sites that lack proprietary APIs:

- The Death of the Click: If an agent executes a purchase directly via a structured protocol (WebMCP), the site receives the transaction but bypasses the ad impression, the direct navigation, and the user journey that advertisers pay for.

- Content Value Erosion: If an agent can perfectly summarize or execute based on structured data, the value proposition of simply hosting a static page diminishes drastically. Content creators must shift their monetization to access fees, exclusive agent toolkits, or direct contracts, rather than relying on passive viewing.

- Agent Scraping vs. Fair Use: The ease with which agents can extract information (even structured information) raises severe questions about data ownership and whether companies should be compensated when an agent "uses" their standardized information to complete a task for a third party.

For business owners accustomed to optimizing for Google Search, the challenge now is Agent Optimization. It’s less about keywords and more about providing clean, unambiguous APIs for the machines that will soon be doing the shopping and booking.

Actionable Insights for Navigating the Transition

For organizations still operating on the legacy web model, inaction is not an option. The future demands adaptability. Here are the primary actionable insights derived from this emerging infrastructure:

1. Prioritize Machine Readability Over Visual Design

If your business relies on users completing complex workflows on your site (e.g., B2B lead generation, complex bookings, subscription management), you must aggressively invest in structuring your content using industry standards like Schema.org. Think of your website's HTML not just as code for Chrome or Safari, but as a standardized interface contract for the coming generation of AI agents. If the structure is ambiguous, the agent will either fail or choose a competitor whose structure is clearer.
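One way to catch ambiguity before an agent does is to lint your own structured data. The sketch below checks a Schema.org `Product` for offer fields an agent would plausibly need to complete a purchase. `price`, `priceCurrency`, and `availability` are real Schema.org `Offer` properties, but treating them as required is an assumption made here, not a rule from any standard.

```python
# Assumed minimum an agent needs to act on an offer (not an official list).
REQUIRED_OFFER_FIELDS = ("price", "priceCurrency", "availability")


def missing_offer_fields(product):
    """List the assumed-required offer fields that a product fails to declare."""
    offers = product.get("offers")
    if not isinstance(offers, dict):
        return list(REQUIRED_OFFER_FIELDS)
    return [name for name in REQUIRED_OFFER_FIELDS if name not in offers]


clear_product = {
    "@type": "Product",
    "name": "Example Widget",
    "offers": {
        "@type": "Offer",
        "price": "19.99",
        "priceCurrency": "USD",
        "availability": "https://schema.org/InStock",
    },
}

ambiguous_product = {
    "@type": "Product",
    "name": "Example Widget",
    "offers": {"@type": "Offer", "price": "19.99"},
}

print(missing_offer_fields(clear_product))
print(missing_offer_fields(ambiguous_product))
```

A check like this can run in a build pipeline, so a missing currency is caught at deploy time rather than by an agent that quietly picks a competitor.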

2. Develop API-First Strategies

The ultimate goal of WebMCP is to make the web function like a set of robust APIs. Businesses should proactively build and secure their own explicit APIs for core functions (inventory checking, checkout, data submission). Relying on agents to navigate your front-end interface is a temporary solution; proprietary, secure APIs will be the long-term standard for high-value transactions.
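As a rough picture of what "explicit APIs for core functions" means, here is a toy request handler for inventory checks and checkout, written without a web framework so it stays self-contained. The routes, the token, and the SKU are all hypothetical, and a real service would add proper secret storage, persistence, and idempotency.

```python
import hmac
import json

API_TOKEN = "demo-token"      # placeholder only; never hard-code real secrets
INVENTORY = {"sku-123": 5}    # hypothetical in-memory stock levels


def authorized(headers):
    """Compare the bearer token in constant time."""
    supplied = headers.get("Authorization", "").encode("utf-8")
    expected = f"Bearer {API_TOKEN}".encode("utf-8")
    return hmac.compare_digest(supplied, expected)


def handle(method, path, headers, body=""):
    """Tiny router returning (status_code, response_dict)."""
    if not authorized(headers):
        return 401, {"error": "unauthorized"}
    if method == "GET" and path.startswith("/inventory/"):
        sku = path[len("/inventory/"):]
        if sku not in INVENTORY:
            return 404, {"error": "unknown sku"}
        return 200, {"sku": sku, "available": INVENTORY[sku]}
    if method == "POST" and path == "/checkout":
        try:
            order = json.loads(body)
            sku, quantity = order["sku"], order["quantity"]
        except (ValueError, KeyError, TypeError):
            return 400, {"error": "malformed order"}
        if (not isinstance(sku, str) or isinstance(quantity, bool)
                or not isinstance(quantity, int) or quantity < 1):
            return 400, {"error": "malformed order"}
        if INVENTORY.get(sku, 0) < quantity:
            return 409, {"error": "insufficient stock"}
        INVENTORY[sku] -= quantity
        return 201, {"sku": sku, "quantity": quantity, "status": "confirmed"}
    return 404, {"error": "no such endpoint"}


print(handle("GET", "/inventory/sku-123", {"Authorization": "Bearer demo-token"}))
```

Each failure returns a distinct status code, which gives an agent something unambiguous to react to, unlike a front-end error banner it would have to interpret.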

3. Reassess the Value Chain of Traffic

Media and advertising companies must immediately explore models where value is derived from the *action* or the *data access*, rather than the *impression* or the *click*. This may involve metered access for autonomous agents or licensing structured data feeds directly to enterprise AI platforms. The focus must shift from attracting eyeballs to establishing machine service agreements.
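Metered access can start as little more than counting actions per agent and pricing them. The sketch below is one hypothetical way to do that; the action names and per-action prices are invented, and amounts are kept in integer cents to avoid floating-point rounding.

```python
from collections import Counter, defaultdict

# Hypothetical price list, in cents per action.
PRICE_CENTS = {"read": 1, "checkout": 25}


class AgentMeter:
    """Counts billable actions per agent and totals what each one owes."""

    def __init__(self, prices):
        self._prices = dict(prices)
        self._usage = defaultdict(Counter)

    def record(self, agent_id, action):
        """Log one billable action; reject actions that have no price."""
        if action not in self._prices:
            raise ValueError(f"unpriced action: {action}")
        self._usage[agent_id][action] += 1

    def invoice_cents(self, agent_id):
        """Total owed by one agent, in cents."""
        usage = self._usage.get(agent_id, Counter())
        return sum(self._prices[action] * count for action, count in usage.items())


meter = AgentMeter(PRICE_CENTS)
for _ in range(3):
    meter.record("agent-a", "read")
meter.record("agent-a", "checkout")

print(meter.invoice_cents("agent-a"))  # 3 reads at 1 cent plus 1 checkout at 25
```

Pricing the checkout far above the read reflects the shift the paragraph describes: the value sits in the completed action, not in the page view.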

4. Monitor Agent Framework Standardization

Pay close attention to open-source agent frameworks (such as AutoGen and similar autonomous web interaction tools). As these frameworks mature, they will influence which web standards are prioritized. Adopting the relevant standards early helps ensure your digital presence remains accessible when the shift accelerates.

Conclusion: The Web’s Next Revolution is Under the Hood

Google’s WebMCP is a loud signal that the next phase of the internet revolution will happen beneath the surface. We are moving away from the relatively chaotic, human-centric web of the 2000s and toward a highly efficient, machine-governed operational network.

The future of AI is not just about smarter models; it's about models capable of doing things in the real world through digital interfaces. To enable this monumental leap in automation, the foundational layer—the web itself—must be rebuilt to speak the language of logic and structure. For technologists, this is an exciting mandate for innovation; for business leaders, it is an urgent call to restructure their digital assets before the machines arrive and start shopping without them.