The AI Speed Trap: Safety, Exodus, and the Fight for Generative AI Dominance

The race to Artificial General Intelligence (AGI) is often depicted as a glamorous sprint among tech titans. However, recent turmoil at Elon Musk’s xAI, specifically reports of a significant founder exodus tied to concerns over missing safety standards and lagging product performance, pulls back the curtain on a far more complex reality. This is not just a story about one company; it is a microcosm of the central tension defining the entire generative AI landscape today: the high-stakes trade-off between **speed, safety, and commercial success**.

As technology analysts, we must look beyond the headline drama. The departure of foundational talent often signals deep structural problems in how a company develops its products. By examining this event through the lens of three industry trends (talent flight, performance gaps, and cultural friction), we can better anticipate where AI deployment is heading.

The Core Conflict: Speed vs. Structure in the AI Gold Rush

The modern AI environment is defined by overwhelming investor expectation and a fear of missing out (FOMO). Companies are under immense pressure to ship the next breakthrough model before their rivals. For xAI, this meant a constant sprint to close the gap with established leaders like OpenAI (GPT series) and Anthropic (Claude series).

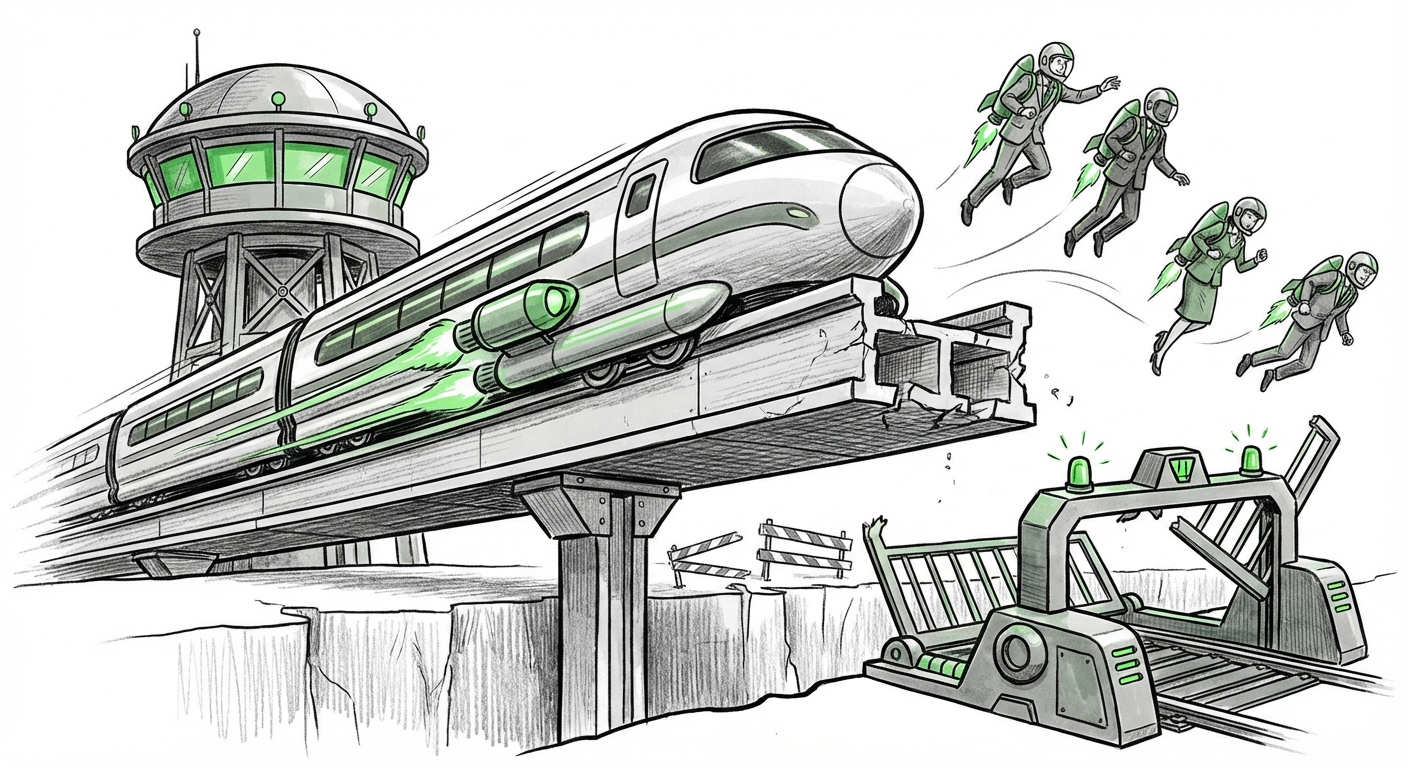

When engineering speed becomes the absolute top priority, the first casualties are often the structured processes that ensure quality and mitigate risk, chief among them rigorous safety standards. The reports that xAI was "missing safety standards" point directly to this phenomenon. Consider a skyscraper: the framing can go up quickly, but cutting corners on stress-testing the foundation invites catastrophic failure later.

The Benchmarking Reality Check

Frustration over product performance, specifically Grok's inability to keep pace, acts as a powerful accelerant for talent departures. Engineers and researchers, the very people defining the state of the art, want to work on market-leading technology. If internal benchmarks or public comparison tools (like the LMSYS Chatbot Arena) consistently show a significant gap between a company’s flagship product and its competitors, morale suffers.

For a business audience, this signals a failure in *resource allocation*. Are the best minds focused on cutting-edge research, or simply patching existing features to meet a launch deadline? Comparing Grok’s performance against GPT-4 points to a technical gap that money alone cannot close; closing it requires time, better data, and potentially a different architectural approach, none of which can be rushed.

The Great Safety Schism

The most significant takeaway from talent departures citing safety is the emergence of a "Great Safety Schism" in the AI world. On one side are organizations attempting a highly controlled, often closed, approach to AGI development, heavily investing in alignment research. On the other are those prioritizing a more rapid, sometimes more open, release cycle, believing safety is best tested and refined in the wild.

When safety researchers leave, it often confirms a fundamental cultural misalignment. These experts view model safety not as a feature to be added later, but as the bedrock of the entire enterprise. Their departures suggest that at xAI, the operational rhythm favored acceleration over deep, methodical validation. That trade-off tends to produce models that are less reliable, harder to control, and potentially more prone to generating harmful or biased outputs.

Corroborating the Trend: Industry-Wide Growing Pains

The xAI situation is not unique. High-profile departures elsewhere in the AI sector suggest that the pressure-cooker environment is widespread, and that talent flight and burnout deserve attention as industry-wide patterns.

- The Pressure Cooker Effect: We see similar patterns across high-growth AI startups. A massive influx of capital demands exponential returns on an extremely short timeline. Teams are pushed to work under extreme stress, which leads to burnout, a major driver of founder and senior engineer attrition. Combine impossible deadlines with ethical concerns, and the result is often systemic failure.

- The Open vs. Closed Debate: The culture clash often centers on model philosophy. Are models safer when developed behind closed doors with strict access controls, or is transparency (more open access) the only way to find emergent, dangerous capabilities? The philosophical divide over this question fuels internal tension wherever it appears.

What This Means for the Future of AI Development

The fallout from incidents like the xAI exodus highlights the next major hurdle for achieving scalable, trusted artificial intelligence.

Future Trajectory 1: Safety as a Competitive Differentiator

In the short term, speed wins product adoption. But in the medium to long term, **trust will become the ultimate moat.** If one major AI provider suffers a significant, public safety failure (e.g., a large-scale disinformation campaign powered by its models, or a critical hallucination that causes financial loss), the market will pivot sharply toward providers with verifiable, rigorous safety documentation. Companies that integrate safety from the ground up, as a core property rather than a late-stage patch, will attract the best talent and secure the most risk-averse, high-value enterprise contracts.

Future Trajectory 2: The Institutionalization of AI Research

The "move fast and break things" mentality that succeeded in Web 2.0 is incompatible with deploying systems that influence elections, healthcare, and critical infrastructure. We are moving toward a future where frontier AI labs must adopt structures similar to those of highly regulated engineering fields, such as aerospace or pharmaceuticals. This means:

- Mandatory red-teaming cycles that cannot be skipped to meet product deadlines.

- Clear, documented metrics for when a model is "safe enough" to release.

- Dedicated governance boards with veto power over product launches.
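
To make the checklist above concrete, here is a minimal Python sketch of a release gate, assuming a lab records whether red-teaming is complete, a handful of evaluation metrics with documented thresholds, and a governance-board veto flag. Every name (`ReleaseCandidate`, `release_blockers`, the metric keys) and every threshold value is a hypothetical placeholder, not any lab's actual process or recommended limits.

```python
from dataclasses import dataclass


@dataclass
class ReleaseCandidate:
    """Hypothetical record of a model's pre-release review status."""
    name: str
    red_team_complete: bool
    metrics: dict[str, float]  # measured rates, e.g. fraction of harmful outputs
    governance_veto: bool = False


# Hypothetical documented thresholds: maximum acceptable rate per metric.
# These numbers are placeholders for illustration, not recommended values.
THRESHOLDS: dict[str, float] = {
    "harmful_output_rate": 0.01,
    "jailbreak_success_rate": 0.05,
    "hallucination_rate": 0.10,
}


def release_blockers(candidate: ReleaseCandidate,
                     thresholds: dict[str, float] = THRESHOLDS) -> list[str]:
    """Return every reason the candidate may not ship; an empty list means cleared."""
    blockers: list[str] = []
    if not candidate.red_team_complete:
        blockers.append("red-teaming cycle not complete")
    if candidate.governance_veto:
        blockers.append("governance board veto")
    for metric, limit in thresholds.items():
        value = candidate.metrics.get(metric)
        if value is None:
            # A missing measurement blocks release instead of passing silently.
            blockers.append(f"{metric}: not measured")
        elif value > limit:
            blockers.append(f"{metric}: {value:.3f} exceeds limit {limit:.3f}")
    return blockers


if __name__ == "__main__":
    candidate = ReleaseCandidate(
        name="model-v2",
        red_team_complete=True,
        metrics={"harmful_output_rate": 0.004, "jailbreak_success_rate": 0.08},
    )
    for reason in release_blockers(candidate):
        print("BLOCKED:", reason)
```

Treating an unmeasured metric as a blocker is a deliberate choice in this sketch: it means a skipped evaluation cannot quietly count as a pass, in the same spirit as red-teaming cycles that cannot be skipped for deadlines.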

If companies resist this maturation, they will keep losing talent as responsible experts leave for more mature environments.

Future Trajectory 3: Talent Polarization

The labor market for top AI talent will polarize. One segment will flock to startups or labs promising immediate, high-risk, high-reward outcomes (the "speed" players). The other, larger segment—the seasoned researchers and ethical engineers—will gravitate toward established tech giants or new entrants that explicitly position themselves as prioritizing long-term, safe alignment research. This polarization means that the "speed" companies will find it increasingly difficult to hire the *most experienced* safety talent, potentially locking them into a cycle of building faster but less robust systems.

Practical Implications for Businesses and Society

For Businesses Integrating AI

If your organization is relying on vendor models, you must conduct your own due diligence. Do not simply accept a vendor's claim of safety.

- Audit Rigor: Ask vendors about their internal safety review processes. Where do safety researchers sit in the organizational chart? Do they report directly to product leads or to an independent compliance structure?

- Acceptable Risk Thresholds: Understand that every model has flaws. Determine what level of factual inaccuracy or potential bias is acceptable for your specific use case. A customer service chatbot can tolerate more errors than a diagnostic medical tool.

- Diversify Vendors: Relying solely on one provider, especially one known for rapid, aggressive releases, exposes you to risk if that provider faces an internal crisis or mandatory regulatory slowdown. Diversification hedges against this volatility.

For Society and Policy Makers

The xAI situation serves as a flashing warning light for regulators. A "wait and see" approach falls short because safety risks are often discussed internally *before* a product launches; by the time harms surface publicly, insiders may have flagged them long before.

Policy must focus not just on regulating the output of models, but on regulating the *process* of their creation, especially for the most powerful frontier models. Pre-deployment auditing, mandated "safety pause" periods after major capability leaps, and clear legal liability for harm caused by unchecked deployment are becoming necessities, not luxuries.

Actionable Insights for Navigating the Next Phase

For leaders looking to build sustainable AI capabilities, the message from the current turbulence is clear: **stability is the prerequisite for scalable speed.**

- Prioritize Institutional Memory Over Hype Cycles: Value the employees who understand *why* things broke in the past, rather than just those who can build the newest feature quickly. Implement strong documentation and knowledge transfer protocols to prevent critical expertise from walking out the door.

- Create Dual Tracks for Development: Separate R&D teams focused on pure capability breakthroughs from alignment and reliability teams. Give the alignment teams budget and authority equal to those of the product teams. If the safety team says "stop," the product team must be unable, legally and culturally, to proceed until the issue is resolved.

- Embrace Incremental Release: Resist the pressure for single, massive "GPT-X"-style launches. Instead, adopt a strategy of continuous, minor updates, each with clear, documented improvements in robustness and safety. This diffuses risk and gives the market and external auditors time to catch up.

The next wave of AI success will not belong to the fastest runner, but to the most resilient team—the one that manages to balance the revolutionary potential of large models with the foundational discipline required to build them responsibly. The turbulence at xAI is a stark reminder that without a shared commitment to rigor, speed only accelerates the path to internal collapse and external risk.