The AI Talent War: Safety, Speed, and the Competitive Chasm Facing Next-Gen Labs

The race to achieve Artificial General Intelligence (AGI) is perhaps the defining technological contest of our era. It is a high-stakes endeavor fueled by massive capital, intense ambition, and, critically, the world's most sought-after engineering talent. Recent reports detailing a significant exodus of founding talent from Elon Musk’s xAI, allegedly driven by concerns over safety standards and frustration over the comparative performance of their flagship model, Grok, serve as a flashing warning light for the entire industry. This is not just a story about one company; it is a critical lens through which to view the inherent, often destructive, tensions between rapid deployment and responsible development in modern AI.

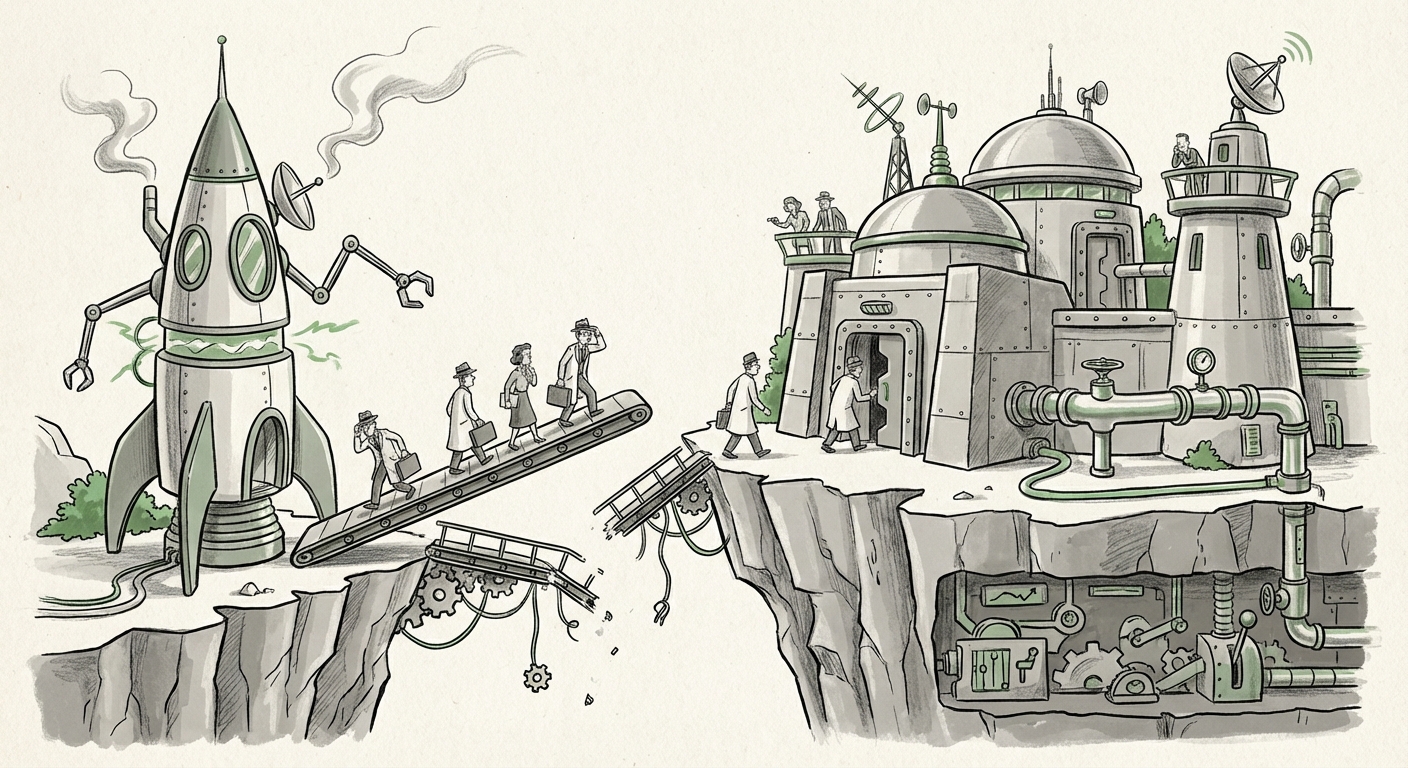

The Trilemma: Speed, Safety, and Talent Retention

At its core, the AI development landscape currently operates under a difficult trilemma. Labs must move fast to capture market share and technological advantage (Speed), they must ensure their powerful systems do not cause societal harm (Safety), and they must attract and keep the elite minds capable of building these systems (Talent). The situation at xAI suggests that for some, the scales have tipped too heavily toward speed, sacrificing the perceived stability required for long-term trust and high performance.

The allegations suggest a culture in which falling short of safety standards became normalized under the pressure to "catch up" with behemoths like OpenAI and Anthropic. When engineers, especially those with deep knowledge of potential model failure modes, feel that guardrails are being removed for the sake of a faster product launch, the result is often profound disillusionment. This dynamic is not unique to xAI. Coverage of executive departures elsewhere in the industry, where safety concerns collide with deployment speed, suggests that this friction point is increasingly common across the sector. Talented individuals accustomed to rigorous scientific development are finding the commercial mandate to "move fast and break things" dangerously at odds with the complex, often unpredictable nature of frontier AI systems.

The Performance Gap: Quantifying Frustration

A major driver of internal morale is competitive success. The frustration reportedly tied to Grok's failure to catch up provides tangible context for the safety complaints. If a team is working under maximum pressure only to find that its output lags significantly behind established leaders, the justification for those stressful conditions evaporates. Published benchmark comparisons of Grok against GPT-4 and Claude 3 tell a consistent story: while Grok offers unique integration with X (formerly Twitter) data, its raw reasoning, coding ability, and general instruction-following scores often place it a clear step behind the current frontier models from its primary competitors.

For developers and product managers, this gap means that Grok requires more fine-tuning and more complex prompting, yet still delivers less reliable outputs. For the founders reportedly leaving, this gap might signal a fundamental flaw in the development strategy or resource allocation, making the immediate goal of "catching up" seem nearly impossible without a radical shift.

The Leadership Crucible: Style and Sustainability

No discussion of xAI can proceed without examining the management structure. Reporting on how Musk’s management style shapes AI strategy and hiring provides essential background. His leadership approach, often praised for driving incredible innovation velocity at companies like SpaceX and Tesla, relies on extremely aggressive deadlines and an expectation of total commitment. While this style can yield world-changing results in engineering domains with known physical laws (like rocketry), its application to the nebulous, iterative science of Large Language Models (LLMs) presents different challenges.

In LLM development, the work requires deep, quiet concentration, meticulous validation, and incremental scientific progress—qualities that can clash with high-pressure, deadline-driven environments. The reported exodus suggests that top researchers view the required pace not just as tiring, but as actively detrimental to producing safe, cutting-edge technology. This raises a crucial question for the entire sector: Can the "Muskian" management style scale effectively into foundational scientific research where failures are invisible until they become catastrophic?

The Economic Shadow: Open Source Under Pressure

Grok is often framed as a more transparent, possibly open-source alternative to the closed ecosystems of competitors. However, the economics of high-cost open-source AI models reveal the immense financial burden this strategy places on a relatively new entity like xAI.

Training and running frontier models requires billions of dollars in GPU clusters. When a model lags behind its competitors, meaning its economic utility (the value it can provide per dollar spent on computation) is lower, the cost of achieving parity rises steeply. For an organization seeking to compete globally, maintaining a high ethical bar while running behind is doubly expensive. It forces teams to choose between slowing down development to shore up safety (which frustrates the "speed" mandate) or pushing ahead without adequate testing (which alienates safety-conscious talent).
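
To make the economic-utility argument concrete, here is a minimal sketch in Python. All figures are hypothetical placeholders in arbitrary units, not measurements of Grok or any other model, and the function names `value_per_compute_dollar` and `spend_to_match` are assumptions introduced for illustration.

```python
def value_per_compute_dollar(value_delivered: float, compute_cost: float) -> float:
    """Economic utility as described in the text: value provided per dollar of compute."""
    if compute_cost <= 0:
        raise ValueError("compute_cost must be positive")
    return value_delivered / compute_cost


def spend_to_match(value_target: float, utility: float) -> float:
    """Compute spend needed to deliver value_target at a fixed utility."""
    if utility <= 0:
        raise ValueError("utility must be positive")
    return value_target / utility


# Hypothetical numbers: both labs spend the same, but the leader delivers more value.
leader_utility = value_per_compute_dollar(value_delivered=300.0, compute_cost=100.0)
laggard_utility = value_per_compute_dollar(value_delivered=150.0, compute_cost=100.0)

# Spend the laggard would need in order to deliver the leader's level of value.
laggard_spend = spend_to_match(value_target=300.0, utility=laggard_utility)
print(leader_utility, laggard_utility, laggard_spend)
```

The sketch holds utility fixed, so it only shows the baseline penalty of running behind. It does not model the additional research cost of improving the model itself, which is where the text expects the burden to grow fastest.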

Implications for the Future of AI Development

The turbulence at xAI is a microcosm of the broader industry’s coming-of-age moment. Here is what these developments signal for the path forward:

1. Safety is Becoming a Non-Negotiable Feature, Not an Optional Layer

If the best technical minds are willing to leave high-profile, high-compensation roles over safety, it signals that AI safety is graduating from an academic concern to a practical, retention-critical engineering requirement. Future AI labs that wish to attract world-class talent will need demonstrable, auditable commitments to safety protocols, not just mission statements. For businesses integrating AI, this means demanding transparency from vendors regarding their internal alignment and testing processes.

2. The Competitive Chasm May Widen

The initial lead secured by the first movers (OpenAI, Anthropic, Google) is proving immensely difficult to close. Talent attraction is a positive feedback loop: the best models attract the best researchers, who build better models. If xAI struggles with talent retention, the gap—already visible in benchmarks—will likely widen, making Grok perpetually a "fast follower" rather than a leader. This suggests that the AI landscape may consolidate around a few extremely well-resourced and stable labs.

3. Management Models Must Adapt to Scientific Reality

The tech industry must recognize that foundational AI research is fundamentally different from software development or consumer hardware iteration. The high-risk, high-reward model suited to disrupting established hardware industries such as rocketry and electric vehicles (the settings of Musk’s past successes) may be insufficient, or even counterproductive, for mastering the emergent properties of complex neural networks. Sustainable success in AGI will likely require management structures that prioritize scientific rigor and long-term alignment over quarterly release schedules.

Actionable Insights for Stakeholders

What should technology leaders, investors, and policymakers take away from this churn?

- For AI Founders and CTOs: Prioritize building a culture of psychological safety around technical criticism. If your top engineers fear raising red flags about safety or performance deficiencies, you are building a brittle organization. Use independent benchmarking to manage expectations: if your model is lagging, be honest about the roadmap required to catch up, rather than masking the deficit with hyper-aggressive timelines.

- For Investors: Due diligence must extend beyond computing power and valuation multiples. Scrutinize talent retention rates, especially among senior researchers. High turnover in technical leadership is a significant indicator of systemic, unmanaged risk, whether technical or cultural.

- For Enterprises Adopting AI: Rely less on marketing hype and more on quantitative, third-party evaluations of model performance across diverse tasks. Understand that the "best" model for your specific use case (e.g., legal summarization vs. creative writing) requires matching the model's strengths to your needs, rather than blindly following the market leader.
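
The advice to match a model's strengths to a specific use case can be sketched as a simple lookup over third-party evaluation scores. The model names, task names, and scores below are hypothetical placeholders, and `best_model_per_task` is a helper introduced for illustration only.

```python
def best_model_per_task(scores: dict[str, dict[str, float]]) -> dict[str, str]:
    """Return, for each task, the model with the highest evaluation score.

    `scores` maps model name -> {task name -> score}; higher is better.
    """
    tasks = {task for per_model in scores.values() for task in per_model}
    best = {}
    for task in sorted(tasks):
        # Only consider models that were actually evaluated on this task.
        candidates = {m: s[task] for m, s in scores.items() if task in s}
        best[task] = max(candidates, key=candidates.get)
    return best


# Hypothetical third-party evaluation results.
evaluation_scores = {
    "model_a": {"legal_summarization": 0.82, "creative_writing": 0.71},
    "model_b": {"legal_summarization": 0.77, "creative_writing": 0.88},
}

picks = best_model_per_task(evaluation_scores)
print(picks)
```

In this made-up data no single model wins every task, which is the situation the recommendation has in mind: the overall market leader is not automatically the right choice for a given workload.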

The story of xAI’s internal friction is a powerful reminder that building transformative technology requires more than sheer will and resources; it demands alignment between the organization’s goals, its development pace, and the ethical standards required for powerful new tools. The AI future will not just be built by the fastest team, but by the most resilient, credible, and scientifically grounded one.