The Great AI Clone Wars: How Distillation Attacks Threaten Billion-Dollar Models and Redefine IP

The world of Artificial Intelligence is experiencing a tectonic shift. For years, the narrative was dominated by the sheer size of foundational models—the multi-billion dollar investments by giants like Google and OpenAI required to train models on internet-scale data. These titans positioned themselves as the gatekeepers of high-end AI capability. However, a silent, insidious threat is undermining this very structure: model distillation attacks.

When major players recently complained that their expensive, proprietary models were being systematically cloned "on the cheap," the complaint was more than ironic; it signaled a real crisis in the economics of AI development. This isn't about stealing data; it’s about stealing the *intelligence* baked into the model itself, turning massive capital expenditure into accessible, near-free blueprints.

The Irony of Innovation: Built on Openness, Defended by Secrecy

Let’s confront the central irony: Google and OpenAI trained their cutting-edge models largely by absorbing vast amounts of publicly available data—the collective works of humanity shared across the web. Now, they find themselves defending their *outputs* against attackers who use similar query-based methods to reverse-engineer that learned intelligence. This dynamic exposes a fundamental tension in modern AI: the tension between leveraging open information and protecting proprietary process.

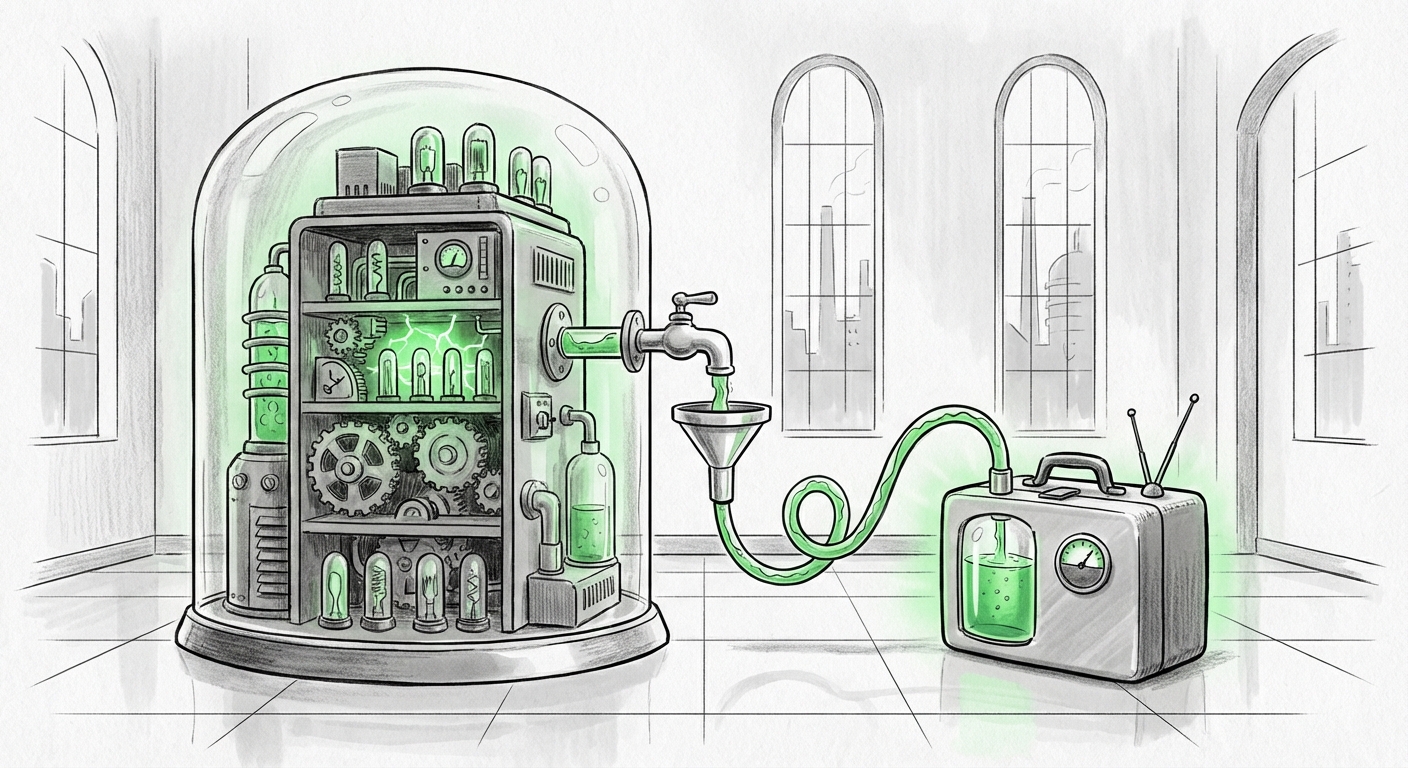

For the layperson, think of it this way: Building a world-class chef’s recipe book (the foundational model) costs millions in ingredients and years of tasting (training). A distillation attack is like hiring a cheap apprentice who watches the chef cook hundreds of meals through a window (API queries) and then writes a nearly identical cookbook without ever having bought the ingredients or spent the years of tasting.

Understanding the Mechanics: What is Distillation, Really?

Model distillation, in its original, benevolent form, is a legitimate research technique. It involves training a smaller, faster "student" model to mimic the performance of a larger, slower "teacher" model. The goal is efficiency—making powerful AI deployable on phones or cheaper servers.
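To make the teacher/student idea concrete, here is a minimal sketch of the soft-label objective commonly used in benign distillation: the student is penalized by the KL divergence between temperature-softened teacher and student distributions. The logit values and the `temperature` setting are illustrative assumptions, not figures from any real model.

```python
import math

def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax; a higher temperature gives softer probabilities."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) computed on temperature-softened distributions."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

# Hypothetical logits for a three-class problem.
teacher = [4.0, 1.0, 0.5]
close_student = [3.8, 1.1, 0.4]   # nearly mimics the teacher
far_student = [0.5, 4.0, 1.0]     # disagrees with the teacher

print("close student loss:", distillation_loss(teacher, close_student))
print("far student loss:  ", distillation_loss(teacher, far_student))
```

A student that tracks the teacher closely gets a loss near zero, which is the signal a training loop would minimize.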

The attack transforms this tool into a weapon. Attackers use the large model (the teacher) through its public Application Programming Interface (API) as a source of high-quality labeled data. They craft thousands, sometimes millions, of inputs and meticulously record the teacher model's outputs. This collection of input-output pairs is then used to train a new, compact model (the student).
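The query-and-record loop described above can be illustrated with a toy example. In this sketch, `teacher_api` is a hypothetical stand-in for a remote model (a small hidden logistic classifier, not a real API), and the "student" is a logistic regression fitted only to recorded input-output pairs. Real extraction against a large language model is far more involved; this only shows the shape of the process.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def teacher_api(x):
    """Hypothetical black-box teacher: returns P(class 1) for a 2-D input.
    The code below never reads these weights, only the outputs."""
    return sigmoid(2.0 * x[0] - 1.5 * x[1] + 0.3)

def collect_pairs(n, rng):
    """Query the teacher on n random inputs and record (input, output) pairs."""
    pairs = []
    for _ in range(n):
        x = (rng.uniform(-3, 3), rng.uniform(-3, 3))
        pairs.append((x, teacher_api(x)))
    return pairs

def train_student(pairs, epochs=50, lr=0.1):
    """Fit a logistic-regression student to the teacher's soft outputs with SGD."""
    w0, w1, b = 0.0, 0.0, 0.0
    for _ in range(epochs):
        for (x0, x1), target in pairs:
            pred = sigmoid(w0 * x0 + w1 * x1 + b)
            err = pred - target  # gradient of cross-entropy w.r.t. the logit
            w0 -= lr * err * x0
            w1 -= lr * err * x1
            b -= lr * err
    return w0, w1, b

def agreement(student, n, rng):
    """Fraction of fresh inputs on which student and teacher pick the same class."""
    w0, w1, b = student
    same = 0
    for _ in range(n):
        x = (rng.uniform(-3, 3), rng.uniform(-3, 3))
        teacher_label = teacher_api(x) >= 0.5
        student_label = sigmoid(w0 * x[0] + w1 * x[1] + b) >= 0.5
        same += teacher_label == student_label
    return same / n

rng = random.Random(0)
pairs = collect_pairs(500, rng)
student = train_student(pairs)
print("agreement with teacher:", agreement(student, 1000, random.Random(1)))
```

The student never sees the teacher's training data or weights, only its answers, which is why the article frames the API itself as the source of labeled data.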

As technical analysts note, the crucial factor is efficiency. Since the attacker skips the initial, excruciatingly expensive phase of gathering and cleaning billions of training tokens, they effectively clone the *learned expertise* at a fraction of the cost. This move shifts the competitive advantage away from those with the deepest pockets for compute power toward those with the keenest ability to query strategically.

The Security Arms Race: Defense Strategies Under Scrutiny

The response from major labs must be rapid and robust. Our technical investigations suggest a two-pronged defense strategy is emerging:

- Output Perturbation: Introducing subtle, random noise or slight variations into the API responses. This contaminates the dataset the attacker is building, making the student model’s training unstable or inaccurate.

- Behavioral Fingerprinting: Developing methods to track query patterns that suggest systematic extraction, rather than organic user interaction. This involves looking for patterns that match known distillation methodologies.
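As a rough illustration of the output perturbation idea in the list above, the sketch below adds small random noise to a returned probability vector while keeping the top-ranked answer unchanged, so ordinary users still see the same prediction. The function name `perturb_probs` and the `noise_scale` value are assumptions chosen for illustration; production defenses would need careful tuning and evaluation.

```python
import random

def perturb_probs(probs, noise_scale=0.05, rng=random):
    """Add bounded random noise to a probability vector and renormalize,
    keeping the originally top-ranked class on top."""
    top = max(range(len(probs)), key=probs.__getitem__)
    noisy = [max(p + rng.uniform(-noise_scale, noise_scale), 1e-9) for p in probs]
    # If the noise changed the winner, swap values so the original winner leads.
    best_noisy = max(range(len(noisy)), key=noisy.__getitem__)
    if best_noisy != top:
        noisy[top], noisy[best_noisy] = noisy[best_noisy], noisy[top]
    total = sum(noisy)
    return [p / total for p in noisy]

rng = random.Random(0)
clean = [0.5, 0.3, 0.2]  # hypothetical model output
print("clean:    ", clean)
print("perturbed:", perturb_probs(clean, rng=rng))
```

The trade-off is visible even here: the displayed answer is preserved, but the fine-grained probabilities an attacker would record as soft labels are no longer exact.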

This defense strategy is crucial because, as security researchers confirm, ignoring this threat leaves the multi-billion dollar investment in foundational training vulnerable to rapid replication by competitors or malicious actors.

The Legal Quagmire: Who Owns the Algorithm's Ghost?

The most disruptive aspect of the distillation threat lies in the legal gray area it creates. Intellectual property law, designed for physical goods or traditional software code, struggles to categorize the extracted intelligence of an AI.

If a distilled model achieves 95% of the original model’s capability, does that constitute copyright infringement or unfair competition? This debate is central to the future of AI commercialization. If established IP frameworks cannot protect the learned weights and behaviors of a proprietary model, the incentive to undertake years-long, astronomically expensive training efforts dissolves.

Legal experts are grappling with these questions because the core IP in modern AI is increasingly abstract. It’s not just the code; it’s the statistical structure learned during training. When this structure is replicated via querying, we move from issues of data privacy to defining what constitutes **AI Model Intellectual Property (IP)**. The industry needs clarity, and regulators are scrambling to catch up.

Economic Upheaval: The Democratization of Elite Performance

While this is a headache for the giants, it is a massive opportunity for the rest of the ecosystem. This threat accelerates the **democratization of AI**. If a high-performing LLM can be cloned for mere thousands of dollars in API costs rather than billions in compute time, the barrier to entry for creating competitive, specialized AI products plummets.

We are seeing evidence of this in the growing focus on highly capable Small Language Models (SLMs). Startups no longer need to build from scratch; they can build *on top* of the giants’ capabilities using distillation. This forces a strategic pivot for foundational model developers:

- Shift from Capability Monopoly to Service Monopoly: If the model can be copied, the value must shift to the infrastructure, real-time tuning, superior fine-tuning data, or specialized vertical integration that the attacker cannot easily replicate.

- Usage Tiering: Expect stricter API usage terms, potentially limiting the volume or type of queries that can be used for non-human training purposes, or dramatically raising prices for heavy, structured querying indicative of extraction attempts.

For Venture Capitalists and startups, this means the competitive edge is no longer *owning* the largest model, but rapidly iterating on the *best application* of that model’s distilled intelligence.

Future Implications: A New Definition of AI Defensibility

What does this mean for the next phase of AI development? The era where simply having the biggest model guaranteed market leadership is fading. The future demands defensibility through means other than brute-force training investment.

1. The Rise of Closed, Proprietary Architectures

To fight extraction, we may see a retreat toward more proprietary or partially closed architectures where the most valuable parts of the model cannot be adequately inferred through simple input/output pairs. This could mean favoring techniques that rely less on fully observable final layers or incorporating proprietary hardware acceleration that makes replication difficult outside their specific ecosystem.

2. Security as a Feature, Not an Afterthought

For any enterprise deploying an AI service, security must evolve beyond traditional cybersecurity. Model extraction attacks, along with data poisoning, are now primary threats to the core product. CISOs must prioritize API security, query monitoring, and anomaly detection specifically tuned for AI behavioral threats.
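One way to picture query monitoring tuned for extraction is a simple heuristic score over a client's recent traffic. The sketch below is an assumption-laden illustration, not an established detection method: it combines two hypothetical signals, how regular the request timing is and how many prompts share the same opening template. The function name `extraction_score`, the 20-character prefix, and the equal weighting are all illustrative choices.

```python
from statistics import mean, pstdev

def extraction_score(timestamps, prompts):
    """Heuristic score in [0, 1]; higher suggests scripted, systematic querying."""
    if len(timestamps) < 3 or not prompts:
        return 0.0
    gaps = [b - a for a, b in zip(timestamps, timestamps[1:])]
    avg_gap = mean(gaps)
    # Scripts tend to fire at regular intervals: low spread relative to the mean gap.
    regularity = (1.0 - min(pstdev(gaps) / avg_gap, 1.0)) if avg_gap > 0 else 1.0
    # Templated prompts share an opening: find the share of the most common prefix.
    prefixes = [p[:20] for p in prompts]
    most_common = max(set(prefixes), key=prefixes.count)
    templating = prefixes.count(most_common) / len(prefixes)
    return 0.5 * regularity + 0.5 * templating

# Hypothetical traffic: a script firing once per second with a fixed template.
scripted_times = list(range(20))
scripted_prompts = [f"Translate to French: sentence {i}" for i in range(20)]

# Hypothetical traffic: a person asking unrelated questions at irregular times.
human_times = [0, 5, 47, 50, 300, 310, 900]
human_prompts = [
    "What is a good pasta recipe?",
    "Explain TCP slow start",
    "Summarize this email for me",
    "Why is the sky blue?",
    "Draft a birthday message",
    "How do I reverse a list in Python?",
    "Plan a weekend in Lisbon",
]

print("scripted:", extraction_score(scripted_times, scripted_prompts))
print("human:   ", extraction_score(human_times, human_prompts))
```

A score like this would only be one input to a review process; a determined attacker can randomize timing and vary templates, so it should not be read as a reliable detector on its own.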

3. Regulatory Push for Transparency (or Lack Thereof)

Governments seeking to understand AI risk might push for more transparency in model weights or training data. Ironically, the threat of cheap cloning could hand developers a ready argument against mandated transparency. They might claim that revealing internal structures makes models immediately vulnerable to extraction, and therefore that secrecy is a necessary countermeasure.

Actionable Insights for Businesses Today

Whether you are building the next foundational model or deploying an AI feature, this trend requires immediate strategic adjustments:

- Audit Your API Access: If you rely on third-party models, understand their usage policies regarding training and distillation. If you offer an API, implement robust rate limiting and behavioral analysis to detect large-scale, systematic extraction attempts.

- Invest in Fine-Tuning Differentiation: Since the base capability can be cloned, focus your IP on specialized, proprietary fine-tuning data and alignment techniques. This specialized knowledge is harder to distill accurately than general knowledge.

- Evaluate Hybrid Models: For mission-critical tasks, consider hybrid architectures. Use a cloud-based proprietary model for the most complex reasoning, but use a lightweight, locally managed (and thus un-queryable) distilled model for high-volume, low-stakes tasks.
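For API providers, the rate-limiting advice in the first item above can be sketched as a per-key sliding-window limiter. This is a minimal in-memory illustration under stated assumptions: the class name `SlidingWindowLimiter` and the limits used are hypothetical, and a real deployment would typically need shared storage across servers and would pair the limiter with behavioral analysis.

```python
from collections import deque

class SlidingWindowLimiter:
    """Allow at most max_requests per api_key within any window_seconds span."""

    def __init__(self, max_requests, window_seconds):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._history = {}  # api_key -> deque of accepted request timestamps

    def allow(self, api_key, now):
        """Return True and record the request if the key is under its limit."""
        q = self._history.setdefault(api_key, deque())
        # Drop timestamps that have aged out of the window.
        while q and now - q[0] >= self.window_seconds:
            q.popleft()
        if len(q) >= self.max_requests:
            return False
        q.append(now)
        return True

# Hypothetical policy: 3 requests per 10 seconds per key.
limiter = SlidingWindowLimiter(max_requests=3, window_seconds=10)
for t in [0, 1, 2, 3, 10]:
    print(f"t={t}s allowed={limiter.allow('key-A', now=t)}")
```

Passing `now` explicitly keeps the example deterministic; in a service it would usually come from a monotonic clock.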

The challenges faced by Google and OpenAI with distillation attacks are not minor nuisances; they are structural threats to the current AI investment paradigm. This tension signals the end of the "pure scale wins" era and the beginning of the era defined by **AI IP defense and rapid, efficient replication.** The battleground is moving from who has the most data to who can best secure the intelligence derived from it.