The AI Heist: How Model Distillation Attacks Threaten the Giants of Generative AI

The world of Artificial Intelligence is currently defined by titans—Google, OpenAI, Meta—companies pouring billions into training massive Large Language Models (LLMs) like Gemini and GPT. These models represent an immense, virtually unquantifiable amount of intellectual property (IP), built layer by layer through exhaustive computation and curated data. However, this hard-won advantage is now under direct siege, not by competitors rebuilding the models from scratch, but by a far cheaper, more insidious method: Model Distillation Attacks.

When industry leaders like Google and OpenAI complain publicly about this theft, it signals a crucial inflection point. It’s no longer a theoretical vulnerability; it’s an active economic threat against the very foundation of their business models. These attacks allow small players to clone the intelligence of billion-dollar models for a fraction of the cost. To understand the scope of this challenge, we must analyze the mechanics, the economic disruption, and the necessary countermeasures.

What is Model Distillation, and Why is it a Security Threat?

Imagine a world-class master chef (the "Teacher" model, like GPT-4). This chef has spent years learning millions of recipes and techniques, resulting in highly refined, nuanced cooking skills. Now, imagine a junior cook (the "Student" model) observing the master chef’s final dishes—not the process, but the results. The student tries to create dishes that taste exactly like the master’s, practicing over and over until they achieve near-identical output quality.

In AI terms, distillation is a process where a large, highly capable model (the Teacher) is used to train a much smaller, more efficient model (the Student). The student doesn't learn from the original massive dataset; instead, it learns to mimic the outputs (the predictions, explanations, or code generations) provided by the teacher model. Distillation itself is a standard, legitimate technique when a lab applies it to its own models. It becomes an attack when a third party does it through "black-box" queries to someone else's model, meaning the attacker never needs access to the teacher's internal structure or weights.

The complaint from the major labs stems from the fact that attackers are turning their proprietary APIs into cheap, continuous training platforms. As reports on these attacks have noted, the irony is sharp: companies that built empires on vast amounts of publicly available data are now seeing their carefully guarded, computationally expensive knowledge harvested cheaply.

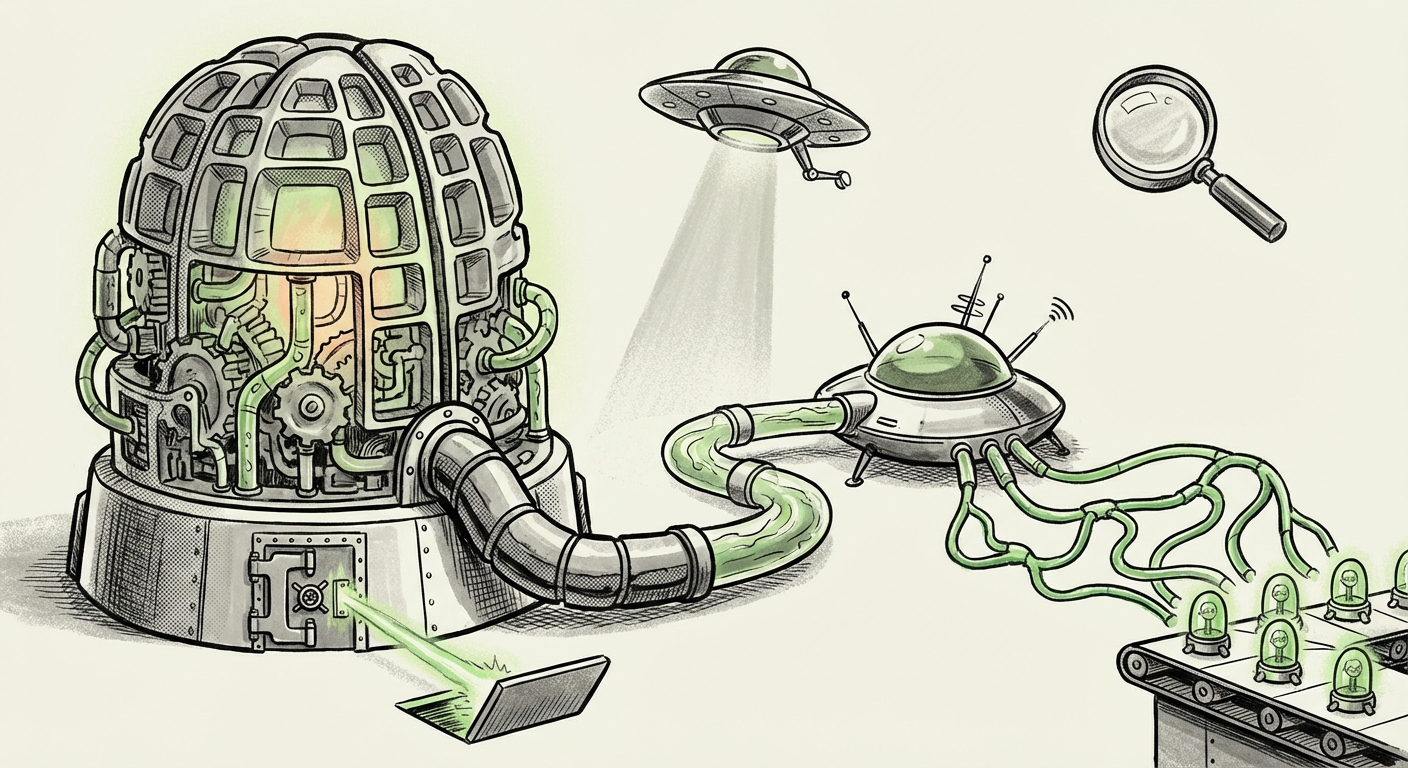

The Technical Core: Black-Box Extraction

The key mechanism here is the black-box attack. An attacker floods the target model's API with carefully chosen inputs. If the teacher model gives a nuanced, correct answer, the attacker saves that input/output pair. They repeat this thousands or millions of times. Once they have a large dataset of 'expert answers,' they train their own smaller model on this synthetic dataset. Because the student model only needs to mimic the *behavior* of the teacher, it requires significantly less data and far less computing power to train, and it can end up approximating much of the "brain" of the larger system.
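The collection loop described above can be sketched in a few lines of Python. This is a minimal illustration, not a real client: `query_teacher` is a hypothetical stand-in for a call to a hosted model, and the `prompt`/`completion` field names are assumptions chosen for readability.

```python
import json
from typing import Callable, Iterable


def query_teacher(prompt: str) -> str:
    """Hypothetical stand-in for a request to a hosted model's API."""
    return f"[teacher answer to: {prompt}]"


def collect_pairs(prompts: Iterable[str], teacher: Callable[[str], str]) -> list[dict]:
    """Send each prompt to the teacher and keep the input/output pair."""
    pairs = []
    for prompt in prompts:
        answer = teacher(prompt)
        if answer.strip():  # keep only non-empty responses
            pairs.append({"prompt": prompt, "completion": answer})
    return pairs


def to_jsonl(pairs: list[dict]) -> str:
    """Serialize the pairs as one JSON object per line."""
    return "\n".join(json.dumps(pair) for pair in pairs)


prompts = ["Explain recursion briefly.", "Summarize the causes of inflation."]
dataset = collect_pairs(prompts, query_teacher)
print(to_jsonl(dataset))
```

Nothing in the loop touches the teacher's weights or architecture, which is what makes the attack "black-box": the only interface used is the same one any paying customer sees.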

For readers interested in the technical history, the broader literature on black-box attacks against large language models shows how these probing techniques evolved from simple data extraction to sophisticated behavioral cloning.

The Economics of the Heist: Quantifying the Threat

The difference in investment is staggering, which is why this issue cuts so deeply into the business strategy of AI pioneers. Training a state-of-the-art foundation model costs tens to hundreds of millions of dollars in compute time alone, not counting the engineering salaries or data curation costs. The resulting model is the company’s core asset.

In contrast, cloning that capability via distillation might cost mere thousands of dollars in API usage fees and minimal local compute time to train the student model. This massive asymmetry is what drives the economic incentive.
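A back-of-the-envelope calculation makes the asymmetry concrete. Every figure below is a hypothetical placeholder rather than a measured price or budget, and the sketch counts API fees only, leaving out the compute needed to train the student.

```python
def api_cost_usd(num_queries: int, tokens_per_query: int, usd_per_million_tokens: float) -> float:
    """Estimate API fees for a batch of queries at a flat per-token price."""
    total_tokens = num_queries * tokens_per_query
    return total_tokens / 1_000_000 * usd_per_million_tokens


# All values are hypothetical assumptions chosen for illustration.
NUM_QUERIES = 1_000_000                   # input/output pairs harvested
TOKENS_PER_QUERY = 500                    # prompt plus response, on average
USD_PER_MILLION_TOKENS = 10.0             # assumed blended API price
TEACHER_TRAINING_COST_USD = 100_000_000   # assumed training budget

extraction_cost = api_cost_usd(NUM_QUERIES, TOKENS_PER_QUERY, USD_PER_MILLION_TOKENS)
ratio = TEACHER_TRAINING_COST_USD / extraction_cost

print(f"Estimated API fees: ${extraction_cost:,.0f}")            # Estimated API fees: $5,000
print(f"Training cost is {ratio:,.0f}x the extraction cost")     # Training cost is 20,000x the extraction cost
```

Changing any of the assumed inputs by an order of magnitude still leaves a gap of several orders of magnitude, which is the point of the comparison.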

Comparing the economics of model distillation with those of foundation model training shows that this is not about stealing code or weights; it is about democratizing the *capability* without paying the development toll. This undermines the entire premise of proprietary, closed-source AI development, which relies on high entry barriers to protect investment returns.

The Legal Abyss: Where Does IP Law Stand?

One of the most challenging aspects of distillation attacks is that they operate in a legal gray zone. Current intellectual property law was not written with software that learns complex, non-obvious patterns in mind.

Is the resulting distilled model a copyright infringement of the teacher model’s weights? Probably not, as the attacker never copied the weights directly. Is it a violation of a service agreement? Almost certainly, but enforcing Terms of Service across international borders against anonymous entities is difficult. This is why industry analysts are focusing on the legal implications of AI model cloning via distillation.

For businesses, this ambiguity means that the massive investment in training proprietary models lacks robust legal protection against replication via inference-layer attacks. The legal framework must quickly catch up to define whether the *behavioral pattern* embedded in a model constitutes a trade secret.

The Future Battlefield: Defense and Countermeasures

If the genie of open-source capability cannot be fully put back in the bottle, the giants must adapt their defense. The focus is rapidly shifting from securing the training data to securing the inference process.

Actionable Defense Strategies

AI security teams are now deeply engaged in researching AI model extraction defense strategies. Key techniques under investigation include:

- Output Perturbation (Noise Injection): Deliberately adding small, subtle errors or random variations to the teacher model’s output. This "noisy" data is hard for a student model to learn consistently, thereby poisoning the distillation dataset.

- Query Rate Limiting and Anomaly Detection: Aggressively tracking who is sending many similar queries in a short period. Because blunt limits also hurt legitimate users, developers must build systems sophisticated enough to differentiate between research probing and systematic extraction attempts.

- Digital Watermarking: Embedding hidden signals within the model’s output (e.g., specific phrasing choices or statistical biases) that can be detected later. If a distilled model reproduces the watermark in its own output, that serves as strong statistical evidence of cloning.

- Internal Defenses: Developing models that are inherently more resistant to extraction by changing how information is encoded in the weights, making the output mapping less predictable for external queries.
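
As one illustration of the rate-limiting idea in the list above, here is a minimal sliding-window monitor. It is a sketch under simplifying assumptions: the `QueryMonitor` class and its parameters are hypothetical, timestamps are passed in explicitly so the behavior is easy to follow, and it only counts queries per key. It does not attempt the harder problem of judging whether queries are suspiciously similar.

```python
from collections import defaultdict, deque


class QueryMonitor:
    """Flags API keys that exceed a query budget within a sliding time window."""

    def __init__(self, max_queries: int, window_seconds: float):
        self.max_queries = max_queries
        self.window_seconds = window_seconds
        # Maps each API key to the timestamps of its recent queries.
        self._history = defaultdict(deque)

    def record(self, api_key: str, timestamp: float) -> bool:
        """Record one query and return True if the key is now over budget."""
        window = self._history[api_key]
        window.append(timestamp)
        # Drop timestamps that have fallen out of the window.
        while window and window[0] <= timestamp - self.window_seconds:
            window.popleft()
        return len(window) > self.max_queries


monitor = QueryMonitor(max_queries=3, window_seconds=60.0)
flags = [monitor.record("key-A", t) for t in (0.0, 10.0, 20.0, 30.0, 100.0)]
print(flags)  # [False, False, False, True, False]
```

The fourth query trips the limit because four requests fall inside one 60-second window; by the fifth, the earlier ones have aged out. A production system would likely combine a counter like this with signals such as prompt diversity and account age, since a patient attacker can spread queries across time and keys.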

What This Means for the Future of AI and How It Will Be Used

The rise of effective, cheap distillation attacks is not a death knell for proprietary AI, but it is a profound catalyst for change. It forces a re-evaluation of what "value" truly is in the age of ubiquitous LLMs.

Implications for Businesses and Society

1. Democratization of Capability (The Upside): For startups, non-profits, and researchers, distillation is a massive boon. They gain access to near-state-of-the-art performance without the need for a $100 million initial training budget. This rapidly accelerates innovation in specialized fields where the general knowledge of GPT-4 is sufficient, lowering the barrier to entry for creating powerful, domain-specific tools.

2. Shift in Competitive Advantage (The Business Angle): Companies like Google and OpenAI cannot win by simply making the *biggest* model anymore; they must make the model that is either impossible to distill or so far ahead of the competition that the clone is obsolete before it launches. The new moat will be built on speed of iteration, unique real-time data access (for example, through retrieval-augmented generation, or RAG), and superior multimodal capabilities that are difficult to replicate one query at a time.

3. Sovereignty and Control: On the geopolitical and security front, the ability to clone models presents major risks. If a powerful LLM can be closely replicated and then deployed in an offline environment (without API monitoring or content filtering), it can become a powerful tool for disinformation, cyber operations, or espionage, circumventing safety guardrails implemented by the original creators.

Actionable Insights for Stakeholders

For AI Developers (The Giants): Invest aggressively in defense. Treat your API access as the primary attack surface. Develop proprietary methods for tracking and watermarking outputs. Move faster than the distillers can clone.

For Startups and Innovators (The Users): Leverage distillation strategically. Use it to build specialized, fast models for narrow tasks, saving your venture capital for unique data sourcing or novel fine-tuning, rather than trying to compete directly on general foundational knowledge.

For Policymakers: New regulations must define AI output rights. If the *pattern* of intelligence is protectable, licensing models for distillation may become a formal revenue stream, offering a middle ground between fully open and fully closed systems.

Conclusion: Adaptation is the Only Strategy

The emergence of efficient model distillation attacks is the market’s brutal, yet necessary, response to unchecked centralized power in AI development. It forces the dominant players to prove that their value lies not just in the sheer scale of their initial effort, but in continuous, defensible innovation.

The future of AI will be characterized by a dynamic tension: on one side, the relentless drive to create ever-more powerful teacher models; on the other, the sophisticated, low-cost efforts to strip that power away via the inference layer. Success in this new era will belong not to the deepest pockets, but to the fastest adapters in the emerging arms race between model protection and model extraction.