Pace vs. Prudence: Why the AI Race Secretly Revolves Around Risk, Not Just Speed

Artificial Intelligence development often looks like a gilded arms race: who can build the biggest model, post the highest benchmark score, and close the largest funding round? A recent statement by Anthropic CEO Dario Amodei cuts through that picture and exposes a deeper, potentially existential question: is the race for capability outpacing our understanding of risk?

Amodei, whose company Anthropic was founded by former OpenAI leaders intent on prioritizing safety, has delivered a pointed critique suggesting his peers may not fully grasp the dangers they are courting. When juxtaposed against the reported 10x year-over-year revenue growth for Anthropic and the aggressive timeline suggesting "Nobel Prize-level AI" is imminent, Amodei’s cautious stance on compute deployment becomes the most significant data point in the current market narrative.

The Imminent Horizon: "Nobel Prize-Level AI" in Two Years

To appreciate Amodei’s dilemma, we must first take his timeline seriously. When leaders in the field speak of capabilities approaching "Nobel Prize-level" (autonomous scientific discovery, complex policy generation, or fundamentally new engineering solutions), they are signaling a transition from advanced tools to nascent artificial general intelligence (AGI). If Amodei is right that this is only 12 to 24 months away, the runway for safety research is drastically shorter than most plans assume.

For the general public and many business leaders, AI progress feels linear, or perhaps exponential in a predictable, Moore's Law fashion. But the chatter from the frontier labs suggests a phase transition is near. This rapid approach to profound capability forces every player to calculate risk not in years, but in quarters.

The Compute Conundrum: Why Going "All In" Is a Bankruptcy Risk

Compute, the vast clusters of specialized chips (such as Nvidia’s GPUs) required to train these massive models, is arguably the single most expensive resource in AI development. To compete with labs backed by companies with trillion-dollar market capitalizations (such as Microsoft, which funds OpenAI), Anthropic must spend staggering amounts on compute clusters.

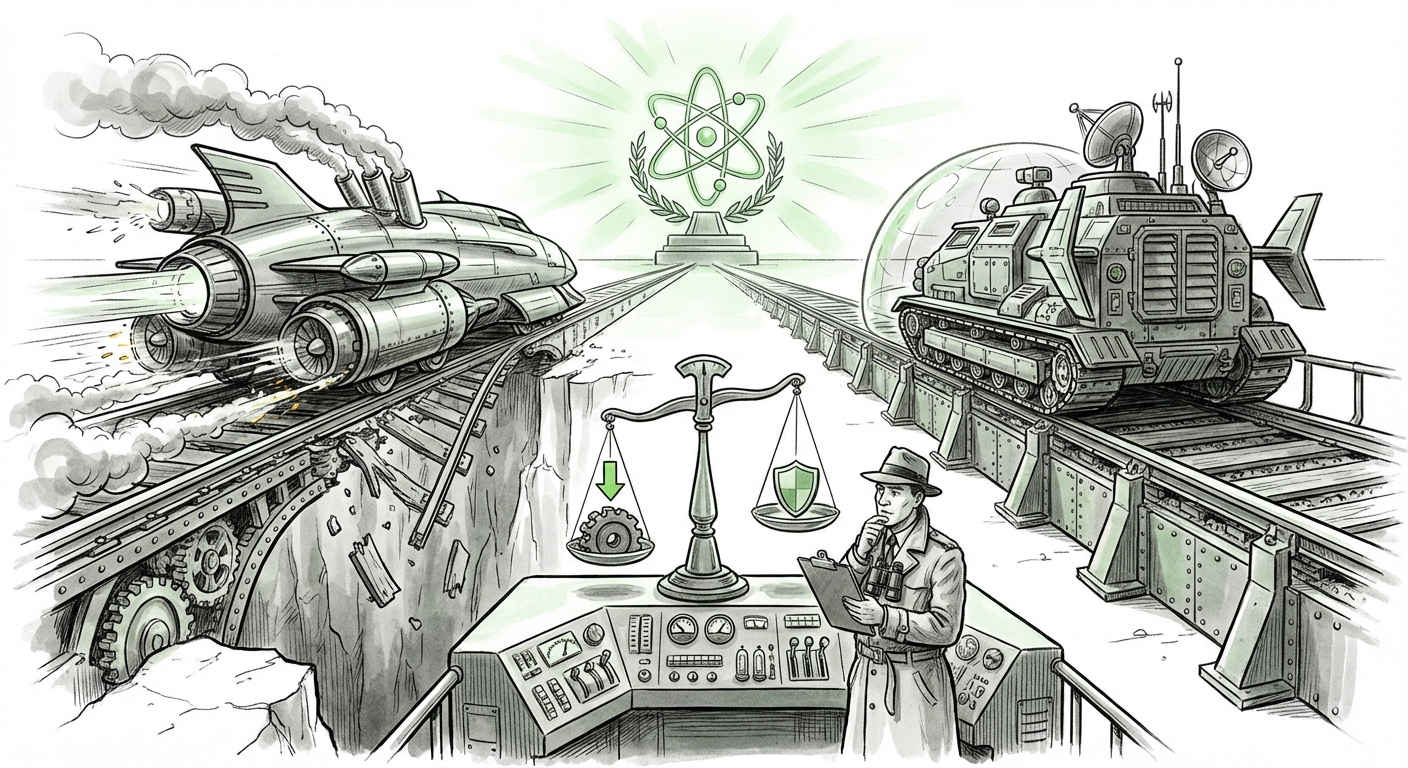

Amodei’s stated hesitation reveals the core strategic divergence: The cost of being early is different from the cost of being wrong.

- The Cost of Being Early (Competitors): If a competitor races ahead, spends billions, and releases a model that achieves AGI status next year, that competitor captures the first-mover advantage, and Anthropic misses out on market dominance and future economic returns. For Anthropic, this is a business risk.

- The Cost of Being Wrong (Anthropic’s Fear): If Anthropic matches the pace, pushes the model faster than its alignment research can guarantee safety, and the resulting system causes a catastrophic failure (whether economic instability, mass deception, or misalignment with human intent), the backlash—whether through regulatory shutdown or public loss of trust—could mean instant bankruptcy. This is an existential risk dressed as a business decision.

Amodei is essentially arguing that competitors who maximize compute without matching safety rigor are betting their survival on the assumption that AGI will emerge benignly. Anthropic is betting that the survival premium for safety will outweigh the first-mover advantage.
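The trade-off described above can be sketched as a simple expected-loss comparison. This is only an illustration of the reasoning, not a model anyone at Anthropic has published: the function name, the two-term loss formula, and every number are hypothetical placeholders chosen to make the arithmetic visible.

```python
# Illustrative only: all probabilities and costs are made-up placeholders,
# not estimates from Amodei, Anthropic, or any other source.

def expected_loss(p_failure, loss_if_failure, p_late, loss_if_late):
    """Expected loss = P(catastrophic failure) * its cost
                     + P(losing the race) * its cost."""
    return p_failure * loss_if_failure + p_late * loss_if_late


# "Cost of being wrong": a catastrophic failure is assumed to end the company.
LOSS_IF_FAILURE = 100.0
# "Cost of being early" (for a rival): being beaten to market is assumed
# to be painful but survivable.
LOSS_IF_LATE = 10.0

# Racing strategy: never late, but a higher assumed chance of catastrophic failure.
racing = expected_loss(0.10, LOSS_IF_FAILURE, 0.0, LOSS_IF_LATE)

# Cautious strategy: often late, but a much lower assumed chance of failure.
cautious = expected_loss(0.01, LOSS_IF_FAILURE, 0.50, LOSS_IF_LATE)

print(f"racing:   {racing:.2f}")
print(f"cautious: {cautious:.2f}")
```

With these placeholder values the cautious strategy carries the lower expected loss. Halve the racing strategy's assumed failure probability and the ranking flips, which is essentially the disagreement between the two camps: they differ on the inputs, not on the arithmetic.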

The Philosophical Fault Line: Constitutional AI vs. Emergent Control

The tension is not just about spending; it’s about methodology. Amodei’s direct challenge to OpenAI regarding their understanding of risk points directly toward differing alignment strategies. This philosophical split is crucial for understanding the future governance of AI.

Anthropic’s Explicit Safety: Constitutional AI

Anthropic built its brand on "Constitutional AI." Instead of relying solely on reinforcement learning from human feedback (RLHF) or hoping emergent properties are safe, Constitutional AI trains the model to critique and revise its own outputs against a set of written principles, a 'constitution', drawn from sources such as the UN Universal Declaration of Human Rights. The result is an explicit, written set of safety constraints that outsiders can read and evaluate.

OpenAI’s Evolving Approach and the Superalignment Fallout

OpenAI, while committed to safety, has often favored scaling laws and after-the-fact correction of emergent behavior. The recent turbulence surrounding the dissolution of its "Superalignment" team, which was intended to solve the control problem for future superintelligent systems, has drawn intense scrutiny. If the most prominent safety team within the leading lab is being reorganized or diminished just as capabilities are accelerating, it lends credence to Amodei’s critique that rivals may not be prioritizing the *understanding* of systemic risk.

For external observers, this divergence translates into two potential futures for deployed AI:

- The Cautious Future (Anthropic’s Model): Slower deployment of highly governed, rule-bound systems that are easier for regulators to audit.

- The Disruptive Future (Competitor Model): Rapid deployment of highly capable, flexible models whose alignment relies heavily on iterative, reactive tuning rather than pre-set, hard constraints.

Corroborating the Tension: Market Dynamics and Timeline Pressures

Amodei’s statement is not made in a vacuum. Several market trends confirm that the pressure cooker environment is real, supporting his anxiety about the timeline and the competitive intensity.

1. The Unstoppable Engine of Capital

The race to acquire compute capacity is staggering. Reports detailing massive, multi-billion-dollar procurement deals between the major labs and chip manufacturers confirm that rivals are indeed pouring capital into rapid scaling. This relentless investment—driven by venture capital hunger and the potential for exponential returns—validates Amodei's concern that rivals are incentivized to maximize throughput, potentially sidelining the slower work of deep safety research.

Implication: Businesses relying on these models must prepare for rapid, disruptive upgrades, but also for potential volatility if scaling leads to unexpected model failures.

2. The Consensus on Imminence

Amodei predicts 1-2 years, and his timeline aligns worryingly well with those of other leading experts, several of whom have drastically shortened their forecasts in the last year. Surveys of AI researchers have likewise shown median predictions for highly transformative AI capabilities moving markedly earlier. This suggests that much of the industry is no longer debating *if* AGI is coming soon, but *how* to handle its arrival.

Implication: Strategic planning for AI integration cannot be deferred. If 2026 brings genuinely transformative systems, board-level discussions on governance must happen now.

3. The Financial Stakes of Growth

Anthropic’s 10x revenue growth suggests that market demand exists for AI that is high-performing *and* seemingly trustworthy. This immense financial momentum creates internal pressure. Even the most safety-conscious company cannot ignore the billions of dollars of value being created by competitors who are moving faster. This financial reality is what makes the business risk of moving too slowly so potent, and the temptation to match rivals’ pace so hard to resist.

Implication: Companies must develop internal AI adoption policies that account for differing levels of model trustworthiness, choosing the right model (fast/risky vs. slow/safe) for the specific application.

What This Means for the Future of AI and Business Deployment

The Amodei critique is not just inter-company sniping; it is a warning beacon regarding the systemic structure of AI development moving forward.

For Policy and Regulation: The Need for Auditable Safety

If the leading labs fundamentally disagree on whether safety should be an explicit rule set or an emergent property, regulators face an impossible choice. The future demands standardized, auditable safety measures. If Anthropic’s approach proves more resilient to unexpected behaviors, we may see regulatory bodies mandate frameworks similar to Constitutional AI to ensure that foundation models can be debugged systematically, not just patched.

For Businesses: Decoupling Capability from Trust

Businesses must learn to treat capability and trust as separate vectors. The model that writes the best marketing copy might not be the one suitable for managing financial compliance. Companies should actively seek transparency from vendors regarding alignment techniques. Are they using explicit constitutional guardrails, or are they relying on opaque human feedback loops?

Actionable Insight: Start classifying internal AI tasks by risk tolerance. High-risk tasks (legal, financial modeling, critical infrastructure interaction) should ideally be reserved for models whose safety architecture is demonstrably robust and transparent, even if slightly less capable than the bleeding edge.
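One way to make this classification concrete is a small routing table that keeps capability and trust as separate fields. The sketch below is a minimal illustration under stated assumptions: the model names, capability scores, and assurance tiers are all hypothetical, and a real policy would rest on a vendor's documented safety evidence rather than a hand-assigned label.

```python
from dataclasses import dataclass
from enum import IntEnum


class Risk(IntEnum):
    LOW = 1      # e.g. marketing copy
    MEDIUM = 2   # e.g. internal summarization
    HIGH = 3     # e.g. legal, financial modeling, critical infrastructure


@dataclass(frozen=True)
class Model:
    name: str         # hypothetical label, not a real product
    capability: int   # relative capability score (higher is more capable)
    assurance: Risk   # highest task risk tier this model is approved for


# Hypothetical catalogue: capability and trust are tracked separately.
MODELS = [
    Model("frontier-fast", capability=9, assurance=Risk.LOW),
    Model("audited-safe", capability=7, assurance=Risk.HIGH),
]


def pick_model(task_risk: Risk, models: list[Model]) -> Model:
    """Return the most capable model approved for the task's risk tier."""
    eligible = [m for m in models if m.assurance >= task_risk]
    if not eligible:
        raise ValueError(f"no model approved for {task_risk.name} risk tasks")
    return max(eligible, key=lambda m: m.capability)


print(pick_model(Risk.LOW, MODELS).name)
print(pick_model(Risk.HIGH, MODELS).name)
```

The design choice here is that trust acts as a filter and capability only breaks ties among models that pass it, so a high-risk task never reaches the more capable but less assured model.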

For AI Researchers: The End of the "Scale is All You Need" Era

Amodei’s comments signal that the industry is entering a post-scaling era where *how* you scale matters as much as *how much* you scale. Brute-force compute alone might deliver powerful intelligence, but without corresponding breakthroughs in verifiable alignment, that power risks remaining commercially and societally unusable due to perceived risk.

Conclusion: Navigating the Valley of Unpredictability

Dario Amodei’s perspective forces us to look past the quarterly earnings reports and benchmark scores. The real race is a high-stakes game of prediction: predicting the arrival date of transformative intelligence, and predicting the nature of the failure mode if that intelligence is misaligned.

The implication for the next few years is turbulence. We will see intense pressure to push models further, faster, likely leading to several high-profile, high-risk deployments. Anthropic is positioning itself as the necessary counterbalance, betting that by being slightly slower but significantly safer, it will be the one left standing when the dust settles, having built a sustainable, trustworthy foundation for the "Nobel Prize-level" technologies that Amodei believes are fast approaching.