The Great AI Divide: Why Anthropic vs. OpenAI Signals the Future of Frontier Model Development

The race to Artificial General Intelligence (AGI) is not just a competition for technological supremacy; it is rapidly becoming a philosophical battleground. Recent commentary from Anthropic CEO Dario Amodei has brought this tension to the forefront, suggesting that his competitor, OpenAI, may be scaling its models faster than it understands the risks involved, and faster than safety protocols can mature.

Amodei’s candid remarks—noting that Nobel Prize-level AI could be just a year or two away, yet expressing hesitation about pushing compute limits because a single misstep could lead to bankruptcy—reveal a critical strategic divergence. On one side, we have the ethos of rapid deployment and market capture; on the other, a deep commitment to measured scaling anchored in safety. Understanding this divide is key to predicting the next five years of AI development.

The Core Conflict: Speed vs. Safety in the Compute Arms Race

The foundation of modern frontier AI models (like GPT-4 or Claude 3) is **compute**—the massive amount of processing power needed to train them. This necessity has fueled a fierce, capital-intensive competition often dubbed the "Compute Arms Race." Amodei’s skepticism centers precisely here. If achieving world-changing intelligence is only 12–24 months away, the stakes of miscalculation are astronomical.

Anthropic’s reluctance to "go all in on compute" immediately, despite its revenue growing 10x year-over-year, suggests the company has calculated the downside risk differently than its rivals. For a business, being off by a year on profitability can mean failure; for an AI lab chasing AGI, being off by a year on safety implementation could mean catastrophe, regardless of revenue.

Implication 1: The Financial Mathematics of Risk

When analyzing the competitive landscape, we must look beyond simple revenue metrics. Public reports now detail the sheer scale of capital needed for the next generation of models, and this investment pressure naturally rewards speed.

However, Anthropic appears to be prioritizing the *quality* of scaling over the *speed* of scaling. This implies they are dedicating significant resources not just to raw training data, but to developing robust mechanisms for control, interpretability, and alignment *before* hitting peak capability. For infrastructure specialists and investors, this means betting on two different long-term payoffs: immediate market dominance versus sustained, trustworthy innovation.

The Philosophical Divide: Constitutional AI vs. Rapid Iteration

The specific approach to building trustworthy AI forms the philosophical chasm between the leading labs. Anthropic’s answer to the control problem is **Constitutional AI**—training models against a set of written principles designed to guide behavior ethically. This methodology is inherently more structured and deliberate.

When Amodei suggests competitors "don't really understand the risks," he is implicitly questioning whether their safety overlay mechanisms are as robust as their scaling metrics. For the general public and policy makers, this highlights the critical nature of **AI Alignment**—ensuring advanced AI systems pursue goals beneficial to humanity.

If OpenAI’s development track focuses on sheer emergent capability, the risk is that safety guardrails become reactive patches applied *after* a model demonstrates concerning behavior. Anthropic’s strategy suggests a proactive defense, treating safety as foundational architecture rather than an after-market upgrade.

The Evidence of Internal Dissent

To corroborate Amodei's perspective, one must look at external indicators of internal tensions within competitor labs. Reports surrounding significant internal governance shifts often point back to fundamental disagreements over timelines, commercial pressure, and safety protocols. High-profile departures or moments of corporate instability frequently arise when safety-focused researchers feel their concerns about aggressive timelines are being overridden by commercial imperatives.

For businesses seeking to integrate these powerful tools, this internal discord signals volatility. If the development philosophy itself is in flux, the reliability and long-term roadmap of the deployed models become uncertain variables.

The Approaching Threshold: What is "Nobel Prize-Level AI"?

Amodei’s prediction of Nobel Prize-level intelligence within two years is provocative. This term suggests AI capable of genuinely novel scientific discovery, complex theoretical synthesis, and creative problem-solving far beyond current capabilities—not just pattern matching or complex coding, but true invention.

This timeline aligns with the more aggressive forecasts within the expert community. If leading minds truly believe AGI breakthroughs are imminent, it fundamentally reframes the entire technological landscape. It transforms AI from a productivity tool into an engine of scientific acceleration.

Expert Consensus and Market Reaction

If recent surveys of AI researchers confirm that the median prediction for transformative AGI is indeed compressing towards the 1-3 year mark, Amodei’s statement is validated as a pragmatic assessment of the technological horizon, rather than mere hyperbole. This near-term realization forces businesses to accelerate planning:

- For R&D Departments: The time to build internal AI competency is *now*, not when the technology is fully mature.

- For Legal/Compliance Teams: Regulatory frameworks must adapt to capabilities that appear within months, not decades.

- For Strategy Officers: Competitive advantages based on current model performance may be obsolete within 18 months.

Practical Implications for Businesses: Choosing a Partner in the Fracture

The strategic divergence between Anthropic and OpenAI places corporate users in a position where they must choose alignment with a philosophical stance, not just a technological product.

1. The High-Risk, High-Reward Play (Associated with Aggressive Scaling)

Companies that need the absolute cutting edge immediately, are willing to accept greater governance uncertainty, and possess strong internal teams capable of rigorous red-teaming might lean toward partners prioritizing speed. This choice maximizes immediate performance gains but comes with higher reputational and operational risk if unforeseen model failures occur.

2. The Sustainable, Governed Play (Associated with Measured Scaling)

Businesses operating in highly regulated sectors (finance, healthcare, law) or those prioritizing long-term brand safety will find Anthropic’s methodical, safety-first approach more attractive. The company’s 10x YoY revenue growth is evidence that safety is a marketable feature, not just a cost center, especially when backed by major partners like Amazon and Google.

Anthropic's focus means its models, like Claude, are designed from the ground up to be constrained and predictable. While they may sit slightly behind the absolute bleeding edge of capability today, this path promises greater stability tomorrow.

Actionable Insights: Navigating the Divergence

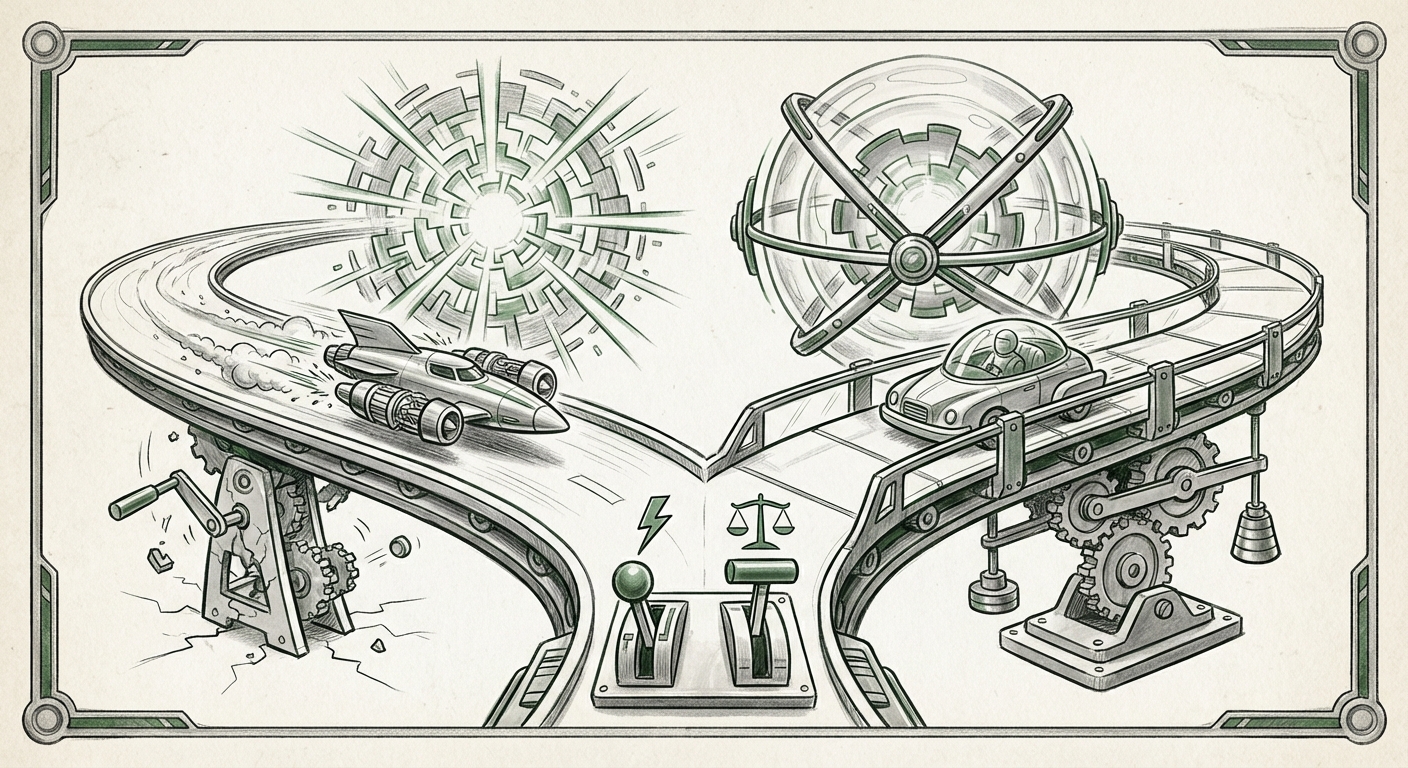

The takeaway from this ideological friction is clear: the future of AI will not be monolithic. It will be bifurcated, driven by differing tolerances for risk.

Insight 1: Diversify Your AI Supply Chain

Relying on a single frontier provider is becoming increasingly dangerous. Companies should actively test and integrate models from both philosophical camps. If one model exhibits unacceptable brittleness or bias, the other may offer a stable alternative built on different core safety assumptions.

Insight 2: Prioritize Interpretability Research Internally

Regardless of which vendor a company chooses, the internal team must prioritize understanding *why* the model makes the decisions it does. If the external vendor is prioritizing speed, the internal team must prioritize diligence. The cost of external auditing and validation must be factored into the budget for AI adoption.

Insight 3: Engage Early with Policy Makers

The debate between Amodei and his peers is the very policy debate governments are currently having. Businesses that understand the nuances—the tradeoff between faster scientific breakthroughs and greater systemic risk—are best positioned to influence emerging regulations favorably, rather than reacting to mandates handed down later.

Conclusion: The Era of Intentional AI Development

Dario Amodei’s comments serve as a critical wake-up call. They confirm that the most advanced minds in AI are grappling with two urgent questions: How fast is too fast, and what is the real cost of being wrong?

The split between measured alignment and breakneck scaling is defining the AI landscape. It implies that the next great leaps in intelligence will be accompanied by two very different flavors of technological product: one dazzlingly powerful but potentially volatile, and the other robustly controlled but perhaps requiring more patience.

For the world, this tension is beneficial. Competition drives progress, but philosophical friction drives necessary caution. The future of powerful AI will be shaped not just by who builds the smartest model, but by who builds the model they can most confidently trust to operate within the boundaries of human flourishing.