The Price War in AI: How ByteDance's Seed2.0 is Redefining the Economics of Generative Models

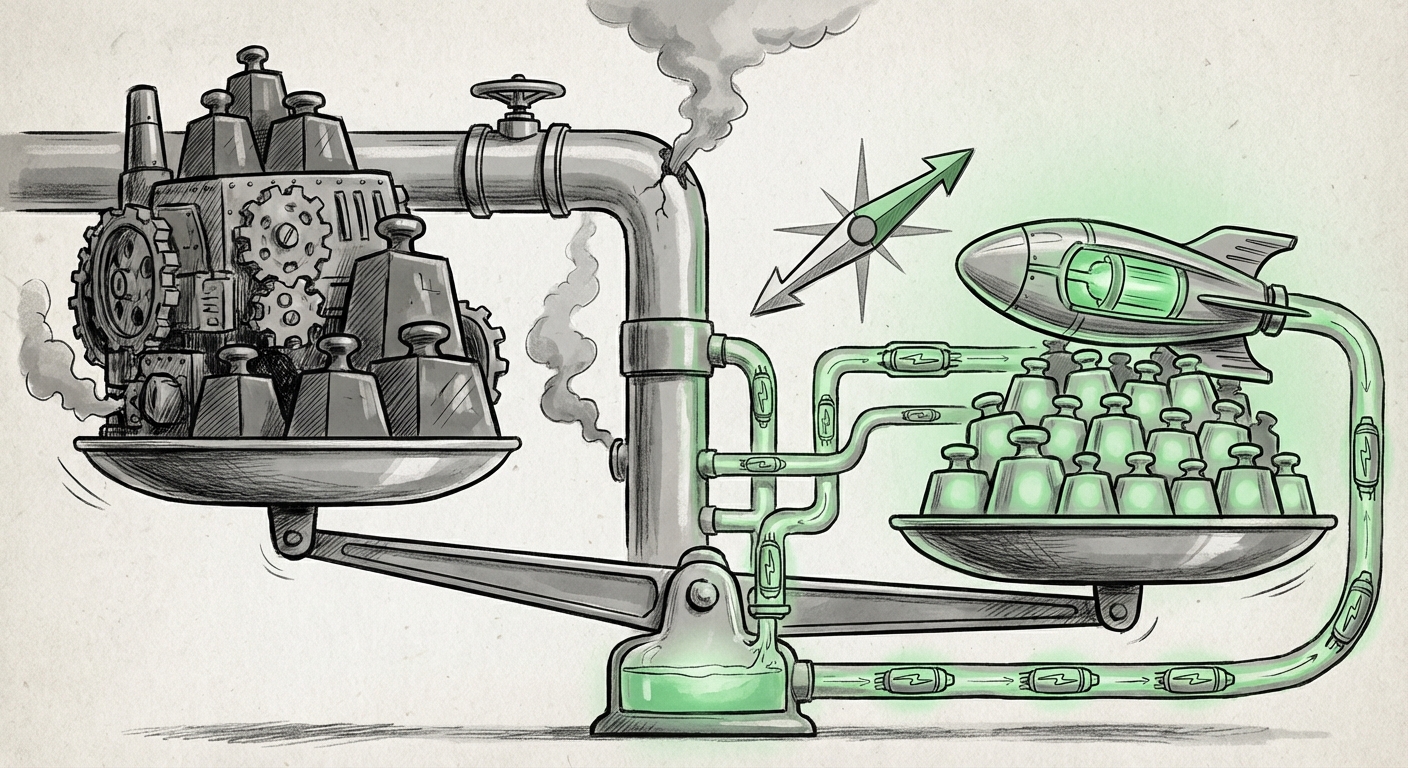

The race to build the world’s most capable artificial intelligence models has, until recently, been dominated by a handful of well-funded Western technology giants. The narrative centered on ever-increasing scale: more parameters, more data, and, consequently, soaring training costs. However, a familiar, disruptive pattern is emerging from Asia: high performance delivered at a fraction of the price.

The recent unveiling of ByteDance’s Seed2.0 model series is the latest, loudest signal of this shift. Reports indicate that these new models match or exceed leading Western offerings on performance benchmarks while being drastically cheaper to use. This isn't a minor update; it is a strategic move that threatens to rewrite the unit economics of the entire generative AI industry, forcing incumbents to confront a new reality: frontier-level performance might soon be a commodity.

The Shifting Landscape: From Scale to Efficiency

For years, achieving state-of-the-art (SOTA) results seemed inextricably linked to monumental investment. Companies like OpenAI and Google poured billions into massive GPU clusters to train frontier models like GPT-4 and Gemini. This created a high barrier to entry, protecting early market leaders. ByteDance’s Seed2.0 suggests that this monopoly on performance, built on brute-force scale, is crumbling.

This trend echoes historical technology cycles. When mainframes dominated, personal computers offered usability and affordability, eventually seizing the market. Now, AI is facing its PC moment. To understand the full gravity of ByteDance’s move, we must analyze four key areas that shape this developing price war:

- The direct quantitative impact of the pricing difference.

- The technological breakthroughs enabling such efficiency.

- The downstream financial effects on infrastructure providers.

- The broader geopolitical context of technological competition.

1. Quantifying the Price Squeeze: Chinese Models vs. Western Giants

For business leaders and procurement managers, a model that costs "a fraction of the price" translates directly into operational savings and broader accessibility. If a major enterprise relies on GPT-4 for complex coding assistance or customer service orchestration, switching to a model that performs comparably but cuts API costs by 70% or 80% becomes an urgent priority.
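To make that arithmetic concrete, here is a minimal sketch. The monthly token volume and the incumbent's per-million-token price are hypothetical placeholders, not published prices for any vendor; only the 70% and 80% discounts come from the scenario above.

```python
def monthly_api_cost(tokens_per_month: int, price_per_million_tokens: float) -> float:
    """Monthly spend for a given token volume at a flat per-million-token price."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens


# Hypothetical workload and incumbent price, chosen only for illustration.
TOKENS_PER_MONTH = 2_000_000_000
INCUMBENT_PRICE = 10.00  # dollars per million tokens (assumed)

baseline = monthly_api_cost(TOKENS_PER_MONTH, INCUMBENT_PRICE)

for discount in (0.70, 0.80):
    cheaper_price = INCUMBENT_PRICE * (1 - discount)
    cheaper = monthly_api_cost(TOKENS_PER_MONTH, cheaper_price)
    print(f"{discount:.0%} cheaper: ${baseline:,.0f} -> ${cheaper:,.0f} per month, "
          f"saving ${baseline - cheaper:,.0f}")
```

Because per-token pricing is linear, the savings scale with volume: the larger the workload, the harder the discount is to ignore.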

We need to look critically at the comparative economics. Comparing Chinese AI models with OpenAI's pricing structure reveals a consistent strategy: undercut the market leaders not by small margins, but by large multiples. This aggressive pricing forces Western providers into a difficult bind. They cannot easily slash their own prices because their development costs and operational expenditures (OpEx) remain staggeringly high due to the massive scale of their deployed models.

For enterprise CTOs and investors, this means that vendor lock-in is becoming riskier. The cost-benefit analysis shifts. Why pay a premium for a marginal improvement in coherence when a significantly cheaper model handles 95% of the required tasks perfectly well? This relentless downward pressure on per-token pricing erodes the profit margins that high-cost models currently enjoy.

2. The "How": Unlocking Efficiency in LLM Training

How are companies like ByteDance achieving parity, seemingly without matching the sheer scale of the Western flagships? The answer lies in deep engineering innovation focused on efficiency, reflecting emerging trends in efficient large language model (LLM) training.

The focus is shifting from merely adding more layers to perfecting the training data and inference architecture. Key areas of innovation include:

- Data Curation: A greater emphasis on high-quality, targeted data rather than simply scraping the entire internet. Better data means smaller, smarter models.

- Architectural Optimizations: The adoption and refinement of techniques like Mixture-of-Experts (MoE), where only parts of the massive model are activated for any given query, drastically reducing the compute needed at inference time.

- Hardware-Aware Training: Designing models specifically optimized for the available domestic hardware infrastructure, rather than relying solely on the latest high-end chips.
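The Mixture-of-Experts idea in the list above can be sketched in a few lines. This is a generic top-k gating illustration in plain Python, not a description of Seed2.0's architecture; the expert count, dimensions, toy experts, and random gate weights are all assumptions made for the example.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(x, gate_weights, experts, top_k=2):
    """Route input x to the top_k experts and mix their outputs.

    gate_weights: one weight vector per expert, used to score x.
    experts: list of callables, each mapping a vector to a vector.
    Returns the mixed output and the indices of the experts that ran.
    """
    # The gate score for each expert is a dot product with the input.
    scores = [sum(w * xi for w, xi in zip(weights, x)) for weights in gate_weights]
    # Keep only the top_k highest-scoring experts; the rest are never executed.
    chosen = sorted(range(len(experts)), key=lambda i: scores[i], reverse=True)[:top_k]
    # Renormalize the gate over the chosen experts only.
    mix = softmax([scores[i] for i in chosen])
    output = [0.0] * len(x)
    for weight, idx in zip(mix, chosen):
        expert_out = experts[idx](x)
        output = [o + weight * e for o, e in zip(output, expert_out)]
    return output, chosen


def make_expert(scale):
    """Toy expert that scales its input (a stand-in for a feed-forward block)."""
    return lambda x: [scale * xi for xi in x]


random.seed(0)
NUM_EXPERTS, DIM = 8, 4  # assumed sizes, for illustration only
experts = [make_expert(i + 1) for i in range(NUM_EXPERTS)]
gate_weights = [[random.uniform(-1, 1) for _ in range(DIM)] for _ in range(NUM_EXPERTS)]

output, chosen = moe_forward([0.5, -1.0, 2.0, 0.1], gate_weights, experts, top_k=2)
print(f"ran {len(chosen)} of {NUM_EXPERTS} experts: {chosen}")
```

The saving comes from the experts that never run: with `top_k=2` out of 8, only a quarter of the expert computation is performed for this input, even though all eight experts' parameters exist.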

For ML engineers and researchers, this suggests that the era of the monolithic, trillion-parameter model being the only path to intelligence is over. Efficiency is the new moat. Companies that master data quality and inference optimization can deliver SOTA performance at a sustainable, low operational cost, making their business models far more resilient to market fluctuations.

3. The Ripple Effect: Cloud Infrastructure and Investment

The cost of the foundation model itself is only one part of the equation. The massive compute required to run these models, the inference phase, is where the true long-term expense lies, which is why high-performance, low-cost AI has a direct impact on cloud infrastructure spending.

If Seed2.0 and its peers make AI cheaper to run, the entire financial calculus for cloud providers (AWS, Azure, GCP) and the investors backing AI infrastructure must adjust. Previously, the assumption was that massive compute demand would sustain high infrastructure spending for years to come.

- Deflationary Pressure: Cheaper models mean clients need less time on expensive A100 or H100 GPUs for the same workload. This creates deflationary pressure on cloud compute pricing itself.

- Shifting CAPEX: For enterprises, the decision may move away from building massive internal AI infrastructure (a high Capital Expenditure) toward smart, high-volume API consumption. However, if those API costs drop rapidly, the justification for building internal infrastructure weakens even further, favoring agility over ownership.
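The build-versus-buy trade-off in the last point can be framed as a break-even calculation. The sketch below is a simplified model with hypothetical numbers: the fixed monthly infrastructure cost, the self-hosted marginal cost, and the API prices are all assumptions for illustration, not real vendor figures.

```python
def breakeven_tokens_per_month(monthly_infra_cost: float,
                               api_price_per_million: float,
                               self_host_price_per_million: float) -> float:
    """Monthly token volume above which self-hosting is cheaper than the API.

    Self-hosting cost = fixed monthly infrastructure cost + marginal cost per token.
    API cost = price per token only. Returns infinity when the API is at least as
    cheap per token as the self-hosted marginal cost, since no break-even exists.
    """
    saving_per_million = api_price_per_million - self_host_price_per_million
    if saving_per_million <= 0:
        return float("inf")
    return monthly_infra_cost / saving_per_million * 1_000_000


MONTHLY_INFRA_COST = 50_000.0  # amortized hardware, power, and staff (assumed)
SELF_HOST_MARGINAL = 0.50      # dollars per million tokens once the cluster exists (assumed)

for api_price in (10.00, 3.00, 1.00):
    volume = breakeven_tokens_per_month(MONTHLY_INFRA_COST, api_price, SELF_HOST_MARGINAL)
    print(f"API at ${api_price:.2f}/M tokens: break-even at {volume / 1e9:,.1f}B tokens per month")
```

Under this model, every drop in the API price pushes the break-even volume higher, so fewer organizations have enough traffic to justify owning the hardware.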

This has profound implications for Venture Capitalists and Infrastructure Analysts. The premium valuation placed on companies whose primary value proposition is access to massive compute capacity might need recalibration. The new winners will be those who build the most efficient software layers *on top* of the foundational models, or those who can deliver proprietary, specialized chips that offer superior performance-per-watt.

4. Beyond Benchmarks: The Geopolitical Dimension

Finally, the strategic significance of ByteDance’s release cannot be ignored when analyzing the geopolitical implications of open-source versus proprietary AI competition. ByteDance is a global entity with roots in China, a country currently navigating significant technological decoupling from the West, particularly concerning access to cutting-edge semiconductor technology.

The availability of powerful, affordable, non-US foundational models offers strategic optionality for global enterprises and governments alike. It mitigates reliance on any single national or corporate AI ecosystem. For Policy Makers and Corporate Strategists, this presents a complex balancing act:

- Technological Sovereignty: Nations concerned about data governance or relying on US-controlled infrastructure can pivot toward validated, high-performing alternatives emanating from other technology hubs.

- Innovation Diffusion: When world-class tools are dramatically cheaper, innovation accelerates globally, not just in the regions that invested the most initially.

This competition pushes Western leaders to focus intensely on what they can guard: true frontier capabilities that require access to the absolute newest chip generations, and proprietary enterprise data used in ways that non-local competitors cannot match.

Future Implications: Actionable Insights for a New AI Era

The era of simply buying the biggest, most expensive model for every task is drawing to a close. The arrival of low-cost, high-performance models like Seed2.0 signals a maturation of the AI market into a utility-like service, similar to cloud storage or basic compute power.

Implication 1: The Rise of the 'Model Zoo' Strategy

Businesses must move away from single-model dependency. The future involves a sophisticated "Model Zoo" approach. Why use a $100/hour premium model to summarize an internal memo when a $5/hour model that delivers 98% of the performance suffices? Organizations should focus on Model Routing Intelligence: the software layer that automatically selects the cheapest, fastest, and most accurate model for any given query.
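A routing layer of this kind can start very simply. The sketch below picks the cheapest model that clears a per-task quality bar; the model names, prices, quality scores, and task thresholds are all hypothetical, and in practice the quality scores would come from an organization's own evaluation set rather than public benchmarks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str
    price_per_million_tokens: float  # dollars (assumed)
    quality: float                   # score in [0, 1] from an in-house evaluation (assumed)


def route(options, min_quality):
    """Return the cheapest model whose measured quality meets the task's bar."""
    eligible = [m for m in options if m.quality >= min_quality]
    if not eligible:
        raise ValueError(f"no model meets quality >= {min_quality}")
    return min(eligible, key=lambda m: m.price_per_million_tokens)


# Hypothetical zoo: names and numbers are placeholders, not real products.
ZOO = [
    ModelOption("premium-model", 10.00, 0.95),
    ModelOption("mid-model", 2.00, 0.90),
    ModelOption("budget-model", 0.50, 0.80),
]

# Each task type declares how good the answer has to be.
TASK_QUALITY_BAR = {"memo_summary": 0.75, "contract_review": 0.93}

for task, bar in TASK_QUALITY_BAR.items():
    print(f"{task}: {route(ZOO, bar).name}")
```

A production router would also weigh latency and fall back when a vendor is unavailable, but the core decision stays the same: set the bar per task, then buy the cheapest model that clears it.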

Actionable Insight: Invest immediately in MLOps tools focused on multi-model orchestration and performance tracking across diverse vendors, optimizing for cost-to-performance ratios rather than absolute benchmark scores.

Implication 2: Revaluation of AI Talent

As access to powerful models becomes cheaper, the value shifts from the model builders to the model users and integrators. The premium on deep learning researchers who can only train trillion-parameter models may plateau. Instead, high value will be placed on prompt engineers, AI ethicists, domain experts who can fine-tune these accessible models for niche tasks, and software architects who can integrate diverse AI services reliably.

Actionable Insight: Upskill existing engineering teams in fine-tuning, RAG (Retrieval-Augmented Generation), and efficient API integration, rather than solely chasing the race for proprietary foundation model development.

Implication 3: Competitive Pressure on Cloud Hyperscalers

If foundational model training and inference costs drop dramatically, the immediate pressure will be felt by the companies selling the infrastructure used to train those models. This forces hyperscalers to innovate on specialized silicon or move rapidly up the value stack into offering highly differentiated software services layered on top of the models.

Actionable Insight: Enterprises should monitor cloud providers’ custom chip roadmaps (like Google’s TPUs or AWS’s Trainium/Inferentia), as these specialized accelerators may be the only way those providers can justify charging a premium over commodity model access.

Conclusion: Utility, Not Rarity, Defines the Next Decade

ByteDance’s Seed2.0 is more than just a new product release; it is a declaration that the foundational AI market is entering a phase of commoditization driven by engineering rigor. The intense competition between US and Chinese technological powerhouses is serving as a powerful accelerator for efficiency gains globally.

The future of AI usage will be characterized by *ubiquity* rather than *rarity*. The capability to perform high-level reasoning and generation will become widespread and inexpensive. Success for businesses will hinge not on who can afford the most expensive foundational model, but on who can most creatively and cost-effectively deploy the optimal, often cheaper, model for millions of specific tasks. The price war has begun, and the ultimate winners will be those who master efficiency in deployment.