Bird Song to Whale Song: How DeepMind's AI Generalization Signals the Era of Universal Foundation Models

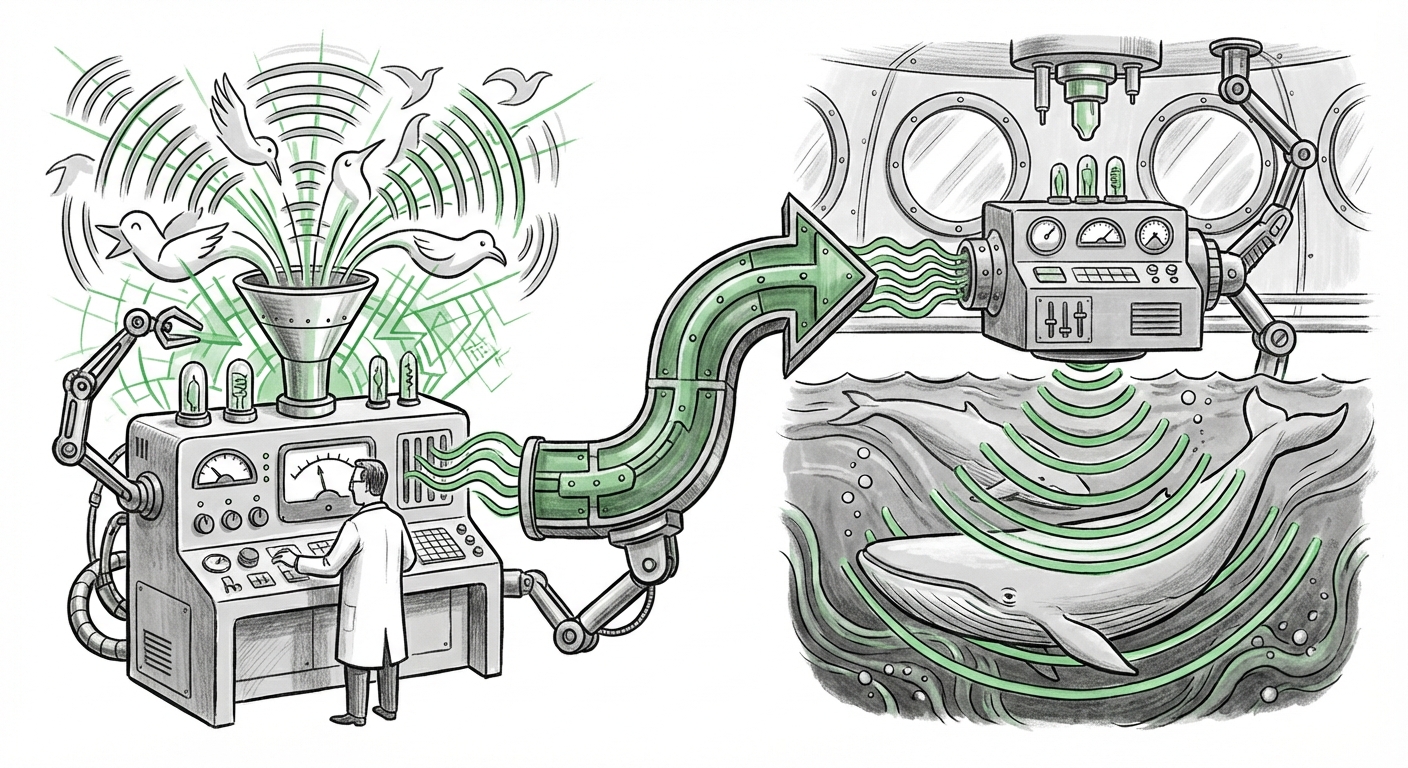

Artificial intelligence research keeps pushing for higher performance, but that performance often comes at a steep cost: massive, carefully labeled datasets. A recent result from Google DeepMind challenges this data-centric paradigm. Imagine training an AI model primarily on the chirps and calls of birds, a vast and well-documented acoustic domain, and then using that same model to identify the complex underwater vocalizations of whales. This is what DeepMind reports having achieved, a striking demonstration of generalization with implications for everything from ecology to industrial automation.

As an AI analyst, I view this not just as an interesting scientific footnote, but as a pivotal moment signaling the true arrival of robust, foundational models capable of transferring knowledge across vastly different specialized domains. This leap suggests our AI systems are beginning to learn the universal grammar of sound itself.

The Phenomenon: Birds Training Whales

The core result is a case of overcoming domain shift. Traditionally, a model built to classify birds (say, identifying species from their songs) would be expected to be of little use for classifying low-frequency whale calls, because the two are acoustically distant worlds. Yet DeepMind's model, trained predominantly on bird data, classified whale sounds better than models trained specifically on whale data.

How is this possible? The answer, as suggested by the research, lies in evolutionary biology and the physics of sound. The AI, forced to learn complex features from the bird data, wasn't just memorizing individual calls. It was likely learning the fundamental building blocks of acoustic communication: how pitch changes over time (frequency modulation), the structure of repeating patterns, and the amplitude envelope of a complex signal.

Even though a bird's chirp and a whale's low-frequency moan sound different to us, the underlying mathematical structures that define a meaningful "sound event" might share common ancestry in how animals interact with their physical environments. The AI, through its intense training, stumbled upon these shared, fundamental principles.

Context 1: The Rise of Foundational Audio Models

This bird-to-whale success story is a real-world validation of ongoing research into large **Foundation Models** for sensory data. Just as large language models (LLMs) learn the syntax and semantics of human language by training on vast amounts of text, researchers are building generalized audio models.

These models leverage **Self-Supervised Learning (SSL)**. Instead of needing millions of human labels (e.g., "this is a robin," "this is a humpback whale"), SSL allows the AI to learn by hiding parts of the data from itself and learning to predict the missing piece. This forces the model to build an internal, nuanced representation of the soundscape.
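To make the "hide and predict" idea concrete, here is a minimal sketch under strong simplifying assumptions. The "recording" is a synthetic 440 Hz tone, and the "model" is a single weight `a` that predicts a hidden sample from its two neighbours. The names `make_tone`, `fit_masked_predictor`, and `masked_error` are illustrative and do not come from the DeepMind work; real systems mask whole spans of audio and train deep networks rather than one weight.

```python
import math

def make_tone(freq_hz, sample_rate=8000, n=400):
    """Synthetic stand-in for a recording: a pure tone, no labels attached."""
    return [math.sin(2 * math.pi * freq_hz * t / sample_rate) for t in range(n)]

def fit_masked_predictor(signal):
    """Hide each interior sample in turn and fit one weight `a` so that
    a * (left neighbour + right neighbour) best predicts it (least squares)."""
    num = den = 0.0
    for t in range(1, len(signal) - 1):
        context = signal[t - 1] + signal[t + 1]
        num += signal[t] * context
        den += context * context
    return num / den

def masked_error(signal, a):
    """Mean absolute error when reconstructing every hidden interior sample."""
    errs = [abs(signal[t] - a * (signal[t - 1] + signal[t + 1]))
            for t in range(1, len(signal) - 1)]
    return sum(errs) / len(errs)

tone = make_tone(440.0)
a = fit_masked_predictor(tone)

print("learned weight:", a)
print("error with learned weight:", masked_error(tone, a))
print("error with naive averaging (a = 0.5):", masked_error(tone, 0.5))
```

For a pure tone, the fitted weight works out to 1 / (2 cos ω), where ω is the tone's angular frequency per sample. The predictor has in effect encoded the pitch of the signal without ever being given a label, which is the intuition behind self-supervised representation learning.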

Research into models like **Wav2Vec 2.0** (self-supervised speech representations) and **AudioCLIP** (audio aligned with images and text) reflects this trend. Both aim to learn general-purpose audio representations that transfer across tasks. When DeepMind’s generalized model outperforms specialized models, it supports the view that broadly learned representations are more powerful, more robust, and capable of **out-of-distribution generalization** (performing well on data that differs from the training distribution, like whale songs, after being trained mostly on birds). This is the holy grail for creating truly scalable AI systems.

Context 2: Revolutionizing Ecological Monitoring

For conservation science, this finding is nothing short of revolutionary. Monitoring biodiversity often relies on experts manually listening to countless hours of field recordings—a bottleneck of time and expertise. Furthermore, collecting high-quality, labeled data for rare or deep-sea species is incredibly difficult.

If a general-purpose acoustic model can be established—perhaps one trained broadly on terrestrial and avian sounds—it promises a massive reduction in the data labeling required for new ecological surveys. Researchers could deploy acoustic sensors in a new rainforest or a remote ocean trench, and the existing model might immediately begin detecting relevant species with high accuracy. This supports the push for large-scale **AI bioacoustics** deployment, helping track endangered species and assess the health of global ecosystems.

Models that need less task-specific labeled data are critical for deployments in remote areas and for initiatives like global biodiversity tracking. Robust generalization means faster deployment and lower operational costs for monitoring, which directly aids conservation work worldwide.

Context 3: The Physics and Biology of Sound

Why did the bird data work so well? The underlying explanation loops back to **evolutionary constraints**. Animals, whether birds or whales, have evolved to communicate within physical limitations defined by their medium (air vs. water) and their physiology. This results in shared mathematical patterns in their sounds. For example, many animals use rapid frequency shifts to signal urgency or territoriality. If an AI learns to identify that structure in a bird’s trill, it can recognize the same underlying structural logic in a whale’s call, even if the absolute frequencies are drastically different.
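The point about structure surviving a change in absolute frequency can be illustrated with a small sketch. It assumes two synthetic upsweeps (2000 to 4000 Hz as a "bird-like" contour, 100 to 200 Hz as a "whale-like" one); these numbers are invented for illustration and are not measurements of real animals. The contours are compared on a log-frequency axis after removing their mean and scale, which is one simple way to compare shape rather than pitch.

```python
import math

def upsweep(start_hz, end_hz, steps=50):
    """Exponential frequency sweep: a synthetic pitch contour, not real data."""
    ratio = end_hz / start_hz
    return [start_hz * ratio ** (i / (steps - 1)) for i in range(steps)]

def normalized_contour(freqs_hz):
    """Log-frequency contour shifted to zero mean and scaled to unit variance."""
    logs = [math.log2(f) for f in freqs_hz]
    mean = sum(logs) / len(logs)
    centered = [v - mean for v in logs]
    scale = math.sqrt(sum(v * v for v in centered) / len(centered))
    return [v / scale for v in centered]

def contour_similarity(a_hz, b_hz):
    """Pearson correlation between two equal-length normalized contours."""
    a, b = normalized_contour(a_hz), normalized_contour(b_hz)
    return sum(x * y for x, y in zip(a, b)) / len(a)

bird_like = upsweep(2000.0, 4000.0)    # assumed, illustrative values
whale_like = upsweep(100.0, 200.0)     # assumed, illustrative values
downsweep = list(reversed(whale_like))

print("upsweep vs upsweep:", contour_similarity(bird_like, whale_like))
print("upsweep vs downsweep:", contour_similarity(bird_like, downsweep))
```

Both upsweeps double in frequency over the same duration, so their normalized contours coincide even though one sits more than four octaves above the other, while the reversed contour correlates negatively. A learned model would not use this exact normalization, but it shows how a shape-based feature can ignore absolute pitch.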

This intersects with the field of **soundscape ecology**, which studies how organisms interact acoustically with their environment. The success of the bird-trained model suggests that AI is effectively reverse-engineering the fundamental laws of natural acoustics before learning the specifics of any single species. This hints at a future where we can develop AI that understands the physics of natural signals.

Context 4: The Industrial Leap to Domain Adaptation

While the context is ecological, the technological implications resonate across every industry reliant on machine vision or sensor analysis. Businesses constantly battle **domain shift**—when an AI trained perfectly in a lab or on one production line fails when moved to another.

Consider manufacturing quality control. A model trained to spot defects on one brand of microchip might fail when presented with a slightly different substrate or a machine vibrating at a new frequency. DeepMind’s result showcases an extreme case of cross-domain transfer, the problem that **Domain Adaptation** techniques are designed to address: the model carried what it learned from one acoustic world into another. This supports the technical path toward robust industrial AI systems that are less susceptible to minor environmental or sensor changes.

If AI can manage the massive leap from bird to whale, it should be significantly better at handling the minor shifts encountered when moving from Factory A to Factory B. That would sharply reduce the need for constant retraining and data collection, one of the biggest hurdles in scaling AI deployment.
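The Factory A to Factory B problem can be made concrete with a toy measurement of the generalization gap. Everything here is hypothetical: the sensor readings, the fixed threshold of 5.0, and the assumption that Factory B's sensor reads 2.0 units higher. The sketch only shows how in-domain and out-of-domain accuracy might be reported side by side.

```python
def accuracy(predict_fn, examples):
    """Fraction of (input, label) pairs that predict_fn gets right."""
    correct = sum(1 for x, y in examples if predict_fn(x) == y)
    return correct / len(examples)

def generalization_report(predict_fn, in_domain, out_of_domain):
    """Compare accuracy on the training domain with accuracy on a new one."""
    in_acc = accuracy(predict_fn, in_domain)
    out_acc = accuracy(predict_fn, out_of_domain)
    return {"in_domain": in_acc, "out_of_domain": out_acc, "gap": in_acc - out_acc}

def threshold_model(reading):
    """Brittle rule tuned on Factory A only (hypothetical threshold)."""
    return "defect" if reading > 5.0 else "ok"

# Hypothetical sensor readings with ground-truth labels.
factory_a = [(2.0, "ok"), (3.5, "ok"), (6.0, "defect"), (8.0, "defect")]
# Assumed shift: Factory B's sensor reads 2.0 units higher for the same parts.
factory_b = [(reading + 2.0, label) for reading, label in factory_a]

report = generalization_report(threshold_model, factory_a, factory_b)
print(report)
```

The fixed threshold is perfect on Factory A but mislabels a good part from Factory B whose shifted reading crosses 5.0. A model with more transferable features would aim to keep the `gap` value close to zero without retraining.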

The Future Landscape: Beyond Labeled Data

This development shifts the focus of AI development away from simply accumulating more labels and towards building smarter, more generalizable architectures.

For AI Researchers: Focus on Representation

The clear mandate for researchers is to continue prioritizing **representation learning**. The goal is no longer just accuracy on a known test set, but the creation of internal knowledge structures so rich that they capture the underlying reality (the "physics" of sound, in this case) rather than just the surface data.

We must explore how these generalized audio representations connect to other modalities, like vision (e.g., training a model on bird pictures and sound, then applying it to fish movement and sound). The possibility of a truly multimodal, general sensory foundation model is now much closer.

For Businesses: Rethinking Data Strategy

Businesses must shift their mindset regarding data. Instead of viewing data labeling as an ongoing operational cost required for every new deployment, they should invest in **pre-training** foundational models on massive, generalized, internally collected datasets (even if they are noisy or unlabeled). This pre-trained model becomes an asset that can be quickly "fine-tuned" for specific, low-data tasks across the organization.
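As a rough sketch of the "pre-train once, adapt cheaply" pattern, the example below keeps an encoder frozen and fits only a tiny classifier on six labeled clips. The encoder `frozen_embed` is a hypothetical stand-in (two hand-written features), not a real pre-trained model, and the adaptation step is a nearest-centroid classifier rather than true fine-tuning of network weights. The tones, labels, and frequencies are all synthetic assumptions.

```python
import math
import random

def frozen_embed(waveform):
    """Hypothetical stand-in for a pre-trained encoder; it is never updated.
    Returns [zero-crossing rate, mean absolute amplitude]."""
    crossings = sum(1 for a, b in zip(waveform, waveform[1:]) if a * b < 0)
    zcr = crossings / (len(waveform) - 1)
    energy = sum(abs(v) for v in waveform) / len(waveform)
    return [zcr, energy]

def fit_centroids(labeled_clips):
    """Low-data adaptation: average the frozen embeddings for each label."""
    sums, counts = {}, {}
    for waveform, label in labeled_clips:
        emb = frozen_embed(waveform)
        acc = sums.setdefault(label, [0.0] * len(emb))
        for i, v in enumerate(emb):
            acc[i] += v
        counts[label] = counts.get(label, 0) + 1
    return {lab: [v / counts[lab] for v in acc] for lab, acc in sums.items()}

def predict(centroids, waveform):
    """Assign the label whose centroid is nearest in embedding space."""
    emb = frozen_embed(waveform)
    return min(centroids, key=lambda lab: math.dist(emb, centroids[lab]))

def tone(freq_hz, rng, sample_rate=8000, n=800):
    """Synthetic noisy tone with a random phase."""
    phase = rng.uniform(0, 2 * math.pi)
    return [math.sin(2 * math.pi * freq_hz * t / sample_rate + phase)
            + rng.gauss(0, 0.02) for t in range(n)]

rng = random.Random(0)
# Only three labeled clips per class are used for adaptation.
few_shot = [(tone(f, rng), "low") for f in (180.0, 200.0, 220.0)]
few_shot += [(tone(f, rng), "high") for f in (1400.0, 1500.0, 1600.0)]
centroids = fit_centroids(few_shot)

print(predict(centroids, tone(250.0, rng)))
print(predict(centroids, tone(1300.0, rng)))
```

The design choice worth noting is the split of responsibilities: the expensive, general component is built once and reused, while each new task only needs a handful of labels and a classifier that takes moments to fit.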

This can cut the time-to-deployment for new machine learning projects from months to weeks, and in some cases days. If your core AI architecture is designed for transferability, innovation accelerates dramatically.

For Society: Accelerated Scientific Discovery

The societal impact is perhaps the most exciting. By making AI tools vastly more accessible for scientific exploration—requiring less specialized data collection and domain expertise—we democratize discovery. Ecologists can now deploy sophisticated analytical tools immediately. Researchers studying neurology or physics can leverage models that have already mastered complex signal analysis in unrelated fields.

This trend suggests a future where generalized AI acts as a scientific co-pilot, finding hidden patterns in complex sensory data that humans or domain-specific AIs would miss entirely.

Actionable Insights: Preparing for the Generalized Future

To capitalize on this emerging capability, stakeholders should take proactive steps:

- Audit Your Data for Structure, Not Just Labels: Look critically at your existing large datasets. Are they simply labeled, or do they contain rich, underlying structural information? Focus efforts on creating high-quality, generalized pre-training sets, not just maximizing annotations for immediate tasks.

- Prioritize Self-Supervised Frameworks: Invest in engineering teams skilled in SSL and foundation model methodologies. The next competitive advantage won't come from having 10% more labeled data, but from having a 10x better representation learner.

- Embrace Cross-Domain Experimentation: Encourage AI teams to test models in completely unrelated fields. If you develop an AI for detecting microscopic material fatigue, test its baseline performance on something far from its training domain, like speech recognition. This pressure-testing reveals the true limits of generalization.

- Partner with Foundational Research Labs: For industries with high barriers to data collection (like deep-sea exploration or drug discovery), collaborate with leading research groups working on large-scale foundation models. Applying these massive, generalized models to niche scientific problems is where the immediate ROI lies.

The discovery that a model trained mostly on bird songs can classify whale calls is a major step forward. It implies that true artificial intelligence might not be about mastering individual tasks perfectly, but about mastering the fundamental *rules* of perception and representation. We are moving out of the age of narrow, specialized AI and into the era of the Universal Foundation Model, where knowledge flows across the landscape of data.