The Great Leaderboard Shakeup: Why Fragile LLM Benchmarks Threaten AI Progress

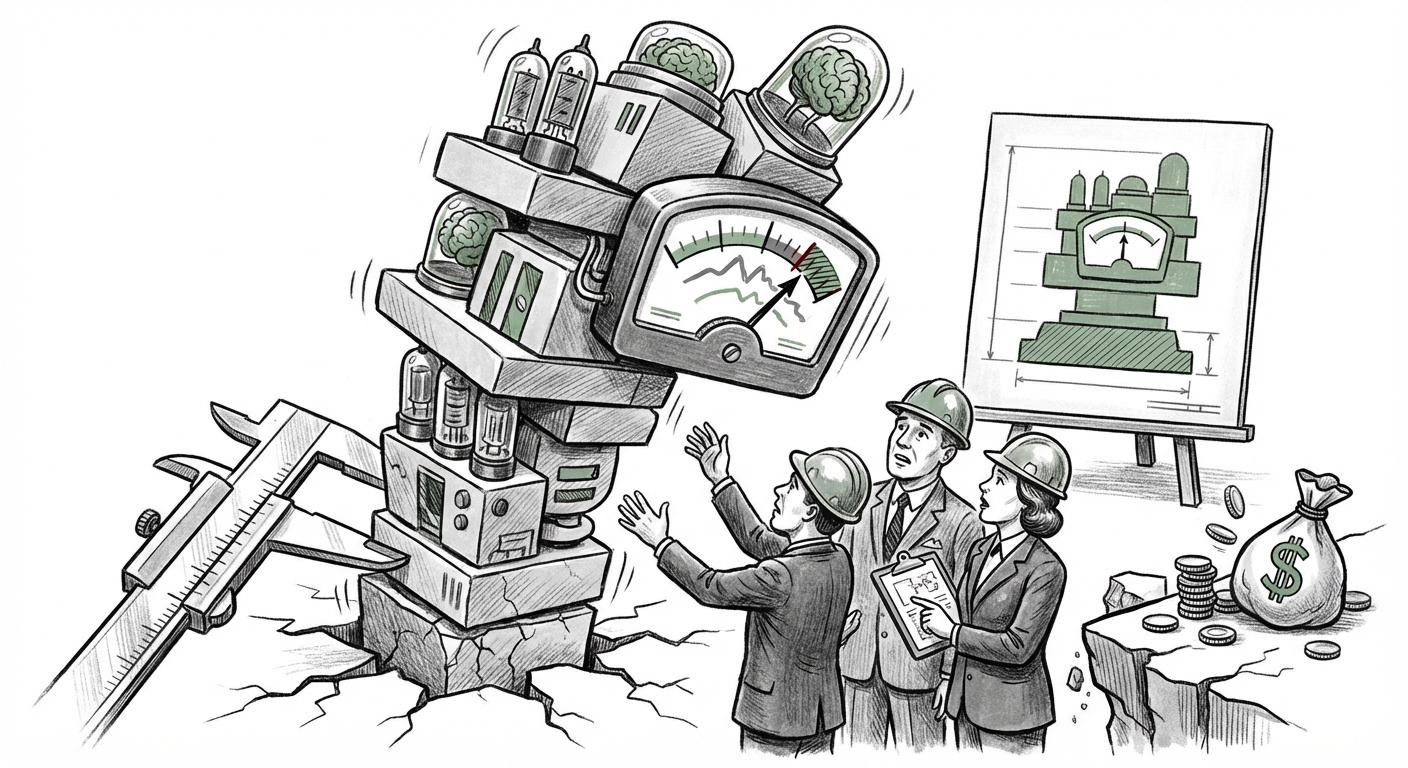

The race to build the world’s best Large Language Model (LLM) is often depicted as a clear progression: Model A beats Model B on Leaderboard X, securing bragging rights and investor dollars. But what happens when the very yardstick used to measure success—the benchmark leaderboard—is revealed to be fundamentally unstable? A recent study has sounded the alarm, indicating that popular LLM ranking platforms are statistically fragile. This isn't just an academic quibble; it strikes at the very heart of how the AI industry measures progress, allocates resources, and ultimately, builds the future.

For developers, businesses betting on AI services, and the public trying to understand which tool is truly superior, this fragility undermines confidence. If a small change in input or testing conditions can wildly swing a model's ranking, how much weight should we truly place on these public contests? This analysis dives into the roots of this instability, explores the necessary shift in evaluation paradigms, and unpacks the wide-ranging implications for the technological landscape.

The Cracks in the Foundation: Understanding Statistical Fragility

Imagine two evenly matched race cars. In one race, the wind is slightly in Car A’s favor, and Car A wins by a nose. In the very next race, the wind shifts slightly toward Car B, and Car B wins by a nose. If tiny external variations like these dictate the "winner," the measurement is fragile. This appears to be happening with many public LLM leaderboards, which often rely on crowdsourced human preference data (such as Elo ratings derived from pairwise battles).

The core issue is statistical significance. When a model jumps from 10th place to 3rd after minor updates or changes in testing conditions, it suggests that the models occupying those positions are separated by less than the inherent noise in the testing system.
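
To make "the gap is smaller than the noise" concrete, here is a minimal Python sketch under a deliberately idealized assumption: every pairwise battle is an independent trial that the stronger model wins with the same fixed probability. Real arena votes are neither independent nor identically distributed, and the function name `prob_stronger_model_trails` is hypothetical. It computes the exact binomial probability that the truly stronger model ends up strictly behind after a given number of battles.

```python
import math


def prob_stronger_model_trails(p_win: float, n_battles: int) -> float:
    """Probability that the truly stronger model wins fewer than half its battles.

    Idealized model (an assumption): each battle is independent and the
    stronger model wins it with fixed probability p_win.
    """
    log_p, log_q = math.log(p_win), math.log(1.0 - p_win)
    total = 0.0
    # Sum binomial probabilities for every win count k with 2k < n_battles.
    # Work in log space so large n_battles does not underflow.
    for k in range((n_battles + 1) // 2):
        log_pmf = (
            math.lgamma(n_battles + 1)
            - math.lgamma(k + 1)
            - math.lgamma(n_battles - k + 1)
            + k * log_p
            + (n_battles - k) * log_q
        )
        total += math.exp(log_pmf)
    return total


# A 51% true win rate is a razor-thin edge: how often does sampling noise
# put the weaker model in front after n head-to-head battles?
for n in (100, 1_000, 10_000):
    print(n, round(prob_stronger_model_trails(0.51, n), 3))
```

Under this idealization, a 51% true win rate leaves the weaker model ahead in well over a third of 100-battle samples, and that probability falls to a few percent only at around ten thousand battles.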

The Noise in the Crowd: Statistical Validity of Human Judgment

Many of the most popular, dynamic leaderboards rely heavily on human preference data: people compare two outputs side by side and choose the "better" one. While this captures real-world usefulness, it introduces subjectivity and statistical variance. Research on the statistical significance of human preference data in LLM testing suggests that this noise can easily dwarf the actual performance difference between two closely matched models.

For the non-expert, think of it this way: when thousands of people judge two closely matched LLMs, a small group that consistently favors one model can shift the ratings enough to reorder them, even if both models perform well overall. The ranking becomes susceptible to demographic skew among voters or simply to random chance, which makes the published order less reliable than it appears.
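
Demographic skew can be illustrated with simple arithmetic. The sketch below assumes two hypothetical voter segments with opposite preferences; the segment names, win rates, and vote shares are invented for illustration. Neither model changes between the two weeks, yet shifting who shows up to vote flips which model appears ahead.

```python
def pooled_win_rate(segment_win_rates: dict[str, float],
                    segment_shares: dict[str, float]) -> float:
    """Overall rate at which model A beats model B across voter segments.

    segment_win_rates[s]: A's win rate among voters in segment s.
    segment_shares[s]: fraction of all votes that segment s contributes.
    """
    assert abs(sum(segment_shares.values()) - 1.0) < 1e-9, "shares must sum to 1"
    return sum(segment_win_rates[s] * segment_shares[s] for s in segment_shares)


# Hypothetical: segment_x prefers model A, segment_y prefers model B.
win_rates = {"segment_x": 0.60, "segment_y": 0.40}

week_1 = pooled_win_rate(win_rates, {"segment_x": 0.55, "segment_y": 0.45})
week_2 = pooled_win_rate(win_rates, {"segment_x": 0.45, "segment_y": 0.55})
print(f"week 1: A wins {week_1:.0%}")  # -> week 1: A wins 51%
print(f"week 2: A wins {week_2:.0%}")  # -> week 2: A wins 49%
```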

The Temptation of Gaming: Adversarial Attacks on Benchmarks

Fragility isn't always accidental. When rankings become the primary signal of success, there is immense pressure to optimize for the leaderboard itself, a practice often described as "gaming" or overfitting the benchmark. This is where concerns about LLM leaderboard bias become relevant.

If a model's performance is measured by how well it handles 100 specific types of prompts on a public platform, developers might, inadvertently or deliberately, tune their models to excel at those 100 prompts rather than improving general intelligence. Furthermore, as research on prompt injection and adversarial robustness shows ([arXiv:2308.08301](https://arxiv.org/abs/2308.08301)), model outputs can be steered through carefully crafted inputs. If the testing methodology isn't robust against these manipulations, the published scores are, at best, a snapshot of temporary exploitability, not enduring capability.
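
One common way to look for this kind of tuning is to keep a private, held-out prompt set of comparable difficulty and compare scores on it with scores on the public prompts. A minimal sketch follows; the function names and the per-prompt outcomes are hypothetical, and a large gap is a warning sign rather than proof.

```python
def accuracy(results: list[bool]) -> float:
    """Fraction of prompts judged correct."""
    return sum(results) / len(results)


def overfitting_gap(public_results: list[bool], heldout_results: list[bool]) -> float:
    """Public-set accuracy minus held-out-set accuracy.

    A large positive gap suggests tuning to the public prompts. It is not
    proof: the sets may differ in difficulty, and small sets are noisy.
    """
    return accuracy(public_results) - accuracy(heldout_results)


# Hypothetical outcomes (True = judged correct) on 100 public prompts and
# 100 freshly written, never-published prompts of similar difficulty.
public = [True] * 92 + [False] * 8
heldout = [True] * 71 + [False] * 29

print(f"public {accuracy(public):.0%}, held-out {accuracy(heldout):.0%}, "
      f"gap {overfitting_gap(public, heldout):+.0%}")
```

In this sketch the held-out set only helps if it stays private and is genuinely comparable in difficulty; with sets this small, the gap itself should also be checked for statistical significance.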

The Market Impact: Who Wins and Who Loses in an Uncertain Ranking Landscape?

The instability of these public measurements has significant, tangible effects on the AI ecosystem, impacting everything from R&D budgets to developer adoption strategies.

The Investment Conundrum

For venture capitalists and corporate strategists, leaderboards serve as essential, low-cost due diligence. When a new LLM startup emerges, the first question is often, "Where does it rank?" If the rankings are unstable, investors face a crisis of confidence. They cannot easily compare proprietary models against each other based on public data. This uncertainty may lead to two outcomes:

- Increased Secrecy: Companies may withdraw from public leaderboards entirely, relying only on closed, internal metrics that the public cannot audit. This reduces transparency across the industry.

- Over-Indexing on Hype: If hard data is unreliable, funding decisions might swing back toward marketing budgets and perceived momentum rather than validated technical capability.

The Open Source vs. Proprietary Battleground

Fragile rankings profoundly affect the dynamics between open-source and closed, proprietary models. Open-source communities often rely heavily on public leaderboards to prove their relevance against models from giants like OpenAI or Google. Reports on how fragile rankings affect open-source adoption suggest that instability can be a double-edged sword.

If a highly funded proprietary model suddenly plummets due to a statistical anomaly, an open-source contender might briefly surge, gaining crucial visibility and developer adoption. However, if the *entire system* is deemed untrustworthy, developers might stop relying on any public comparison, leading to fragmentation where everyone runs local, private evaluations.

The Way Forward: Demanding Statistical Rigor and New Metrics

The current predicament is not a dead end; it is a loud call to action for methodological evolution. The industry must move beyond simple, noisy comparisons toward evaluation frameworks built on scientific validity.

Searching Beyond Elo: The Need for New Paradigms

The reliance on Elo ratings derived from pairwise human comparisons is showing its limits. The solution requires exploring next-generation evaluation metrics that are less susceptible to random variance and gaming.
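
One concrete limit of classic online Elo is order dependence: ratings are updated battle by battle, so the same set of results processed in a different order can produce a different leader. The sketch below uses the standard chess-style Elo formula with an assumed K-factor of 32 and starting rating of 1000; the function names are illustrative. Fitting a Bradley-Terry model to all battles at once is a common alternative that removes this particular order dependence, though not sampling noise.

```python
def expected_score(r_a: float, r_b: float) -> float:
    """Standard Elo expected score of player A against player B."""
    return 1.0 / (1.0 + 10 ** ((r_b - r_a) / 400))


def sequential_elo(battles: list[tuple[str, str]], k: float = 32.0,
                   start: float = 1000.0) -> dict[str, float]:
    """Online Elo: process (winner, loser) battles one at a time, in order."""
    ratings: dict[str, float] = {}
    for winner, loser in battles:
        r_w = ratings.setdefault(winner, start)
        r_l = ratings.setdefault(loser, start)
        # The winner gains what the loser gives up, scaled by how surprising the win was.
        delta = k * (1.0 - expected_score(r_w, r_l))
        ratings[winner] = r_w + delta
        ratings[loser] = r_l - delta
    return ratings


# Exactly the same two results (one win each), fed in opposite orders.
battles = [("model_a", "model_b"), ("model_b", "model_a")]
forward = sequential_elo(battles)
backward = sequential_elo(list(reversed(battles)))

print({m: round(r, 1) for m, r in forward.items()})   # model_a ends below model_b
print({m: round(r, 1) for m, r in backward.items()})  # model_a ends above model_b
```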

What might these alternatives look like? They likely involve a multi-pronged approach:

- Focus on Robustness: Testing models not just on easy paths but on stress-test scenarios designed to provoke failure (adversarial testing).

- Deeper Contextual Evaluation: Moving away from single-question assessments to complex, multi-step reasoning tasks that require sustained coherence.

- Scientific Grounding: Requiring larger, more diverse, and statistically controlled datasets for human evaluation, coupled with rigorous statistical significance testing to ensure a measured difference is not just noise.
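
The significance testing in the last point can be as simple as a paired bootstrap over prompts. A minimal sketch follows, assuming both models were scored on the same prompts; the function name, the 95% level, the resample count, and the simulated scores are illustrative choices rather than a standard prescribed here.

```python
import random


def paired_bootstrap_ci(scores_a: list[float], scores_b: list[float],
                        n_resamples: int = 10_000, alpha: float = 0.05,
                        seed: int = 0) -> tuple[float, float]:
    """Percentile bootstrap CI for the mean per-prompt difference (A - B).

    scores_a[i] and scores_b[i] must be the two models' scores on the same
    prompt i, so prompts are resampled as pairs.
    """
    assert len(scores_a) == len(scores_b) and scores_a, "need paired scores"
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    n = len(diffs)
    rng = random.Random(seed)
    # Resample prompts with replacement and record each resample's mean difference.
    means = sorted(sum(rng.choices(diffs, k=n)) / n for _ in range(n_resamples))
    lo = means[int((alpha / 2) * n_resamples)]
    hi = means[int((1 - alpha / 2) * n_resamples) - 1]
    return lo, hi


# Hypothetical per-prompt correctness (1 = correct) for two models on 200 prompts.
data_rng = random.Random(42)
model_a = [1 if data_rng.random() < 0.72 else 0 for _ in range(200)]
model_b = [1 if data_rng.random() < 0.70 else 0 for _ in range(200)]

lo, hi = paired_bootstrap_ci(model_a, model_b)
verdict = "could be noise" if lo <= 0 <= hi else "unlikely to be noise alone"
print(f"95% CI for A - B: [{lo:+.3f}, {hi:+.3f}] -> {verdict}")
```

Resampling prompts as pairs, rather than each model's scores independently, keeps shared per-prompt difficulty from inflating the apparent noise.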

Discussions of moving beyond Elo ratings for LLMs suggest that the future of evaluation likely involves benchmarks that are harder to game because they measure fundamental, emergent capabilities (like deep knowledge retrieval or complex planning) rather than surface-level output quality.

Practical Implications and Actionable Insights

For everyone engaging with LLMs—from coders to CEOs—the message is clear: Do not trust a single, static leaderboard.

For AI Developers and Researchers:

Your internal testing must be more rigorous than your public claims. Invest heavily in creating a diverse and constantly updated private evaluation suite that tests for robustness, safety, and generalization, rather than just aiming for the current leaderboard peak. If you participate in public arenas, treat the results as directional guidance, not definitive proof of superiority.

For Business Leaders and Buyers:

When evaluating a vendor’s model claims, ask pointed questions about their evaluation methodology, such as:

- "What is the margin of error on your stated performance improvement?"

- "How often do you rotate your benchmark data to prevent saturation?"

- "How does this performance translate to metrics critical to *our specific business use case* (e.g., latency, factuality in our domain)?"
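
The first question has a quick back-of-the-envelope answer. This sketch uses the normal approximation for the difference of two independent proportions at roughly 95% confidence; the vendor numbers are hypothetical, and when both models are scored on the same test items a paired method (such as a paired bootstrap) is more appropriate.

```python
import math


def diff_margin_of_error(acc_new: float, n_new: int,
                         acc_old: float, n_old: int, z: float = 1.96) -> float:
    """Approximate margin of error for (acc_new - acc_old).

    Normal approximation for two independent proportions; z = 1.96 gives
    roughly 95% confidence.
    """
    se = math.sqrt(acc_new * (1 - acc_new) / n_new + acc_old * (1 - acc_old) / n_old)
    return z * se


# Hypothetical vendor claim: 82% vs 80% accuracy, each measured on 500 test items.
gain = 0.82 - 0.80
moe = diff_margin_of_error(0.82, 500, 0.80, 500)
print(f"gain {gain:+.1%} ± {moe:.1%}")  # -> gain +2.0% ± 4.9%
```

Here a 2-point "improvement" carries a margin of roughly ±4.9 points, so the interval comfortably includes zero.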

Relying solely on a vendor's claim of being "Rank #1" is now a significant operational risk.

For the Broader Ecosystem:

We need greater industry collaboration on standardized, open, and scientifically sound evaluation protocols. The current leaderboard system rewards speed over stability. We must reward verifiable rigor. If we allow the perception of progress to be dictated by statistically fragile measurements, we risk overinvesting in slightly better-tuned models instead of funding the genuine breakthroughs in AI architecture and safety that we truly need.

The realization that LLM leaderboards are fragile is a necessary moment of reckoning. It forces the industry to mature its measurement tools just as the technology itself is advancing rapidly. The next era of AI success will not be defined by who wins the next subjective battle, but by who builds the most reliable, transparent, and scientifically validated testing environments.