The Cognitive Leap: Why Emoji-Driven AI Memory Compression is Key to AGI

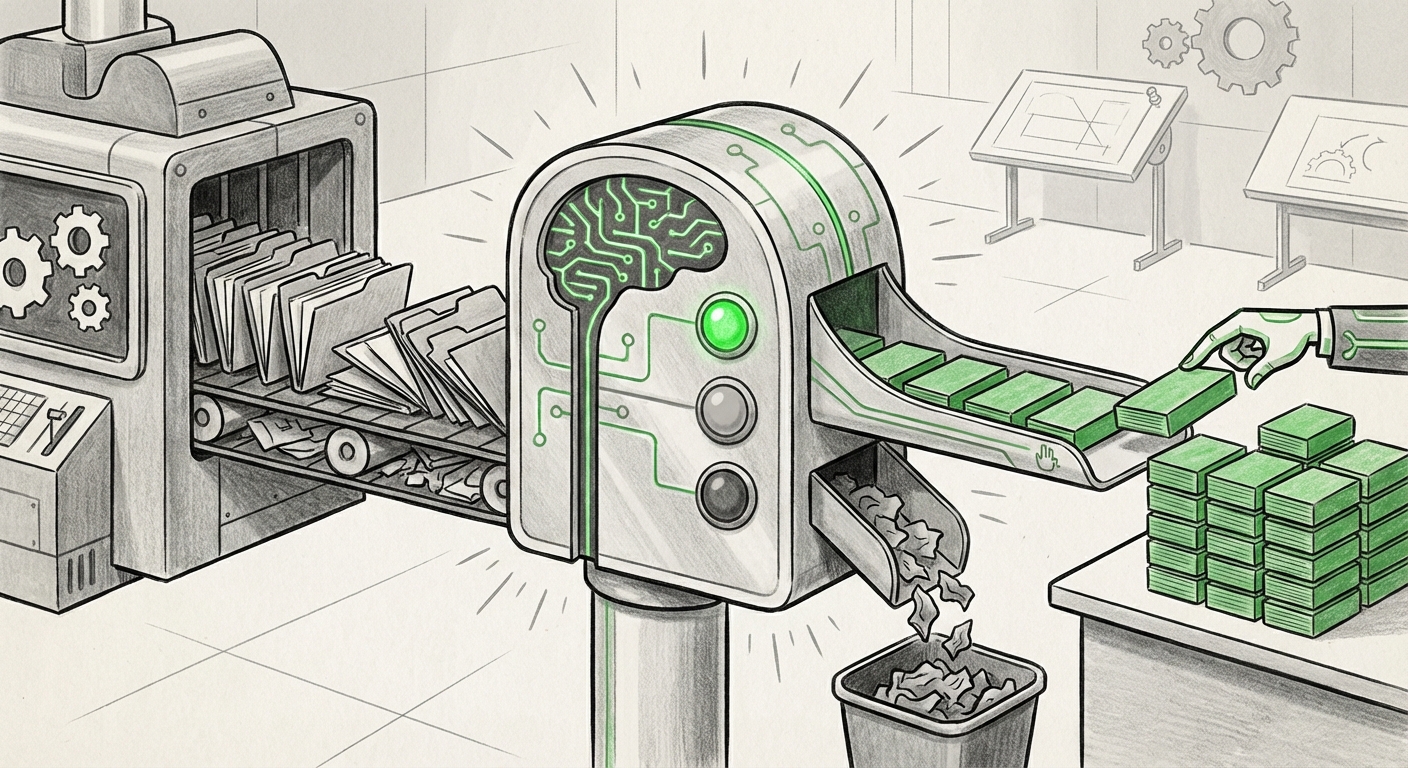

For years, the race in Large Language Models (LLMs) was about scale: more parameters, more data, and, most recently, bigger context windows. We have seen models capable of processing entire novels in a single prompt. Yet, this brute-force approach to memory is fundamentally inefficient and expensive. True intelligence isn't just about *how much* you can hold in your head at once; it’s about *what* you choose to remember and *how* you prioritize it.

The emergence of open-source frameworks like Mastra signals a crucial pivot. By modeling AI memory compression after human cognition—using simple cues like traffic light emojis to tag conversational importance—Mastra isn't just nudging the performance needle; it’s redefining the architecture of AI retention. This move from raw data capacity to intelligent, prioritized memory encoding is perhaps one of the most significant steps toward building robust, practical Artificial General Intelligence (AGI).

The Context Window Ceiling: Why Size Isn't Everything

Imagine trying to do your job by keeping every email, meeting transcript, and chat log from the last five years open on your screen simultaneously. It would be overwhelming, paralyzing, and costly. This is the current bottleneck for massive context windows in LLMs. While scaling context windows (e.g., from 32k to 200k to 1 million tokens) is impressive engineering, it runs into diminishing returns.

Technical analysis of these massive contexts often reveals the "lost in the middle" problem: LLMs tend to attend most reliably to the beginning and end of a very long input and often miss crucial details embedded deep in the middle. Furthermore, the computational cost of standard self-attention grows quadratically with context length, making long-context usage prohibitively expensive for many real-time applications.
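
To make the cost argument concrete, here is a rough back-of-the-envelope sketch. It assumes plain dense self-attention, where the score matrix grows with the square of the token count; production systems often use optimizations that change the picture, so treat the numbers as an illustration rather than a benchmark. The function name and the 32k baseline are assumptions chosen for this example.

```python
def relative_attention_cost(context_tokens: int, baseline_tokens: int = 32_000) -> float:
    """Attention cost relative to a baseline context, assuming O(n^2) scaling."""
    return (context_tokens / baseline_tokens) ** 2


# Compare the context sizes mentioned above against a 32k baseline.
for n in (32_000, 200_000, 1_000_000):
    print(f"{n:>9,} tokens -> {relative_attention_cost(n):,.1f}x baseline")
```

Under this assumption, moving from 32k to 200k tokens costs roughly 39 times more attention compute, and moving to 1 million tokens costs roughly 977 times more, for a context that is only about 31 times longer.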

Both problems point to the same inefficiency. We need systems that can condense vast experiences into meaningful, retrievable summaries. This is where Mastra’s approach shines. Instead of forcing the LLM to re-read a million tokens to find a single forgotten fact, Mastra compresses the conversation history into dense, prioritized observations. For each one, it asks: "Was that fact critical (Green light) or just conversational filler (Red light)?"
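
The idea of keeping prioritized observations instead of raw history can be sketched in a few lines. This is a minimal illustration, not Mastra's actual API: the `Observation` class, the `compress` function, the word-count token estimate, and the greedy selection rule are all assumptions made for this example, and the emoji priorities follow the mapping described in this article.

```python
from dataclasses import dataclass

# Priority order as this article describes it (assumption, not Mastra's API).
# Lower rank means the observation is kept first.
PRIORITY_RANK = {"🟢": 0, "🟡": 1, "🔴": 2}


@dataclass(frozen=True)
class Observation:
    tag: str   # one of the traffic-light emojis above
    text: str


def approx_tokens(text: str) -> int:
    """Crude token estimate: whitespace-separated words. A real system would use a tokenizer."""
    return len(text.split())


def compress(observations: list[Observation], budget: int) -> list[Observation]:
    """Greedily keep the highest-priority observations that fit the budget.

    The kept observations are returned in their original order.
    """
    by_priority = sorted(
        enumerate(observations),
        key=lambda pair: (PRIORITY_RANK[pair[1].tag], pair[0]),
    )
    kept_indices, used = [], 0
    for index, obs in by_priority:
        cost = approx_tokens(obs.text)
        if used + cost <= budget:
            kept_indices.append(index)
            used += cost
    return [observations[i] for i in sorted(kept_indices)]


history = [
    Observation("🔴", "User said good morning"),
    Observation("🟢", "Client budget limit is 50k"),
    Observation("🟡", "Client prefers email over calls"),
    Observation("🔴", "Client wore a blue shirt"),
]

for obs in compress(history, budget=10):
    print(obs.tag, obs.text)
```

With a budget of 10 estimated tokens, only the Green and Yellow observations survive; the two Red ones are dropped first because they rank lowest.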

For engineers and CTOs, this means shifting focus from purchasing massive GPU memory for context buffering to investing in better **memory encoding algorithms**. The future isn't about fitting more data into the prompt; it’s about distilling that data into knowledge worth keeping.

Beyond RAG: Internalizing Experience Through Compression

Currently, the dominant method for giving LLMs long-term memory is Retrieval-Augmented Generation (RAG). RAG works by storing information externally (for example, in a database) and fetching relevant snippets when the model needs them. It’s an excellent tool, but it treats memory as an external search engine that the model must query, rather than as part of the agent's own state.

Mastra, however, points to an internalized, self-aware form of memory management. Its top score on benchmarks like LongMemEval indicates that its compression technique lets the agent retain complex state information better than many sophisticated external retrieval systems. This moves the agent closer to having a true working memory.

The Power of the Emoji Cue

The use of traffic light emojis (Green for critical, Yellow for contextual, Red for dismissible) is brilliantly simple. It’s a form of meta-tagging that doesn't require complex external vector databases or intricate embedding schemas. It leverages the LLM’s existing ability to understand symbolic meaning and apply human-interpretable prioritization rules. This simplicity is vital for adoption and rapid development within the open-source community.
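
Because the tags are plain text, a memory log of this kind can be read by both the model and ordinary tooling. The sketch below shows one hypothetical way to parse such a log; the one-observation-per-line format, the regular expression, and the function names are assumptions for illustration, and the emoji meanings follow the mapping given in this article rather than any documented Mastra format.

```python
import re

# Emoji-to-priority mapping as described in this article (assumption).
PRIORITY = {"🟢": "critical", "🟡": "contextual", "🔴": "dismissible"}

# Hypothetical log format: one observation per line, emoji tag first.
LINE_RE = re.compile(r"^\s*(🟢|🟡|🔴)\s+(.+?)\s*$")


def parse_memory_log(log: str) -> list[tuple[str, str]]:
    """Return (priority, text) pairs for every tagged line; untagged lines are skipped."""
    entries = []
    for line in log.splitlines():
        match = LINE_RE.match(line)
        if match:
            entries.append((PRIORITY[match.group(1)], match.group(2)))
    return entries


def by_priority(entries: list[tuple[str, str]], priority: str) -> list[str]:
    """Select the text of all entries with the given priority label."""
    return [text for label, text in entries if label == priority]


log = """
🟢 Client budget limit is 50k
🟡 Client prefers email over calls
🔴 Client wore a blue shirt
this line has no tag and is ignored
"""

entries = parse_memory_log(log)
print(by_priority(entries, "critical"))
```

The same parsed entries can be filtered by any priority label, which is what makes a log like this easy to inspect by hand or in a script.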

When we look at related research on Memory-Augmented Neural Networks (MANNs) or Compressive Transformers, the goal is similar: encode the past state efficiently. Mastra achieves this through a high-level, semantic tagging system that bypasses some of the complexity of purely mathematical compression, making the compressed memory more immediately useful to the model for decision-making.

Echoes of Biology: Aligning AI Memory with Cognition

The most profound aspect of this trend is the deliberate alignment with biological cognition. Human memory is not a perfect recording device; it is selective, biased, and prioritizes relevance for survival and future action. Our brains consolidate important emotional or actionable experiences, letting incidental details fade.

To advance toward AGI, AI must move beyond passive information storage. It needs to learn *what* to forget. Neuroscience research on selective memory consolidation studies how biological systems filter information, and Mastra’s priority tagging system mirrors that kind of filtering. An agent interacting over weeks needs to know that the client’s stated budget limit (Green) is more important than the color of the shirt the client wore yesterday (Red).

This cognitive alignment has major implications for safety and reliability. An agent that understands the relative importance of its stored knowledge is less likely to make catastrophic errors based on low-priority, irrelevant historical data. It fosters contextual wisdom over mere data recall.

The Open Source Imperative and Future Frameworks

Mastra’s choice to be open source is as important as its technical novelty. The development of foundational AI capabilities—like memory management—cannot remain siloed within a few large corporations if we expect rapid, democratized progress toward AGI.

The trend suggests that the next battleground for AI frameworks will be in these specialized, high-efficiency components. While large proprietary models continue to expand their raw context, the open-source community is gaining ground on efficiency and specialization. We are seeing a maturation where developers look for specific, best-in-class modules (a memory library here, a planning module there) to snap together into complex agents. In the current landscape of open-source frameworks for LLM memory management, these modular components are on their way to becoming standardized tools.

If Mastra’s compression methodology becomes the standard way open-source agents manage history, it creates a powerful, verifiable standard for agentic behavior that proprietary systems may struggle to match due to transparency concerns.

Future Implications: Business, Ethics, and Agentic Systems

What does this focus on efficient, prioritized memory mean for the real world?

For Business: Sustainable Agent Deployment

Businesses currently deploying sophisticated AI agents for customer service, code generation, or complex data analysis face escalating infrastructure costs tied to long context. Mastra-like compression allows organizations to maintain long, meaningful interaction histories without incurring the massive compute bill of 1M+ token contexts. This makes highly capable, personalized AI accessible to smaller enterprises.

Actionable Insight: Companies should begin auditing their current LLM usage to identify "memory hogs": conversations or sessions that consume excessive context tokens for minimal informational gain. Adding a compression layer like Mastra's can then reduce costs while improving the agent's ability to recall core tasks.
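
One way such an audit could look in practice is sketched below. Everything here is a hypothetical starting point: the `SessionStats` fields, the tokens-per-observation metric, and the 10,000-token threshold are assumptions that a team would replace with figures from its own usage logs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SessionStats:
    session_id: str
    context_tokens: int       # total tokens sent as context during the session
    useful_observations: int  # facts judged worth keeping (by a reviewer or a compression pass)


def tokens_per_observation(stats: SessionStats) -> float:
    """Context tokens spent per retained fact; sessions with no retained facts count as one."""
    return stats.context_tokens / max(stats.useful_observations, 1)


def find_memory_hogs(sessions: list[SessionStats], threshold: float = 10_000.0) -> list[SessionStats]:
    """Return sessions above the threshold, worst offenders first."""
    hogs = [s for s in sessions if tokens_per_observation(s) > threshold]
    return sorted(hogs, key=tokens_per_observation, reverse=True)


sessions = [
    SessionStats("support-001", context_tokens=900_000, useful_observations=12),
    SessionStats("support-002", context_tokens=40_000, useful_observations=20),
    SessionStats("support-003", context_tokens=150_000, useful_observations=0),
]

for hog in find_memory_hogs(sessions):
    print(hog.session_id, round(tokens_per_observation(hog)))
```

Sessions that spend many context tokens per retained fact are the natural first candidates for a compression layer.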

For AI Systems: True Persistence and Personalization

The future AI agent will not just remember the last 10 minutes of conversation; it will remember the user’s long-term goals, preferences, and past failures. This persistence is the difference between a useful tool and a true collaborator. By assigning memory a priority rating, the AI learns to adapt its response strategy based on the user's history—something currently difficult to manage outside of slow, external database lookups.

For Ethics and Alignment: Interpretability

The use of human-understandable tags (emojis) enhances interpretability. If an AI agent makes a strange decision, engineers can look at its compressed memory log and see exactly what it flagged as 'Green' (critical) versus 'Red' (irrelevant). This visibility is crucial for debugging, auditing, and ensuring the AI's reasoning process remains aligned with human intent.

Conclusion: Smarter, Not Just Bigger

The breakthrough demonstrated by Mastra, building human-aligned concepts like prioritization into fundamental AI memory architecture, signals a maturity in the field. We are moving past the early, frantic phase of simply scaling up context size. The focus is now shifting to quality of retention over sheer quantity of input.

This cognitive leap, whether explicitly using emojis or other semantic tagging methods, is essential for building systems that can operate reliably over long periods, learn incrementally, and act with a contextually appropriate understanding of importance. As these compression techniques mature and become standardized in open-source stacks, they will form the hidden backbone of the next generation of powerful, persistent, and genuinely intelligent AI agents.