The Triad of AI Power: Funding Explosions, Research Leaps, and Global Competition Shaping Tomorrow

The Artificial Intelligence landscape is currently defined by momentum on three critical fronts at once: the flow of unprecedented capital, the deepening of fundamental scientific knowledge, and the hardening of geopolitical technological lines. Recent reports capturing these dynamics, from massive investment rounds like Anthropic’s and cutting-edge work from Google DeepMind to the steady emergence of powerful new Chinese models, paint a picture not just of technological advancement, but of a rapidly solidifying, high-stakes industry.

Understanding the future of AI requires looking beyond any single headline. It demands synthesizing these three forces to see the resulting impact on business, research, and global society.

The Fuel: Capital Influx and the Race for Frontier Models

The reported massive funding round for Anthropic serves as a potent signal: investors are betting heavily that the next generation of foundational Large Language Models (LLMs) will yield outsized returns. This influx of capital is not merely keeping the lights on; it is fueling multibillion-dollar compute clusters and enabling the training of increasingly large models.

The Investment Context: Valuations and the Competitive Moat

When a company like Anthropic secures a 'mega-round,' it signifies more than a simple cash injection: it solidifies a competitive moat against rivals. This level of funding allows developers to afford the scarce resources, namely the most advanced GPUs and top-tier research talent, necessary to stay at the absolute frontier. For financial analysts and tech executives, this means the barrier to entry for *creating* foundational models is rising dramatically, concentrating power among a few well-funded players.

For the rest of the industry, this signals that the most capable models will likely remain proprietary, leading to a dynamic where companies must either pay high API access fees or focus intensely on building superior applications *on top* of these commercial base models.

The Engine: DeepMind’s Scientific Leaps and Architectural Evolution

While money funds the scale, research drives capability. The mention of "major breakthroughs from DeepMind" is critical because these findings often represent the next paradigm shift in *how* AI learns and reasons. These are not just bigger models; they are often smarter architectures.

Deconstructing the Breakthroughs: Beyond Brute Force

Research articles dissecting these DeepMind advancements, perhaps focusing on improved long-context understanding, better symbolic reasoning, or novel multimodal integration, reveal where the technical industry is heading. If a breakthrough allows a model to solve complex mathematical proofs reliably, or to understand visual and textual data together, it fundamentally changes the types of tasks AI can handle in enterprise settings.

This progress directly impacts the practical future. For AI engineers, it means new toolkits and architectural patterns to adopt. For businesses, it means the "impossible" tasks—complex engineering design, advanced legal discovery, personalized scientific research assistance—are quickly moving into the realm of the achievable. The focus shifts from asking, "Can AI do this?" to "How quickly can we integrate this new capability?"

The Arena: Global Competition and Geopolitical Implications

AI supremacy is increasingly viewed through the lens of national security and economic dominance. The steady stream of powerful model releases from China underscores a two-sided global race for technological leadership, particularly in the realm of foundation models.

National Strategies and Benchmark Races

When we analyze the context of China’s LLM releases, we see a divergence in strategy. While US efforts might prioritize open-ended frontier capabilities and safety alignment (often driven by venture funding models), Chinese efforts are frequently aligned with national industrial goals, focusing heavily on domestic data sovereignty, specific industry applications, and governmental utility.

For policymakers, this highlights the urgent need to manage export controls on advanced semiconductor technology while simultaneously fostering domestic innovation. For global businesses, it raises critical questions about supply chain risk, data localization requirements, and which set of foundational models will become the standard in different regions of the world. The AI race is no longer just about performance benchmarks; it is about defining the next generation of global infrastructure.

The Synthesis: From Giant Models to Practical Agents

How do these three trends—massive funding, deep research, and global competition—coalesce into actionable insights for the near future? The answer lies in the necessary diversification away from the singular pursuit of the largest model.

The Utility Layer: Specialization and Agents

The massive investment in frontier models (like those Anthropic is building) is creating a powerful, generalized base layer. However, the most immediate business impact will come from the *utility layer* built atop this base. We are seeing a clear trend toward highly specialized AI agents and smaller, efficient models designed for specific tasks, rather than reliance on the largest, most expensive generalist models for every job.

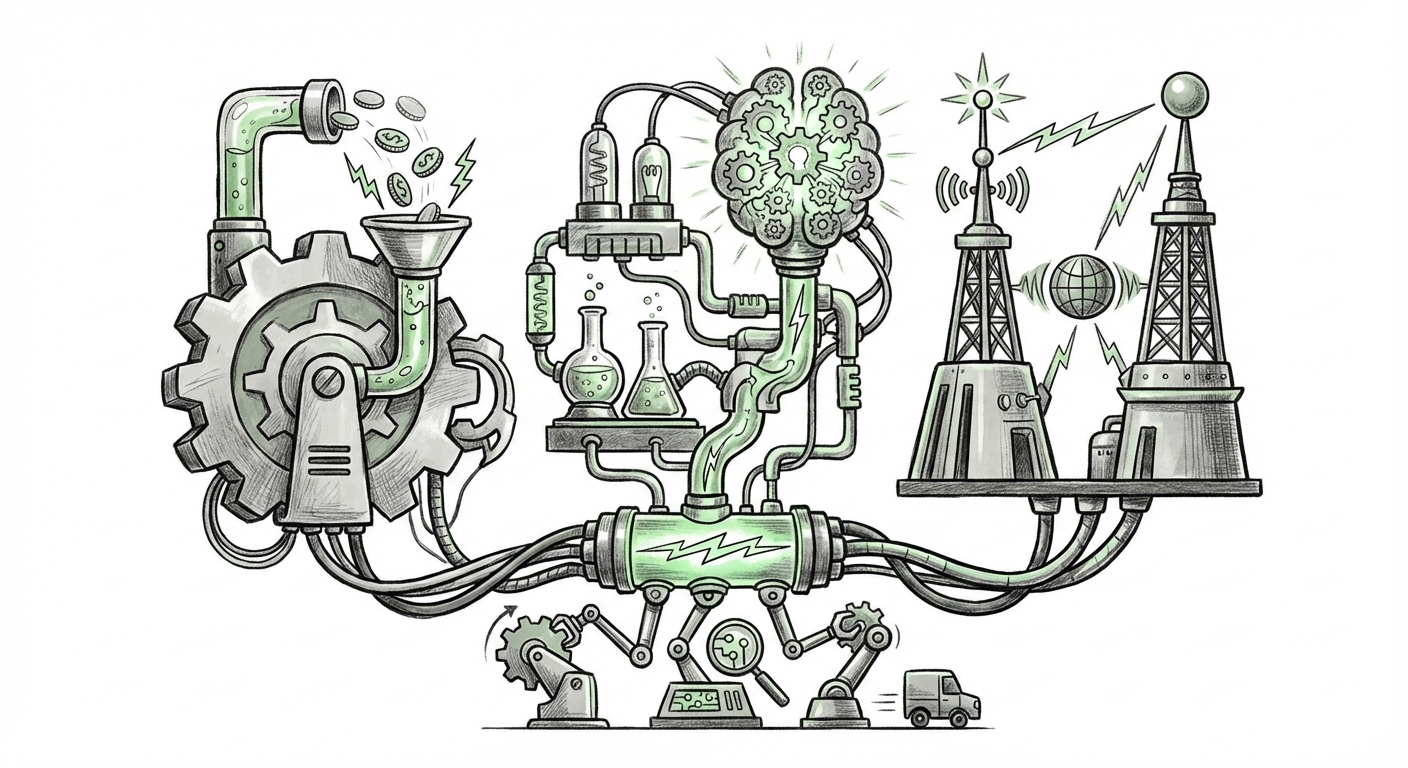

Imagine a colossal LLM as a giant, powerful engine. The massive funding ensures the engine gets bigger and stronger. The DeepMind breakthroughs ensure the engine is more efficient. The specialized agent trend, however, is about building the customized gearbox and transmission systems that apply that raw power efficiently to drive a specific car model—whether that car is handling customer service, optimizing factory logistics, or coding specialized software components.

This duality is key:

- The Base Layer (The Giants): Will continue to be dominated by those who can afford the enormous compute costs, focusing on AGI alignment and scaling laws.

- The Application Layer (The Specialists): Will see an explosion of innovation from startups and enterprise teams who fine-tune, RAG-optimize, or leverage smaller models to solve domain-specific problems quickly and affordably.

Practical Implications and Actionable Insights

For stakeholders navigating this accelerated environment, the path forward requires strategic positioning across all three development fronts.

For Technology Leaders (CTOs/Heads of AI):

- Dual-Sourcing Model Strategy: Do not rely on a single foundational provider. Given the geopolitical climate and proprietary nature of top models, develop abstraction layers (like model routing frameworks) that allow you to seamlessly swap between models from different providers (US, European, or Asian) based on cost, compliance, or performance for specific tasks.

- Invest in Data and Fine-Tuning: Since raw model power is becoming concentrated, your proprietary data and your ability to fine-tune or RAG-augment public/open-source models are your most defensible assets against those who can only access APIs.

- Prioritize Reasoning over Memorization: Look closely at research breakthroughs (like those from DeepMind) that improve core reasoning abilities. This is the skill that unlocks true automation, not just better text generation.
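The abstraction layer described in the dual-sourcing point above can be sketched in a few lines. What follows is a minimal illustration under stated assumptions, not a reference to any particular routing framework: the `ModelOption` and `ModelRouter` names, the provider labels, the prices, and the quality scores are all hypothetical, and the `call` adapters are stubs standing in for whatever real provider clients a team would wrap. The router filters candidates by compliance (allowed regions) and a minimum task quality score, then picks the cheapest remaining option.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Set


@dataclass
class ModelOption:
    """One candidate model behind the abstraction layer (all values hypothetical)."""
    name: str
    region: str                 # where the provider operates, e.g. "US", "EU", "ASIA"
    cost_per_1k_tokens: float   # illustrative price, not a real quote
    quality: Dict[str, float]   # task name -> internal benchmark score in [0, 1]
    call: Callable[[str], str]  # adapter wrapping the provider's real client


class ModelRouter:
    """Chooses a model per request based on compliance, quality, and cost."""

    def __init__(self, options: List[ModelOption]) -> None:
        self._options = list(options)

    def choose(self, task: str, allowed_regions: Set[str],
               min_quality: float = 0.0) -> ModelOption:
        # Keep only models that satisfy the compliance and quality constraints.
        eligible = [
            o for o in self._options
            if o.region in allowed_regions
            and o.quality.get(task, 0.0) >= min_quality
        ]
        if not eligible:
            raise LookupError(f"no model satisfies constraints for task {task!r}")
        # Cheapest eligible model wins; higher quality breaks cost ties.
        return min(
            eligible,
            key=lambda o: (o.cost_per_1k_tokens, -o.quality.get(task, 0.0)),
        )

    def complete(self, prompt: str, task: str, allowed_regions: Set[str],
                 min_quality: float = 0.0) -> str:
        return self.choose(task, allowed_regions, min_quality).call(prompt)


def _stub(name: str) -> Callable[[str], str]:
    # Placeholder adapter: a real one would call the provider's API.
    return lambda prompt: f"[{name}] {prompt}"


router = ModelRouter([
    ModelOption("provider-a-large", "US", 0.030,
                {"legal": 0.92, "support": 0.90}, _stub("provider-a-large")),
    ModelOption("provider-b-small", "EU", 0.004,
                {"legal": 0.70, "support": 0.88}, _stub("provider-b-small")),
    ModelOption("provider-c-mid", "ASIA", 0.010,
                {"legal": 0.85, "support": 0.86}, _stub("provider-c-mid")),
])

if __name__ == "__main__":
    everywhere = {"US", "EU", "ASIA"}
    print(router.choose("support", everywhere, min_quality=0.8).name)
    print(router.choose("legal", everywhere, min_quality=0.8).name)
    print(router.complete("Summarize this ticket.", "support", {"EU"}))
```

Because application code only talks to `ModelRouter`, swapping a provider in or out becomes a change to the list of options rather than a rewrite of every call site. The selection rule here (cheapest model that clears a quality bar) is one plausible policy among many; a team could just as easily rank by latency or by measured accuracy on its own evaluation set.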

For Business Strategists and Investors:

- The Capital Cliff: Recognize that the massive funding rounds create a high-stakes environment. Investment success will depend not just on who has the *best* model today, but who can survive the next 18 months of compute cost increases and regulatory shifts.

- Adoption Velocity is King: The market will reward companies that can integrate AI capabilities (specialized agents) into core business processes fastest. Look for integration platforms that abstract away the complexity of model switching.

- Geopolitical Risk Assessment: Understand where your critical AI dependencies lie. If your market is heavily reliant on specific geographic regions, the competitive output from those regions (e.g., Chinese models) must be integrated into your long-term resilience planning.

Conclusion: A Future Built on Convergence

The current moment in AI is not a slow evolution; it is a convergence of immense financial pressure, blinding speed in fundamental science, and increasing global strategic importance. Anthropic’s mega-round provides the necessary fuel, DeepMind’s breakthroughs refine the technology, and the global competition sharpens the urgency. The resulting landscape will be one of hyper-capability, where generalized intelligence pushes the absolute limits, while specialized, context-aware agents permeate every corner of commerce and research.

For those watching, the time for passive observation is over. The future of AI—its safety, its capabilities, and its market structure—is being decided in these concurrent, high-stakes battles over capital, architecture, and global technological standards.