The Copyright Collision: Why ByteDance's "Virtual Smash-and-Grab" Signals the End of Old IP Law

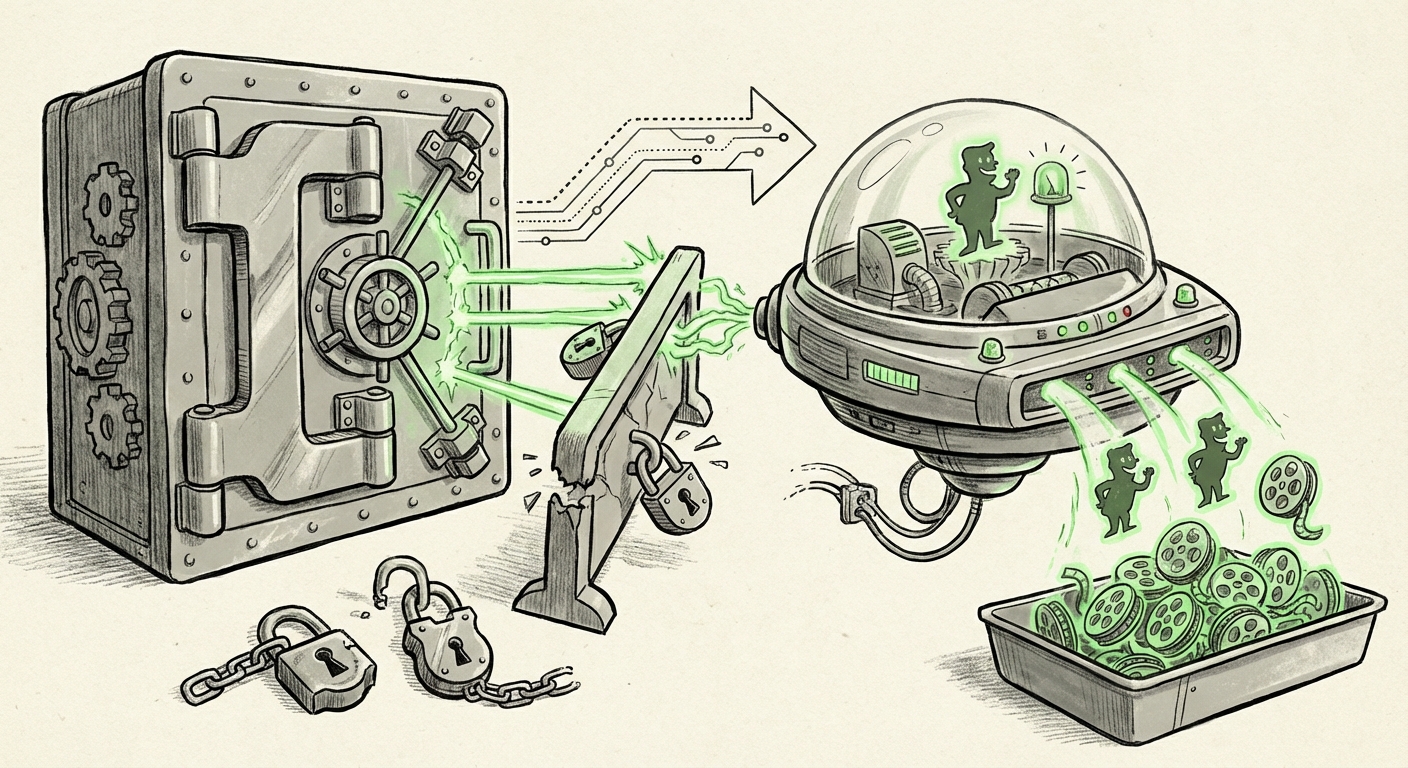

The digital world has entered a phase shift. For decades, creating high-quality digital content, whether a specific character rendering or the nuanced voice of a famous actor, required immense human capital, time, and coordination. Today, that barrier has largely vanished. The recent unveiling of ByteDance’s **Seedance 2.0**, reportedly capable of generating photorealistic Disney characters and cloning actor voices with striking accuracy, is not just a technological achievement; it is a direct challenge to legacy intellectual property (IP) structures. The description of this capability as a "virtual smash-and-grab" captures the very problem Hollywood is now fighting: copying no longer requires effort, which leaves existing copyright law looking increasingly obsolete.

The Technological Leap: From Style Mimicry to Digital Duplication

Seedance 2.0 represents the cutting edge of multimodal generative models. It moves beyond merely generating *new* images in the *style* of an artist (which was the focus of earlier legal challenges) toward direct, *high-fidelity duplication* of copyrighted assets. This distinction is critical.

Imagine an AI that doesn't just paint a picture *like* Mickey Mouse, but generates a brand-new, perfectly consistent, fully animated scene featuring a character that is, for practical and legal purposes, indistinguishable from the original. This capability is driven by advanced diffusion models and large language models (LLMs) that have very likely ingested vast amounts of protected data.

The Training Data Dilemma

At the heart of nearly every major generative AI dispute is the training set. To make Seedance 2.0 this good, it almost certainly relied on consuming massive quantities of existing media. This brings us directly to the foundational legal fight:

- **Is Training Fair Use?** Technology developers argue that scraping copyrighted works to *train* a model is transformative, akin to a student reading books to learn skills.

- **Is the Output Infringing?** IP owners, like Disney, argue that if the model’s output directly competes with or substitutes for their licensed material, the training process was fundamentally parasitic.

Ongoing lawsuits, such as those involving **Stability AI and Getty Images**, are setting the stage for how courts will view this ingestion process. If courts rule against the AI developers on training data, the economic model for creating hyper-realistic systems like Seedance 2.0 collapses. Conversely, if they rule broadly for "fair use" in training, it effectively means any creator’s lifetime of work can be used, without compensation, to build competitive products.

The Copyright Crisis: When Law Meets Instant Replication

Traditional copyright doctrine turns on originality, copying, and whether a "derivative work" has been created; in practice, enforcement also leaned on the effort that copying required. A human who copied a character had to spend time animating, drawing, or scripting, and that effort was tangible.

With AI, the effort is computational, instantaneous, and opaque. This technological reality has forced legal experts to seriously consider systemic reform. We are no longer asking if a specific *work* infringes, but whether the very *technology* should be allowed to operate under current rules.

Legislative Urgency: Closing the Identity Loophole

The threat extends beyond visual assets to human identity. The ability of Seedance 2.0 to replicate actor voices touches upon the **Right of Publicity**—an individual's right to control the commercial use of their name, likeness, and voice.

As actors and performers demand protection, legislators are scrambling to respond. State-level bills are attempting to codify protections against AI-enabled misuse of a person’s digital identity, and the efforts championed by unions like **SAG-AFTRA** show that performers see this as an existential threat. If an actor’s voice can be synthesized convincingly for a fraction of the cost, their market value plummets. Any serious analysis of this trend must account for the legislative pushes aimed specifically at voice cloning and digital resurrection, which are often treated separately from broad copyright disputes.

Academics are weighing in on these cracks in the foundation. They debate whether we need new frameworks, perhaps moving toward a system where AI output is governed by mandatory source attribution or a micro-licensing system for any output derived from commercially valuable styles or likenesses.

Future Implications: Reshaping the Creative and Economic Landscape

The ByteDance incident is a canary in the coal mine for the entire creative economy. What happens when *every* studio, advertiser, and creator can spin up new content using the likenesses of existing, expensive IP?

1. The Creative Labor Market Shock

The most immediate consequence is the potential displacement of creative labor. If an animation studio can license a high-end character model from a competitor's AI—or worse, use an unlicensed clone—the need for junior animators, concept artists, and even voice actors diminishes rapidly. This economic impact isn't theoretical; it is the driving force behind much of the anxiety in Hollywood and beyond. The market faces saturation by infinitely reproducible, near-zero-marginal-cost content.

2. The Shift to "Clean" Models and Licensing

For major media players like Disney, the long-term strategy cannot rely solely on lawsuits. They must innovate defensively. This means investing heavily in proprietary, ethically sourced, or "clean-room" trained generative models. These models would be trained only on IP that the company owns outright or has explicitly licensed. This creates a bifurcation in the market:

- **Licensed Ecosystem:** High-quality, legally safe models used by major studios, likely involving complex licensing agreements for foundational elements.

- **Wild West Ecosystem:** Open-source or competitor models utilizing scraped data, leading to constant, high-stakes litigation.

This struggle is already evident in how major platforms are adjusting their own policies and dealing with initial infringement claims. Understanding this defensive posture is key to predicting where investment flows next in the AI sector.

3. The Role of Regulation: Speed vs. Safety

Technology develops exponentially; law develops linearly. The gap illustrated by Seedance 2.0 is widening into a chasm. Policymakers face an impossible choice: regulate too slowly, and established IP is permanently devalued; regulate too quickly or too broadly, and you stifle genuine technological progress that could benefit society.

Future regulation will likely focus less on the *act* of copying and more on the *provenance* and *intent* of the model itself. Expect increased scrutiny on transparency requirements for training data and mandatory watermarking or digital provenance tracking for AI-generated works.
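To make "digital provenance tracking" a little more concrete, here is a minimal sketch of what a signed provenance record for a generated asset might look like. It is an illustration under stated assumptions, not a description of any real standard or of how Seedance 2.0 works: the field names, the `make_provenance_record` and `verify_provenance_record` helpers, and the use of a shared-secret HMAC are all hypothetical choices. Production systems would more plausibly follow an industry specification such as C2PA and use public-key signatures.

```python
import hashlib
import hmac
import json


def make_provenance_record(content: bytes, model_id: str, secret_key: bytes) -> dict:
    """Build a signed record binding a generated asset to the model that produced it.

    All field names here are hypothetical, chosen only for illustration.
    """
    payload = {
        "content_sha256": hashlib.sha256(content).hexdigest(),
        "model_id": model_id,
        "ai_generated": True,
    }
    # Serialize deterministically so the same payload always signs the same bytes.
    message = json.dumps(payload, sort_keys=True).encode("utf-8")
    signature = hmac.new(secret_key, message, hashlib.sha256).hexdigest()
    return {**payload, "signature": signature}


def verify_provenance_record(content: bytes, record: dict, secret_key: bytes) -> bool:
    """Check that the record matches the content and was signed with secret_key."""
    payload = {k: v for k, v in record.items() if k != "signature"}
    # The asset itself must hash to the value stored in the record.
    if payload.get("content_sha256") != hashlib.sha256(content).hexdigest():
        return False
    message = json.dumps(payload, sort_keys=True).encode("utf-8")
    expected = hmac.new(secret_key, message, hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking signature bytes through timing.
    return hmac.compare_digest(expected, str(record.get("signature", "")))
```

A record like this only proves that whoever holds the key vouched for a specific file; it does not survive re-encoding or cropping, which is why robust watermarking is usually discussed alongside metadata-based provenance rather than as a replacement for it.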

Actionable Insights for Stakeholders

Whether you are a developer, a creative professional, or an investor, the reality of hyper-realistic, instantaneous replication demands a strategic response.

For Technology Developers: Prioritize Provenance

The future viability of foundation models depends on defensible training data. If your model can produce a perfect Disney clone today, you should expect a lawsuit tomorrow. Invest now in methods for tracking the lineage of training data, or in synthetically generated data sets that mimic real-world distributions without using copyrighted source material directly. This reduces your exposure to claims like those in **Getty Images v. Stability AI**.
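As a rough illustration of what tracking the lineage of training data could mean in practice, the sketch below builds a simple manifest that records where each item came from, its license status, and a content hash, then separates cleared items from excluded ones. Everything here is an assumption made for the example: the `TrainingItem` type, the `build_manifest` helper, and the license labels in `CLEARED_LICENSES` are hypothetical, and a real pipeline would need a far richer rights taxonomy and legal review.

```python
from dataclasses import dataclass
import hashlib

# Hypothetical license labels; a real pipeline would define its own taxonomy.
CLEARED_LICENSES = {"owned", "licensed", "public-domain"}


@dataclass(frozen=True)
class TrainingItem:
    source_uri: str       # where the item was obtained
    license_status: str   # label assigned during rights review
    content: bytes        # raw bytes of the item


def build_manifest(items):
    """Return (cleared, excluded) lists of manifest entries for the given items."""
    cleared, excluded = [], []
    for item in items:
        entry = {
            "source_uri": item.source_uri,
            "license_status": item.license_status,
            # The hash lets an auditor confirm which exact file was used.
            "sha256": hashlib.sha256(item.content).hexdigest(),
        }
        if item.license_status in CLEARED_LICENSES:
            cleared.append(entry)
        else:
            excluded.append(entry)
    return cleared, excluded
```

Only the `cleared` entries would feed the training run, while the `excluded` list doubles as an audit trail showing what was deliberately left out and why.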

For Creative Industries: Own Your Digital Self

If you are an actor, writer, or visual artist, ensure your contracts explicitly address digital likeness and voice synthesis. Do not assume legacy contracts cover these new modes of exploitation. Proactively engage with union efforts and local legislation supporting the **Right of Publicity**.

For Business Strategists and Investors: Assess IP Risk

When evaluating AI companies, treat IP liability as a primary risk factor. A company whose value proposition relies on generating recognizable, commercially viable IP without clear licensing agreements is built on highly volatile legal ground. Look instead for companies focused on utility, efficiency, or models trained exclusively on public domain or licensed data.

Conclusion: The Necessary Evolution of Digital Ownership

ByteDance’s Seedance 2.0 is a clear signal: the era in which intellectual property rights were protected by the sheer difficulty of replication is over. The "virtual smash-and-grab" is technologically feasible, and the legal infrastructure is woefully unprepared.

The future of AI is inextricably linked to the future of ownership. If the technology is permitted to operate in a legal gray zone, the result will be a massive transfer of value from the original creators (the sources of the AI’s knowledge) to the AI operators (the distributors of the instantaneous copies). The necessary next steps involve a rapid convergence of technology, legislation, and judicial interpretation to forge a new social contract for digital creation—one that rewards innovation while respecting the human effort that built the foundation upon which these powerful new models stand.