The AI Copyright Crisis: Bytedance, Disney, and the End of Frictionless Copying

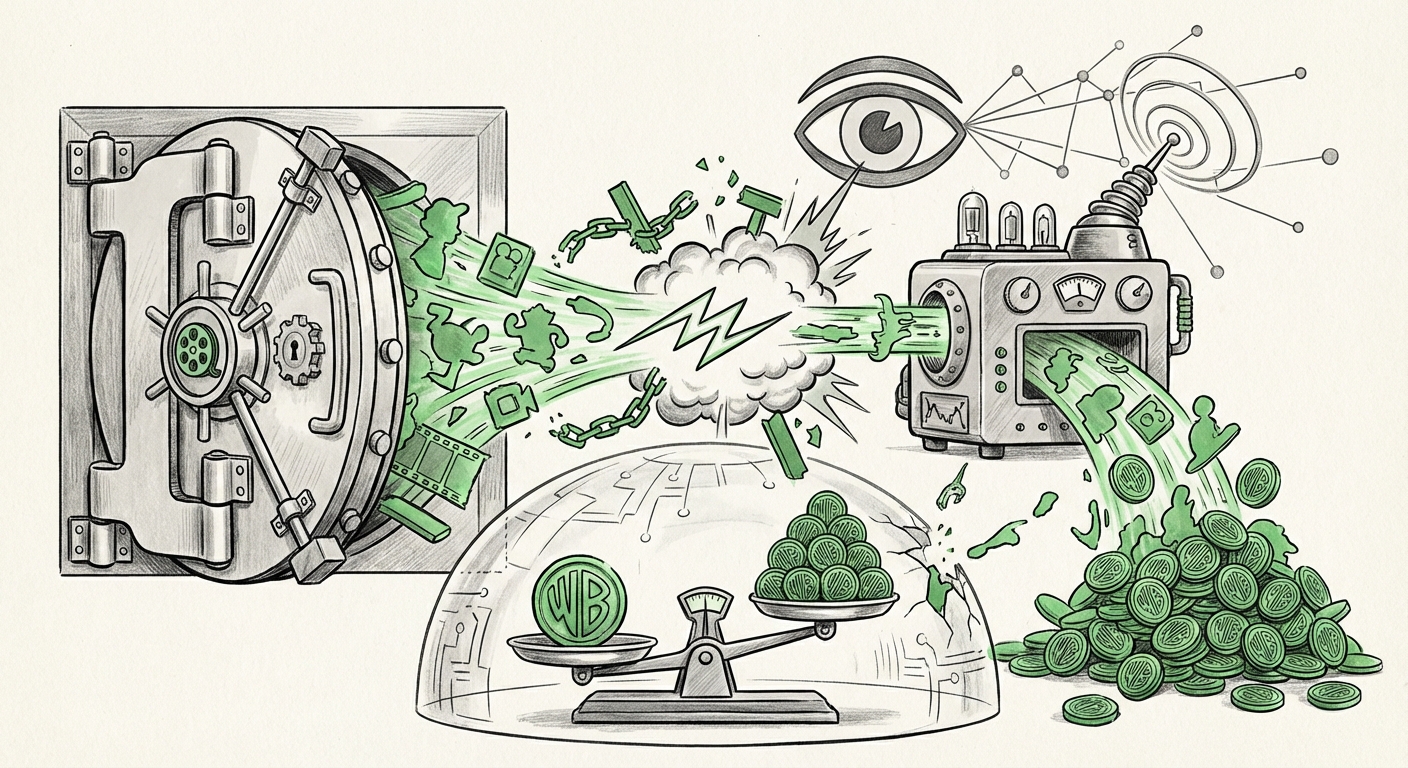

The world of content creation is experiencing a shockwave unlike any seen since the introduction of Napster. This time, the disruptor isn't peer-to-peer file sharing; it's the near-perfect replication capability of generative artificial intelligence. The recent controversy surrounding Bytedance's Seedance 2.0, a system reportedly capable of generating hyper-realistic Disney characters, mimicking actor voices, and recreating entire fictional universes, has crystallized this conflict. Disney, in its cease-and-desist letter, reportedly described the practice as a "virtual smash-and-grab."

For technologists and business leaders, this is more than a legal dispute between two titans; it is strong evidence that AI capability has outpaced the legal framework built to govern intellectual property (IP). Copyright law grew up in a world where copying required time, effort, and significant resources, and it is poorly prepared for one where digital replication is nearly instantaneous and nearly free.

As an AI analyst, I am less concerned with the immediate cease-and-desist letters than with the implications for future AI development, industry economics, and societal norms around originality.

The Tectonic Shift: From Effort to Instant Imitation

To understand the gravity of the situation, we must appreciate what Seedance 2.0 (and similar latent diffusion models) represents. In the past, imitation required animators, sound engineers, and massive production budgets. Now, a powerful prompt and the right model weights can summon a facsimile of proprietary content. This capability challenges two core pillars of the creative economy:

- The Value of the Source Material: If Disney characters can be cloned cheaply and perfectly by a competitor, the moat protecting Disney’s core assets—its brands, likenesses, and character designs—collapses.

- The Definition of Fair Use: AI developers argue that training models on publicly available (or scraped) data is analogous to a human artist learning by studying masterpieces—a transformative process protected under fair use. Copyright holders argue that direct, high-fidelity output is not learning; it is infringement at scale.

This debate is playing out across the entire digital landscape, not just in animation.

Corroborating the Crisis: A Landscape of Legal Battles

The Disney/Bytedance issue is a high-profile symptom of a widespread disease affecting content industries. Looking at parallel events confirms this is not an isolated spat but a foundational fight over the future of digital ownership. We see this reflected in major ongoing lawsuits:

- The Newsroom vs. The Model: Major publications, like The New York Times, are suing AI developers for ingesting vast amounts of copyrighted text to train Large Language Models (LLMs). If the plaintiffs prevail, the rulings could establish that training on protected works without a license is infringement.

- The Artists vs. The Generators: Numerous lawsuits target image generators for outputs that closely mimic the style or specific details of living artists whose work was in the training sets.

These legal challenges are the proving grounds for the legal theories that will govern the next decade of AI deployment. The outcome will determine whether foundation models must be built exclusively on licensed or public-domain data, which would drastically alter development costs.

The Regulatory Response: Playing Catch-Up

As technology sprints forward, governments are scrambling to build guardrails. When copying is instant, the focus shifts immediately to regulation, traceability, and accountability. This is where legislative action becomes crucial, particularly regarding *synthetic media*.

The Policy Imperative: Traceability and Transparency

The EU AI Act, for example, includes transparency obligations for AI-generated content. For tools like Seedance 2.0, this means mandatory disclosure: if an image or voice is AI-generated, the audience needs to be told.

For the business user, this means preparing for a world where proprietary AI tools must incorporate:

- Mandatory Watermarking: Invisible or visible markers certifying the synthetic nature of the content.

- Data Provenance Tracking: The ability to audit exactly which data, including which copyrighted assets, were used to train a specific model instance.
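One way to picture provenance tracking is a manifest that fingerprints every training asset and records where it came from and under what license. The sketch below is illustrative only: the `AssetRecord` fields, the `CLEARED_LICENSES` labels, and the sample sources are assumptions made for this example, not part of any regulation or real pipeline.

```python
import hashlib
from dataclasses import dataclass

# Hypothetical license labels; a real policy would be defined by counsel.
CLEARED_LICENSES = {"licensed", "public-domain", "owned"}


@dataclass(frozen=True)
class AssetRecord:
    sha256: str   # content fingerprint of the training asset
    source: str   # where the asset was obtained
    license: str  # license label recorded at ingestion time


def record_asset(content: bytes, source: str, license: str) -> AssetRecord:
    """Fingerprint one training asset and record its origin."""
    return AssetRecord(hashlib.sha256(content).hexdigest(), source, license)


def audit(manifest: list[AssetRecord]) -> list[AssetRecord]:
    """Return every asset whose license label is not on the cleared list."""
    return [r for r in manifest if r.license not in CLEARED_LICENSES]


# Toy manifest with made-up sources.
manifest = [
    record_asset(b"frame data A", "studio-archive/reel-1", "licensed"),
    record_asset(b"frame data B", "web-scrape/unknown", "unverified"),
]
flagged = audit(manifest)
print([r.source for r in flagged])  # ['web-scrape/unknown']
```

A manifest like this only shows what went into training. It does not by itself show what a model memorized, so it would complement output-side checks rather than replace them.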

If the developer of a model like Seedance 2.0 cannot show that it did not memorize or reproduce Disney assets, the model's commercial viability could be severely curtailed by litigation and regulatory penalties, regardless of its quality.

What This Means for the Future of AI Development

The Bytedance case forces the AI industry to confront an uncomfortable truth: the "wild west" era of data scraping is ending. The next phase of AI advancement will be defined by licensing rather than unlicensed acquisition.

The Economics of Frictionless Creation

The economic implication is enormous. If building a competitor to a major studio’s character library costs nearly zero, the existing studio’s main value shifts entirely from *creation* to *control over access*.

We are moving toward a "scarcity shift." When raw content creation is abundant, scarcity moves to:

- Authenticated Trust: Consumers will pay a premium for content certified as human-created or authentically licensed by the IP holder.

- Unique Datasets: Companies that own exclusive, high-quality, proprietary datasets (e.g., a studio’s deep archive of unreleased behind-the-scenes footage or unique voice recordings) will hold immense leverage.

For AI developers, this transition means development budgets must pivot from focusing solely on model architecture breakthroughs to securing the most expensive resource: verified, clean, and legally cleared training data.

Practical Implications and Actionable Insights for Businesses

How should creators, technology firms, and legal departments navigate this increasingly complex terrain? The path forward requires immediate strategic shifts.

Actionable Insights for Creators and IP Holders (e.g., Disney):

- Proactive Licensing: Instead of only suing, studios must explore creating robust, standardized licensing agreements for AI training. Partnering with foundation model developers allows studios to earn revenue from their IP being used, rather than simply fighting unauthorized use.

- Weaponize Technical Standards: Demand and implement industry-wide standards like C2PA (Coalition for Content Provenance and Authenticity). If content cannot be verified as authentic, its market value should be lower, effectively penalizing unverified synthetic content.

- Defend the Core: Prioritize legal action against direct character and voice replication (as alleged with Seedance 2.0) rather than generalized style imitation, as direct reproduction is harder to defend under fair use.
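The idea behind verifiable authenticity can be illustrated with a much simpler mechanism than C2PA itself. The sketch below uses a keyed hash (HMAC) from Python's standard library so that an IP holder can tag content and later confirm it has not been altered. This is not the C2PA format, which relies on certificate-based signatures embedded in the asset; the function names and the demo key are assumptions made for illustration.

```python
import hashlib
import hmac


def issue_credential(content: bytes, key: bytes) -> str:
    """Return a hex tag binding the content to the holder's secret key."""
    return hmac.new(key, content, hashlib.sha256).hexdigest()


def verify_credential(content: bytes, tag: str, key: bytes) -> bool:
    """Check the tag in constant time; any edit to the content breaks it."""
    expected = hmac.new(key, content, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, tag)


studio_key = b"demo-key-not-for-production"  # hypothetical secret
clip = b"official trailer bytes"
tag = issue_credential(clip, studio_key)

print(verify_credential(clip, tag, studio_key))              # True
print(verify_credential(b"altered bytes", tag, studio_key))  # False
```

A shared-secret scheme like this only lets the key holder verify content. Public verification by third parties would need asymmetric signatures, which is the direction provenance standards take.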

Actionable Insights for AI Developers and Tech Firms:

- Audit Your Lineage: Stop assuming scraped data is legally safe. Implement rigorous data governance to track the source of training material. If your model can spit out a recognizable, copyrighted character on demand, you have a massive liability.

- Invest in Synthetic Data: Models trained on synthetic data (data generated by earlier models that were themselves built on licensed material) or on public-domain data are likely to be the most legally durable.

- Build for Transparency: Design models with native traceability features. Future enterprise clients will demand this compliance feature as a core requirement, not an add-on.
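The liability described under "Audit Your Lineage" can also be checked on the output side. The following is a minimal sketch of screening generated images against a registry of protected assets using an average hash, a simple perceptual fingerprint. It assumes each image has already been reduced to a small grayscale grid; the `max_distance` threshold and the toy 4x4 grids are arbitrary choices for illustration, and a production screen would need far more robust matching.

```python
def average_hash(pixels: list[list[int]]) -> int:
    """Pack one bit per pixel: 1 if the pixel is brighter than the mean."""
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Count the bit positions where two hashes differ."""
    return bin(a ^ b).count("1")


def is_near_match(candidate: list[list[int]],
                  protected_hashes: list[int],
                  max_distance: int = 2) -> bool:
    """Flag a candidate whose hash is close to any protected hash."""
    h = average_hash(candidate)
    return any(hamming(h, ref) <= max_distance for ref in protected_hashes)


# Toy 4x4 grayscale grids standing in for downscaled images.
protected = [[10, 10, 200, 200],
             [10, 10, 200, 200],
             [200, 200, 10, 10],
             [200, 200, 10, 10]]
lookalike = [[12, 9, 190, 205],
             [11, 14, 198, 201],
             [195, 210, 8, 12],
             [199, 202, 15, 9]]
unrelated = [[200, 10, 200, 10] for _ in range(4)]

registry = [average_hash(protected)]
print(is_near_match(lookalike, registry))  # True
print(is_near_match(unrelated, registry))  # False
```

Run before release, a check like this would flag near-copies of registered assets for human review. It would not detect stylistic imitation, which is a separate legal question.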

A New Definition of Originality

The greatest long-term implication of the Bytedance incident is the pressure it puts on our definition of originality. If an AI can generate something indistinguishable from a human creation, what is the inherent value of the human touch? The question pushes us toward valuing the intent and the *framework* behind a work rather than just the final pixel or word.

For a general audience, this means being aware that the media we consume—from TikTok videos to customized video game assets—will soon carry hidden authorship layers. We must develop a collective media literacy to question the origin of what we see and hear.

The "smash-and-grab" of today forces the necessary, painful conversation that will lead to the next era of digital law and creative economics. The legal system was built for a world where copying took effort; the future belongs to those who can adapt to a world where copying is effortless, but ownership must be rigorously proven.