The AI Triad: Funding Firepower, Geopolitical Races, and Foundational Leaps Shaping Tomorrow's Intelligence

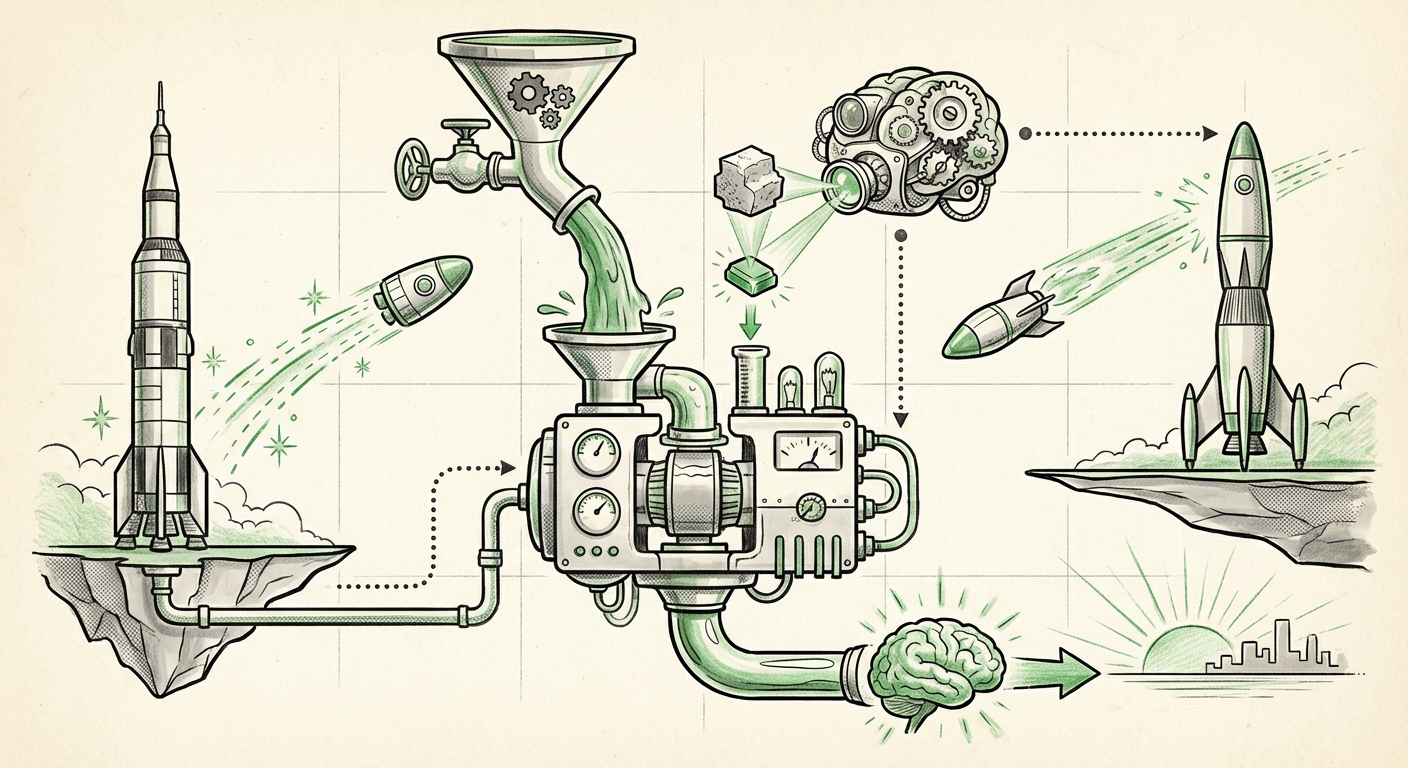

The world of Artificial Intelligence rarely experiences a quiet week. Recent observations, synthesized from key industry trackers like "The Sequence Radar," highlight not just incremental updates, but tectonic shifts occurring across three critical vectors: the sheer scale of private capital flowing into frontier research, the intensifying global competition in model deployment, and the underlying mathematical innovations driving capability leaps.

These developments (massive investment rounds, aggressive Chinese model releases, and fundamental breakthroughs from labs like DeepMind) are not isolated events. Together they form a triad that shapes the speed, direction, and accessibility of progress toward Artificial General Intelligence (AGI). Understanding this triad is essential for anyone looking to harness AI’s future potential.

Vector 1: The Capital Arms Race – Why Anthropic's Mega-Round Matters

The most visible sign of AI’s escalating importance is the staggering amount of money pouring into the leading labs. The reported "monster round" secured by Anthropic underscores a crucial reality: building frontier Large Language Models (LLMs) is now an enterprise requiring billions of dollars, often subsidized by tech giants betting on foundational infrastructure.

The Cost of Being Frontier

For a business audience, this injection of capital into Anthropic (backed heavily by Amazon and Google) signals that the competitive moat rests on two things: access to cutting-edge research talent and near-unlimited computational power. It’s no longer just about having the best algorithm; it’s about having the budget to run training for months on tens of thousands of specialized chips (GPUs or TPUs).

This trend suggests that the future of foundational model creation will likely remain consolidated among a few heavily financed entities. Smaller startups will struggle to compete at the bleeding edge of model size unless they focus on highly specialized, efficient niches or open-source democratization.

The investment in Anthropic also puts a spotlight on AI safety and alignment, which the company emphasizes alongside raw capability. Its valuation suggests that the market is increasingly willing to pay a premium for models perceived as responsibly developed, a necessary feature for enterprise adoption where regulatory risk is high.

What This Means for Businesses

Businesses must recognize that their AI future may depend on partnerships with these well-capitalized giants. If you are not building your own foundation model, your strategy must center on effectively leveraging the APIs and specialized offerings from these top-tier players. Furthermore, expect these well-funded leaders to set the standards for enterprise deployment and security protocols.

Vector 2: The Global AI Race – China’s Acceleration and Model Proliferation

The consistent stream of "More Chinese models releases" signals a significant geopolitical and technological shift. For years, the narrative focused primarily on US advancements (OpenAI, Google, Meta). Now, the landscape is rapidly becoming multi-polar.

Benchmarking the Gap

When new Chinese models emerge, the immediate technical question is: how do they perform on widely used benchmarks like MMLU (which measures broad, multi-subject knowledge) or HumanEval (which measures code generation)? The common reading is that Western models often keep a slight edge in complex reasoning and high-end creative tasks, while Chinese models are reaching parity or superiority in specific domains, particularly Mandarin comprehension, local cultural context, and compliance with domestic regulatory frameworks.

This divergence creates a bifurcated AI future. One path leads toward globally homogenized, Western-centric AI; the other sees powerful, distinct ecosystems developing tailored to different regulatory, linguistic, and industrial needs.

Implications for Open Source and Sovereignty

The proliferation of locally trained models is crucial for data sovereignty and industrial independence. For companies operating in China, reliance on Western APIs carries inherent risks related to data access and export controls. Therefore, the local model ecosystem serves a vital function: ensuring that national industries (manufacturing, finance, healthcare) can integrate advanced AI without dependence on potentially restricted foreign technology.

For global analysts, this trend necessitates monitoring open-source contributions from these emerging ecosystems. If Chinese labs begin releasing powerful, efficient open-source weights, it could dramatically lower the barrier to entry globally, challenging the closed, proprietary models of the West.

Vector 3: Beyond Scaling – The DeepMind Breakthroughs in Algorithm Efficiency

While money builds the engine (Vector 1) and competition defines the track (Vector 2), the actual speed and efficiency of the vehicle are determined by fundamental research: the breakthroughs originating from labs like DeepMind (now Google DeepMind).

Challenging the Scaling Hypothesis

The history of recent LLMs has been dominated by the "Scaling Hypothesis": bigger models trained on more data yield better results. However, foundational breakthroughs often seek to break this rule by making models smarter, not just bigger.
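The Scaling Hypothesis is often written down as a parametric loss curve. As a hedged illustration (this form comes from the broader scaling-law literature rather than from the developments discussed here, and the symbols are assumptions introduced for this sketch), let N be the parameter count, D the number of training tokens, and L the pretraining loss:

```latex
L(N, D) = E + \frac{A}{N^{\alpha}} + \frac{B}{D^{\beta}}
```

Here E is an irreducible loss floor, and A, B, α, and β are positive constants fitted to experiments. Under this reading, "bigger" means pushing N and D up, while "smarter" would mean an architecture or training method that shrinks A or B, or raises the exponents, so that the same loss is reached with less compute.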

If DeepMind publishes work challenging current scaling laws or introducing novel architectural components (perhaps rethinking the Transformer block itself, or finding dramatically more efficient attention mechanisms), the implications are profound. Such breakthroughs promise to:

- Democratize Frontier AI: A 10x improvement in training efficiency means a model that currently costs $100 million to train might soon cost $10 million. This opens the door for smaller labs and universities to participate in cutting-edge research.

- Improve Deployment: Efficient models run faster and cheaper at inference time—the moment you actually use the AI to answer a question or generate an image. This is critical for real-time applications like robotics or personalized assistants.

- Address Sustainability: The energy footprint of training massive models is astronomical. Algorithmic efficiency is key to making AI sustainable for widespread global adoption.
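The cost arithmetic in the first bullet can be sketched in a few lines. This is a minimal illustration, assuming training cost scales inversely with efficiency and nothing else changes; the function name `training_cost_after_gain` is hypothetical, and the $100 million baseline is the illustrative figure from the text, not a measured cost.

```python
def training_cost_after_gain(baseline_cost_usd: float, efficiency_gain: float) -> float:
    """Cost of the same training run after an efficiency improvement.

    Assumes cost is inversely proportional to efficiency (a simplification).
    """
    if efficiency_gain <= 0:
        raise ValueError("efficiency_gain must be positive")
    return baseline_cost_usd / efficiency_gain


baseline = 100_000_000  # illustrative $100 million figure from the text

for gain in (2, 5, 10):
    cost = training_cost_after_gain(baseline, gain)
    print(f"{gain}x efficiency -> ${cost:,.0f}")
```

In practice the relationship is unlikely to be this clean, since data, staffing, and failed runs also contribute to cost, but the sketch shows why an order-of-magnitude efficiency gain changes who can afford to train.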

Mathematical Innovation as a Moat

For technical audiences, these mathematical advancements are the true long-term competitive moat. While hardware and funding can be acquired, fundamental insight into how intelligence emerges from computation is rare. Analyzing the specifics of these DeepMind breakthroughs helps us predict the *next generation* of AI, moving beyond current LLM paradigms.

Synthesizing the Triad: What This Means for the Future of AI

These three vectors—Capital Intensity, Geopolitical Diversification, and Algorithmic Efficiency—are not pulling AI in different directions; they are converging to create a more complex, robust, and high-stakes technological landscape.

The Future AI Landscape: Faster, Richer, and More Divided

1. Accelerated Capability Curve: The massive funding (Vector 1) ensures that the pace of iteration will remain breakneck. Companies are incentivized to deploy sooner rather than later, even if safety features are still maturing. This means societal integration of powerful AI tools will happen more quickly than many anticipate.

2. Specialized Intelligence Emerges: The combination of capital and geopolitical competition (Vectors 1 & 2) means we will see deeply specialized models. We won't just have "a good LLM"; we will have the best LLM for synthesizing Chinese legal documents, the best LLM optimized for Amazon’s logistics network, and the best LLM for advanced materials science research (fueled by DeepMind-style efficiency gains).

3. The Bifurcation of Access: Two tiers of AI will become clear. On one side, the "Frontier Stack"—expensive, closed, highly capable models accessible via API, controlled by well-capitalized entities. On the other, the "Efficient Open Stack"—models that are smaller, more customizable, and capable of running locally on consumer hardware, thanks to algorithmic breakthroughs making them feasible.

Actionable Insights for Navigating the New Era

How should leaders and innovators respond to this dynamic environment?

For Enterprise Leaders: Prioritize Strategy Over Ownership

Unless you are Google, Meta, or OpenAI, attempting to build a foundational model from scratch is likely a multibillion-dollar distraction. Focus instead on Application Layer Differentiation. Use the best available models (Anthropic, OpenAI, local Chinese providers) and integrate them deeply into proprietary data workflows. Your value will come from *how* you use the intelligence, not *who* built the core model.

For Researchers and Engineers: Embrace Efficiency

The breakthroughs from DeepMind serve as a direct signal: the next wave of value creation lies in optimization, fine-tuning, and efficiency. Become experts in parameter-efficient fine-tuning (PEFT), quantization, and model compression. Models that can run effectively on edge devices or within private enterprise clouds could unlock substantial market value.
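To make quantization concrete, here is a minimal sketch of symmetric per-tensor int8 quantization in plain Python. It is an illustration under simplifying assumptions, not how any particular library implements it: the function names `quantize_int8` and `dequantize` and the sample weights are hypothetical, and real toolchains typically work per channel or per group on tensors rather than on Python lists.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization of floats to integers in [-127, 127]."""
    if not weights:
        return [], 1.0
    max_abs = max(abs(w) for w in weights)
    # One shared scale maps the largest magnitude onto 127.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Map the stored integers back to approximate float weights."""
    return [q * scale for q in quantized]


weights = [0.12, -0.5, 0.33, 1.0, -0.98]  # hypothetical sample weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("int8 values:", q)
print("scale:", scale)
print("max round-trip error:", max_error)
```

Each weight is stored in one byte instead of four (for float32), at the price of a rounding error of at most about half the scale per weight. That trade-off is what lets smaller deployments fit on edge devices or modest private infrastructure.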

For Policymakers: Understanding Global Competition

Policymakers must look beyond simple national competitiveness. The rise of robust, diverse AI ecosystems (Vector 2) means that regulatory harmonization becomes harder, but cooperation on global risks (like large-scale misinformation or dual-use technology) becomes even more critical. The geopolitical reality is that AI power is dispersing, requiring new diplomatic frameworks.

Conclusion: The Era of Convergent Forces

The latest snapshot of AI activity confirms that we are in a period of extreme acceleration driven by three convergent forces: gargantuan investment fueling the race to the top, parallel technological development creating geopolitical competition, and fundamental research promising greater accessibility for the diligent innovator. The next few years will see these forces clash and combine, yielding AI systems that are simultaneously more powerful, more geographically distributed, and potentially more efficient than we can currently imagine. Remaining adaptive, focused on application, and keenly aware of the underlying algorithmic shifts is the only viable strategy for success in this rapidly unfolding reality.