The Great Unbundling: Why Private Cloud AI is the New Enterprise Reality for LLMs

For the past few years, the narrative surrounding Large Language Models (LLMs) was simple: if you wanted cutting-edge AI, you went to the hyperscalers—Amazon, Google, Microsoft. They offered the massive, pre-built compute farms necessary for training and hosting the world’s most powerful models. However, the infrastructure landscape is undergoing a seismic shift. The move toward **enterprise-ready private cloud hosting**, specifically engineered for intensive AI workloads like those running on hardware such as the **AMD MI355X**, signals a strategic pivot away from full reliance on public providers.

As an AI technology analyst, I see this as the "Great Unbundling" of the AI stack. Enterprises are no longer content to simply rent access; they are demanding control, customizability, and ownership over the core engine of their future digital transformation. This analysis explores the four critical pillars underpinning this move to dedicated, internal AI infrastructure, examining what it means for hardware competition, data security, operational efficiency, and the hybrid future of enterprise computing.

1. The Hardware Revolution: Beyond the Monoculture of AI Chips

The initial choice of hardware for AI training and inference dictates cost, speed, and future flexibility. Historically, this choice has been dominated by a single vendor, NVIDIA. The recent spotlight on platforms offering high-performance alternatives, such as those built on the **AMD MI355X accelerator**, suggests that diversification is becoming a prerequisite for serious long-term AI investment rather than an optional extra.

For the Business Leader: Think of it like relying on only one supplier for every single computer chip in your company. If that supplier faces production delays or price hikes, your entire roadmap stalls. By exploring platforms that support diverse, high-performance accelerators, organizations are building supply chain resilience. This isn't just about getting a better price; it’s about ensuring continuity.

This trend fits a broader industry theme, captured in the headline **"The Great Semiconductor Scramble: Why the AI Boom Depends on More Than Just NVIDIA."** Enterprises are realizing that no chip is universally "best"; the right chip is the one optimized for their specific workload, whether that is large-scale model training (which demands high memory capacity and bandwidth) or low-latency, high-volume inference.

Actionable Insight: IT Architects must mandate a multi-vendor evaluation roadmap for all future compute purchases. Performance benchmarking must go beyond peak theoretical FLOPS and deeply analyze Total Cost of Ownership (TCO) for the intended workload over a three-year period in a private environment.
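To make the three-year TCO comparison concrete, here is a minimal Python sketch. The `AcceleratorOption` fields, the two vendor entries, and every dollar and throughput figure are hypothetical placeholders, not measurements of any real product; substitute numbers benchmarked on your own workload.

```python
from dataclasses import dataclass


@dataclass
class AcceleratorOption:
    """Hypothetical cost and throughput inputs for one candidate platform."""
    name: str
    purchase_cost: float         # up-front hardware cost per node
    annual_power_cooling: float  # yearly energy and cooling cost per node
    annual_ops: float            # yearly staffing, support, and software cost
    tokens_per_second: float     # throughput measured on the intended workload
    utilization: float           # fraction of time doing useful work (0..1)


def tco(option: AcceleratorOption, years: int = 3) -> float:
    """Total cost of ownership over the evaluation period."""
    yearly = option.annual_power_cooling + option.annual_ops
    return option.purchase_cost + years * yearly


def cost_per_million_tokens(option: AcceleratorOption, years: int = 3) -> float:
    """TCO divided by the tokens actually served over the period."""
    seconds = years * 365 * 24 * 3600
    tokens = option.tokens_per_second * option.utilization * seconds
    return tco(option, years) / tokens * 1_000_000


# Placeholder figures for illustration only
vendor_a = AcceleratorOption("vendor-a", 300_000, 40_000, 60_000, 20_000, 0.6)
vendor_b = AcceleratorOption("vendor-b", 240_000, 45_000, 60_000, 15_000, 0.6)

for opt in (vendor_a, vendor_b):
    print(f"{opt.name}: TCO=${tco(opt):,.0f}, "
          f"$/1M tokens={cost_per_million_tokens(opt):.4f}")
```

With these made-up inputs, the option with the lower three-year TCO ends up costing more per million tokens served, which is why the comparison should be normalized by workload throughput rather than by purchase price or peak FLOPS alone.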

2. The Sovereignty Mandate: Data Security as the New Infrastructure Driver

Why go through the significant effort of building and maintaining a private cloud when hyperscalers offer convenience? The answer overwhelmingly lies in security, governance, and data sovereignty. When teams use proprietary algorithms or train models on sensitive data (think medical records, financial forecasts, or confidential IP), sending that data outside the corporate perimeter can become an unacceptable risk.

This concern is driving what analysts term **"The Enterprise Imperative: Securing Generative AI Models with Data Governance and Isolation."** For many regulated industries, the debate isn't about whether to use AI, but *where* that AI processes its inputs and stores its resulting models.

For the Technical Team: Building a private cloud cluster for LLMs allows teams to implement zero-trust security architectures all the way down to the hardware level. Compliance standards (like HIPAA or regional data residency laws) can then be met not as an add-on service, but as a fundamental operating condition of the infrastructure. If your data cannot leave your physical or tightly controlled virtual boundaries, a private cloud solution is the only architectural pathway.

Practical Implication: The rise of these specialized private platforms gives compliance teams a direct line to infrastructure planning. Security requirements increasingly dictate hardware procurement, reversing the traditional model where hardware selection preceded security layering.

3. Mastering the Tail End: Operationalizing LLMs Through Inference Optimization

Training a large LLM is often a one-time capital expenditure. Deploying it for inference is a continuous operational expense that can quickly consume budgets if not managed properly. This is where the performance trade-offs documented in platform guides, such as throughput versus latency, become paramount.

The shift to private cloud hosting for inference is driven by the need for granular control over latency. An enterprise chatbot or a real-time fraud detection system cannot afford the unpredictable latency spikes that can sometimes occur when queuing requests through shared public infrastructure.
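Latency spikes are usually judged by tail percentiles rather than averages. The sketch below uses the nearest-rank percentile definition to check a p99 budget; the sample values and the `meets_slo` helper are hypothetical and only illustrate the idea.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample such that at least
    pct percent of all samples are less than or equal to it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def meets_slo(samples, p99_budget_ms):
    """True when the 99th-percentile latency fits the budget."""
    return percentile(samples, 99) <= p99_budget_ms


# Hypothetical measurements in milliseconds: mostly fast, a few slow outliers
latencies_ms = [40] * 95 + [45] * 3 + [400, 900]

mean_ms = sum(latencies_ms) / len(latencies_ms)
print("mean:", mean_ms)
print("p50:", percentile(latencies_ms, 50))
print("p99:", percentile(latencies_ms, 99))
print("meets 200 ms p99 budget:", meets_slo(latencies_ms, 200))
```

In this made-up sample the mean and median look healthy while the p99 is ten times the median, which is the kind of spike a real-time system has to budget for.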

This focus leads directly to deep technical dives like **"Beyond Batch: Low-Latency LLM Inference Serving in On-Premise and Private Cloud Environments."** Teams are aggressively adopting specialized software techniques like model quantization (storing weights at lower numeric precision so the model is smaller and faster, usually with little loss in accuracy) and advanced serving frameworks to squeeze maximum performance out of their owned hardware.
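As a rough illustration of what quantization does, the following sketch applies symmetric, per-tensor int8 quantization to a handful of weights in plain Python. It is a teaching example under simplifying assumptions (one shared scale, round-to-nearest); production toolchains typically use more refined schemes such as per-channel scales and calibration data. The function names and weight values are hypothetical.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the int8 range
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float weights from the integer codes."""
    return [v * scale for v in q]


weights = [0.82, -1.27, 0.003, 0.5, -0.41]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Rounding error per weight is bounded by half a quantization step
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print("codes:", q)
print("scale:", scale)
print("max error:", max_error)
```

Each weight now fits in one byte instead of four (for float32), and the worst-case rounding error is half of one step. Note that the very small weight is rounded to zero, which is where the accuracy cost comes from.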

What This Means for the Future: The value proposition of the private cloud is shifting from merely "where the data sits" to "how fast the answer returns." Organizations that can master the software stack (the MLOps layer) on their dedicated hardware will gain significant competitive advantage through faster customer interactions and reduced per-query operating costs compared to those simply paying by the token externally.
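The per-query cost argument can be framed as a break-even calculation: owned hardware carries a fixed monthly cost, while an external API charges per token. The sketch below is a simplified model with hypothetical prices; it ignores factors such as staffing ramp-up and hardware refresh cycles.

```python
def breakeven_tokens_per_month(fixed_monthly_cost,
                               api_price_per_million,
                               private_marginal_per_million=0.0):
    """Monthly token volume above which owned hardware is cheaper.

    Returns None when the private marginal cost is not below the API
    price, since owned hardware then never pays off in this model.
    """
    saving_per_million = api_price_per_million - private_marginal_per_million
    if saving_per_million <= 0:
        return None
    return fixed_monthly_cost / saving_per_million * 1_000_000


# Hypothetical inputs: $20,000/month amortized cluster cost,
# $2.50 per million tokens externally, $0.50 marginal cost privately
volume = breakeven_tokens_per_month(20_000, 2.50, 0.50)
print(f"break-even volume: {volume:,.0f} tokens per month")
```

Below the break-even volume, paying by the token is cheaper in this model; above it, each additional token served on owned hardware widens the saving.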

4. The Architectural Blueprint: Embracing the Sophisticated Hybrid Future

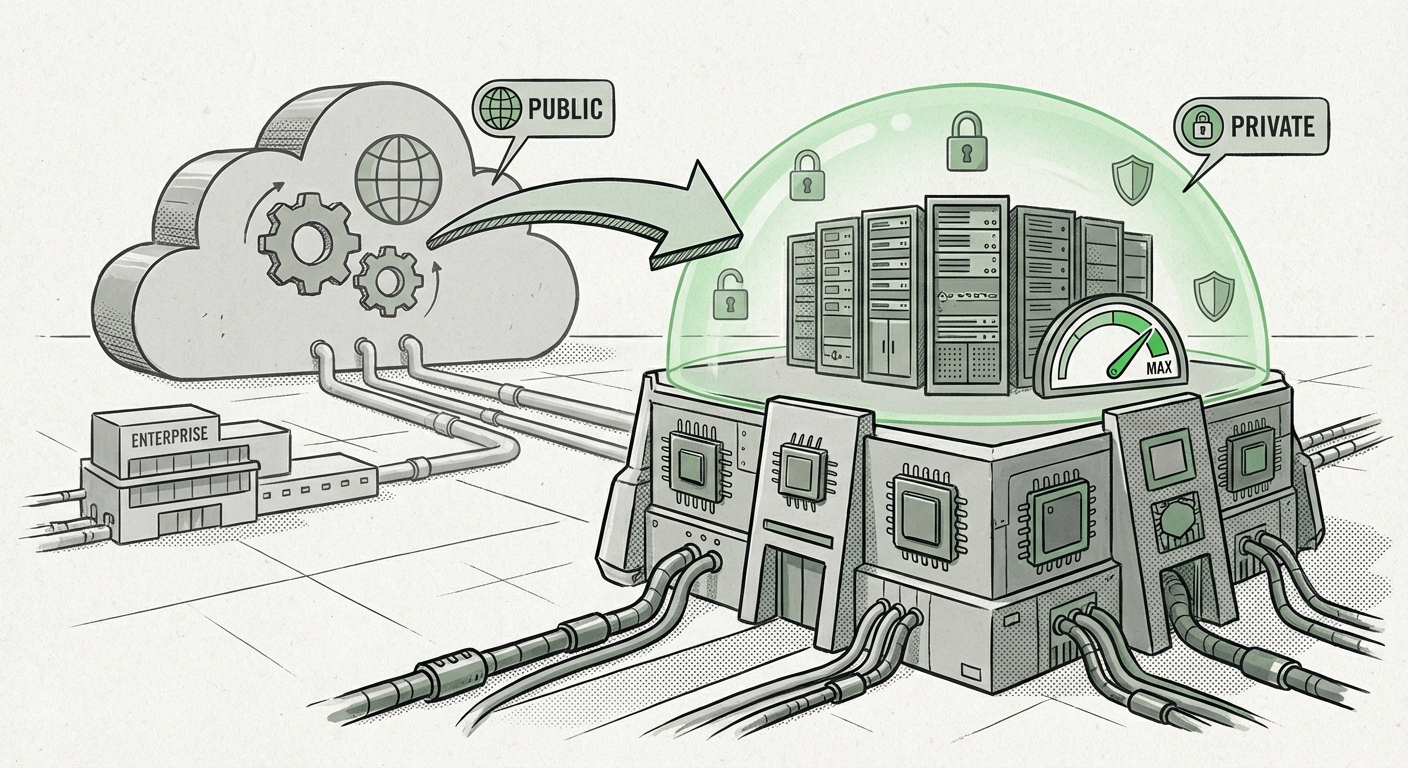

It is crucial to understand that the rise of the private AI cloud does not signal the death of public cloud services. Instead, it solidifies a more complex, mature ecosystem: the **Hybrid Cloud Strategy for AI Workloads**.

The concept of **"Defining the AI Workload Split: When to Keep Generative AI On-Premises vs. Public Cloud"** is now central to enterprise architecture. Organizations are developing sophisticated workload placement strategies:

- Private/On-Prem Cloud: Used for proprietary model training, inference on highly sensitive PII/IP, and established, high-volume production workloads where cost predictability is essential.

- Public Cloud: Used for exploratory R&D, "bursting" capacity during peak demand (e.g., holiday surges), or leveraging specialized public services (e.g., specific data labeling tools) that are not yet cost-effective to build internally.
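The split above can be expressed as a small placement rule. This is a sketch of one possible policy, not a standard: the `Sensitivity` levels, the `Workload` fields, and the rule order are all assumptions chosen to mirror the two bullets.

```python
from dataclasses import dataclass
from enum import Enum


class Sensitivity(Enum):
    PUBLIC = 1
    INTERNAL = 2
    REGULATED = 3  # e.g. PII, health records, proprietary IP


class Placement(Enum):
    PRIVATE = "private"
    PUBLIC = "public"


@dataclass
class Workload:
    name: str
    sensitivity: Sensitivity
    steady_state: bool           # established, high-volume production traffic
    private_capacity_free: bool  # headroom available on owned accelerators


def place(w: Workload) -> Placement:
    # Regulated data stays inside the controlled boundary regardless of load
    if w.sensitivity is Sensitivity.REGULATED:
        return Placement.PRIVATE
    # Predictable production traffic runs privately when capacity exists
    if w.steady_state and w.private_capacity_free:
        return Placement.PRIVATE
    # Exploratory work and overflow demand burst to the public cloud
    return Placement.PUBLIC


jobs = [
    Workload("claims-summarizer", Sensitivity.REGULATED, True, False),
    Workload("support-chatbot", Sensitivity.INTERNAL, True, True),
    Workload("holiday-overflow", Sensitivity.INTERNAL, True, False),
    Workload("prompt-experiments", Sensitivity.PUBLIC, False, True),
]
for job in jobs:
    print(job.name, "->", place(job).value)
```

Putting the sensitivity check first means a capacity shortage can never push regulated data to the public side; in that case the workload has to queue or the private cluster has to grow.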

This architectural maturity requires unified management tools that can seamlessly coordinate compute across both environments. The private cloud platform, therefore, must be designed not as a walled garden, but as a fully interoperable extension of the organization’s existing digital footprint. This ensures that resources are utilized optimally, preventing the costly underutilization of expensive internal accelerators while maintaining control where it matters most.

Actionable Insights for Strategic Planning

The convergence of specialized hardware, security mandates, and operational excellence is creating a new baseline for enterprise AI deployment. Businesses must adapt their planning immediately:

- Audit Your Data Sensitivity: Categorize all potential LLM use cases by the sensitivity of the data involved. High sensitivity mandates immediate exploration of private cloud solutions.

- Establish a Multi-Vendor Procurement Policy: Do not lock future capital expenditure into a single accelerator vendor. Design your private cloud fabric to accommodate diverse hardware (like AMD, Intel, and emerging players) to maintain leverage and supply security.

- Prioritize MLOps for Inference: Recognize that operational efficiency (inference speed and cost) will define the ROI of your private cluster. Invest in dedicated MLOps teams skilled in low-latency serving techniques (quantization, batching optimization).

- Architect for Interoperability: Ensure any private cloud deployment strategy incorporates robust network and management layers that allow for seamless, secure bursting or data movement to public clouds when necessary.

The age of the purely centralized, outsourced AI laboratory is ending. The future belongs to organizations that can skillfully wield **distributed, controlled, and purpose-built AI infrastructure**. The private cloud, far from being a fallback option, is rapidly becoming the default engine room for enterprise intelligence in the age of LLMs.