The IP Reckoning: How Disney vs. ByteDance Redefines the Future of Generative AI

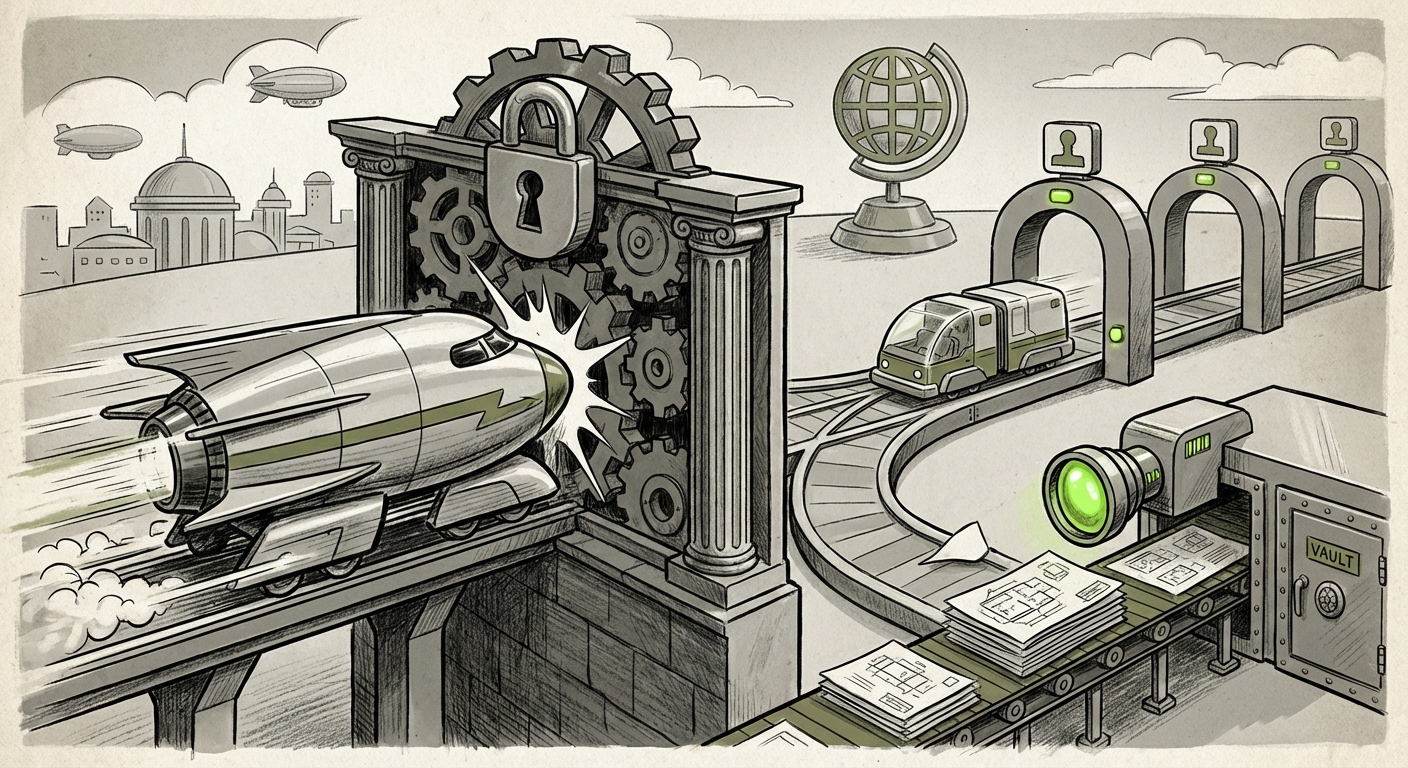

The rapid ascent of generative Artificial Intelligence has been a story of blazing technological speed, often outpacing regulatory and legal frameworks. We are now entering the inevitable phase of collision: where the established power of intellectual property (IP) meets the limitless potential of machine learning.

The recent news that ByteDance, the parent company of TikTok, has restricted its AI video tool, Seedance, following a direct legal threat from Disney, is not just a minor corporate setback. It is a watershed moment. It crystallizes the central tension shaping the next decade of AI development: Can AI tools be built commercially if their foundation rests on uncompensated copyrighted works?

The Collision Point: Speed vs. Statute

For years, many generative AI firms operated under an implied, though legally untested, assumption regarding their training data: that ingesting billions of images, texts, and video frames from the public internet for model training constituted "fair use." This allowed tools like sophisticated image generators and video synthesizers to emerge quickly, powered by vast, essentially free, data libraries.

Seedance, an AI video tool, represents the frontier of this technology, capable of synthesizing complex video content. When a media giant like Disney—whose entire business model is built on owning and exploiting unique characters, stories, and visual styles—sends a legal warning shot, the stakes immediately become existential.

Why Disney Acted: Establishing Precedent

Disney is not simply protecting one piece of content; it is strategically defending the infrastructure of creative ownership. As AI analysts, we must look beyond this single case to understand the broader pattern of enforcement:

- Pattern of Aggression: Disney, like other major studios and stock libraries (such as Getty Images), views unauthorized ingestion of their work as direct theft that undermines future licensing revenue. They are actively seeking to establish legal precedent that training models on their work requires permission and payment.

- The Getty Images Parallel: The action against ByteDance mirrors ongoing lawsuits in which IP owners have sued AI companies, such as Getty Images' case against Stability AI over its visual models. The legal argument centers on two questions: whether the model output itself is an unauthorized derivative work, and whether the initial *training process* violated copyright.

For technologists and investors targeting the creative sector, Disney’s move serves as a stark warning: the legal landscape is hardening. The path forward will not be paved solely by technical superiority, but by legal security. (For context on this broader pattern, industry reports analyzing the Getty Images vs. Stability AI litigation provide essential background on visual IP defense.)

The Legal Crucible: Deconstructing "Fair Use" in AI Training

At the heart of every such dispute is the concept of "fair use." In simple terms, fair use is a doctrine of US copyright law that allows limited use of copyrighted material without permission for purposes like criticism, commentary, news reporting, or research. The core question that courts are now grappling with is this:

Is training a commercial AI model on copyrighted data a "transformative" use that falls under fair use, or is it mass infringement used to create a competing product?

If courts side with the AI developers, the existing foundation of many large models remains secure, albeit ethically contentious. If they side with IP holders, the financial implications for retraining models or licensing data could bankrupt smaller AI startups and force massive restructuring among tech giants.

Implications for Developers (The Technical View)

For AI engineers building the next generation of video synthesis, this uncertainty mandates a shift toward "compliance-by-design."

Future research must focus heavily on synthetic data generation or, critically, on meticulously curated, licensed datasets. The era of scraping the open web without consequence is rapidly drawing to a close. (Analyses of the ongoing *Authors Guild v. OpenAI* proceedings lay out the key arguments concerning transformativeness, which directly inform developer strategy.)

ByteDance’s Strategic Pivot: Beyond Consumer Recklessness

ByteDance’s decision to restrict Seedance was swift, suggesting they recognized the legal threat was both potent and immediate. This action offers crucial insight into the company’s overall AI strategy.

Understanding the Broader AI Portfolio

ByteDance is not just a social media company; it is a powerful AI research organization investing heavily in foundational models. While Seedance was a consumer-facing creative application, the company’s deeper strategic interests lie in enhancing personalization, advertising efficiency, and potentially creating B2B enterprise AI solutions.

Restricting Seedance might be a tactical sacrifice to protect the core, more legally secure aspects of their AI research portfolio. They may have determined that the market validation gained from a quick consumer launch was not worth the potentially crippling financial penalty Disney could impose. (Reports tracking ByteDance's investment in proprietary foundational models outside of TikTok provide the necessary context here.)

The Investor Perspective

For investors tracking Chinese tech giants, this highlights a critical risk factor: geopolitical and regulatory divergence on IP standards. While internal Chinese regulations might be more lenient on data use, deploying products globally requires adherence to US and EU copyright regimes, which are currently hostile to uncontrolled training data aggregation. The risk profile for any generative tool touching Disney’s core markets has just increased significantly.

Future Implications: Licensing, Regulation, and the Creative Economy

The restriction of Seedance is the canary in the coal mine for the future trajectory of creative AI. We see three undeniable shifts occurring:

1. The Inevitable Rise of Licensed Datasets

The "move fast and break things" ethos, perfected during the early days of web platforms, is untenable in the age of high-stakes IP litigation. The future of robust, commercially viable generative models will rely on transparent licensing agreements.

We anticipate the growth of lucrative, centralized "AI data marketplaces." Major media conglomerates, music publishers, and large artistic archives will transition from being litigators to being high-priced **data vendors**. They will charge significant fees for the rights to train models on their protected catalogs. This shifts the cost structure of AI development dramatically.

2. Compliance-by-Design: A Mandatory Framework

For AI developers, **Compliance-by-Design** will become as critical as model accuracy. This means building guardrails into the very architecture of AI systems:

- Output Filtering: Developing stronger mechanisms to prevent AI output from too closely resembling specific, copyrighted inputs.

- Data Provenance Tracking: Implementing internal logging that records which data sources went into each training run and model version, allowing for rapid auditing if challenged.

- Proactive Opt-Out Mechanisms: Moving beyond reactive lawsuits to offer clear, easy ways for creators to opt their work out of future training sets.
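
As a rough illustration of how these three guardrails might fit together, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the `TrainingRecord` manifest format, the opt-out registry modeled as a plain set of domains, and the 0.95 similarity threshold are hypothetical and do not describe any real system, including Seedance. A production output filter would compare learned embeddings rather than toy vectors.

```python
import hashlib
import math
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass(frozen=True)
class TrainingRecord:
    """One entry in a hypothetical provenance manifest for a training run."""
    source_url: str
    license: str   # illustrative tags: "licensed", "public-domain", "unknown"
    sha256: str    # content hash, so the exact file can be audited later


def build_manifest(items, opted_out_domains):
    """Split (url, license, content_bytes) items into kept records and skipped URLs.

    opted_out_domains stands in for a creator opt-out registry (an assumption).
    """
    manifest, skipped = [], []
    for url, license_tag, content in items:
        if urlparse(url).netloc in opted_out_domains:
            skipped.append(url)  # honor the opt-out before any training happens
            continue
        digest = hashlib.sha256(content).hexdigest()
        manifest.append(TrainingRecord(url, license_tag, digest))
    return manifest, skipped


def cosine_similarity(a, b):
    """Cosine similarity of two equal-length numeric vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def passes_output_filter(output_vec, protected_vecs, threshold=0.95):
    """Return False if the output is too close to any protected reference vector."""
    return all(cosine_similarity(output_vec, p) < threshold for p in protected_vecs)


items = [
    ("https://archive.example/clip1.mp4", "licensed", b"frame-bytes-1"),
    ("https://optedout.example/clip2.mp4", "unknown", b"frame-bytes-2"),
]
manifest, skipped = build_manifest(items, {"optedout.example"})
print(len(manifest), "kept;", len(skipped), "skipped")
```

The design choice worth noting is that the opt-out check and the hash logging happen at ingestion time, so the manifest itself becomes the audit trail rather than something reconstructed after a challenge.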

3. Regulatory Pressure Intensifies

This corporate standoff will accelerate government intervention. Lawmakers in the US and Europe are already attempting to draft legislation regarding AI transparency. Disney’s successful leverage against ByteDance provides powerful ammunition for those arguing that IP holders need stronger protections against data scraping.

We expect increased scrutiny on model transparency. If an AI tool is deemed infringing, the scrutiny will shift from *what* the tool produced to *how* it was trained. Regulators will demand clear documentation of data sources.

Actionable Insights for Stakeholders

What must businesses do now to navigate this newly restrictive environment?

For AI Developers and Startups:

Action: Audit Your Data Diet. Immediately map the provenance of all data used to train current commercial models. If a data source is ambiguous or consists of public web scrapes, prepare a strategy for retraining on licensed or fully public-domain data. Prioritize securing early licensing deals, even if costly, to ensure product longevity.
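
A first-pass audit can be as simple as tallying training samples by license status. The sketch below is a hypothetical example, not a legal standard: the license tags, the `CLEARED` set, and the source names and sample counts are all invented for illustration.

```python
from collections import Counter

# Hypothetical license tags treated as cleared for commercial training.
CLEARED = {"licensed", "public-domain"}


def audit_sources(sources):
    """Tally samples by clearance status.

    sources: iterable of (name, license_tag, num_samples).
    Returns (totals_by_bucket, share_of_samples_needing_review).
    """
    totals = Counter()
    for _name, tag, num_samples in sources:
        bucket = "cleared" if tag in CLEARED else "needs_review"
        totals[bucket] += num_samples
    total = sum(totals.values())
    at_risk = totals["needs_review"] / total if total else 0.0
    return dict(totals), at_risk


# Illustrative inventory; names and counts are made up.
sources = [
    ("studio_archive", "licensed", 600),
    ("web_scrape", "unknown", 300),
    ("gov_footage", "public-domain", 100),
]
totals, at_risk = audit_sources(sources)
print(totals, f"{at_risk:.0%} of samples need review")
```

The share flagged for review gives a rough sense of how much of a model's training set would need to be replaced or licensed under a retraining strategy.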

For Content Creators and IP Holders:

Action: Monetize Your Data or Defend It Vigorously. If you hold valuable, unique content, do not assume litigation is the only path. Engage with AI firms to negotiate licensing terms *now*. If you are unwilling to license, you must commit significant resources to active digital monitoring and enforcement, because, as major rights holders like Disney have shown, rights are protected only when they are actively enforced.

For Investors and Executives:

Action: Factor IP Risk into Valuation. Any generative AI company whose success hinges on unverified, massive data ingestion must carry a substantial IP litigation risk discount. Focus investment on companies demonstrating clear data governance, proactive licensing strategies, or those specializing in synthetic data generation.

Conclusion: The Maturation of Generative AI

The ByteDance-Disney saga is painful but necessary. It signals the end of the honeymoon phase for generative AI, where rapid capability scaling overshadowed legal realities. The future of AI will be less about how fast a model can learn, and more about how legally sound its education was.

As consumers and businesses, we still want powerful, magical tools like Seedance. However, the technology will mature only when it builds on a foundation that respects the creators whose work made that technology possible. The next great wave of AI innovation won't just be technological; it will be legal and ethical—a necessary maturation phase for an industry on the cusp of transforming global creativity.