The Copyright Singularity: Bytedance, Disney, and the End of Intellectual Property as We Know It

Welcome to the era where creation is instantaneous, and imitation is perfect. As an AI technology analyst, I monitor the leading edge where technology meets law, and rarely is that intersection as explosive as it is right now. The recent revelation surrounding Bytedance’s **Seedance 2.0**—a model capable of generating staggeringly realistic Disney characters, mimicking actor voices, and recreating entire fictional worlds—isn't just a technological achievement; it’s an earthquake shaking the very foundations of intellectual property.

Disney's legal response reportedly called the capability a "virtual smash-and-grab," a blunt accusation that the tools now exist to extract enormous value from established creative assets with minimal friction. Hollywood is responding with the predictable but perhaps insufficient weapons of the past: cease-and-desist letters and calls for legal action. But this case highlights a crucial reality: copyright law was built for a world where copying required significant effort.

The Effort Gap: Where Technology Outpaces Legislation

For decades, copyright protection was reinforced by a natural barrier. If you wanted to copy Mickey Mouse or use the voice of a famous actor, you needed animators, studios, voice talent, and massive budgets. This effort created a "moat" around intellectual property (IP). Generative AI, specifically advanced diffusion and video synthesis models, has obliterated that moat. The result is what I term the "Effort Gap": the distance between how little effort copying now takes and how much effort the law still assumes.

Consider the complexity involved. Seedance 2.0 isn't just blending pixels; it’s demonstrating deep contextual understanding of copyrighted material. To achieve this level of realism, the underlying models must have been trained on vast datasets containing the specific artistic styles, character designs, and vocal intonations of the targets. This brings us directly to the heart of the current legal challenge.

The Legal Crucible: Fair Use vs. Theft

The Bytedance situation is the high-stakes sequel to ongoing legal battles where AI developers are being sued for using copyrighted works (from books and code to images) as training data. Across the current generative AI copyright infringement lawsuits, the battle lines around the "fair use" defense are drawn:

- The AI Developer Argument: Training is "transformative" and falls under fair use because the model learns concepts rather than copying the specific work.

- The Creator Argument: When the output is near-identical or directly substitutes for the original market, it is infringement, regardless of the method of copying.

When an AI can generate a scene featuring a Disney character that bypasses the need to hire Disney artists or license the character, the "market substitution" argument becomes incredibly powerful. The courts will soon have to decide whether training on copyrighted material for the purpose of near-perfect replication is infringement at industrial scale or a revolutionary, protected form of learning.

The Technology Underpinning the Threat

To appreciate the severity of the threat, we must look under the hood. This isn't basic image manipulation; it is advanced generative choreography. The technical literature on character consistency in diffusion-based video generation shows massive leaps in model coherence.

Early generative models struggled with consistency: a character’s face would change slightly from frame to frame in a video. Today’s cutting-edge systems are overcoming this by adding temporal layers to the diffusion process, so that each frame is generated with reference to its neighbors rather than in isolation. They can now maintain the specific geometric structure, texture, and (crucially for Bytedance) the *IP-specific design elements* of a replicated character across a sustained sequence. This means the AI can generate a new, fully animated short film starring an unauthorized character. For a business audience, this translates to:

- Near-Zero Marginal Cost: Once the model is built, producing a new piece of IP-violating content costs pennies.

- Speed to Market: New "content" can be generated in hours, not months or years.
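The temporal layering described above can be pictured with a toy example. The sketch below is an illustration only, not Seedance's architecture: it applies plain self-attention along the time axis to hypothetical per-frame feature vectors, skipping the learned projections a real model would use. Because every output frame is a weighted average of all frames, frame-to-frame jitter can only shrink.

```python
import math


def temporal_self_attention(frames):
    """Mix each frame's feature vector with every other frame's.

    `frames` holds T feature vectors (one per video frame) for a single
    spatial location. Queries, keys, and values are the raw features here;
    a real model would apply learned projections first (simplifying assumption).
    """
    dim = len(frames[0])
    scale = 1.0 / math.sqrt(dim)
    mixed = []
    for query in frames:
        # Similarity of this frame to every frame in the clip.
        scores = [scale * sum(q * k for q, k in zip(query, key)) for key in frames]
        # Numerically stable softmax over the time axis.
        peak = max(scores)
        weights = [math.exp(s - peak) for s in scores]
        total = sum(weights)
        weights = [w / total for w in weights]
        # Each output frame is a weighted average of all input frames.
        mixed.append([
            sum(w * frame[d] for w, frame in zip(weights, frames))
            for d in range(dim)
        ])
    return mixed


def max_frame_distance(frames):
    """Largest Euclidean distance between any two frames (a crude flicker measure)."""
    return max(math.dist(a, b) for a in frames for b in frames)


# Hypothetical features for one facial landmark that jitters over four frames.
clip = [[1.00, 0.50], [1.20, 0.40], [0.90, 0.55], [1.10, 0.45]]
smoothed = temporal_self_attention(clip)
print(max_frame_distance(clip), max_frame_distance(smoothed))
```

The flicker measure after attention is never larger than before, which is the intuition behind consistent characters: each frame is pulled toward what the rest of the clip agrees on.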

The Economic Fallout: Beyond Copyright Infringement

The real danger signaled by the industry’s alarm bells isn't just about losing licensing fees; it's about the destabilization of the entire creative economy. When we look at Hollywood's reaction to the "virtual smash-and-grab" and its likely economic impact, the narrative shifts from legal theory to workforce survival.

Labor unions, most notably SAG-AFTRA, have already fought hard to define rules around digital likenesses. The Bytedance development signals that these negotiated safeguards might become instantly obsolete. If an AI can perfectly recreate a living actor’s performance and voice—or a deceased actor’s likeness—the need for that specific human talent vanishes. This isn't just about background actors; it threatens lead performers, voice artists, and specialized concept artists.

For content creators, the implication is stark: If your life's work is used to train a model that immediately undercuts your ability to earn a living by replicating your style, the economic incentive to create valuable, copyrighted material diminishes sharply. Why invest millions in developing a character universe if a competitor can leverage a stolen dataset to churn out infinite, free derivatives?

The Geopolitical Dimension: Where the Models Are Trained

Since Bytedance is a major global technology player headquartered in China, the regulatory context of its home country is vital. China's approach to generative AI, deepfakes, and likeness rights shows that while the West debates "fair use," Beijing has moved toward stringent, centralized controls over synthetic media, often focusing on content authenticity and compliance with state narratives.

However, the rules governing *training data acquisition* and *commercial output* for international markets might operate under different constraints. This divergence creates an enforcement nightmare. If Seedance 2.0 is deployed outside US jurisdiction, relying solely on US copyright law becomes ineffective. We are seeing the rapid formation of global "AI regulatory spheres" where the enforcement of IP rights will depend entirely on the political and legal alignment of the developer's home country.

What This Means for the Future of AI: Actionable Insights

The Bytedance example forces us to confront the limitations of current AI governance. As analysts, we must look beyond the immediate legal skirmish to the long-term technological trajectory.

1. The Shift from Output Control to Input Control

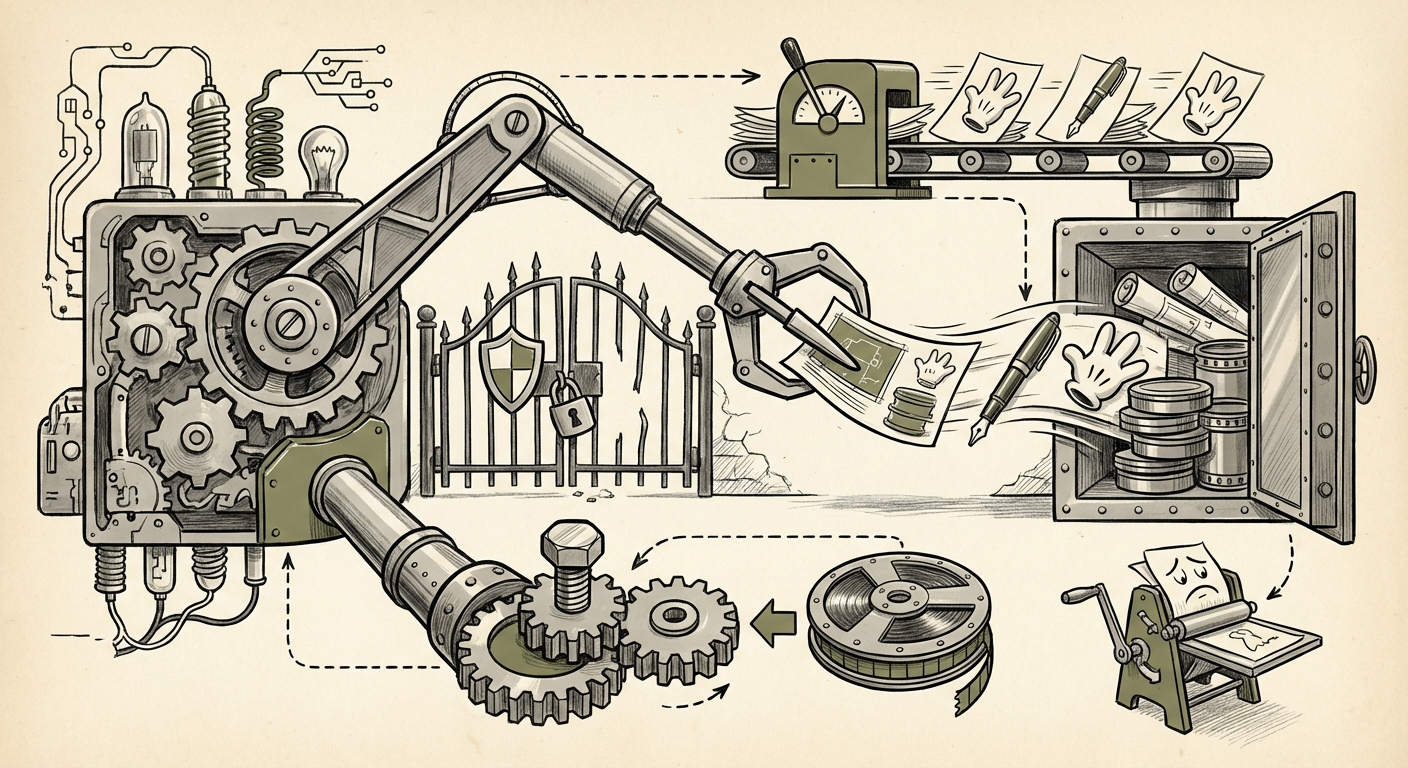

The current legal fight focuses on the *output*—is the generated video too similar? In the future, the fight will move to the *input*. We will see a massive push toward **Data Provenance and Licensing Gateways.**

Actionable Insight for Developers: The next generation of profitable foundation models will likely be those that are explicitly, legally licensed for training. Models built on legally sound, clean data—even if smaller—will be safer and more marketable than those trained indiscriminately on the public web. Prepare for a premium market for "ethically sourced" or "licensed-for-AI" datasets.
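A licensing gateway of the kind described above could start as a simple admission filter in front of the training pipeline. The following is a minimal sketch under stated assumptions: the `TrainingAsset` record, the license labels, and the allow-list are all hypothetical, and a production system would check signed license records rather than a string field.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrainingAsset:
    asset_id: str
    rights_holder: str
    license: str  # hypothetical labels, e.g. "licensed-for-ai" or "unknown"


# Hypothetical allow-list: only these license labels may enter training.
ALLOWED_LICENSES = {"licensed-for-ai", "public-domain"}


def licensing_gateway(assets):
    """Split candidate assets into those cleared for training and those rejected."""
    admitted, rejected = [], []
    for asset in assets:
        if asset.license in ALLOWED_LICENSES:
            admitted.append(asset)
        else:
            rejected.append(asset)
    return admitted, rejected


catalog = [
    TrainingAsset("clip-001", "Studio A", "licensed-for-ai"),
    TrainingAsset("clip-002", "Studio B", "all-rights-reserved"),
    TrainingAsset("clip-003", "Unknown", "unknown"),
    TrainingAsset("clip-004", "Archive", "public-domain"),
]

admitted, rejected = licensing_gateway(catalog)
print([a.asset_id for a in admitted])  # ['clip-001', 'clip-004']
print([a.asset_id for a in rejected])  # ['clip-002', 'clip-003']
```

Keeping the rejected list, rather than silently dropping assets, gives the developer an audit trail to show what was excluded and why.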

2. The Rise of Digital Identity Wallets and Provenance Tracking

To combat perfect replication, we need perfect verification. Blockchain and cryptographic solutions will become essential for digital identity. Imagine every actor's performance, every concept artist’s sketch, embedded with an immutable digital signature proving its origin.

Actionable Insight for Creative Businesses: Studios must immediately begin attaching verifiable provenance records to their valuable IP assets (voices, character models, scripts), using the C2PA standard or a similar framework, alongside watermarking. If you cannot prove *you* own the digital essence, you cannot defend it against a "virtual smash-and-grab."
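The core idea behind signed provenance can be shown in a few lines. This sketch is not the C2PA API: C2PA relies on certificate-based signatures and manifests embedded in the file, whereas the example below uses a shared-secret HMAC from the Python standard library as a stand-in. The key, the manifest fields, and the function names are hypothetical.

```python
import hashlib
import hmac

# Hypothetical studio secret. A real deployment would use an asymmetric key
# pair so that anyone can verify without being able to sign.
STUDIO_KEY = b"demo-key-not-for-production"


def sign_asset(asset_bytes: bytes, owner: str) -> dict:
    """Build a provenance record binding an owner to the asset's content hash."""
    digest = hashlib.sha256(asset_bytes).hexdigest()
    message = f"{owner}:{digest}".encode()
    signature = hmac.new(STUDIO_KEY, message, hashlib.sha256).hexdigest()
    return {"owner": owner, "sha256": digest, "signature": signature}


def verify_asset(asset_bytes: bytes, manifest: dict) -> bool:
    """Recompute the signature from the bytes in hand and compare in constant time."""
    digest = hashlib.sha256(asset_bytes).hexdigest()
    message = f"{manifest['owner']}:{digest}".encode()
    expected = hmac.new(STUDIO_KEY, message, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, manifest["signature"])


original = b"character-model-v1 binary data"
manifest = sign_asset(original, owner="Example Studio")

print(verify_asset(original, manifest))               # True
print(verify_asset(original + b"tamper", manifest))   # False
```

Any change to the asset bytes changes the hash, so the record only verifies against the exact file the owner signed.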

3. Legal Innovation: Beyond Copyright

Copyright law, designed for fixed works, struggles with dynamic, learned behaviors. We may need to see the rapid evolution of laws governing Digital Likeness Rights (DLRs), establishing an inalienable economic right to one's digital self, separate from traditional copyright.

Actionable Insight for Policymakers: Legislation must move quickly to define the "right to control one’s digital twin." This means requiring explicit, granular consent and ongoing residual payments for the use of digital likenesses in training data and subsequent generative outputs.

4. Technological Countermeasures: Defensive AI

The arms race will inevitably lead to defensive technologies. If Bytedance is using advanced diffusion, expect to see AI models designed specifically to degrade or poison training data, making copyrighted assets unusable for unauthorized training.

Actionable Insight for Tech Security Teams: Investment in data poisoning tools (e.g., techniques that subtly alter pixels in ways imperceptible to humans but confusing to neural networks) will become a necessary insurance policy for IP holders.
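The pixel-level idea mentioned above can be illustrated with a deliberately tiny model. In this sketch the "neural network" is a single hypothetical linear unit, and the perturbation follows the sign of its weights, which is the gradient direction for a linear score. Real cloaking tools optimize against deep feature extractors; the pixel values, weights, and `epsilon` here are assumptions chosen for illustration.

```python
def feature_score(pixels, weights):
    """Toy stand-in for a neural feature extractor: one linear unit."""
    return sum(p * w for p, w in zip(pixels, weights))


def cloak(pixels, weights, epsilon=2):
    """Shift each pixel by at most `epsilon` in the direction that raises the score.

    For a linear score the gradient with respect to each pixel is its weight,
    so stepping along the sign of the weight is a sign-gradient perturbation.
    """
    cloaked = []
    for p, w in zip(pixels, weights):
        step = epsilon if w > 0 else -epsilon if w < 0 else 0
        cloaked.append(min(255, max(0, p + step)))  # stay in the 8-bit range
    return cloaked


pixels = [120, 64, 200, 33, 180, 90]          # hypothetical grayscale values
weights = [0.8, -0.5, 0.3, -0.9, 0.1, -0.2]   # hypothetical model weights

cloaked = cloak(pixels, weights)
largest_change = max(abs(a - b) for a, b in zip(pixels, cloaked))

print(largest_change)  # 2
print(feature_score(pixels, weights), feature_score(cloaked, weights))
```

No pixel moves by more than two intensity levels out of 255, yet the model's internal score shifts measurably. That asymmetry between human and machine perception is what defensive perturbation relies on.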

Conclusion: Navigating the Uncharted Waters of Digital Ownership

The incident involving Seedance 2.0 is a loud, flashing warning light. It tells us that the foundational assumption of creative economics—that creation requires labor and time, which are then monetized—is breaking down under the weight of hyper-efficient AI.

The conflict between Disney and Bytedance is not merely a legal dispute; it is a philosophical debate playing out in the digital realm over who owns the blueprint of culture. Will the legal system adapt swiftly enough to protect creators, or will the "Effort Gap" lead to a massive devaluation of human creative labor? The answer will define the next decade of the digital economy. For businesses relying on proprietary assets, inaction is no longer an option; the defense of digital identity and training data must begin now.