DPO vs. PPO: How Alignment, Hardware Wars, and New Architectures Define the Future of LLM Customization

The age of general-purpose Large Language Models (LLMs) is rapidly giving way to the age of customized, aligned, and efficient LLMs. For businesses, researchers, and developers, the difference between a generic model and one tuned for a specific task can mean substantial efficiency gains or missed opportunities. At the heart of this customization push are the training methods used to instill human preferences into these powerful systems.

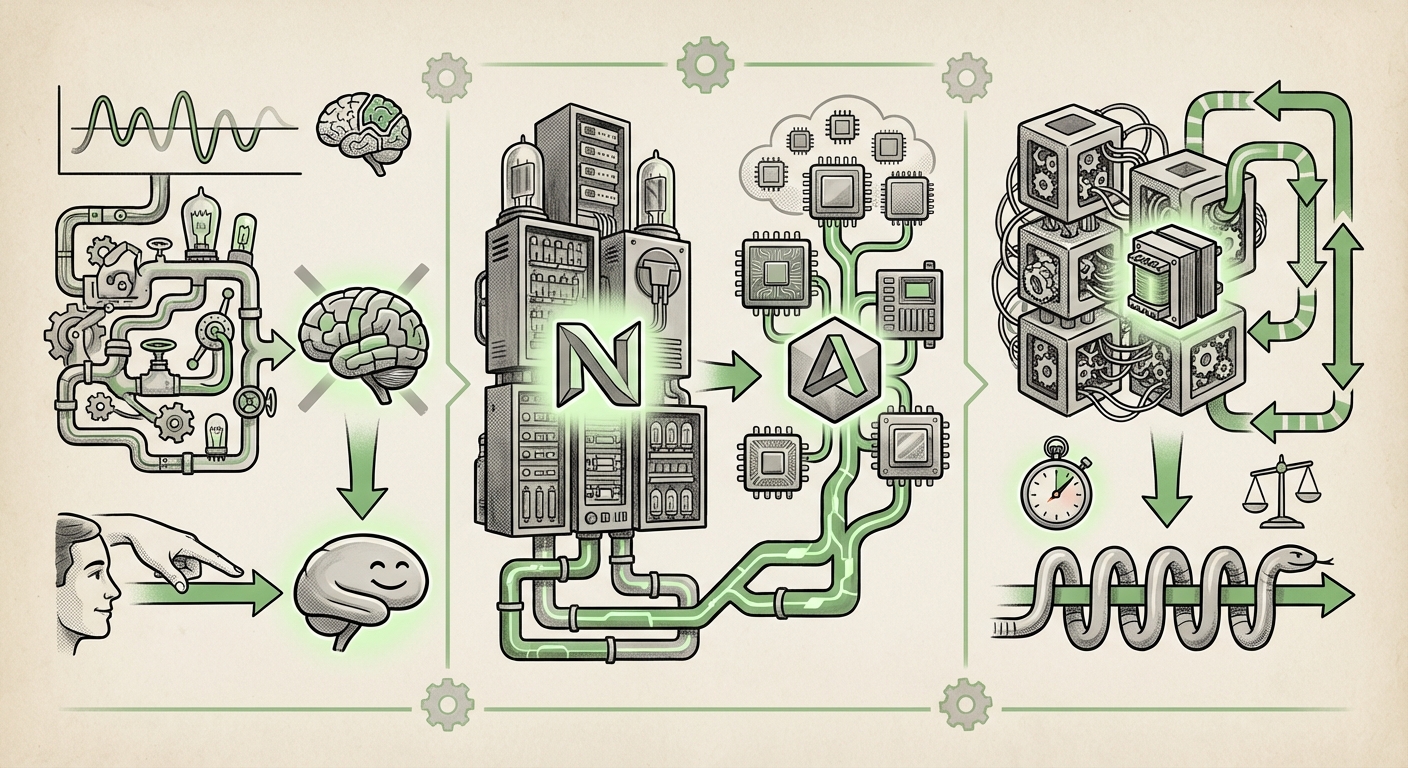

Recent technical discussions have centered on the showdown between two alignment techniques: Proximal Policy Optimization (PPO) and the surging challenger, Direct Preference Optimization (DPO). However, looking deeper, this technical skirmish reveals three overarching trends defining the next era of AI: the pursuit of simpler alignment, the intense hardware diversification battle, and the architectural search for the next Transformer.

Trend 1: The Great Alignment Simplification—DPO Overtakes PPO’s Complexity

To make an LLM truly useful—safe, helpful, and polite—it must learn human preferences. This process is called alignment. Historically, the gold standard has been Reinforcement Learning from Human Feedback (RLHF), which heavily relies on the PPO algorithm.

The PPO Baseline: Powerful but Cumbersome

When models like InstructGPT demonstrated the power of alignment, they did so with RLHF built on PPO. The pipeline first trains a separate "Reward Model" on human rankings (e.g., "Response A is better than Response B"). The main LLM is then treated like an agent in a reinforcement learning environment and updated to maximize the score given by that Reward Model, while a penalty keeps it from drifting too far from its starting point. While effective, this is complex. A typical setup juggles four models (the policy being trained, a frozen reference copy, the reward model, and usually a value model that estimates expected reward) while navigating the notoriously unstable waters of policy gradient optimization. This complexity translates directly to high computational cost and engineering overhead.
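The pieces described above can be sketched in a few lines of plain Python. This is a minimal illustration for a single sample, not the InstructGPT implementation: the function names, the `clip_eps` and `kl_coef` defaults, and the per-sample scalar inputs are all assumptions chosen for readability.

```python
import math


def reward_model_pair_loss(score_chosen: float, score_rejected: float) -> float:
    """Pairwise ranking loss for the reward model: -log(sigmoid(margin))."""
    margin = score_chosen - score_rejected
    # Numerically stable softplus(-margin), which equals -log(sigmoid(margin)).
    return max(-margin, 0.0) + math.log1p(math.exp(-abs(margin)))


def kl_shaped_reward(reward: float, logp_policy: float, logp_ref: float,
                     kl_coef: float = 0.1) -> float:
    """Reward-model score minus a penalty for drifting from the frozen reference."""
    return reward - kl_coef * (logp_policy - logp_ref)


def ppo_clipped_objective(logp_new: float, logp_old: float,
                          advantage: float, clip_eps: float = 0.2) -> float:
    """PPO clipped surrogate objective for one sampled action (to be maximized)."""
    ratio = math.exp(logp_new - logp_old)
    clipped_ratio = min(max(ratio, 1.0 - clip_eps), 1.0 + clip_eps)
    # Taking the minimum removes any incentive to move the ratio outside the clip range.
    return min(ratio * advantage, clipped_ratio * advantage)
```

Even in this toy form, three separate quantities have to be computed and kept in sync (reward scores, reference log-probabilities, and advantages from a value estimate), which hints at where the engineering overhead comes from.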

As the InstructGPT paper from OpenAI details, the initial breakthrough required intricate steps to bridge the gap between statistical language modeling and human-aligned behavior (Source: Training language models to follow instructions with human feedback).

DPO: Directness as a Strategy

DPO flips the script. Instead of training a separate reward model and running an RL loop, DPO uses the human preference data *directly* in a simpler classification-style loss function. Its derivation shows that the optimal policy (the fine-tuned LLM) under the usual KL-constrained objective can be expressed in terms of the preference data and a frozen reference model, so no explicit reward model is needed.
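To make the "classification loss" concrete, here is a minimal sketch of the DPO loss for a single preference pair. It assumes the four inputs are summed token log-probabilities of the chosen and rejected responses under the policy and under the frozen reference model; the function name and the `beta` default are illustrative choices, not taken from any particular library.

```python
import math


def dpo_loss(policy_logp_chosen: float, policy_logp_rejected: float,
             ref_logp_chosen: float, ref_logp_rejected: float,
             beta: float = 0.1) -> float:
    """DPO loss for one (chosen, rejected) pair: -log(sigmoid(beta * margin))."""
    # How much more the policy likes each response than the reference does.
    chosen_logratio = policy_logp_chosen - ref_logp_chosen
    rejected_logratio = policy_logp_rejected - ref_logp_rejected
    logits = beta * (chosen_logratio - rejected_logratio)
    # Numerically stable softplus(-logits), which equals -log(sigmoid(logits)).
    return max(-logits, 0.0) + math.log1p(math.exp(-abs(logits)))


# Policy identical to the reference: the loss is log(2), i.e. a coin flip.
baseline = dpo_loss(-10.0, -12.0, -10.0, -12.0)
# Policy has shifted probability toward the chosen response: the loss drops.
improved = dpo_loss(-8.0, -14.0, -10.0, -12.0)
```

Note that the reference model's log-probabilities still appear in the loss: the reward model and the sampling loop disappear, but a frozen copy of the starting model is still required.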

Why this matters for the future: DPO drastically lowers the technical barrier for alignment. Fewer moving parts mean faster iteration, reduced resource consumption, and greater stability. For small and medium enterprises that cannot afford massive RL research teams, DPO democratizes high-quality fine-tuning. We are seeing a trend where DPO is becoming the default choice for many research labs and open-source initiatives precisely because it cuts training time and complexity.

Trend 2: The Hardware Arms Race Escalates—Diversifying Beyond the GPU Monopoly

Training alignment algorithms, whether PPO or DPO, demands vast amounts of specialized compute. For years, the industry standard has been dominated by NVIDIA GPUs. However, as the demand for LLMs explodes, reliance on a single vendor creates massive supply chain risks and price volatility.

The Rise of the Challenger: Enterprise Readiness

The mention of enterprise-ready hardware like the AMD MI355X in recent guides signals a palpable shift. Major cloud providers and large enterprises are actively pursuing multi-vendor strategies to secure compute capacity and negotiate better pricing. This is not just about saving money; it’s about ensuring deployment continuity.

Hardware analysts are closely tracking benchmarks comparing platforms like the AMD Instinct MI300X against the NVIDIA H100 specifically on LLM tasks. These comparisons move beyond raw FLOPS to examine real-world performance, memory scalability, and, crucially, software ecosystem compatibility. If AMD (or other emerging chip designers) can provide near-parity performance at a competitive price point, the entire economic structure of AI development will change.

This hardware diversification is essential to sustain the computational load that alignment and fine-tuning demand. If DPO reduces the *algorithmic* cost, the hardware race ensures there are enough accessible *physical* resources to run these workloads at scale. The same pressure applies to newer architectures such as Mamba, which deep-dive benchmarks show also place high demands on memory bandwidth.

Practical Implication: IT Directors must now factor in training stack portability. Solutions optimized for one hardware architecture may require significant re-tooling for another, meaning investments in flexible software frameworks are more critical than ever.

Trend 3: The Efficiency Imperative—Scaling Alignment and Seeking Architectural Alternatives

The future of AI is not just about making models better; it's about making them smaller, faster to adapt, and cheaper to run. This imperative applies equally to alignment methods and the underlying model structure.

Quantifying Efficiency in Alignment

The move to DPO isn't just about stability; it’s about resource conservation. PPO-based RLHF requires training a reward model and keeping several models in memory during the RL phase, whereas DPO needs only the policy and a frozen reference model. Like-for-like measurements of the memory difference are worth seeking out before committing to either approach, because the gap depends on model size, precision, and optimizer settings. As a purely hypothetical illustration, if DPO reduced the VRAM required for fine-tuning by 30% compared to PPO, that would translate directly into fewer GPU hours billed or less cloud infrastructure needed.
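A back-of-envelope calculation shows why the model count matters. The sketch below is illustrative arithmetic, not a measurement, and every constant in it is an assumption: all models are the same size, trainable models use mixed-precision Adam at roughly 16 bytes per parameter, frozen models are held in bf16 at 2 bytes per parameter, and activations, generation caches, sharding, and PEFT are ignored.

```python
# Assumed bytes per parameter (illustrative, not measured):
# bf16 weights (2) + bf16 grads (2) + fp32 master copy (4) + two fp32 Adam moments (8)
BYTES_PER_TRAINABLE_PARAM = 16
# bf16 weights only, no gradients or optimizer state
BYTES_PER_FROZEN_PARAM = 2


def state_memory_gb(n_params: float, n_trainable: int, n_frozen: int) -> float:
    """Weights + optimizer state, in GB (1e9 bytes), for same-sized models."""
    bytes_total = n_params * (n_trainable * BYTES_PER_TRAINABLE_PARAM
                              + n_frozen * BYTES_PER_FROZEN_PARAM)
    return bytes_total / 1e9


N_PARAMS = 7e9  # hypothetical 7B-parameter model

# PPO-style RLHF: policy + value model trained; reference + reward model frozen.
ppo_gb = state_memory_gb(N_PARAMS, n_trainable=2, n_frozen=2)  # 252.0
# DPO: policy trained; reference frozen.
dpo_gb = state_memory_gb(N_PARAMS, n_trainable=1, n_frozen=1)  # 126.0

print(f"PPO ~{ppo_gb:.0f} GB, DPO ~{dpo_gb:.0f} GB of model and optimizer state")
```

Under these particular assumptions the model and optimizer state is halved, but that figure comes from the assumptions rather than from a benchmark. Real savings shift with smaller reward or value models, shared backbones, offloading, and adapter-based training.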

This focus on efficiency also pushes forward Parameter-Efficient Fine-Tuning (PEFT) techniques like LoRA and QLoRA. These methods adapt massive models by training only a tiny fraction of the weights: LoRA learns small low-rank update matrices on top of frozen weights, and QLoRA additionally stores the frozen base model in 4-bit precision. When combined with DPO, the result is a highly optimized pipeline: a stable alignment process running on a memory-efficient fine-tuning method.
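The "tiny fraction of the weights" claim is easy to check for a single layer. The following is a minimal pure-Python sketch of a LoRA-style update, assuming a frozen weight matrix `w`, trainable low-rank factors `a` (rank × d_in) and `b` (d_out × rank), and an `alpha / rank` scaling; the helper names are illustrative and not from any library.

```python
def lora_trainable_fraction(d_in: int, d_out: int, rank: int) -> float:
    """Trainable LoRA parameters as a fraction of the full weight matrix."""
    full_params = d_in * d_out
    lora_params = rank * (d_in + d_out)  # A is rank x d_in, B is d_out x rank
    return lora_params / full_params


def matvec(matrix, vector):
    """Plain matrix-vector product on nested lists."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def lora_forward(w, a, b, x, alpha: float):
    """y = W x + (alpha / rank) * B (A x); only A and B would be trained."""
    rank = len(a)
    base = matvec(w, x)
    update = matvec(b, matvec(a, x))
    scale = alpha / rank
    return [y + scale * u for y, u in zip(base, update)]


# A 4096 x 4096 projection with rank 8 trains 1/256 of the layer's weights.
fraction = lora_trainable_fraction(4096, 4096, rank=8)  # 0.00390625

# Tiny worked example: identity base weight, rank-1 adapter.
y = lora_forward(w=[[1.0, 0.0], [0.0, 1.0]],
                 a=[[1.0, 1.0]],
                 b=[[1.0], [0.0]],
                 x=[1.0, 2.0],
                 alpha=2.0)  # [7.0, 2.0]
```

Because the base matrix `w` never receives gradients, it needs no optimizer state, which is where most of the memory saving comes from.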

Beyond the Transformer: The Search for Linear Scaling

The Transformer architecture, while revolutionary, scales poorly with sequence length because the compute and memory cost of self-attention grows quadratically with the number of tokens. The future demands models that can process extremely long contexts (e.g., entire codebases, full legal documents) without computational explosion.

This is why the emergence of alternatives like State Space Models (SSMs), exemplified by Mamba, is so significant. The foundational work on Mamba demonstrates linear scaling with sequence length, a massive theoretical advantage over the Transformer’s quadratic scaling.
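The difference between quadratic and linear scaling can be illustrated with a toy example. The sketch below is not Mamba: it uses a scalar, time-invariant recurrence with fixed parameters `a`, `b`, and `c`, whereas Mamba makes its parameters input-dependent and relies on a hardware-aware scan. It only shows why a recurrent state update costs one step per token while attention compares every token with every other token.

```python
def attention_score_entries(seq_len: int) -> int:
    """Number of pairwise scores full self-attention computes per head."""
    return seq_len * seq_len


def ssm_scan(xs, a: float, b: float, c: float):
    """Toy scalar state space recurrence: h_t = a*h_{t-1} + b*x_t, y_t = c*h_t."""
    h = 0.0
    ys = []
    for x in xs:  # one constant-cost update per token, so O(len(xs)) overall
        h = a * h + b * x
        ys.append(c * h)
    return ys


outputs = ssm_scan([1.0, 1.0, 1.0], a=0.5, b=1.0, c=2.0)  # [2.0, 3.0, 3.5]

# Doubling the context doubles the scan's work but quadruples the attention scores.
short_ctx = attention_score_entries(4096)  # 16_777_216
long_ctx = attention_score_entries(8192)   # 67_108_864
```

The fixed-size state `h` is also why such models can, in principle, process long inputs without a cache that grows with context length.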

If Mamba or similar architectures become mainstream, they will require new ways to integrate human feedback. Can DPO be easily adapted to SSMs? How will the hardware providers mentioned above optimize for these non-Transformer workloads? These are the next frontiers. The future of LLM customization likely involves choosing the right *architecture* first (e.g., Mamba for long context tasks) and then applying the most *efficient alignment* method (likely DPO) to tailor it.

This interconnectedness—better alignment methods demanding better hardware, and new architectures requiring new alignment paradigms—drives the pace of innovation.

Actionable Insights for a Shifting Landscape

For organizations navigating this rapidly evolving field, understanding these converging trends provides a strategic roadmap:

- Prioritize DPO for Immediate Alignment: If your goal is quick, stable, and cost-effective adaptation of existing Transformer models to specific enterprise data or tone, DPO offers the best return on investment right now. Leverage DPO to quickly iterate on preference datasets rather than wrestling with complex PPO reward modeling.

- Future-Proof Your Compute Strategy: Do not commit 100% of your compute budget to a single accelerator vendor. Begin testing workloads on alternative hardware platforms (like AMD Instinct) where performance gains are being aggressively pursued, especially for inference and initial fine-tuning stages. Hardware diversification is a business continuity strategy.

- Investigate PEFT and New Architectures: For cutting-edge R&D, focus resources on Parameter-Efficient Fine-Tuning (PEFT) combined with exploring new model families like Mamba. If your use case involves handling massive sequential data, adopting these non-Transformer models early could yield a significant long-term performance and cost advantage over relying solely on optimized Transformers.

Conclusion: Customization Becomes Accessible and Diversified

The technical debates surrounding DPO versus PPO are more than just academic sparring; they are bellwethers for where AI development is headed. The trend is clear: alignment is getting simpler, compute is getting broader, and architectures are evolving toward efficiency.

The ability to tightly control and customize AI behavior, moving from a "generalist" tool to a "specialist" employee, will be the primary differentiator in the next wave of enterprise AI adoption. By embracing simpler alignment techniques like DPO, investing strategically in diversified hardware ecosystems, and keeping a keen eye on architectural shifts away from quadratic-cost attention, leaders can ensure they are building tomorrow’s powerful, aligned, and economically viable AI applications.