The Great AI Pivot: How MoE, Specialized Silicon, and New Architectures Will Define the Next Decade of Large Language Models

The field of Artificial Intelligence is moving at a blinding pace. Just as the industry began to digest the sheer scale and power of the foundational Transformer model—the engine behind GPT and Llama—the roadmap for the *next* generation is already being drawn. Recent enterprise analyses, such as those detailing the integration of architectures like Mixture of Experts (MoE) alongside specific hardware like the AMD MI355X, show that the focus has shifted decisively from "Can we build it?" to "Can we run it efficiently, affordably, and at scale?"

As an analyst looking beyond the hype cycle, it’s clear that the path forward for LLMs is not just about making models bigger; it’s about making them smarter, sparser, and intrinsically linked to the silicon running them. This article synthesizes three critical threads shaping this pivot: the optimization of current architectures (MoE), the reality of hardware co-design, and the disruptive potential of truly novel model designs.

The Efficiency Revolution: Why Mixture of Experts (MoE) Rules the Near Future

For years, the dominant recipe for better LLMs was adding more parameters: a 100-billion-parameter model generally outperformed a 10-billion-parameter one. This produced "dense" models, huge digital brains in which every parameter takes part in processing every token of every query. That is powerful, but also expensive and slow for everyday tasks.

The Sparsity Advantage: Teaching the Model to Choose

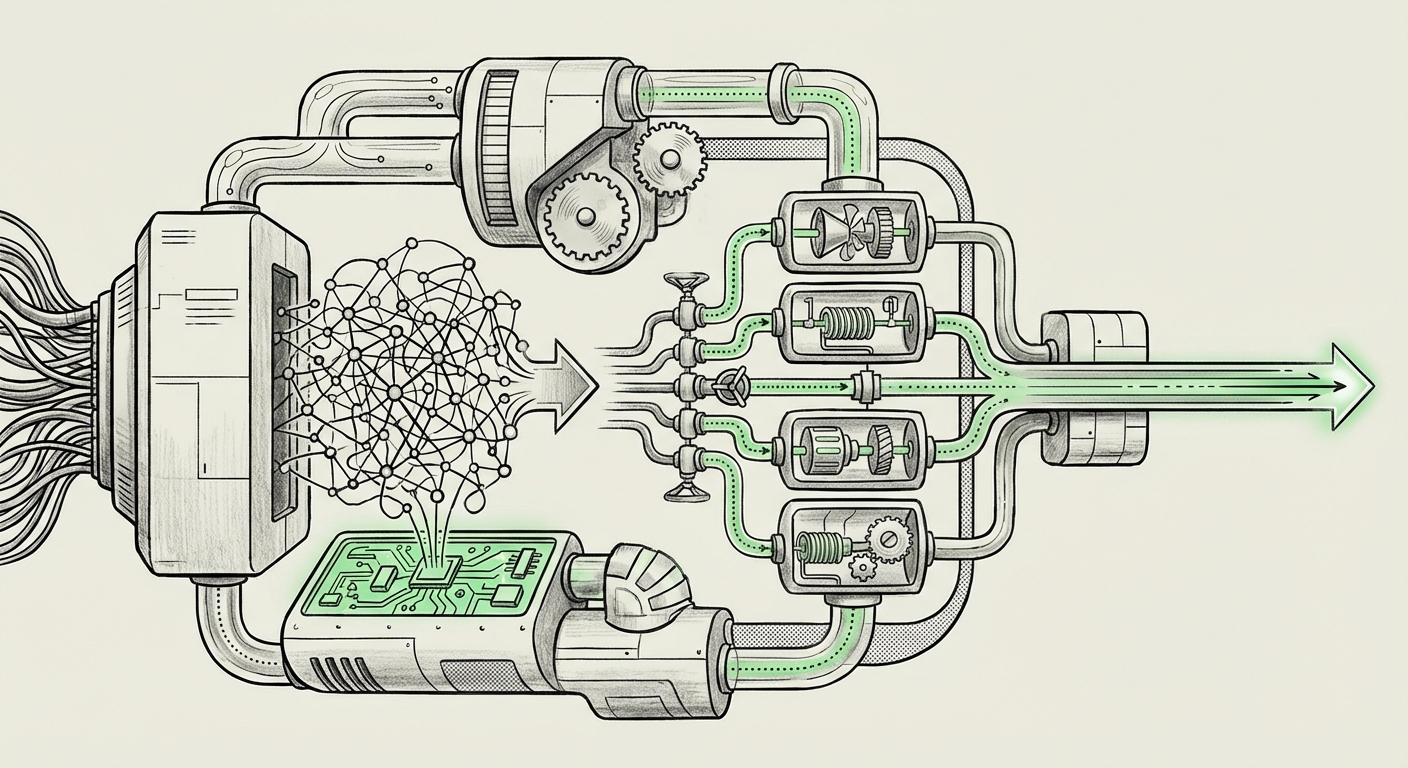

The Mixture of Experts (MoE) architecture flips this script. Imagine a large consulting firm. Instead of having every partner review every single document, the firm has specialized teams (the "Experts"). When a new client comes in, a "Router" decides which 2 or 3 experts are best suited for the job. Only those experts do the heavy lifting.

This is precisely what MoE does. It keeps a vast number of parameters (making the model knowledgeable) but only activates a small fraction of them—often just 10% to 20%—for any given input. This is known as conditional computation. The result?

- Faster Inference: Fewer calculations mean quicker responses, which is vital for real-time applications.

- Cheaper Training: Models can reach a given quality level with less total training compute than a dense model of comparable quality.

- Greater Capacity: We can build models with trillions of parameters without the prohibitive cost of dense equivalents.
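The routing step described above can be sketched in a few lines of plain Python. This is a minimal illustration, not any particular framework's implementation: the helper names (`route_top_k`, `moe_forward`), the toy experts, and the router scores are all assumptions made for the example. In a real model the router scores come from a small learned layer applied to each token.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_logits, k=2):
    """Pick the k highest-scoring experts and renormalize their gate weights."""
    probs = softmax(router_logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]

def moe_forward(x, experts, router_logits, k=2):
    """Weighted sum of the outputs of only the selected experts."""
    return sum(w * experts[i](x) for i, w in route_top_k(router_logits, k))

# Eight toy "experts": expert i simply multiplies its input by (i + 1).
experts = [lambda x, s=s: s * x for s in range(1, 9)]

# Hypothetical router scores for a single token.
logits = [0.1, 2.0, -1.0, 0.3, 1.5, 0.0, -0.5, 0.2]

selected = route_top_k(logits, k=2)
output = moe_forward(3.0, experts, logits, k=2)
print(selected)  # experts 1 and 4 are chosen; the other six never run
print(output)
```

Only two of the eight expert functions are ever called for this token, which is the conditional computation the bullets above rely on.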

The Implementation Hurdles: Load Balancing and Routing

While MoE is mathematically appealing, implementing it in the real world presents challenges. We need sophisticated routing mechanisms that are fair and effective. If one Expert is constantly chosen (a "load imbalance"), the benefit of sparsity disappears because that one Expert becomes a bottleneck, tying up expensive compute resources. Technical deep dives into MoE optimization focus heavily on algorithms that ensure load balancing and prevent routing mistakes. For enterprises, mastering MoE means mastering these routing strategies to maximize hardware utilization.
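One widely used way to discourage load imbalance is an auxiliary loss of the kind introduced with the Switch Transformer, which multiplies the share of tokens each expert receives by the average router probability for that expert. The sketch below assumes top-1 routing and omits the small scaling coefficient that is normally applied before adding the term to the training loss. The function name and sample data are illustrative only.

```python
from collections import Counter

def load_balance_loss(assignments, router_probs, num_experts):
    """Auxiliary balancing term: num_experts * sum_i(f_i * P_i).

    assignments  : chosen expert index for each token (top-1 routing)
    router_probs : for each token, router probabilities over all experts
    """
    n_tokens = len(assignments)
    counts = Counter(assignments)
    # f_i: fraction of tokens dispatched to expert i
    f = [counts.get(i, 0) / n_tokens for i in range(num_experts)]
    # P_i: mean router probability assigned to expert i
    p = [sum(tok[i] for tok in router_probs) / n_tokens
         for i in range(num_experts)]
    return num_experts * sum(fi * pi for fi, pi in zip(f, p))

# Four tokens spread evenly over four experts.
balanced = load_balance_loss(
    [0, 1, 2, 3], [[0.25, 0.25, 0.25, 0.25]] * 4, num_experts=4)

# Four tokens that all collapse onto expert 0.
skewed = load_balance_loss(
    [0, 0, 0, 0], [[0.97, 0.01, 0.01, 0.01]] * 4, num_experts=4)

print(balanced, skewed)
```

The term is smallest when tokens are spread uniformly and grows as routing collapses onto a single expert, so minimizing it nudges the router toward even utilization.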

Actionable Insight for Business: Companies looking to deploy large-scale generative AI now need MLOps pipelines specifically designed for sparse models. The deployment strategy for an MoE model is fundamentally different from a dense one, requiring specialized inference engines.

The Silicon Reality Check: Hardware Dictates Architectural Fate

Architectural innovation cannot happen in a vacuum; it is tethered to the capabilities of the silicon used for training and inference. The mention of enterprise-ready accelerators like the **AMD MI355X** is not merely a product placement; it signifies a crucial shift: AI development is becoming deeply integrated with hardware roadmaps.

The HBM Bottleneck and The Great Memory Race

The performance of modern LLMs is often less about raw computational speed (FLOPs) and more about how fast data can be moved in and out of memory—a concept known as the memory wall. High Bandwidth Memory (HBM) is the critical technology here. The battle between chip makers like NVIDIA and AMD is often fought over HBM capacity and speed.

MoE models exacerbate this issue. Although they perform fewer computations per token during inference, the weights for *all* experts must stay resident in memory, because the router can send any token to any expert. This puts immense pressure on memory capacity and bandwidth. If the hardware cannot feed the selected experts quickly enough, the speed gains from sparsity are lost to waiting.
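A rough back-of-the-envelope calculation makes the pressure concrete. Every figure below is hypothetical (the parameter counts, the expert count, and the 2-byte weight format are assumptions chosen for round numbers), and the sketch counts weights only, ignoring activations and the key-value cache.

```python
def weight_memory_gb(num_params, bytes_per_param=2):
    """Memory needed to hold the weights, in gigabytes (10**9 bytes)."""
    return num_params * bytes_per_param / 1e9

# Hypothetical MoE model, parameters summed over all layers.
shared_params = 10e9       # attention, embeddings, and other always-on weights
params_per_expert = 5e9    # weights belonging to one expert
num_experts = 16
active_experts = 2         # experts selected per token

total_params = shared_params + num_experts * params_per_expert
active_params = shared_params + active_experts * params_per_expert

resident_gb = weight_memory_gb(total_params)   # must sit in accelerator memory
active_gb = weight_memory_gb(active_params)    # actually read for one token
active_fraction = active_params / total_params

print(f"resident: {resident_gb:.0f} GB, touched per token: {active_gb:.0f} GB")
print(f"active fraction: {active_fraction:.0%}")
```

Under these assumptions the model computes like a 20-billion-parameter network but occupies memory like a 90-billion-parameter one, which is why memory capacity often decides how many accelerators an MoE deployment needs.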

The Co-Design Imperative

This reality forces a strong trend toward hardware-software co-design. Hardware vendors are designing chips optimized not just for matrix multiplication (the core of neural nets) but specifically for sparse operations and efficient communication between specialized processing units. Similarly, software teams must design their models (like MoE routers) to align perfectly with the memory layout and instruction sets of the target hardware.

For enterprise strategists, this means vendor lock-in is becoming more complex. Choosing a hardware platform like AMD or NVIDIA is no longer just a cost decision; it’s a commitment to a specific set of software libraries and architectural optimizations. A model optimized for one accelerator’s memory hierarchy might perform poorly on another.

The Next Horizon: Architectures Challenging the Transformer’s Reign

While MoE refines the Transformer, the next major architectural breakthrough aims to replace it entirely for specific tasks. The Transformer's central innovation, the self-attention mechanism, allows it to relate any token in a sequence to any other token. This is powerful, but its cost scales quadratically ($O(n^2)$) with the sequence length ($n$). For context windows measured in millions of tokens (required for tasks like analyzing entire legal dossiers or massive codebases), quadratic scaling quickly becomes prohibitively expensive.

Enter State Space Models (SSMs) and Mamba

The most exciting architectural development challenging the Transformer is the emergence of State Space Models (SSMs), popularized recently by the **Mamba** architecture. Mamba fundamentally changes how context is processed. Instead of looking backward at every previous token via self-attention, Mamba summarizes the past into a compact "state."
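The idea of folding the past into a fixed-size state can be illustrated with the simplest possible linear recurrence. This is a deliberately reduced sketch: it uses a single scalar state and fixed coefficients `a`, `b`, and `c` (all assumed values), whereas Mamba uses a vector state, makes its parameters depend on the input, and relies on a hardware-aware scan rather than a Python loop.

```python
def ssm_scan(inputs, a=0.9, b=0.1, c=1.0):
    """Run h_t = a * h_{t-1} + b * x_t and emit y_t = c * h_t.

    The state h is a single number, so memory use does not grow
    with the length of the input sequence.
    """
    h = 0.0
    outputs = []
    for x in inputs:
        h = a * h + b * x      # fold the new token into the running state
        outputs.append(c * h)  # read the output from the state alone
    return outputs

# A single impulse followed by silence: the state remembers it, then decays.
ys = ssm_scan([1.0, 0.0, 0.0, 0.0])
print(ys)
```

Each step touches only the current input and the previous state, never the full history, which is where the favorable scaling comes from.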

This shift yields linear scaling ($O(n)$) with sequence length. Put simply: doubling the length of the text you want the AI to read roughly doubles the time it takes, rather than quadrupling the cost of the attention computation (as with standard Transformers).
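The difference is easy to check with simple counting. The sketch below counts abstract units of work, token pairs for full self-attention and state updates for a recurrent scan, and ignores constant factors, hidden dimensions, and hardware effects. The function names are illustrative.

```python
def attention_pair_count(n):
    """Token pairs scored by full self-attention over n tokens."""
    return n * n

def recurrent_step_count(n):
    """State updates performed by a recurrent scan over n tokens."""
    return n

for n in (1_000, 2_000, 1_000_000):
    print(n, attention_pair_count(n), recurrent_step_count(n))

# Growth in work when the sequence length doubles from 1,000 to 2,000 tokens.
quadratic_growth = attention_pair_count(2_000) / attention_pair_count(1_000)
linear_growth = recurrent_step_count(2_000) / recurrent_step_count(1_000)
print(quadratic_growth, linear_growth)
```

At one million tokens the pairwise count reaches $10^{12}$ while the scan performs $10^{6}$ updates, a gap of six orders of magnitude before any constant factors are considered.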

What This Means for the Future:

- Truly Long Context: SSMs unlock practical applications for handling massive inputs efficiently, moving AI from paragraph-level understanding to document-level or even book-level comprehension without exorbitant cost.

- Speed Differential: Where context length dominates the cost, Mamba-style models can be substantially faster than their Transformer counterparts, most clearly at inference, where each new token needs only a fixed-size state update rather than attention over the entire history.

While Mamba is rapidly evolving and still faces integration challenges (especially regarding parallel training efficiency compared to established Transformer frameworks), it represents the most credible threat to the Transformer's dominance in the long term. The future AI ecosystem will likely be hybrid: Transformers for high-detail, short-to-medium context tasks, and SSMs for long-range analysis and sequential data processing.

Practical Implications for Enterprise Strategy and Deployment

Synthesizing these three trends—architectural refinement (MoE), hardware reality (Silicon Co-Design), and architectural disruption (SSMs)—reveals a clear strategic roadmap for any organization leveraging LLMs.

1. Optimize for Inference Cost First

Training a large model is a massive up-front cost, but inference, the cost of actually *using* the model every day, is the recurring operational expense. The widespread adoption of MoE signals that the industry values deployment efficiency. Businesses must prioritize inference optimization: quantized models, efficient routing mechanisms, and non-Transformer architectures where context length warrants it.
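As one example of what quantization involves, here is a minimal sketch of symmetric 8-bit quantization applied to a handful of weights. It assumes a single scale for the whole list and simple round-to-nearest. Production schemes typically use per-channel or per-group scales and calibration data, and the sample weights are made up.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    largest = max(abs(w) for w in weights)
    scale = largest / 127 if largest > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale

def dequantize(q, scale):
    """Recover approximate float weights from the stored integers."""
    return [v * scale for v in q]

weights = [0.42, -1.27, 0.05, 0.9]   # hypothetical 16-bit or 32-bit weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print(q, scale)
print(max_error)
```

Storing one byte per weight instead of two or four cuts both the memory footprint and the bandwidth needed per token, at the price of a rounding error of at most half the scale per weight.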

2. Diversify the Compute Stack

Reliance on a single hardware vendor for cutting-edge AI is increasingly risky given the rapid pace of innovation on both the NVIDIA and AMD roadmaps. Enterprises should build flexibility into their deployment stacks. This requires ensuring that core model frameworks support abstraction layers that allow workloads to be shifted based on performance benchmarks, pricing, and specific accelerator features (like optimized HBM access for MoE models).
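One way to keep that flexibility concrete is to record benchmark results per backend and let a small selection function, rather than habit, pick the deployment target. The sketch below is purely illustrative: the backend labels, throughput figures, prices, and memory sizes are invented placeholders, not measurements of any real accelerator.

```python
from dataclasses import dataclass

@dataclass
class BenchmarkResult:
    backend: str              # hypothetical label for an accelerator type
    tokens_per_second: float  # measured throughput for the target model
    dollars_per_hour: float   # rental or amortized cost
    hbm_gb: float             # memory available for weights

    @property
    def dollars_per_million_tokens(self):
        tokens_per_hour = self.tokens_per_second * 3600
        return self.dollars_per_hour / tokens_per_hour * 1e6

def pick_backend(results, required_hbm_gb):
    """Cheapest backend per token among those with enough memory."""
    fits = [r for r in results if r.hbm_gb >= required_hbm_gb]
    if not fits:
        raise ValueError("no backend has enough memory for this model")
    return min(fits, key=lambda r: r.dollars_per_million_tokens)

# Placeholder numbers for three imaginary accelerators.
results = [
    BenchmarkResult("accelerator_a", 2400.0, 4.0, 192.0),
    BenchmarkResult("accelerator_b", 3000.0, 6.0, 288.0),
    BenchmarkResult("accelerator_c", 1500.0, 2.0, 80.0),
]

choice = pick_backend(results, required_hbm_gb=180.0)
print(choice.backend, round(choice.dollars_per_million_tokens, 3))
```

The memory filter runs first because an MoE model that does not fit cannot be served at any price. Cost per token then decides among the backends that remain.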

3. Prepare for Architectural Shifts

The development roadmap for the next 18-24 months will likely involve heavy experimentation with SSMs. If Mamba or its successors prove capable of matching Transformer quality on core reasoning tasks while offering superior context scaling, companies relying solely on dense or standard Transformer MoE systems may find themselves technologically outpaced.

This requires MLOps teams to maintain architectural literacy. They must understand the difference between quadratic and linear scaling and be ready to prototype workloads on new model families that arrive optimized for different hardware targets.

Conclusion: The Age of Intelligent Specialization

We are moving out of the era of brute-force scaling and entering the age of intelligent specialization. The massive, general-purpose LLMs of yesterday are being carved up into highly efficient, task-specific machinery:

- The Transformer + MoE hybrid is the workhorse for high-quality, manageable near-term deployment, demanding hardware that balances compute with high memory bandwidth.

- The focus on specific silicon (like the MI355X) underscores that hardware and software must evolve together; the chip defines the possible speed, and the architecture defines the possible capability.

- The looming presence of SSMs suggests that the next major leap in capability won't be iteration, but revolution, solving the context length barrier that currently constrains the most ambitious AI applications.

For any organization serious about integrating AI deeply into its operations, ignoring these underlying technical shifts is no longer an option. Success will belong to those who can navigate the trade-offs between architectural elegance, silicon reality, and the next wave of computational breakthroughs.