Beyond Token Limits: Why Emoji-Driven AI Memory Compression is the Next Big Leap for Autonomous Agents

The world of Artificial Intelligence is currently trapped in a paradox of scale. Models are getting bigger, their capabilities are expanding exponentially, but their ability to reliably remember context—especially over long interactions—remains fundamentally constrained. We are witnessing an arms race to stuff more data into the "context window," the AI's short-term working memory. But what if the solution isn't just adding more space, but teaching the AI how to *forget* and *summarize* like a human?

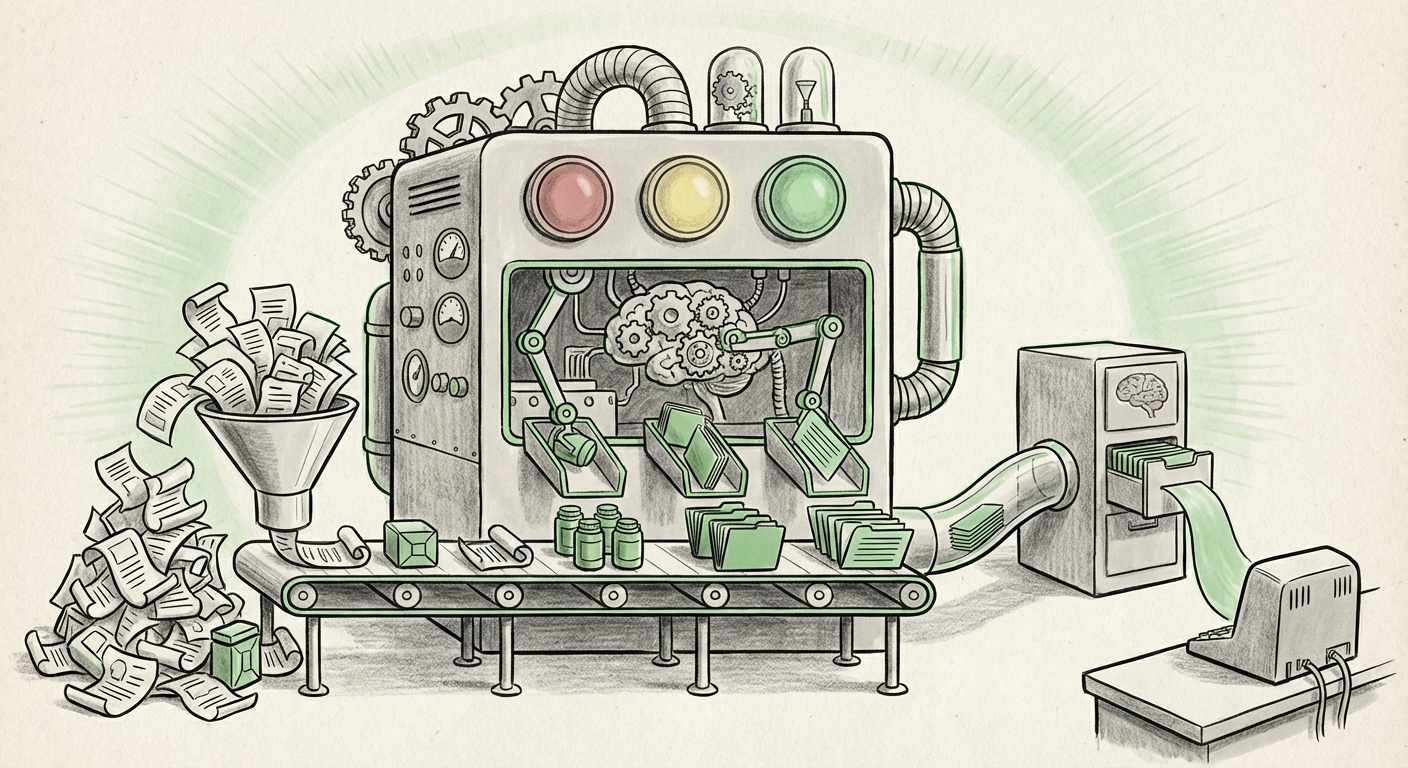

This challenge has just seen a fascinating, open-source breakthrough: the introduction of Mastra. This framework compresses lengthy AI agent conversations into dense, meaningful observations, and it has scored highly on the LongMemEval benchmark. Its secret sauce? Using traffic light emojis (Green, Yellow, Red) to prioritize memory fragments based on perceived significance, mimicking the way human brains tag memories for later retrieval.

This seemingly simple, emoji-based compression is not just a clever trick; it signals a fundamental shift in how we architect long-term intelligence for autonomous systems. To grasp its significance, we must look beyond the novelty and explore the surrounding technological trends it intersects.

The Core Problem: Context vs. Memory

Large Language Models (LLMs) operate with a short-term memory called a context window. Think of it as a whiteboard: everything written on it is instantly available. Claude 3.5 Sonnet offers a 200,000-token context window and Gemini 1.5 Pro reaches up to 2 million tokens (enough to hold several novels), but relying solely on this has severe drawbacks. It’s expensive to process, it increases latency, and research suggests that models often struggle to find crucial information buried deep within these massive contexts, the "lost in the middle" phenomenon. The future requires Long-Term Memory (LTM), where experiences are stored efficiently and retrieved selectively, much like human recall.

The Significance of Mastra’s Approach: Salience Over Storage

Mastra is positioning itself as a framework for achieving true episodic memory for AI agents. Instead of storing every sentence verbatim, it distills conversations into key takeaways, prioritizing them with visual cues.

- 🔴 Red (Critical/Urgent): Information that directly contradicts a previous state, indicates a failure, or requires immediate high-priority recall.

- 🟡 Yellow (Important/Actionable): Key decisions, preferences stated, or milestones achieved that require moderate recall priority.

- 🟢 Green (Contextual/Background): General chat, pleasantries, or lower-stakes background information that can be summarized sparsely.
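The three tiers above can be pictured as a small data structure. The following is a minimal sketch only: the names `Priority`, `Observation`, and `by_priority` are hypothetical and are not taken from Mastra's code, and the numeric ordering of the levels is an assumption made for illustration.

```python
from dataclasses import dataclass
from enum import IntEnum


class Priority(IntEnum):
    """Hypothetical priority levels; a higher value means recall first."""
    GREEN = 1   # contextual / background
    YELLOW = 2  # important / actionable
    RED = 3     # critical / urgent


EMOJI = {Priority.RED: "🔴", Priority.YELLOW: "🟡", Priority.GREEN: "🟢"}


@dataclass(frozen=True)
class Observation:
    priority: Priority
    text: str

    def render(self) -> str:
        """Format the observation as one emoji-tagged memory line."""
        return f"{EMOJI[self.priority]} {self.text}"


def by_priority(observations: list[Observation]) -> list[Observation]:
    """Return observations with the most critical first (stable within a level)."""
    return sorted(observations, key=lambda o: o.priority, reverse=True)


memory = [
    Observation(Priority.GREEN, "User exchanged greetings and mentioned the weather."),
    Observation(Priority.RED, "Deploy failed: database migration was rejected."),
    Observation(Priority.YELLOW, "User prefers TypeScript for new services."),
]

for obs in by_priority(memory):
    print(obs.render())
```

Keeping the tag as an explicit field, rather than burying it in free text, is what makes later filtering and ordering straightforward.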

This categorization forces the AI to create a highly structured, lossy compression. Why does this matter?

Connecting Engineering to Cognition

Looking at research on how human episodic memory compresses experience, a pattern becomes clear: the most robust biological memory systems prioritize information based on emotional salience, novelty, and utility. We don't remember the exact wording of every conversation, but we vividly recall arguments (Red) or important agreements (Yellow).

Mastra’s emoji tagging is an accessible, immediate analogue to this cognitive principle. By forcing compression based on synthesized "significance," the resulting memory store is small, fast to index, and directly relevant when the agent needs to recall *how* to act next. This moves AI agents from being reactive transcriptionists to proactive entities with reliable past experience.
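One way to see why a priority-tagged store is cheap to use at recall time is a greedy selection under a token budget. This is a sketch under stated assumptions, not a description of how Mastra retrieves memories: `estimate_tokens` is a crude word count standing in for a real tokenizer, `select_for_recall` is a hypothetical helper, and priorities are encoded as plain integers (3 = red, 2 = yellow, 1 = green).

```python
def estimate_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer: count whitespace-separated words."""
    return len(text.split())


def select_for_recall(observations, budget):
    """Greedily keep the highest-priority observations that fit the budget.

    observations: list of (priority, text) pairs, where a larger priority
    number means more important (3 = red, 2 = yellow, 1 = green).
    """
    chosen = []
    remaining = budget
    for priority, text in sorted(observations, key=lambda o: o[0], reverse=True):
        cost = estimate_tokens(text)
        if cost <= remaining:
            chosen.append((priority, text))
            remaining -= cost
    return chosen


observations = [
    (1, "User chatted about the weekend"),
    (3, "Payment API key was revoked"),
    (2, "User wants weekly status reports"),
]

# With room for only ten "tokens", the green item is the one left out.
recalled = select_for_recall(observations, budget=10)
for priority, text in recalled:
    print(priority, text)
```

A greedy pass like this is not optimal in general, but it makes the trade-off visible: when space runs out, background detail is dropped first.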

Validating the Leap: Benchmarks and Limitations

Mastra achieved a new high score on the LongMemEval benchmark. But what is this benchmark, and how reliable is its assessment?

For developers and researchers, understanding the benchmark’s limitations is crucial. If LongMemEval rewards perfect factual replay over synthesis, Mastra's compression might introduce subtle, yet catastrophic, errors in nuanced reasoning. However, if the benchmark successfully tests relational recall—for instance, asking the agent to integrate a low-priority detail from the start of a long session with a high-priority constraint from the end—then Mastra’s explicit prioritization system provides a significant advantage over simple vector database retrieval or raw context expansion.
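The relational-recall scenario described above can be made concrete with a toy check. This is a hypothetical illustration, not LongMemEval's actual format or scoring: `Fact`, `answerable`, and the two compression policies are invented here, and the only thing tested is whether every fact a question needs survived compression.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Fact:
    fact_id: str
    priority: str  # "red", "yellow", or "green"
    turn: int      # position in the session where the fact appeared


def answerable(required_ids: set[str], retained: list[Fact]) -> bool:
    """A question is answerable only if every fact it needs survived compression."""
    return required_ids <= {f.fact_id for f in retained}


session = [
    Fact("timezone", "green", turn=2),      # low-priority detail, early
    Fact("small_talk", "green", turn=300),
    Fact("deadline", "red", turn=480),      # high-priority constraint, late
]

# Two hypothetical compression policies.
keep_all_tagged = session
drop_green = [f for f in session if f.priority != "green"]

# The question needs both the early detail and the late constraint.
question_needs = {"timezone", "deadline"}
print(answerable(question_needs, keep_all_tagged))
print(answerable(question_needs, drop_green))
```

The second policy fails the question even though it kept the red item, which is the kind of error a benchmark focused on single-fact retrieval would not surface.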

The industry needs robust evaluation methods that test synthesis and reasoning over massive timelines, not just needle-in-a-haystack retrieval. Mastra’s success pushes the community to develop benchmarks that truly test the quality of *compressed, actionable memory*.

The Ecosystem Shift: Open Source and Agent Infrastructure

Mastra’s status as an open-source framework for persistent agent memory places it directly in the path of the rapidly evolving ecosystem of AI tooling. The infrastructure supporting autonomous agents is segmenting:

- The Core Model (LLM): The brain that processes language.

- The Tools: APIs or functions the agent can call (e.g., searching the web, running code).

- The Memory Layer: Where context and history reside.

Currently, the Memory Layer is often handled by specialized vector databases (like Pinecone or Weaviate) combined with Retrieval-Augmented Generation (RAG). These systems are fantastic at retrieving documents, but they often dump retrieved text wholesale into the context window. Mastra suggests an entirely different architecture: one where the memory system pre-processes and synthesizes the history before it ever hits the LLM.
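The architectural difference can be sketched as a function that compresses history before the prompt is assembled. Everything here is an assumption for illustration: `build_prompt` and `toy_compress` are hypothetical names, and the keyword filter is only a placeholder for the model-driven summarization a real memory layer would perform.

```python
from typing import Callable


def build_prompt(history: list[str], user_message: str,
                 compress: Callable[[list[str]], list[str]]) -> str:
    """Compress the history first, then assemble the text sent to the model."""
    memory_lines = compress(history)
    memory_block = "\n".join(memory_lines)
    return f"Memory:\n{memory_block}\n\nUser: {user_message}"


def toy_compress(history: list[str]) -> list[str]:
    """Placeholder compressor: a real system would call a model here.

    It keeps only turns that mention a failure and tags them red, which is
    far cruder than any real summarizer.
    """
    return [f"🔴 {turn}" for turn in history if "failed" in turn.lower()]


history = [
    "Hi there, how is your day going?",
    "The nightly build failed on the linker step.",
    "Thanks, talk soon.",
]

prompt = build_prompt(history, "What broke last night?", toy_compress)
print(prompt)
```

Passing the compressor in as a parameter keeps the memory layer swappable, so the same prompt assembly works whether the history is summarized by rules, by a model, or not at all.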

Because it is open-source, Mastra invites rapid iteration. Developers can inspect the compression logic, fork the prioritization schema, or integrate it directly into existing agent orchestration tools (like LangChain or AutoGen). This level of transparency accelerates the development of production-ready, complex agents that need reliable state management across days or weeks of interaction.

The Inevitable Endpoint: Escaping the Context Cage

The drive behind Mastra is fundamentally an economic and technical reaction to the limitations of fixed context windows. We can’t simply keep increasing context size forever. In a standard transformer, the cost of self-attention grows quadratically with context length, making real-time, million-token conversations prohibitive for most commercial applications.
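A quick back-of-the-envelope calculation shows how steep quadratic growth is. The sketch below assumes plain full self-attention and counts only pairwise token interactions, ignoring constant factors, the linear-cost parts of the model, and any attention optimizations; the context sizes of 100,000 and 1,000 tokens are illustrative values.

```python
def relative_attention_cost(context_tokens: int) -> int:
    """Pairwise token interactions in full self-attention, up to a constant factor."""
    return context_tokens ** 2


full = relative_attention_cost(100_000)
compressed = relative_attention_cost(1_000)

# Shrinking the context 100x shrinks this term 100 * 100 = 10,000x.
print(full // compressed)
```

Real serving costs do not fall by the full factor, since other parts of inference scale linearly, but the attention term alone explains why shorter contexts pay off disproportionately.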

Compression changes the equation entirely. If Mastra can effectively reduce 100,000 tokens of dense technical dialogue into 1,000 tokens of high-signal, emoji-tagged memory, the agent can carry the context that matters at a small fraction of the cost and latency. Because the compression is lossy, the goal is not a perfect record but a reliable one. This isn't just about saving money; it’s about enabling AI applications that were previously impossible:

- Long-Horizon Projects: AI assistants managing multi-week software development cycles or complex legal discovery.

- Continuous Personalization: An AI tutor that remembers every single learning stumble a student had over a semester, not just the last few hours.

- Robust Error Recovery: Agents that can instantly recall the critical step that caused a failure days prior, allowing immediate, context-aware correction.

Practical Implications: What This Means for Business and Society

For both businesses building AI products and societies grappling with autonomous systems, memory architecture is paramount.

For Developers and Engineers (The Technical Audience):

Actionable Insight: Start integrating modular memory frameworks now. Do not rely on prompt engineering to manage history beyond a few thousand tokens. Mastra, or frameworks inspired by it, should be evaluated as the "Summary/Schema Generator" layer that sits between the interaction and the final LLM call. The ability to tag memories with explicit priority (Green/Yellow/Red) allows for fine-grained control over retrieval during execution, offering far greater interpretability than opaque vector similarity scores.

For Business Leaders and Product Managers (The Strategic Audience):

The Shift to Persistence: The era of stateless AI bots is ending. Customers expect continuity. If your customer service AI must ask the same qualifying questions every time a user returns, you are using outdated technology. Mastra’s results suggest that efficient, human-like memory compression is a viable path to truly persistent, value-adding AI agents. Investing in frameworks that manage agent state and long-term memory will become a competitive differentiator, reducing operational costs associated with redundant prompting and improving customer satisfaction through genuine recall.

Societal Implications: The Trust Factor

If AI agents are going to become integrated deeply into personal finance, healthcare, or education, we must trust their consistency. An agent that "forgets" a critical safety instruction (a potential Red tag failure) is dangerous. Conversely, an agent that drowns you in irrelevant historical detail (a failure of Green tag compression) breeds frustration. Techniques like Mastra’s, which explicitly prioritize what matters most, are crucial steps toward building trustworthy AI systems where critical information is far less likely to be lost and background noise is intelligently filtered.

Conclusion: The Dawn of Economical Intelligence

Mastra’s open-source project, leveraging the almost poetic simplicity of traffic light emojis, throws down a gauntlet to the industry. It suggests that the breakthrough in creating truly autonomous, long-lived AI agents lies not just in model size or raw token capacity, but in developing smarter, biologically inspired methods for information distillation and prioritization.

We are moving away from simply making LLMs bigger, toward making them smarter rememberers. By modeling human cognitive shortcuts—tagging, summarizing, and prioritizing based on salience—we can unlock persistent, cost-effective AI intelligence that retains what is crucial and discards the chaff. The road to AGI is paved not just with massive data centers, but with efficient, context-aware memory architectures like the one Mastra is pioneering.