Beyond the Context Window: How Emoji-Driven Memory Compression is Redefining AI Agents

The current generation of Large Language Models (LLMs) has demonstrated remarkable fluency, but its effectiveness is often capped by a crucial technical limitation: the context window. Think of the context window as the LLM’s short-term memory. If a conversation or task exceeds this window, the earliest details have to be dropped or summarized, and the model effectively "forgets" them. For autonomous AI agents that need to manage long projects, maintain complex personas, or interact over weeks, this limitation is a hard ceiling on capability unless memory is handled outside the window.

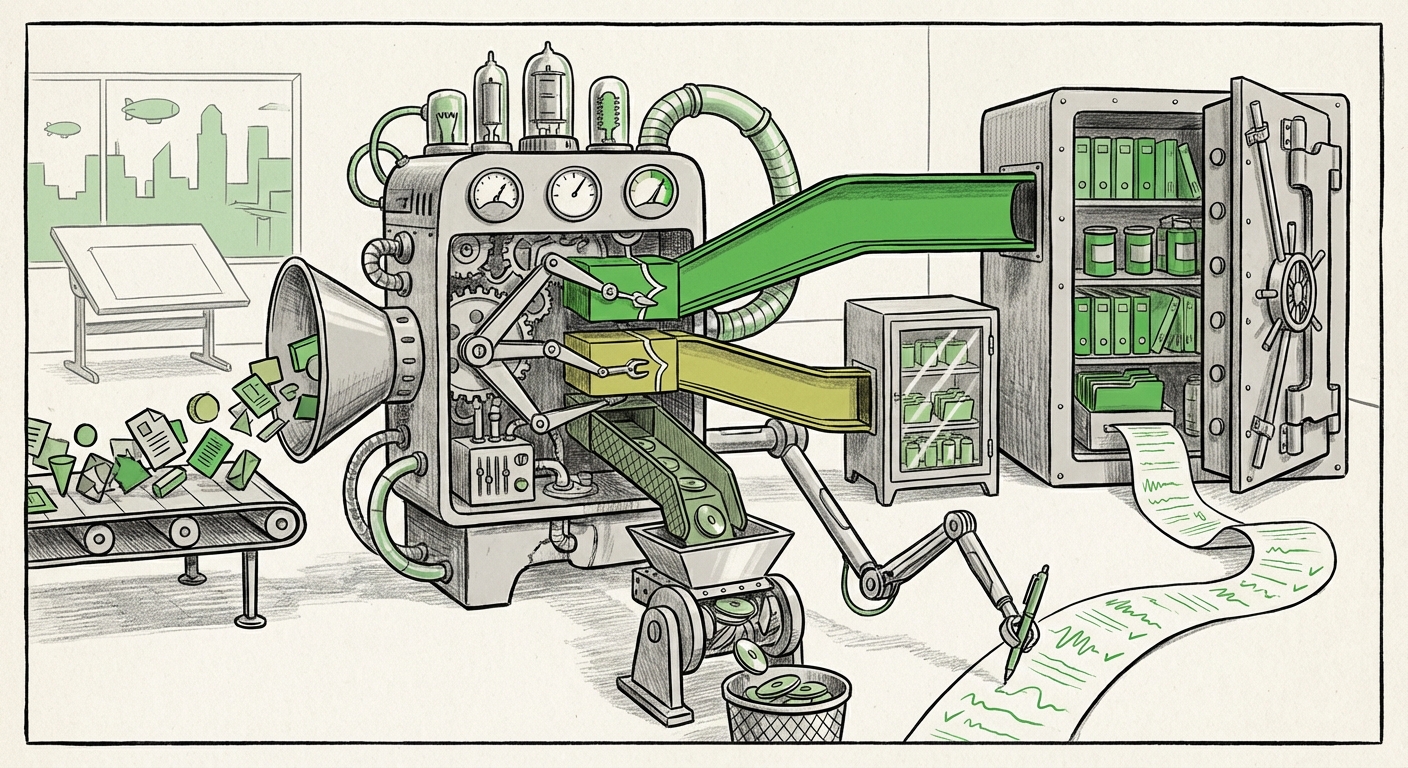

Enter Mastra, an open-source TypeScript framework for building AI agents that has been drawing attention across the AI community. Rather than waiting for ever-larger context windows, Mastra tries to make smarter use of the window it has. It compresses entire streams of agent interactions into "dense observations" prioritized with familiar traffic-light emojis (Green, Yellow, Red). In doing so, Mastra offers a compelling glimpse into the future of stateful, long-term AI memory. The approach suggests that efficiency and human intuition might matter more than brute-force token expansion.

The Bottleneck: Why Context Windows are Not Enough

For years, the primary race in LLM development involved increasing the context window size, moving from 4K tokens to 128K, 200K, or even larger proprietary windows. While impressive, this approach faces diminishing returns and steep computational costs. In a standard transformer, self-attention compute grows quadratically with sequence length and the key-value cache grows linearly. Processing extremely long contexts therefore demands far more GPU memory and processing time, which makes deployment expensive and slow.
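The growth is steep but polynomial, not exponential. A back-of-the-envelope sketch makes this concrete. It assumes standard dense self-attention, where every token attends to every other token, so each layer and head computes an n × n score matrix. It also assumes the key-value cache stores one key and one value vector per token. Sparse or otherwise optimized attention variants change these figures; the sketch covers only the dense baseline.

```typescript
// Back-of-the-envelope scaling for standard dense self-attention.
// Assumptions: per layer and head, the attention score matrix is n x n,
// and the key-value (KV) cache holds one key and one value vector per token.

function attentionScores(n: number): number {
  return n * n; // quadratic in sequence length
}

function kvCacheVectors(n: number): number {
  return 2 * n; // linear: one key + one value per token
}

function growth(fromTokens: number, toTokens: number) {
  return {
    tokenRatio: toTokens / fromTokens,
    attentionRatio: attentionScores(toTokens) / attentionScores(fromTokens),
    kvCacheRatio: kvCacheVectors(toTokens) / kvCacheVectors(fromTokens),
  };
}

// From a 4K window (4,096 tokens) to a 128K window (131,072 tokens).
const g = growth(4096, 131072);
console.log(g); // { tokenRatio: 32, attentionRatio: 1024, kvCacheRatio: 32 }
```

Under these assumptions, a window 32 times longer means 1,024 times as many attention scores and 32 times the cache memory.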

Moreover, sheer size does not equal intelligence. A 100,000-token context window is of limited use if the model cannot reliably pinpoint the one critical fact buried somewhere inside it. This is where memory augmentation systems come in. The industry standard has largely been Retrieval-Augmented Generation (RAG), in which an external database stores information and the system retrieves relevant chunks at runtime.
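The retrieval step of a basic RAG pipeline can be sketched as ranking stored chunks by cosine similarity to a query embedding and returning the top k. The tiny hand-written three-dimensional vectors below stand in for real embeddings, an assumption made purely for illustration. Each hit returns its whole chunk, however little of that chunk is relevant.

```typescript
interface Chunk {
  id: string;
  text: string;
  embedding: number[]; // toy vectors here; real systems use model embeddings
}

function cosine(a: number[], b: number[]): number {
  let dot = 0, na = 0, nb = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    na += a[i] * a[i];
    nb += b[i] * b[i];
  }
  return na === 0 || nb === 0 ? 0 : dot / (Math.sqrt(na) * Math.sqrt(nb));
}

// Rank chunks by similarity to the query and return the k best, whole.
function retrieveTopK(query: number[], chunks: Chunk[], k: number): Chunk[] {
  return chunks
    .map((chunk) => ({ chunk, score: cosine(query, chunk.embedding) }))
    .sort((x, y) => y.score - x.score)
    .slice(0, k)
    .map((scored) => scored.chunk);
}

// Hypothetical store: the database decision is one sentence inside a long transcript.
const store: Chunk[] = [
  { id: "meeting-notes", text: "Long meeting transcript in which the database decision is a single sentence", embedding: [0.9, 0.1, 0.0] },
  { id: "lunch-chat", text: "Discussion about lunch options", embedding: [0.0, 0.2, 0.9] },
  { id: "style-guide", text: "Team code style guide", embedding: [0.3, 0.8, 0.1] },
];

const hits = retrieveTopK([1, 0, 0], store, 1);
console.log(hits.map((c) => c.id)); // [ 'meeting-notes' ]
```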

However, standard RAG is often clumsy. It retrieves large blocks of text, forcing the LLM to re-read potentially irrelevant data just to find a small fact. Mastra’s innovation is to move beyond simple chunk retrieval toward semantic compression.

Mastra’s Insight: Compression Modeled After Human Recall

The core idea behind Mastra is to borrow a cognitive model for data storage. Humans rarely recall entire conversations verbatim; we recall key events, decisions, and emotional markers. Mastra translates the agent's history into prioritized summaries:

- Green (High Priority): Critical facts, core objectives, successful conclusions. These are instantly accessible and frequently referenced.

- Yellow (Medium Priority): Supporting details, minor decisions, or information that might be needed contextually later.

- Red (Low Priority): Peripheral chatter, tangential discussions, or errors that have been corrected. This data is heavily compressed or archived.
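Here is a minimal sketch of how such tiers could decide what enters the context window. It assumes each observation carries one of the three priorities and a rough token count. The type names, the token estimates, and the greedy budget policy are hypothetical illustrations, not Mastra's API. Green entries are always kept, Yellow entries fill whatever budget remains, and Red entries stay in the archive.

```typescript
// Hypothetical types; the priority meanings follow the tiers described above.
type Priority = "green" | "yellow" | "red";

interface Observation {
  text: string;
  priority: Priority;
  tokens: number; // rough size estimate for this entry
}

// Greedy context assembly under a token budget:
// Green is always included (even past the budget), Yellow is added in order
// while it still fits, and Red is left out of the context entirely.
function assembleContext(observations: Observation[], budget: number): Observation[] {
  const green = observations.filter((o) => o.priority === "green");
  const yellow = observations.filter((o) => o.priority === "yellow");

  const selected: Observation[] = [...green];
  let used = green.reduce((sum, o) => sum + o.tokens, 0);

  for (const o of yellow) {
    if (used + o.tokens <= budget) {
      selected.push(o);
      used += o.tokens;
    }
  }
  return selected;
}

const memory: Observation[] = [
  { text: "User's goal: migrate billing service to PostgreSQL", priority: "green", tokens: 12 },
  { text: "User prefers TypeScript examples", priority: "yellow", tokens: 6 },
  { text: "Benchmarked two ORMs; results inconclusive", priority: "yellow", tokens: 9 },
  { text: "Small talk about the weather", priority: "red", tokens: 7 },
];

const context = assembleContext(memory, 20);
// The Green goal plus the 6-token Yellow preference; the 9-token Yellow entry no longer fits.
console.log(context.map((o) => o.text));
```

In this design, priority rather than recency decides what survives when space runs out. A real system would also need a policy for the case where the Green entries alone exceed the budget.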

This stratification is powerful because it mirrors how we structure episodic memory. Mastra’s strong showing on LongMemEval, a benchmark for long-term memory in chat assistants, suggests that this compressed, prioritized structure lets an agent maintain long-term coherence more effectively than simply dumping raw text into a massive context buffer. For researchers examining the landscape, independent evaluations of this capability are vital (Context Source 1: searching for LongMemEval evaluations helps confirm where Mastra stands against state-of-the-art models trying to solve context retention).

The Shift: From Retrieval to Sophisticated Memory Architecture

Mastra is part of a much larger, accelerating trend: the evolution of AI agent architecture beyond basic RAG setups. We are moving from AI systems that merely *read* data to systems that *remember* and *reason* based on structured internal states.

The Limitations of Current Architectures

Traditional RAG systems are reactive. They query an index based on the immediate prompt. They lack true statefulness: the ability to carry forward the 'feeling' or 'understanding' of a long interaction. This is particularly problematic for multi-turn, complex tasks like debugging code over several days or managing a long-term creative project.

The Rise of Hierarchical and Compressed Memory

Mastra fits into the emerging category of advanced memory architectures. These systems often involve:

- Working Memory: The current context window (short-term).

- Episodic Memory: A chronological record of events (what happened).

- Semantic Memory: Abstracted knowledge and learned concepts (what was learned).
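The three tiers can be sketched as plain data structures plus a consolidation step. In this hypothetical sketch, working memory has a fixed capacity. When it overflows, the oldest events move to the episodic log, and any fact already attached to them is kept in semantic memory. The `TieredMemory` class, its capacity, and the `learned` field are assumptions for illustration. A real system would use a model for the abstraction that this stub performs by copying a field.

```typescript
// Hypothetical three-tier memory: working (in-context), episodic (chronological
// record of evicted events), and semantic (distilled facts).

interface EpisodicEvent {
  turn: number;
  content: string;
  learned?: string; // hypothetical: a fact distilled from this event, if any
}

class TieredMemory {
  working: EpisodicEvent[] = [];             // what currently sits in the context window
  episodic: EpisodicEvent[] = [];            // what happened, oldest first
  semantic: Set<string> = new Set<string>(); // what was learned
  private readonly workingCapacity: number;

  constructor(workingCapacity: number) {
    this.workingCapacity = workingCapacity;
  }

  record(event: EpisodicEvent): void {
    this.working.push(event);
    // Consolidation: once working memory is over capacity, move the oldest
    // events to the episodic log and keep any distilled fact in semantic memory.
    while (this.working.length > this.workingCapacity) {
      const evicted = this.working.shift();
      if (evicted === undefined) break;
      this.episodic.push(evicted);
      if (evicted.learned !== undefined) this.semantic.add(evicted.learned);
    }
  }
}

const mem = new TieredMemory(2);
mem.record({ turn: 1, content: "User introduces project Atlas", learned: "Project is named Atlas" });
mem.record({ turn: 2, content: "Discussion of release deadlines" });
mem.record({ turn: 3, content: "Debugging a failing integration test" });

console.log(mem.working.map((e) => e.turn));  // [ 2, 3 ]
console.log(mem.episodic.map((e) => e.turn)); // [ 1 ]
console.log(Array.from(mem.semantic));        // [ 'Project is named Atlas' ]
```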

Mastra’s "dense observations" seem to function as a highly efficient bridge between episodic memory and the working context. It’s not just vector searching; it’s intelligent summarization and prioritization driven by symbolic markers (the emojis). This suggests a convergence between purely statistical modeling (vectors) and symbolic reasoning (the explicit priority tags) (Context Source 2: Research into agent memory architectures beyond standard RAG shows a clear industry pivot toward stateful, hierarchical storage).
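To make "dense observations" concrete, the sketch below uses a hypothetical one-line format. Each line holds an emoji priority marker, a date, and a short statement that stands in for several turns of transcript. The `toDenseLine` helper and the line layout are illustrative assumptions, not Mastra's actual format. The observation text is also written by hand here; in a real pipeline, a model would produce it.

```typescript
type Priority = "green" | "yellow" | "red";

// Symbolic markers for each tier (mapping as described in this article).
const MARKER: Record<Priority, string> = {
  green: "🟢",
  yellow: "🟡",
  red: "🔴",
};

interface Observation {
  date: string; // ISO date, e.g. "2024-05-01"
  priority: Priority;
  text: string;
}

// Render one observation as a compact, human-readable line.
function toDenseLine(o: Observation): string {
  return `${MARKER[o.priority]} ${o.date} ${o.text}`;
}

// Several turns of raw transcript that the observation replaces.
const rawTranscript = [
  "User: We went back and forth on this, but I think Postgres is the right call.",
  "Assistant: Understood. Should I plan the migration around PostgreSQL?",
  "User: Yes, let's go with PostgreSQL for the billing service.",
].join("\n");

const observation: Observation = {
  date: "2024-05-01",
  priority: "green",
  text: "User chose PostgreSQL for the billing service",
};

const line = toDenseLine(observation);
console.log(line); // 🟢 2024-05-01 User chose PostgreSQL for the billing service
console.log(`${rawTranscript.length} chars of transcript -> ${line.length} chars of observation`);
```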

Cognitive Modeling: The Viability of Human Analogies

Why use traffic lights? Because they are widely understood, immediately readable, and cheap: a single symbol conveys an entry's priority without a longer label. This move to incorporate simple, human-centric symbolism into the memory compression layer is perhaps the most forward-looking aspect of Mastra.

For decades, cognitive scientists have tried to map how the human brain handles massive inputs, focusing on phenomena like selective attention and consolidation of memories during sleep. The brain doesn't store every photon it absorbs; it stores salient events that impact future behavior. Mastra seems to be implementing a computational proxy for salience.

When an LLM or agent processes data, it can assign weights or tags based on task relevance. Mastra formalizes this tagging using widely recognized symbols. This might look like a cute gimmick. It can also be read as a meaningful step toward bridging the gap between statistical fluency and more structured, cognitively inspired memory (Context Source 3: Research into modeling human episodic memory often explores how symbolic representation aids or hinders long-term knowledge retention).
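A computational proxy for salience can be as simple as a weighted score mapped onto the three colors by thresholds. The features, weights, and cut-offs below are hypothetical choices made for illustration. They do not reproduce how Mastra itself assigns priorities.

```typescript
type Priority = "green" | "yellow" | "red";

// Hypothetical salience features for one piece of agent history.
interface SalienceFeatures {
  isDecisionOrGoal: boolean; // states an objective or a final decision
  referencedLater: number;   // how many later turns referred back to it
  wasCorrected: boolean;     // superseded by a later correction
}

// Weighted score in [0, 1]; all weights are illustrative assumptions.
function salience(f: SalienceFeatures): number {
  let score = 0;
  if (f.isDecisionOrGoal) score += 0.6;
  score += Math.min(f.referencedLater, 4) * 0.1; // reuse bonus, capped at 0.4
  if (f.wasCorrected) score *= 0.25;             // corrected errors fade
  return Math.min(score, 1);
}

// Thresholds map the continuous score onto the traffic-light tiers.
function tag(f: SalienceFeatures): Priority {
  const s = salience(f);
  if (s >= 0.6) return "green";
  if (s >= 0.25) return "yellow";
  return "red";
}

console.log(tag({ isDecisionOrGoal: true, referencedLater: 0, wasCorrected: false }));  // green
console.log(tag({ isDecisionOrGoal: false, referencedLater: 3, wasCorrected: false })); // yellow
console.log(tag({ isDecisionOrGoal: true, referencedLater: 2, wasCorrected: true }));   // red
```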

The implication is profound: if we can successfully model the *structure* of human memory—prioritization, consolidation, and selective recall—we might unlock efficiency gains that simply throwing more parameters or more context at the problem cannot achieve.

Future Implications: What This Means for AI Development and Business

The success of open-source projects like Mastra has massive implications for how AI systems will be built, deployed, and governed.

1. Democratization of Long-Term Agents

Because Mastra is open source, it lowers the barrier to entry for creating truly persistent AI agents. Companies no longer need to rely solely on the massive proprietary context windows offered by large foundation model providers. Developers can pair Mastra’s memory compression layer with smaller, customized models, allowing for:

- Cost Reduction: Processing fewer tokens per call means lower inference costs.

- Customization: Teams can fine-tune the compression/prioritization logic specific to their domain (e.g., prioritizing legal precedents over casual chat in a legal AI).

(Context Source 4: The adoption rate of open-source agent frameworks is directly tied to the viability and ease of integration of their memory components.)
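The cost argument is simple arithmetic. The sketch below compares two ways of supplying history on every call: re-sending a raw transcript, or sending a compressed observation log. The token counts, call volume, and per-million-token price are placeholder assumptions, not measured figures for Mastra or for any provider.

```typescript
// Input-token cost of `calls` requests, each carrying `contextTokens` of history.
function inputCost(contextTokens: number, calls: number, usdPerMillionTokens: number): number {
  return (contextTokens * calls * usdPerMillionTokens) / 1000000;
}

// Placeholder assumptions (not real measurements or prices):
const PRICE = 2.5;         // USD per million input tokens
const CALLS = 10000;       // agent calls per month
const RAW_HISTORY = 80000; // tokens of raw transcript per call
const COMPRESSED = 8000;   // tokens of prioritized observations per call

const raw = inputCost(RAW_HISTORY, CALLS, PRICE);
const compressed = inputCost(COMPRESSED, CALLS, PRICE);
console.log(raw, compressed); // 2000 200
```

Under these assumptions, a tenfold reduction in carried context cuts input cost tenfold. The sketch leaves out output tokens and the cost of producing the compressed observations in the first place.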

2. The End of "Context Amnesia" in Business Workflows

Imagine an AI project manager that tracks a software development cycle spanning six months. With a plain context window, tracking dependencies from Month 1 when you are in Month 5 is difficult without repeatedly feeding the old context back in by hand. With an architecture like Mastra’s, the AI retains a prioritized "Green" summary of Month 1's core architectural decisions. It can then reference them immediately when confronting a Month 5 integration problem, which helps minimize architectural drift.

For customer service, an agent could maintain a "Green" memory of a client's core business needs and past premium purchases, providing service that feels deeply personalized and consistent, even across thousands of interactions.

3. Actionable Insight: Prioritizing Architecture Over Scale

For CTOs and AI leads, the message from Mastra is clear: Architecture trumps raw scale when dealing with continuity. The investment should shift from simply paying for larger context windows to investing in intelligent memory handling.

Actionable Insight: Enterprises should begin auditing their current RAG implementations. Are they retrieving massive documents when only a few key sentences are needed? Could a symbolic or hierarchical memory layer improve retrieval precision and reduce latency? Look at Mastra not just as a memory tool, but as a blueprint for forcing models to perform better distillation of knowledge.
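One way to start that audit is to measure how much of each retrieved payload the answer actually needed. The sketch assumes you can mark which sentences of a retrieved chunk were relevant, either by human labeling or by checking what the answer cited. The `contextPrecision` name, the character-based measure, and the naive sentence splitter are simplifications introduced here for illustration.

```typescript
// Hypothetical retrieval audit: what fraction of retrieved text was actually needed?

interface RetrievedChunk {
  text: string;
  relevantSentences: Set<number>; // indices of sentences the answer actually used
}

// Naive sentence splitter (assumption: sentences end with "."; abbreviations will confuse it).
function splitSentences(text: string): string[] {
  return text
    .split(/\.\s*/)
    .map((s) => s.trim())
    .filter((s) => s.length > 0);
}

// Share of retrieved characters that were relevant, across all retrieved chunks.
function contextPrecision(chunks: RetrievedChunk[]): number {
  let total = 0;
  let relevant = 0;
  for (const chunk of chunks) {
    splitSentences(chunk.text).forEach((sentence, i) => {
      total += sentence.length;
      if (chunk.relevantSentences.has(i)) relevant += sentence.length;
    });
  }
  return total === 0 ? 0 : relevant / total;
}

const audit = contextPrecision([
  {
    text: "The kickoff covered hiring. We chose PostgreSQL for billing. Lunch was pizza.",
    relevantSentences: new Set([1]),
  },
]);
console.log(audit.toFixed(2)); // 0.43
```

A consistently low score on real traffic is one signal that finer-grained storage could cut both tokens and latency. The prioritized observations described earlier are one example of such storage.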

The Road Ahead: Emojis as a New Interface for AI State

Whether the markers are emojis or some other compact symbols, the principle is what matters: a clear, easily parsable symbolic layer on top of dense vector storage. This pushes us toward a future where AI memory isn't a hidden black box of vectors, but a structured, introspectable state managed by the agent.
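Because such a symbolic layer is plain text, a few lines of code can parse it back into structured records. That is what makes the state introspectable by other tools. The line format assumed below (emoji marker, ISO date, free text) is a hypothetical illustration, not a documented Mastra format.

```typescript
type Priority = "green" | "yellow" | "red";

interface ParsedObservation {
  priority: Priority;
  date: string;
  text: string;
}

// Hypothetical marker-to-priority table.
const PRIORITY_BY_MARKER: Array<[string, Priority]> = [
  ["🟢", "green"],
  ["🟡", "yellow"],
  ["🔴", "red"],
];

// Parse one "<marker> <YYYY-MM-DD> <text>" line; returns null if it doesn't match.
function parseLine(line: string): ParsedObservation | null {
  const trimmed = line.trim();
  for (const [marker, priority] of PRIORITY_BY_MARKER) {
    if (!trimmed.startsWith(marker)) continue;
    const rest = trimmed.slice(marker.length).trim();
    const match = /^(\d{4}-\d{2}-\d{2})\s+(.+)$/.exec(rest);
    return match ? { priority, date: match[1], text: match[2] } : null;
  }
  return null;
}

const log = `
🟢 2024-05-01 User chose PostgreSQL for the billing service
🟡 2024-05-03 User prefers small, reviewable pull requests
🔴 2024-05-03 Brief tangent about conference travel
`;

const parsed = log
  .split("\n")
  .map(parseLine)
  .filter((o): o is ParsedObservation => o !== null);

const greens = parsed.filter((o) => o.priority === "green").map((o) => o.text);
console.log(greens); // [ 'User chose PostgreSQL for the billing service' ]
```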

If agents can self-assess the "color" (priority) of their own memories, they can self-correct, choose better retrieval strategies, and ultimately become more reliable partners in complex, long-running tasks. The traffic light is a simple, brilliant abstraction that makes the complex task of memory management transparent and, crucially, more efficient.
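Self-assessment could start with a simple re-prioritization pass. The agent promotes entries it keeps referring to and demotes entries nobody has used for a while. The thresholds and the `uses`/`lastUsedTurn` bookkeeping are hypothetical. They illustrate an agent adjusting the color of its own memories and do not describe a documented Mastra feature.

```typescript
type Priority = "green" | "yellow" | "red";

interface MemoryEntry {
  text: string;
  priority: Priority;
  uses: number;         // times the agent referenced this entry
  lastUsedTurn: number; // most recent turn that referenced it
}

const ORDER: Priority[] = ["red", "yellow", "green"]; // low -> high

// Move one level up (+1) or down (-1), clamped at the ends of the scale.
function shift(p: Priority, step: 1 | -1): Priority {
  const i = Math.min(Math.max(ORDER.indexOf(p) + step, 0), ORDER.length - 1);
  return ORDER[i];
}

// Promote frequently used entries one level; demote stale ones one level.
function reprioritize(
  entries: MemoryEntry[],
  currentTurn: number,
  promoteAt = 3,
  staleAfter = 50,
): MemoryEntry[] {
  return entries.map((e) => {
    if (e.uses >= promoteAt) return { ...e, priority: shift(e.priority, 1) };
    if (currentTurn - e.lastUsedTurn > staleAfter) return { ...e, priority: shift(e.priority, -1) };
    return e;
  });
}

const updated = reprioritize(
  [
    { text: "API rate limit is 100 requests/minute", priority: "yellow", uses: 5, lastUsedTurn: 118 },
    { text: "Considered switching CI providers", priority: "yellow", uses: 0, lastUsedTurn: 12 },
    { text: "Project deadline is end of Q3", priority: "green", uses: 4, lastUsedTurn: 119 },
  ],
  120,
);
console.log(updated.map((e) => e.priority)); // [ 'green', 'red', 'green' ]
```

A real policy would also reset or decay the `uses` counters after each pass, so that an entry is not promoted on every run.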

The next evolution in AI will not be defined by the largest model, but by the agent that remembers what truly matters. Mastra is providing the open-source toolkit to start building that future today.