The $100 Billion Green Bet: How Adani's AI Data Centers Are Reshaping Global Compute and Energy

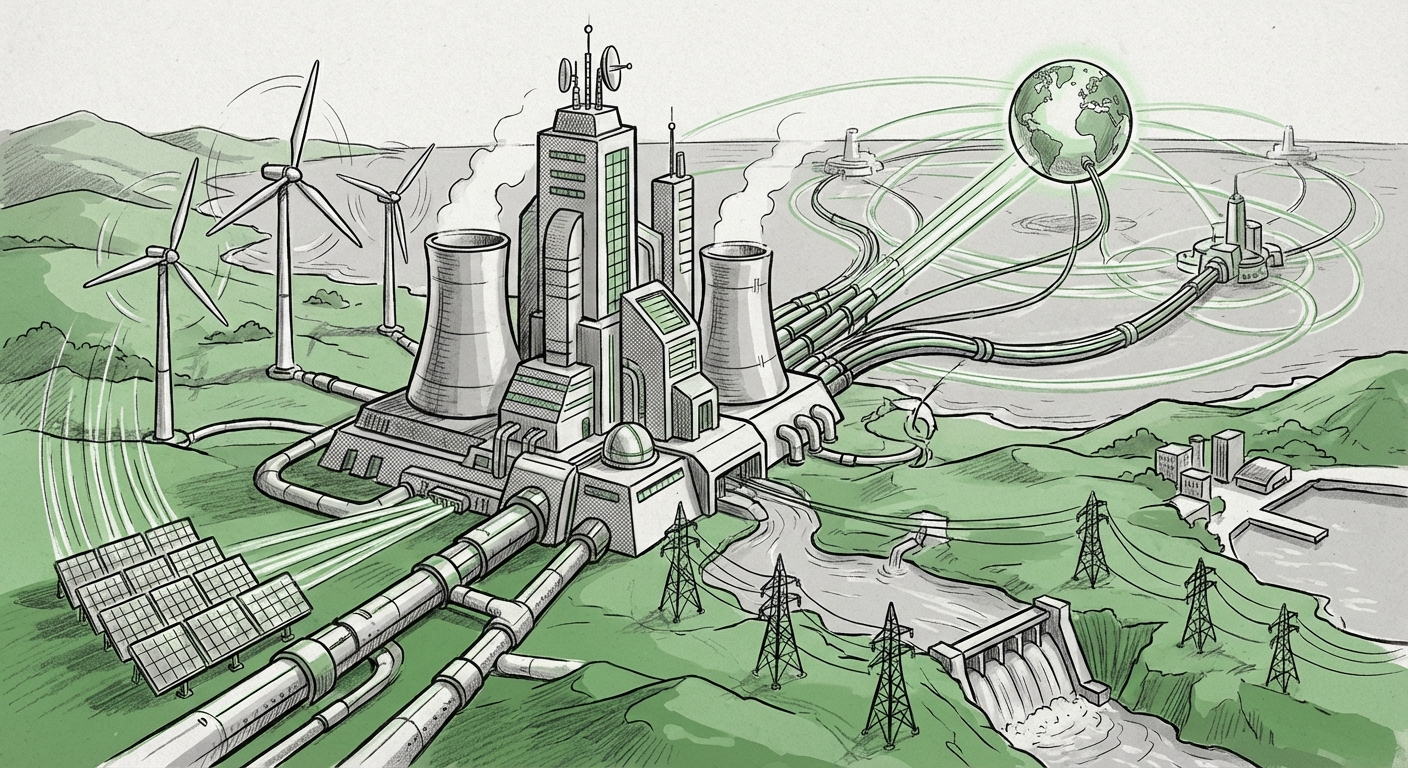

The technological world is accelerating at a pace few predicted, driven almost entirely by the insatiable hunger of Artificial Intelligence. As models grow larger, training them requires exponentially more computing power. This demand has just triggered one of the largest infrastructure announcements in recent memory: the Adani Group's plan to deploy approximately **$100 billion by 2035** into building AI-capable data centers powered entirely by renewable energy.

This move is not merely a large capital outlay; it is a strategic declaration that ties the future of high-performance AI computing directly to sustainability. To understand the true scope of this development, we must examine it through three critical lenses: the exploding global need for data centers, the staggering energy cost of modern AI, and the geopolitical significance of placing this infrastructure in India.

1. The Data Deluge: Global Data Center Expansion in the AI Era

Data centers are the physical backbone of the digital world—they are giant warehouses filled with servers where information is stored, processed, and delivered. Traditionally, data center growth was driven by cloud adoption (like Netflix streaming or enterprise migration to the cloud). However, the arrival of powerful Generative AI models (LLMs) has radically altered the demand curve.

The Scaling Problem

Training a state-of-the-art AI model is computationally intensive, demanding thousands of specialized high-powered chips (GPUs) running non-stop for weeks or months. Once trained, running these models for millions of users (inference) still requires substantial energy. This AI load is driving global forecasts for data center capacity to previously unseen heights.
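To give a sense of the arithmetic behind this, here is a minimal back-of-envelope sketch in Python. Every number in it (GPU count, per-GPU power draw, run length, and the facility overhead factor, often called PUE) is a hypothetical placeholder chosen for illustration, not a figure from Adani or any specific model.

```python
def training_energy_mwh(num_gpus: int, gpu_power_kw: float,
                        days: float, pue: float = 1.0) -> float:
    """Rough facility energy (MWh) for a continuous training run.

    pue is the facility overhead multiplier (cooling, power conversion);
    1.0 means no overhead at all.
    """
    hours = days * 24
    it_energy_kwh = num_gpus * gpu_power_kw * hours  # energy drawn by the chips
    return it_energy_kwh * pue / 1000                # kWh -> MWh, with overhead


# Hypothetical run: 10,000 GPUs at 0.7 kW each for 60 days, PUE of 1.2.
estimate = training_energy_mwh(10_000, 0.7, 60, pue=1.2)
print(f"Estimated energy: {estimate:,.0f} MWh")
```

With these assumed inputs the estimate comes to roughly 12,000 MWh for a single run. The result scales linearly with chip count and duration, which is why power access becomes a limit so quickly.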

This is unlikely to be a temporary spike. Projections through 2035 point to a major expansion of global data center capacity just to sustain existing digital services, before the new demands of AI are counted, and industry reports often describe capacity doubling or tripling in key markets. At that pace, finding space and securing hardware is not enough; the primary bottleneck is becoming access to power.

The Scale of Adani’s Ambition

Adani’s $100 billion commitment signals the group's belief that demand for this infrastructure, particularly in the Asia-Pacific region, will dwarf current supply. For business decision-makers and infrastructure investors, the figure frames the competitive landscape: companies that can deliver vast, secure, and scalable computing resources will control the next decade of digital innovation.

2. The Unseen Cost: AI’s Staggering Energy Demands

The most compelling, and perhaps most challenging, aspect of Adani’s plan is the commitment to **renewable energy**, because AI compute is an energy glutton.

Training vs. Running Models

An analogy helps. A standard personal computer uses a small amount of electricity to do homework. Training a massive AI model is like making every student in a country do homework simultaneously, on the most powerful computers available, 24 hours a day for months. The energy needed is enormous.

Research on the impact of generative AI on data center energy consumption consistently shows that as models become more capable (e.g., moving from GPT-3 to GPT-4 or its successors), efficiency improvements struggle to keep up with the increase in scale. As a result, the sector's overall power usage is projected to soar.

The Renewable Advantage

For any major cloud provider or enterprise looking to deploy large-scale AI operations, sustainability is no longer a corporate social responsibility footnote—it is an operational necessity:

- Regulatory Pressure: Governments are increasingly scrutinizing the carbon footprint of digital services.

- Investor Demand: ESG (Environmental, Social, and Governance) mandates mean that capital flows preferentially toward sustainable projects.

- Operational Stability: Relying on centralized, older power grids for massive, continuous loads is risky. Renewable energy, especially when integrated directly with industrial-scale power generation (as Adani specializes in), offers a more direct and potentially more stable power supply for these critical facilities.

By linking its data center buildout directly to its established renewable energy portfolio, Adani is offering a 'green premium' service. It is effectively promising future tenants (hyperscalers like Microsoft or Google, or major Indian corporations) a clear path to meeting their own Net Zero commitments without shouldering the entire burden of building new clean energy infrastructure themselves.

3. India’s Strategic Ascent in the Global Tech Landscape

This investment solidifies India’s transition from being primarily a consumer of global digital services to becoming a primary producer and host of the underlying infrastructure.

Policy Meets Private Ambition

A $100 billion infrastructure bet does not happen in a vacuum. It requires robust government support, streamlined regulations, and a clear national strategy. India has been aggressively courting high-tech investment, particularly in semiconductors and digital infrastructure, through initiatives designed to reduce reliance on foreign manufacturing and build domestic digital sovereignty.

India's policy landscape includes active incentives for data center development and emerging support for the green energy projects these hubs depend on (such as hydrogen or large-scale solar and wind farms). Adani’s move is closely aligned with these national ambitions, positioning the group as a key executor of India’s vision to become a major global technology node.

The Asia-Pacific Competitive Play

The Asia-Pacific region is the fastest-growing market for cloud computing. While established hyperscalers like Amazon Web Services (AWS) and Microsoft Azure have significant footprints, building large-scale, renewable-powered infrastructure requires massive capital and local integration expertise—areas where Adani excels.

This investment forces a comparison with existing hyperscaler spending trends in Asia. Is Adani building *to lease* to these giants, or is it building a competitive, independent platform? The answer is likely both. By creating enormous, green-certified capacity, Adani becomes an indispensable partner for global firms restricted by their own sustainability mandates, and a potent domestic competitor for Indian enterprises.

Future Implications for AI and Business

What does this massive infrastructure deployment mean for the trajectory of AI development and for businesses relying on it?

A. Democratization of Green Compute

Currently, cutting-edge AI development is often centralized in regions with ample, cheap grid power (often dominated by legacy energy sources). By expanding high-density, renewable-powered compute capacity in India, Adani potentially democratizes access to the *cleanest* compute. This could lower the barrier for Indian startups and mid-sized firms to train and deploy cutting-edge AI without incurring massive carbon debt.

B. Data Sovereignty and Latency

For many international businesses, data residency and latency (the delay in data transmission) are critical. Placing massive compute resources within India means faster service delivery for local users (lower latency) and easier compliance with local data residency laws (data sovereignty). Adani’s commitment helps keep complex AI workloads inside India as a local option, rather than funneling them all overseas.
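The latency argument can be illustrated with a small sketch of the physical lower bound on round-trip time over optical fiber. It assumes signals travel at roughly 200,000 km/s in fiber (about two-thirds of the speed of light in a vacuum). The two distances are hypothetical examples, and real-world latency is higher once routing, queuing, and processing are included.

```python
SPEED_IN_FIBER_KM_PER_S = 200_000  # approximation: about 2/3 of c in vacuum


def round_trip_ms(distance_km: float) -> float:
    """Lower-bound round-trip propagation delay in milliseconds."""
    one_way_s = distance_km / SPEED_IN_FIBER_KM_PER_S
    return 2 * one_way_s * 1000


# Hypothetical distances: an in-country data center vs. an overseas one.
for label, km in [("domestic (500 km)", 500), ("overseas (12,000 km)", 12_000)]:
    print(f"{label}: at least {round_trip_ms(km):.0f} ms round trip")
```

Under these assumptions the domestic path has a floor of about 5 ms and the overseas path about 120 ms, a gap that no amount of server-side optimization can remove.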

C. The Convergence of Energy and Tech M&A

Adani's move signals that the lines between traditional infrastructure giants and cutting-edge technology providers are dissolving. Companies like Adani, with deep expertise in logistics, ports, energy generation, and transmission, are now positioned to dominate the physical layer of the AI economy. This convergence will likely lead to more strategic partnerships, joint ventures, or even acquisitions between pure-play tech firms and energy and infrastructure conglomerates.

Actionable Insights for Stakeholders

For organizations looking ahead, this development requires strategic adjustments:

- For Enterprise CTOs: Begin auditing your AI supply chain's carbon footprint. Future procurement decisions for cloud services will heavily favor providers who can guarantee renewable energy sources. Start conversations now with potential Indian partners or cloud vendors planning capacity expansion in the region.

- For Infrastructure Investors: This signals a massive opportunity in the 'picks and shovels' of AI—specifically, specialized cooling technologies, high-efficiency power distribution units (PDUs), and green energy storage solutions required to balance intermittent renewable supply for constant AI workloads.

- For Policy Makers: The investment validates the strategic importance of building robust, high-capacity digital infrastructure. Policies must now focus on streamlining grid connections and permitting for these renewable-powered AI projects so that India can absorb and use this capital effectively before the 2035 target date.
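The storage point in the investor bullet above can be made concrete with a simplified sizing sketch. It assumes a constant data center load, a renewable source that covers the load only during a fixed number of hours per day, and a single round-trip efficiency figure for the storage system. All inputs are hypothetical, and a real design would also account for weather variability, depth-of-discharge limits, and grid backup.

```python
def storage_delivered_mwh(load_mw: float, generation_hours: float) -> float:
    """Energy storage must deliver per day to cover hours without generation."""
    if not 0 <= generation_hours <= 24:
        raise ValueError("generation_hours must be between 0 and 24")
    return load_mw * (24 - generation_hours)


def charging_energy_mwh(delivered_mwh: float, round_trip_efficiency: float) -> float:
    """Energy that must be fed into storage, given round-trip losses."""
    if not 0 < round_trip_efficiency <= 1:
        raise ValueError("round_trip_efficiency must be in (0, 1]")
    return delivered_mwh / round_trip_efficiency


# Hypothetical case: 100 MW constant load, 8 productive solar hours per day,
# storage with an assumed 85% round-trip efficiency.
delivered = storage_delivered_mwh(100, 8)
charged = charging_energy_mwh(delivered, 0.85)
print(f"Storage must deliver {delivered:,.0f} MWh per day")
print(f"Charging requires about {charged:,.0f} MWh per day")
```

Even in this idealized case, a 100 MW facility needs storage that delivers 1,600 MWh every day, which suggests why storage and power distribution are significant parts of the opportunity.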

The Adani Group’s $100 billion investment is more than a real estate play; it is a blueprint for the next era of global computing. It recognizes that raw processing power is useless without a sustainable power source, and that the center of gravity for digital growth is rapidly shifting eastward. The race to power the intelligent future has officially entered a green phase, and infrastructure giants are now leading the charge.