The Governance Frontier: Why the Anthropic-Infosys Partnership Signals AI's Move to Regulated Industries

The landscape of Artificial Intelligence is undergoing a profound metamorphosis. For years, the buzz centered on consumer applications—creative tools, general knowledge assistants, and viral platforms. Today, the center of gravity is shifting sharply toward the enterprise, specifically into the most complex, risk-averse corners of the global economy: regulated industries. The recent collaboration between Anthropic, a leader in responsible AI development, and Infosys, a titan of global IT services, is not just another business deal; it is a litmus test for the maturation of Generative AI.

This partnership signals the move from "proof-of-concept" AI deployment to building AI agents designed to operate within the strict regulatory frameworks that govern sectors like finance, insurance, and healthcare. To understand the shift this represents, we must examine the unique demands of these sectors, the specialized capabilities Anthropic brings, and the critical role integrators like Infosys play in bridging the gap between raw AI power and industrial reliability.

The Demand Side: Why Compliance is the New AI Bottleneck

General-purpose LLMs (Large Language Models) are powerful mimics, but they are often too unpredictable for tasks involving millions of dollars or sensitive patient data. Regulated industries cannot afford the risk of hallucination or data leakage. Compliance isn't a feature; it is the bedrock of operation.

For Chief Compliance Officers (CCOs) and banking executives, deploying AI requires verifiable answers to three fundamental questions:

- Traceability: Where did the AI get its information, and how can we audit its decision process?

- Privacy: Is our proprietary or customer data fully isolated and secure from the model provider?

- Adherence: Does the AI understand and actively enforce complex, multi-layered regulations (like Basel III in banking or HIPAA in healthcare)?

Coverage of generative AI adoption in financial services compliance points the same way: without secure, auditable systems, adoption stalls. This need for governance turns AI from a standalone tool into a complex integration project. That is the gap Anthropic and Infosys are aiming to fill, moving beyond generic chat interfaces toward specialized agents that execute specific, regulated workflows.

Anthropic’s Edge: Safety as a Selling Point

Anthropic’s foundational philosophy, centered on "Constitutional AI," positions the company well for this high-stakes environment. Constitutional AI involves training models (like the Claude series) not just on vast amounts of data, but against a set of explicit principles, a "constitution," that guides their behavior toward harmlessness and honesty. In regulated environments, this approach is gold.

Anthropic’s enterprise positioning for Claude 3 emphasizes deployment security and models that respect data boundaries. For an enterprise building an AI agent to review loan applications or process insurance claims, knowing that the underlying model is designed with guardrails (that it resists generating fraudulent advice or exposing protected information) significantly lowers the integration barrier.

For a technical audience, this means part of the heavy lifting of establishing basic safety protocols may already be handled by the model itself. That frees Infosys’s integration teams to focus on customizing the agent for the client’s *specific* policy library rather than constantly patching general model vulnerabilities. This emphasis on built-in safety helps explain why Infosys, seeking robust, long-term solutions for conservative clients, would partner with Anthropic.

The System Integrator Mandate: Building the Rails for AI

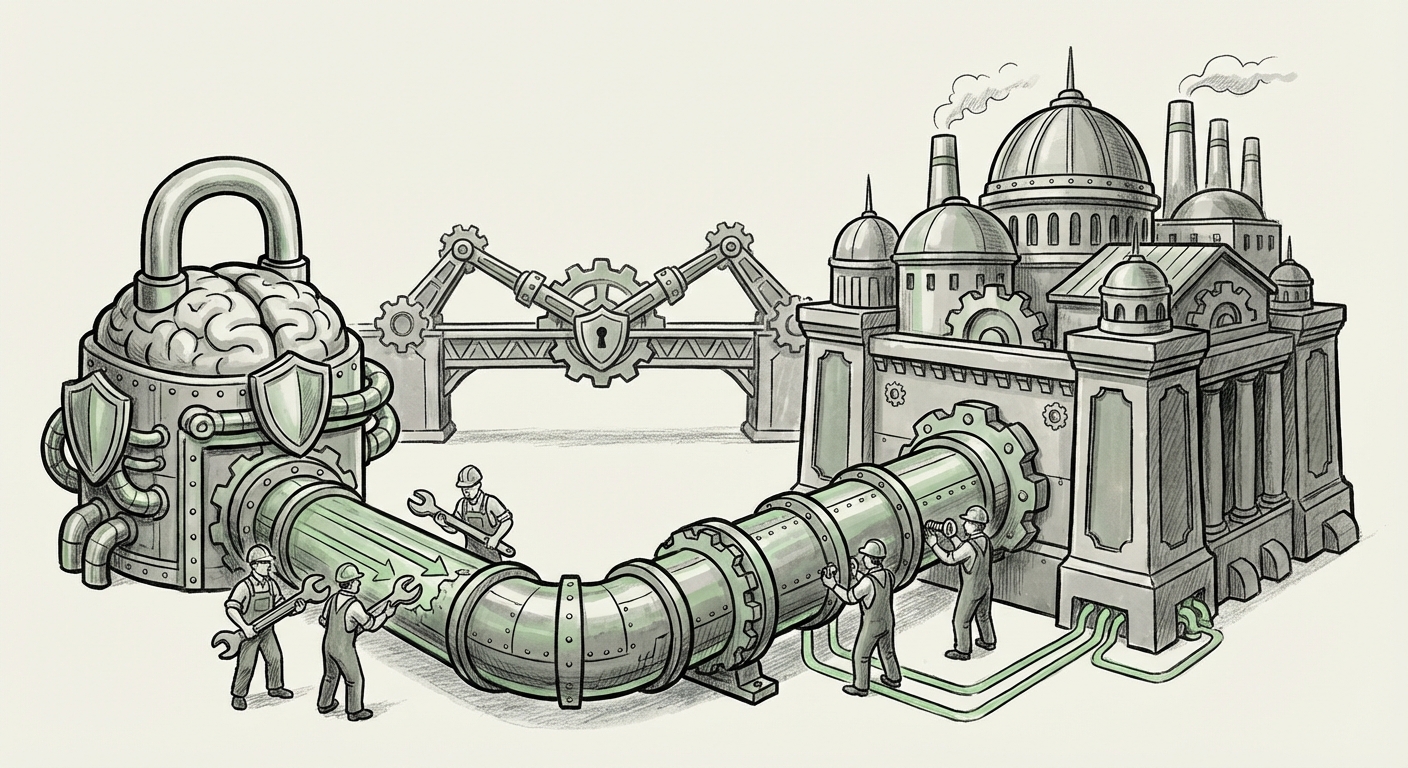

A brilliant LLM is useless to a massive bank or insurer without infrastructure, legacy system integration, security overlays, and user training. This is where Infosys, and the broader tier of IT service providers, becomes indispensable. They are the bridge builders.

The strategic importance of companies like Infosys, Wipro, and TCS in the AI revolution cannot be overstated. They don't just sell software; they manage massive, decades-old digital estates. Articles analyzing the GenAI strategy and partnerships of Indian IT firms consistently highlight that their future revenue depends on embedding third-party foundational models securely into client workflows.

The Infosys-Anthropic deal is a classic ecosystem play: Anthropic provides the cutting-edge intelligence, and Infosys provides the necessary plumbing—the data pipelines, the governance layers (ensuring outputs are logged and auditable), the custom fine-tuning on proprietary client data, and the massive scalability required for global deployment.

For CIOs, this partnership simplifies the procurement headache. Instead of managing separate contracts with an AI developer and a system integrator, they get a bundled solution ready for regulated environments.

The Future: Specialized, Auditable AI Agents

The term AI Agent implies a system capable of reasoning, planning, and executing multi-step tasks autonomously. This capability is where the true disruption lies, but also where the greatest regulatory friction occurs.

In the context of regulated industries, we must distinguish between full autonomy and high-precision augmentation. Fully autonomous AI agents making life-altering decisions (like rejecting a major insurance claim without human review) are years away from widespread acceptance. However, agents that automate 95% of the document review, flag high-risk items for human specialists, and generate compliant first drafts are immediately valuable.

The future trajectory hinges on defining the boundaries between machine execution and human accountability. Work on reconciling autonomous AI agents with regulated workflows focuses heavily on "human-in-the-loop" protocols, in which the AI agent acts as an expert analyst that presents synthesized, verifiable recommendations rather than final decisions.
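To make the human-in-the-loop idea concrete, here is a minimal Python sketch of how an agent's recommendation might be routed to a reviewer. Everything in it is an illustrative assumption rather than anything Anthropic or Infosys has published: the `Recommendation` type, the `route` function, the queue names, and the 0.7 threshold are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical cut-off: recommendations at or above this score go to a specialist.
REVIEW_THRESHOLD = 0.7


@dataclass
class Recommendation:
    item_id: str
    summary: str
    risk_score: float  # assumed to lie in [0, 1] and to be produced upstream


def route(rec: Recommendation, threshold: float = REVIEW_THRESHOLD) -> str:
    """Pick the human review queue for a recommendation.

    The agent never issues a final decision here; it only chooses
    which kind of human reviewer sees its recommendation.
    """
    if not 0.0 <= rec.risk_score <= 1.0:
        raise ValueError(f"risk_score out of range: {rec.risk_score}")
    if rec.risk_score >= threshold:
        return "specialist_review"
    return "standard_review"
```

Note that both outcomes are review queues. The design choice in this sketch is that the risk score changes who looks at the item, not whether a human looks at it.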

The direction of this partnership implies AI agents with compliance checks built in at every step. If an agent is tasked with summarizing a complex legal document for a compliance officer, its output must reference the exact source paragraphs and confirm adherence to the relevant internal policy. This level of operational rigor is what transforms a promising technology into a sustainable enterprise asset.
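A check of that kind can be sketched in a few lines of Python. This is a hedged illustration under stated assumptions, not a description of the partners' actual system: `AgentSummary`, `validate_summary`, and the policy identifiers are hypothetical names, and citations are assumed to be zero-based paragraph indices into the source document.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AgentSummary:
    text: str
    # Zero-based indices of the source paragraphs the summary relies on.
    cited_paragraphs: List[int] = field(default_factory=list)
    # Identifiers of the internal policies the agent reports having checked.
    policy_ids_checked: List[str] = field(default_factory=list)


def validate_summary(
    summary: AgentSummary,
    source_paragraphs: List[str],
    required_policies: List[str],
) -> List[str]:
    """Return a list of problems; an empty list means the summary passes."""
    problems = []
    if not summary.cited_paragraphs:
        problems.append("no source paragraphs cited")
    for idx in summary.cited_paragraphs:
        if not 0 <= idx < len(source_paragraphs):
            problems.append(f"citation {idx} is outside the source document")
    missing = sorted(set(required_policies) - set(summary.policy_ids_checked))
    for policy_id in missing:
        problems.append(f"policy {policy_id} not confirmed")
    return problems
```

This only verifies that citations exist and that required policies were reported as checked. Whether the cited paragraphs actually support the summary is a separate question that still belongs to the human reviewer.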

Implications and Actionable Insights for Business Leaders

The Anthropic-Infosys alliance is a blueprint for the next wave of enterprise AI. Here is what business leaders should take away:

1. AI Procurement is Becoming Verticalized

The era of "buy one LLM for everything" is ending. Expect more specialized partnerships targeting specific compliance domains (e.g., a partnership focused solely on FDA regulations or EU financial directives). If your industry is heavily regulated, look for partnerships that combine a safety-focused model provider with a proven system integrator.

2. Governance Must Be Designed In, Not Bolted On

For technical teams, the priority must shift from optimizing model accuracy to maximizing model explainability and auditability. If you cannot log why an agent took an action, you cannot deploy it in a regulated setting. Ensure your chosen platform supports robust tracing and version control for the AI's outputs.
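As a rough illustration of what logging why an agent took an action could look like, the Python sketch below keeps an append-only list of entries and chains them with SHA-256 hashes so that later edits are detectable. It is an assumption-laden example, not a feature of any named platform: the field names, `append_entry`, and `verify_log` are hypothetical, and timestamps and storage are left out for brevity.

```python
import hashlib
import json
from typing import Dict, List


def _digest(body: Dict) -> str:
    # Serialize with sorted keys so the same content always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(
    log: List[Dict],
    action: str,
    rationale: str,
    model_version: str,
    sources: List[str],
) -> Dict:
    """Append one audit entry, linked to the previous entry by its hash."""
    body = {
        "action": action,
        "rationale": rationale,
        "model_version": model_version,
        "sources": sources,
        "prev_hash": log[-1]["entry_hash"] if log else "",
    }
    entry = {**body, "entry_hash": _digest(body)}
    log.append(entry)
    return entry


def verify_log(log: List[Dict]) -> bool:
    """Recompute every hash and link; return False if anything was altered."""
    prev_hash = ""
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "entry_hash"}
        if body.get("prev_hash") != prev_hash:
            return False
        if entry.get("entry_hash") != _digest(body):
            return False
        prev_hash = entry["entry_hash"]
    return True
```

Recording the model version alongside the rationale and sources is the point of the sketch: an auditor can see which model produced which recommendation, and `verify_log` shows whether the record has been changed since it was written.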

3. The Value of the Integrator Skyrockets

The fundamental constraint on AI adoption is no longer the model itself, but the ability to integrate it safely into ancient, mission-critical IT systems. Service providers like Infosys are moving up the value chain; their expertise in regulatory risk management is now as valuable as their coding skills. They are becoming indispensable partners in minimizing AI adoption risk.

In simple terms, AI is growing up. It’s moving out of the sandbox and into the high-security vault. The partnership between Anthropic and Infosys is a clear signal that the next frontier of AI success won't be measured by creative flair, but by verifiable, trustworthy, and compliant execution.