The Silent Guardians: How AI is Revolutionizing Research Integrity Amidst Data Overload

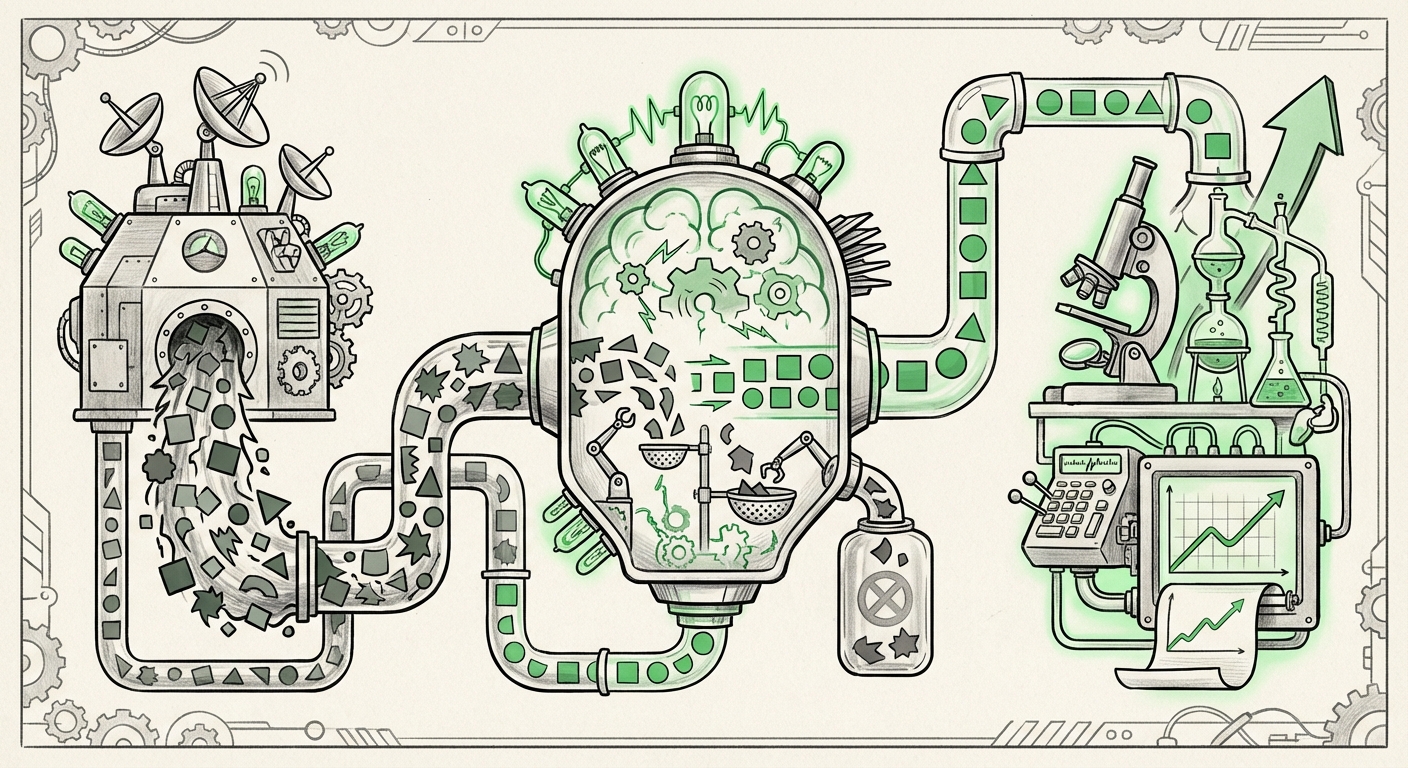

The engine of scientific progress runs on data. Today, that engine is roaring louder than ever before, fueled by the convergence of Artificial Intelligence (AI) and the Internet of Things (IoT). From automated lab equipment generating petabytes of genomic data to smart sensors monitoring environmental factors in real-time, the sheer *volume*, *velocity*, and *variety* of information pouring into research pipelines have reached a critical threshold.

This acceleration, while promising revolutionary breakthroughs in medicine, materials science, and climate modeling, has introduced a serious threat: the integrity crisis of scale. A dataset that a researcher could once vet by hand now spans millions of data points. If we cannot reliably validate the data feeding our most advanced AI systems, we risk propagating systemic errors, leading to flawed conclusions, wasted resources, and a fundamental erosion of scientific trust. The key takeaway is that automated data validation is no longer an enhancement; it is the bedrock of modern research integrity.

The Perfect Storm: AI, IoT, and the Velocity Challenge

To understand the urgency, we must visualize the modern research ecosystem. Imagine a materials science lab using AI to design a new alloy. The process involves:

- IoT Ingestion: Hundreds of sensors monitor temperature, pressure, and chemical composition during synthesis, sending constant streams of time-series data.

- Automated Pipelines: This raw data is fed immediately into cloud processing units.

- AI Analysis: Machine Learning models analyze the outcomes to predict optimal parameters for the next experiment.

If a single sensor drifts out of calibration (an IoT failure) or if a data stream is corrupted during transfer (a pipeline failure), the error may go unnoticed until the resulting AI model produces faulty predictions. Because this loop runs in hours rather than weeks, a single bad input can feed several experiment cycles before anyone intervenes.

Simplifying the Complexity for All Audiences

Think of it like baking a massive cake using a factory full of robot arms. The robots measure flour, sugar, and heat thousands of times a second. If one robot mistakenly uses salt instead of sugar, you cannot stop every robot and taste the batter manually. You need a smart monitoring system—an electronic "taster"—that knows what the batter *should* taste like based on the recipe (the expected data schema) and instantly flags the salty batch before it ruins a million other cakes. That electronic taster is automated data validation driven by AI.

The Solution Deep Dive: Machine Learning as the New Validator

The necessary automation requires moving beyond simple rule-based checks (e.g., "Is this number between 0 and 100?"). We need intelligence that understands context and relationships. This is why machine learning for automated data validation in scientific research pipelines is crucial.

Contextual Anomaly Detection

Traditional validation fails when an "outlier" is actually a valid, rare discovery. Modern ML validation systems excel here because they don't just check against fixed rules; they learn the *distribution* of "normal."

- Drift Monitoring: AI models track changes in the incoming data's statistical properties over time. If a thermometer starts reading 2 degrees hotter than it statistically should, the ML system flags it, indicating potential hardware failure or environmental change, thereby protecting the integrity of the subsequent analysis.

- Schema Evolution: As research evolves, the data structures change. AI-assisted tools can automatically suggest updates to the data schema or flag data that no longer fits established norms, preventing data incompatibilities down the line.
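The drift-monitoring idea above can be sketched in a few lines. This is a minimal illustration, not a production monitor: it swaps the learned model for a plain z-test on the window mean, and the function name `detect_drift`, the threshold of 3.0, and the thermometer readings are all assumptions made for the example.

```python
from math import sqrt
from statistics import mean, stdev


def detect_drift(baseline, window, z_threshold=3.0):
    """Flag a window whose mean sits too far from the baseline mean.

    The distance is measured in standard errors of the window mean,
    using the spread observed in the baseline readings.
    """
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any change at all counts as drift.
        return mean(window) != mu
    standard_error = sigma / sqrt(len(window))
    z = abs(mean(window) - mu) / standard_error
    return z > z_threshold


# Hypothetical thermometer readings in degrees Celsius.
baseline = [20.1, 19.9, 20.0, 20.2, 19.8, 20.0, 20.1, 19.9, 20.0, 20.0]
healthy = [20.0, 20.1, 19.9, 20.0, 20.1]
drifted = [22.0, 22.1, 21.9, 22.0, 22.1]  # roughly 2 degrees hotter

print(detect_drift(baseline, healthy))
print(detect_drift(baseline, drifted))
```

A real system would likely refresh the baseline on a schedule and use a test suited to the sensor's noise profile, but the shape of the check stays the same: learn what "normal" looks like, then compare each new window against it.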

For technical audiences, this translates directly into MLOps practices where data quality monitoring is treated as a first-class citizen, integrated directly into CI/CD pipelines for research data. For R&D leaders, it means significant risk reduction in validating multi-million dollar experimental runs.

The Governance Imperative: Trust in the Automated Age

Technical prowess is only half the battle. The data validation tools must operate within a strict framework of accountability. Our second area of focus, the impact of large-scale data automation on research reproducibility and integrity standards, highlights the governance gap.

Reproducibility Under Scrutiny

The bedrock of science is reproducibility—the ability for another team to achieve the same results using the same methods and data. When data processing is opaque, governed by complex, self-correcting AI validation layers, reproducibility becomes harder to prove. If a paper is published based on data curated by an autonomous validation system, stakeholders—regulators, journals, peer reviewers—need assurance that the system itself is auditable.

This brings forward the necessity of adopting principles like **FAIR Data** (Findable, Accessible, Interoperable, Reusable). Automated systems must be designed not just to check data, but to document how the checking occurred.

Practical Implication: Compliance officers and regulatory bodies must begin defining standards for "validated data provenance." If a clinical trial uses AI to filter out noisy patient monitoring data (IoT input), regulators must know the exact algorithm used to filter it and confirm that the filtering process did not systematically bias the results toward a desired outcome.
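One way to make a filtering step auditable is to have every check write its own record: which rule ran, with which parameters, and what went in and came out. The sketch below is a hedged illustration using only the Python standard library; the helper names (`fingerprint`, `run_logged_check`, `in_range`), the log fields, and the sample readings are hypothetical and not part of any standard.

```python
import hashlib
import json
from datetime import datetime, timezone


def fingerprint(records):
    """Return a SHA-256 digest of the records in a stable JSON form."""
    payload = json.dumps(records, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def run_logged_check(records, check_name, check_fn, params, audit_log):
    """Apply check_fn to each record and append an audit entry."""
    kept = [r for r in records if check_fn(r, **params)]
    audit_log.append({
        "check": check_name,
        "params": params,
        "input_sha256": fingerprint(records),
        "output_sha256": fingerprint(kept),
        "records_in": len(records),
        "records_out": len(kept),
        "timestamp": datetime.now(timezone.utc).isoformat(),
    })
    return kept


def in_range(record, field, low, high):
    """Hypothetical filter: keep records whose field lies in [low, high]."""
    return low <= record[field] <= high


audit_log = []
readings = [
    {"sensor": "t1", "temp_c": 21.5},
    {"sensor": "t1", "temp_c": 480.0},  # implausible reading
]
clean = run_logged_check(
    readings, "in_range", in_range,
    {"field": "temp_c", "low": -50.0, "high": 150.0},
    audit_log,
)
print(json.dumps(audit_log, indent=2))
```

Because the entry stores the parameters alongside hashes of the input and output, a reviewer can rerun the same check on the same raw data and confirm that the published dataset is what the filter actually produced.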

The Edge Problem: Validating Data at the Source

The initial contamination often happens where the data is born: at the sensor or the edge device. This is the core challenge of integrating IoT sensor data into centralized research databases.

IoT data is inherently messy. A robot arm vibrates, a chemical sensor fogs up, or the network briefly drops, creating gaps or spikes in the data stream. If this raw, noisy data bypasses any validation before hitting the central cloud environment, the entire downstream AI process is poisoned from the start.

Moving Validation Leftward

The trend is clear: validation must move "left" (closer to the data source). Edge AI processing units, which are small computers embedded near the sensors, are increasingly tasked with the first layer of cleansing. They use lightweight ML models to immediately reject obvious sensor errors or interpolate missing short segments of data based on contextual history.

This "pre-validation" saves massive computational resources downstream and ensures that the centralized AI systems receive data that is already reasonably trustworthy. Architects in labs utilizing massive sensor arrays must prioritize building resilience and basic validation logic directly into the IoT infrastructure.
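A rough sketch of that first cleansing layer follows. It deliberately replaces the lightweight ML model with the simplest possible stand-in, a fixed plausibility range plus linear interpolation, so the logic is easy to follow. The function name `prevalidate`, the `max_gap` parameter, and the sample values are assumptions for illustration.

```python
def prevalidate(samples, low, high, max_gap=2):
    """Clean one window of sensor samples on the edge device.

    Missing samples (None) and samples outside [low, high] are treated
    as gaps. Interior gaps of at most max_gap samples are filled by
    linear interpolation between their valid neighbours; longer gaps
    and gaps at either end of the window are left as None.
    """
    cleaned = [
        s if s is not None and low <= s <= high else None
        for s in samples
    ]
    result = list(cleaned)
    n = len(cleaned)
    i = 0
    while i < n:
        if cleaned[i] is not None:
            i += 1
            continue
        start = i
        while i < n and cleaned[i] is None:
            i += 1
        gap = i - start
        # Interpolate only when valid samples exist on both sides.
        if start > 0 and i < n and gap <= max_gap:
            left, right = cleaned[start - 1], cleaned[i]
            step = (right - left) / (gap + 1)
            for k in range(gap):
                result[start + k] = left + step * (k + 1)
    return result


# Hypothetical window: one dropped sample, then a three-sample spike.
window = [10.0, 11.0, None, 13.0, 999.0, 999.0, 999.0, 14.0]
print(prevalidate(window, low=0.0, high=100.0))
```

Leaving long gaps empty rather than filling them is a deliberate choice in this sketch: the downstream pipeline can then see that data is missing instead of receiving values the edge device invented.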

The Future Landscape: AI as the Integrity Architect

Looking ahead, these trends suggest a future where data integrity is continuously managed by AI, rather than periodically audited by humans.

1. Dynamic Data Contracts

We will see the rise of 'Data Contracts' enforced by smart contracts or similar blockchain/DLT technologies, monitored by AI. These contracts define the expected shape, quality, and constraints of data flowing between systems (e.g., between the IoT collection layer and the central processing layer). If the contract is violated, even momentarily, the data flow halts until remediation occurs.
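Setting the blockchain and AI layers aside, the core of a data contract is a declared shape plus an enforcement point that stops the flow on violation. The following is a minimal in-process sketch of that core only; `FieldRule`, `ContractViolation`, `CONTRACT`, `enforce`, and `forward` are hypothetical names, and the field limits are invented for the example.

```python
from dataclasses import dataclass
from typing import Optional


class ContractViolation(Exception):
    """Raised when a record breaks the agreed data contract."""


@dataclass(frozen=True)
class FieldRule:
    dtype: type
    low: Optional[float] = None
    high: Optional[float] = None


# Hypothetical contract between the collection and processing layers.
CONTRACT = {
    "sensor_id": FieldRule(str),
    "temp_c": FieldRule(float, low=-50.0, high=150.0),
}


def enforce(record, contract):
    """Return the record unchanged, or raise ContractViolation."""
    missing = contract.keys() - record.keys()
    if missing:
        raise ContractViolation(f"missing fields: {sorted(missing)}")
    for name, rule in contract.items():
        value = record[name]
        if not isinstance(value, rule.dtype):
            raise ContractViolation(f"{name}: expected {rule.dtype.__name__}")
        if rule.low is not None and value < rule.low:
            raise ContractViolation(f"{name}: {value} below {rule.low}")
        if rule.high is not None and value > rule.high:
            raise ContractViolation(f"{name}: {value} above {rule.high}")
    return record


def forward(stream, contract):
    """Pass records downstream; the first violation halts the flow."""
    for record in stream:
        yield enforce(record, contract)


stream = [
    {"sensor_id": "s1", "temp_c": 21.0},
    {"sensor_id": "s1", "temp_c": 400.0},  # violates the upper bound
]
try:
    for record in forward(stream, CONTRACT):
        print("accepted", record)
except ContractViolation as err:
    print("flow halted:", err)
```

Raising an exception is the simplest way to model "the data flow halts until remediation occurs"; a deployed system might instead route violating records to a quarantine queue.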

2. Synthetic Ground Truth Generation

To test the effectiveness of validation tools themselves, AI will increasingly generate synthetic, yet highly realistic, contaminated datasets. Validation systems will then be tested against these known "bad" datasets to prove their effectiveness under stress. This allows for rigorous testing of reliability without compromising real, sensitive research data.
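The testing loop described here can be illustrated at toy scale. In this sketch, fault injection is a simple offset added at randomly chosen positions rather than an AI-generated dataset, and the validator under test is a median-absolute-deviation outlier rule. All names (`contaminate`, `mad_detector`, `recall`) and all numeric settings are assumptions chosen for the example.

```python
import math
import random
from statistics import median


def contaminate(clean, n_faults, magnitude, seed=0):
    """Add a fixed offset at n_faults random positions.

    Returns the contaminated series and the set of faulty indices,
    which serves as the known ground truth.
    """
    rng = random.Random(seed)
    fault_idx = set(rng.sample(range(len(clean)), n_faults))
    dirty = [
        v + magnitude if i in fault_idx else v
        for i, v in enumerate(clean)
    ]
    return dirty, fault_idx


def mad_detector(values, threshold=5.0):
    """Flag indices whose distance from the median exceeds
    threshold times the median absolute deviation (MAD)."""
    med = median(values)
    mad = median([abs(v - med) for v in values])
    if mad == 0:
        return set()
    return {
        i for i, v in enumerate(values)
        if abs(v - med) / mad > threshold
    }


def recall(detected, truth):
    """Fraction of the injected faults that the detector found."""
    return len(detected & truth) / len(truth)


# Hypothetical clean signal: a small oscillation around 20 degrees.
clean = [20.0 + 0.1 * math.sin(i) for i in range(200)]
dirty, truth = contaminate(clean, n_faults=10, magnitude=5.0)
detected = mad_detector(dirty)
print("recall:", recall(detected, truth))
print("false positives:", len(detected - truth))
```

Because the fault positions are known in advance, the validator's recall and false-positive count can be measured directly, with no real research data involved.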

3. Cross-Disciplinary Benchmarks

As disparate fields (e.g., climate modeling, clinical genomics, materials science) all grapple with data velocity, we will see the creation of shared, open-source AI validation toolkits. A successful anomaly detection technique developed for satellite imagery analysis might be rapidly adapted via transfer learning to validate genomic sequencing runs, accelerating the adoption of best practices across the entire research landscape.

Actionable Insights for Today’s Leaders

For organizations currently grappling with scaling their AI and research operations, the path forward requires proactive structural changes:

- Audit the "Last Mile": Identify the single point where human manual review ends and automated processing begins. This is your highest risk area. Invest in ML-driven anomaly detection tools immediately for this transition point.

- Mandate Data Lineage Documentation: If you cannot trace an outcome back to the exact raw data record that informed it, your research is inherently vulnerable. Adopt tools that automatically log every transformation and validation check applied to a dataset.

- Train for Data Skepticism: Researchers must be trained not just on how to use AI, but how to question AI-validated inputs. Integrity shifts from being a manual check to being an intelligent partnership between human skepticism and machine efficiency.

- Prioritize Edge Governance: If you use significant IoT/sensor data, deploy lightweight validation models at the network edge. Prevent dirty data from ever entering the expensive central data lake.

The marriage of AI and IoT offers humankind an unparalleled opportunity to accelerate discovery. However, without a commensurate investment in the technologies ensuring the veracity of that data—the silent guardians of research integrity—we risk building magnificent castles on foundations of sand. The future of trustworthy, transformative science depends on mastering automated validation now.