The Private Cloud AI Revolution: Why Custom Hardware Like AMD MI355X is Essential for the Future of LLMs

For the last few years, the narrative around Artificial Intelligence has been dominated by massive public cloud providers—the hyperscalers offering access to near-infinite computational power. However, as Large Language Models (LLMs) move from experimental labs into mission-critical enterprise applications—handling customer data, generating proprietary code, or managing sensitive industrial processes—a powerful counter-narrative is emerging. The future of competitive AI deployment is migrating back to the secure confines of the enterprise: the Private Cloud.

Recent industry analyses of private cloud hosting platforms for the 2026 landscape, including those that highlight next-generation hardware such as the **AMD MI355X**, point to a pivotal strategic shift. This isn't just about security; it's about performance, cost control, and ownership of the organization's AI strategy. We are witnessing the convergence of cutting-edge semiconductor design and enterprise infrastructure strategy.

The Trilemma Driving Private AI Adoption

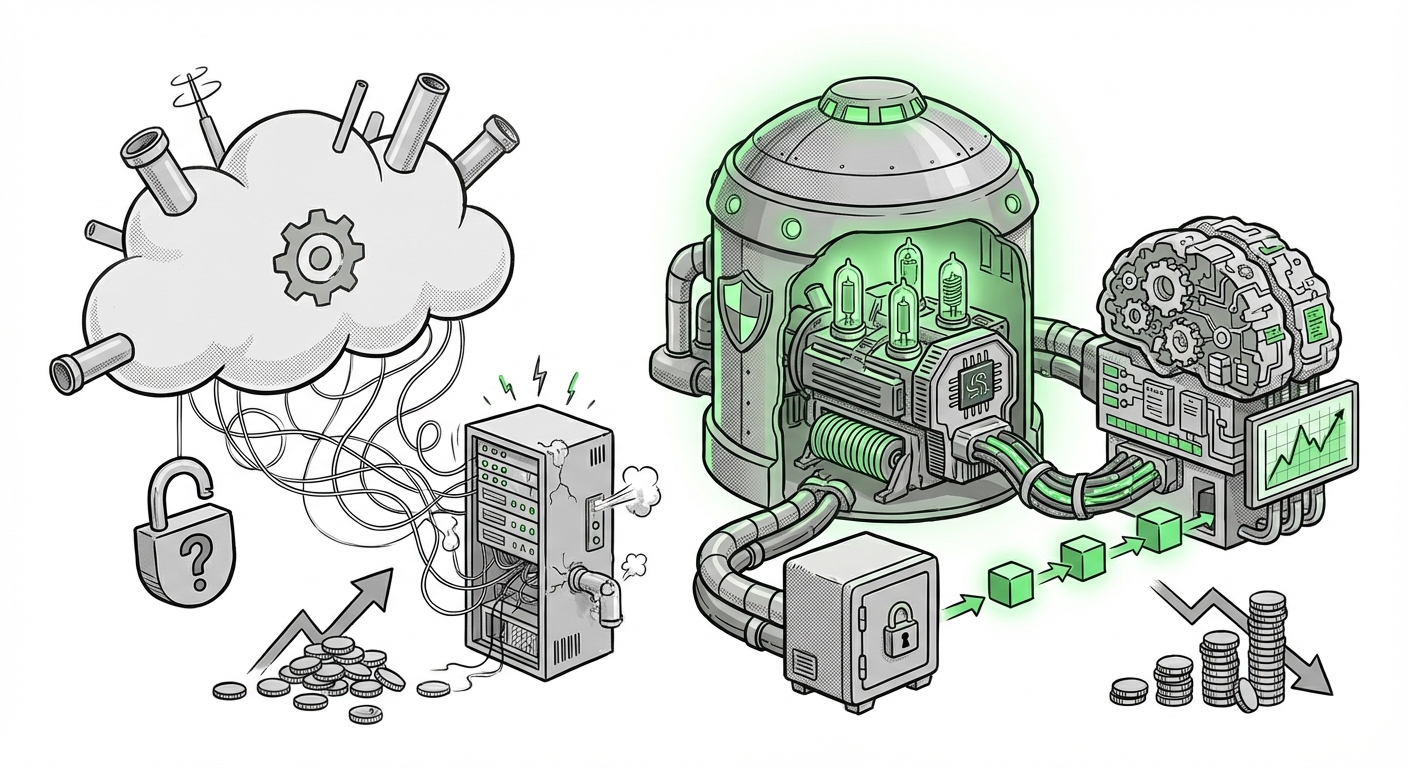

Why are global enterprises suddenly pivoting away from the simplicity of Public Cloud API calls? The answer lies in a classic technological trilemma that LLMs are exacerbating:

- Data Sovereignty and Security: Enterprises cannot afford to send proprietary training data or sensitive inference queries outside their direct control.

- Performance and Latency: Real-time applications (like customer service bots or automated trading) demand extremely low, predictable latency that public cloud congestion can disrupt.

- Total Cost of Ownership (TCO): At high, sustained volume, the cumulative cost of running LLM inference on pay-per-token pricing can exceed the upfront capital expenditure (CapEx) of owning dedicated hardware.

This structural pressure necessitates infrastructure designed specifically for these workloads, leading directly to the need for advanced private cloud solutions built around accelerators designed for AI.

Corroboration 1: The Inevitability of AI Sovereignty

The fundamental driver behind private cloud adoption is control. As AI models become foundational to business operations, outsourcing control becomes an unacceptable risk. Analysts confirm this trend is codified in corporate policy:

- Research on AI workload migration and private cloud sovereignty highlights that regulatory environments (such as GDPR or industry-specific finance and health mandates) are pushing model training and inference *on-premises* or into tightly controlled sovereign cloud regions.

- This ensures that governance rules (who can see the data, where the model resides) are strictly enforced, a level of granularity often impossible to guarantee in multi-tenant public environments. [See related trends on Hybrid Cloud AI Strategy].
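
The governance rules described above (who can see the data, where the model resides) can be expressed as a placement check that runs before any workload is scheduled. The following is a minimal sketch, not a real compliance engine: the classification labels, region names, roles, and the `placement_violations` helper are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    data_classification: str  # hypothetical labels: "internal", "regulated"
    requester_role: str


@dataclass(frozen=True)
class Environment:
    name: str
    region: str
    single_tenant: bool


@dataclass(frozen=True)
class Policy:
    allowed_regions: frozenset
    require_single_tenant: bool
    allowed_roles: frozenset


# Hypothetical policy table; real rules would come from legal and compliance.
POLICIES = {
    "regulated": Policy(
        allowed_regions=frozenset({"eu-onprem"}),
        require_single_tenant=True,
        allowed_roles=frozenset({"ml-engineer", "compliance"}),
    ),
    "internal": Policy(
        allowed_regions=frozenset({"eu-onprem", "eu-sovereign-cloud"}),
        require_single_tenant=False,
        allowed_roles=frozenset({"ml-engineer", "analyst", "compliance"}),
    ),
}


def placement_violations(workload, env, policies=POLICIES):
    """Return a list of human-readable violations; an empty list means allowed."""
    policy = policies.get(workload.data_classification)
    if policy is None:
        # Default deny: data without a known classification is never placed.
        return [f"no policy for classification '{workload.data_classification}'"]
    violations = []
    if env.region not in policy.allowed_regions:
        violations.append(f"region '{env.region}' not allowed")
    if policy.require_single_tenant and not env.single_tenant:
        violations.append("multi-tenant environment not allowed")
    if workload.requester_role not in policy.allowed_roles:
        violations.append(f"role '{workload.requester_role}' not allowed")
    return violations
```

Returning a list of violations rather than a single boolean keeps the check auditable: each rejected placement records which rule blocked it.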

For a CTO, this means building a dedicated AI fabric. The foundational question is no longer whether they need dedicated hardware, but which hardware offers the best long-term performance for their specific models.

The Hardware Apex: Why Specialized Accelerators Matter

The performance demands of training state-of-the-art LLMs, which can reach hundreds of billions or even trillions of parameters, are immense and strain conventional data center components. This is where the hardware successor story, exemplified by the **AMD MI355X**, becomes crucial.

To understand this, imagine a massive library (the AI model). Training means constantly reading and rewriting the entire collection. Inference leaves the books unchanged, but a dense model still consults most of the collection for every token it generates. Both depend on fast access to the books (memory).
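
The difference between training and inference memory needs can be made concrete with a rough per-parameter estimate. The sketch below assumes a commonly used rule of thumb for mixed-precision training with the Adam optimizer (about 16 bytes of state per parameter) and FP16 weights for inference. It ignores activations and the KV cache, and the function names are illustrative only.

```python
BYTES_FP16 = 2
BYTES_FP32 = 4


def inference_weight_bytes(n_params, bytes_per_param=BYTES_FP16):
    """Memory for the weights alone (no KV cache, no activations)."""
    return n_params * bytes_per_param


def training_state_bytes(n_params):
    """Rule-of-thumb state for mixed-precision Adam, activations excluded.

    FP16 weights + FP16 gradients + FP32 master weights
    + FP32 momentum + FP32 variance = 2 + 2 + 4 + 4 + 4 = 16 bytes/param.
    """
    per_param = 2 * BYTES_FP16 + 3 * BYTES_FP32
    return n_params * per_param


def to_gb(n_bytes):
    """Convert bytes to decimal gigabytes."""
    return n_bytes / 1e9


# Illustrative model size (an assumption, not a figure from the article).
n_params = 70_000_000_000
print(f"inference weights: {to_gb(inference_weight_bytes(n_params)):.0f} GB")
print(f"training state:    {to_gb(training_state_bytes(n_params)):.0f} GB")
```

Under these assumptions, training state is roughly eight times larger than the inference weights, which is why memory capacity per accelerator shapes how many devices a given model needs.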

Corroboration 2: The Memory and Performance Equation

The MI355X, as the evolution beyond existing high-end accelerators, directly addresses LLM requirements through superior memory architecture. Deep dives into hardware roadmaps confirm the focus areas:

- Memory Scaling (HBM Successors): LLMs frequently run out of fast on-device memory (VRAM). Next-gen chips like the MI355X are expected to significantly boost High Bandwidth Memory (HBM) capacity and speed. This translates directly into handling larger models without resorting to slow, costly offloading techniques.

- Performance Trade-offs: While raw FLOPS (computational speed) is important, the bottleneck in modern AI workloads is often data movement. Benchmark analyses indicate that the efficiency of moving data *between* processing units and memory is often the defining factor in training time and inference throughput. AMD's ongoing hardware announcements consistently emphasize these interconnected metrics.
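
Both points in the list above can be approximated with back-of-the-envelope arithmetic: whether the weights plus KV cache fit in HBM, and how memory bandwidth caps decode throughput. The sketch below is a simplified model under stated assumptions. The model shape, the 200 GB capacity, and the 5 TB/s bandwidth are placeholders, not vendor specifications, and the throughput bound assumes every decode step reads all weights and the full KV cache once.

```python
def kv_cache_bytes(n_layers, n_kv_heads, head_dim, seq_len, batch, bytes_per_elem=2):
    """KV cache size: keys and values for every layer, head, and position."""
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * batch * bytes_per_elem


def fits_in_memory(weight_bytes, cache_bytes, capacity_bytes, headroom=0.9):
    """True if weights plus cache fit within a fraction of device memory."""
    return weight_bytes + cache_bytes <= headroom * capacity_bytes


def decode_tokens_per_s_upper_bound(weight_bytes, cache_bytes, bandwidth_bytes_per_s, batch):
    """Bandwidth-bound ceiling on decode throughput.

    Each decode step must stream the weights and the KV cache from memory,
    and it produces one token per sequence in the batch.
    """
    step_seconds = (weight_bytes + cache_bytes) / bandwidth_bytes_per_s
    return batch / step_seconds


# Placeholder inputs (assumptions for illustration only).
weights = 140e9            # 70B parameters stored in FP16
capacity = 200e9           # hypothetical HBM capacity in bytes
bandwidth = 5e12           # hypothetical HBM bandwidth in bytes/s
cache = kv_cache_bytes(n_layers=80, n_kv_heads=8, head_dim=128,
                       seq_len=8192, batch=16)

print("fits:", fits_in_memory(weights, cache, capacity))
print("tokens/s ceiling:",
      round(decode_tokens_per_s_upper_bound(weights, cache, bandwidth, 16)))
```

Neither function involves FLOPS: in this simplified model, capacity decides whether the workload runs at all, and bandwidth decides how fast it can run.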

For the hardware engineer, the MI355X isn't just a faster chip; it is positioned as a compute architecture tuned for the sparsity and parallelism of transformer models. Its presence in a private cloud offering makes that platform a serious contender against established alternatives.

The Economic Reality: Taming the Inference Beast

Training an LLM might be a multi-million-dollar, one-time cost. Serving millions of user queries daily, however, is an ongoing operational hemorrhage if not managed correctly. This is the battleground where private cloud infrastructure earns its investment.

Corroboration 3: The Inference Cost Cliff

Technical deep dives into operationalizing LLMs reveal a stark reality: per-query costs can destroy profitability. The strategic choice to deploy proprietary hardware is often an aggressive TCO play:

- When an enterprise pays a cloud provider for every token generated, the model size—and thus the quality of the output—is artificially capped by budget constraints.

- By investing in dedicated resources (like those powered by the MI355X), the marginal cost of serving one more query drops dramatically. This enables businesses to run higher-quality, larger, or even multiple specialized models concurrently without facing immediate financial penalties. [Understanding the hidden costs of LLM production] illustrates this financial imperative clearly.

This shifts AI strategy from a variable operational expense (OpEx) back toward a managed capital investment (CapEx), aligning technology spending more closely with long-term asset strategy.
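
The OpEx-versus-CapEx argument reduces to a break-even calculation. The sketch below is a deliberately simple model with hypothetical figures: the token volume, API price, hardware cost, and monthly operating cost are invented for illustration, and it ignores financing, depreciation schedules, and utilization changes.

```python
def api_monthly_cost(tokens_per_month, price_per_million_tokens):
    """Pay-per-token spend for one month."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens


def break_even_months(capex, owned_opex_per_month, api_cost_per_month):
    """Months until cumulative API spend exceeds owning the hardware.

    Returns None when the API is cheaper per month than running the
    owned cluster, because ownership then never pays off.
    """
    monthly_saving = api_cost_per_month - owned_opex_per_month
    if monthly_saving <= 0:
        return None
    return capex / monthly_saving


def owned_cost_per_million_tokens(capex, amortization_months,
                                  owned_opex_per_month, tokens_per_month):
    """Effective unit cost of owned hardware at a given sustained volume."""
    monthly_total = capex / amortization_months + owned_opex_per_month
    return monthly_total / (tokens_per_month / 1_000_000)


# Hypothetical inputs, not real prices.
tokens = 50_000_000_000          # tokens served per month
api_cost = api_monthly_cost(tokens, price_per_million_tokens=2.0)
months = break_even_months(capex=1_200_000,
                           owned_opex_per_month=40_000,
                           api_cost_per_month=api_cost)
print(f"API spend per month: ${api_cost:,.0f}")
print(f"break-even after {months:.0f} months")
```

Because the owned cost is mostly fixed, `owned_cost_per_million_tokens` falls as volume rises, which is the sense in which the marginal cost of one more query drops on dedicated hardware.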

The Bridge: Software Making Hardware Usable

Exceptional hardware is useless without a capable software stack. A core component of the "Best Private Cloud Hosting Platforms" narrative is ensuring that these powerful new accelerators can be easily integrated into existing MLOps workflows. This is where interoperability becomes key.

Corroboration 4: The MLOps Ecosystem and Containerization

Enterprises do not want vendor lock-in on the software side either. They need frameworks that run reliably on Kubernetes, manage resource allocation seamlessly, and abstract away the specific hardware details:

- The move toward standardized container orchestration (Kubernetes) is central to private cloud strategy. Frameworks that support hardware-agnostic deployment—or, specifically, robust support for non-NVIDIA architectures via stacks like ROCm—are essential for platform flexibility. The role of Kubernetes in MLOps underscores the need for accessible, containerized deployment pipelines.

- This ensures that whether a company runs an existing legacy model or deploys the latest open-source LLM, the process remains consistent, fast, and predictable on their dedicated hardware cluster.
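
As a concrete illustration of containerized deployment on non-NVIDIA hardware, the sketch below builds a minimal Kubernetes Pod manifest that requests AMD GPUs. It assumes the cluster runs AMD's Kubernetes device plugin, which advertises GPUs under the resource name `amd.com/gpu`. The pod name, image reference, and `rocm_inference_pod` helper are hypothetical placeholders.

```python
import json


def rocm_inference_pod(name, image, gpus=1):
    """Build a Pod manifest (as a dict) that requests AMD GPUs.

    Assumes the AMD GPU device plugin is installed so that the
    'amd.com/gpu' extended resource is schedulable.
    """
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "restartPolicy": "Never",
            "containers": [
                {
                    "name": "server",
                    "image": image,
                    # Extended resources are requested through limits.
                    "resources": {"limits": {"amd.com/gpu": gpus}},
                }
            ],
        },
    }


if __name__ == "__main__":
    # Hypothetical image in a private registry; replace with a real one.
    manifest = rocm_inference_pod(
        name="llm-server",
        image="registry.example.internal/llm-server:rocm",
        gpus=8,
    )
    print(json.dumps(manifest, indent=2))
```

Since `kubectl apply -f` accepts JSON as well as YAML, the printed manifest can be piped straight into `kubectl apply -f -`. Only the resource name ties the manifest to a vendor, which keeps the rest of the pipeline hardware-agnostic.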

Future Implications: What This Architecture Means

The trend toward customized, high-performance private cloud AI—validated by the focus on specialized silicon like the MI355X—signals a maturation of the entire AI industry. We are moving past the "AI Wow" factor and into the "AI Utility" phase.

Actionable Insights for Business Leaders

- Audit Your Data Moats: Identify which AI use cases offer the highest strategic value (e.g., proprietary IP generation, specialized code completion) and prioritize moving those workloads to controlled private/hybrid environments first.

- Evaluate TCO Beyond Hardware: When comparing the cost of owning MI355X clusters with renting GPU time, factor in the cost of downtime, regulatory fines averted, and the ability to continuously fine-tune models without metered usage fees.

- Demand Software Portability: When evaluating private cloud vendors, rigorously test the ease of deploying your chosen open-source models using industry-standard serving frameworks (like vLLM or TGI). The hardware must speak the language of your MLOps team.
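
One way to test software portability is a smoke test that sends the same request to any candidate platform. The sketch below assumes the serving framework exposes an OpenAI-compatible chat endpoint at `/v1/chat/completions`, as vLLM and recent TGI releases do. The base URL, model name, and helper names are hypothetical, and it uses only the Python standard library.

```python
import json
import urllib.request


def build_chat_request(base_url, model, prompt, max_tokens=64):
    """Build a POST request for an OpenAI-compatible chat endpoint."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def smoke_test(base_url, model, prompt="Reply with the word ok.", timeout=30.0):
    """Send one request and return the generated text.

    Raises an exception on connection errors or unexpected response
    shapes, which is the desired behavior for a pass/fail check.
    """
    request = build_chat_request(base_url, model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

Running `smoke_test("http://localhost:8000", "my-model")` against each vendor's environment (with the real endpoint and model name substituted) gives a quick signal: if the identical call works everywhere, the serving layer is portable.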

The Future Landscape: Decentralized Intelligence

In the near future, AI will become highly distributed. We won't just have one centralized LLM in the public cloud; we will have hundreds of specialized, highly efficient models running locally within enterprises, secured by their private infrastructure.

The convergence described in private cloud hosting guides—marrying advanced memory scaling (MI355X) with enterprise data governance needs—is building the engine room for this decentralized future. This architecture allows organizations to maintain a competitive edge by customizing performance exactly where they need it most, ensuring that the next wave of AI innovation is secure, cost-effective, and fundamentally owned by the enterprise that built the intelligence.